"Information": models, code, and papers

Domain-aware Triplet loss in Domain Generalization

Mar 01, 2023

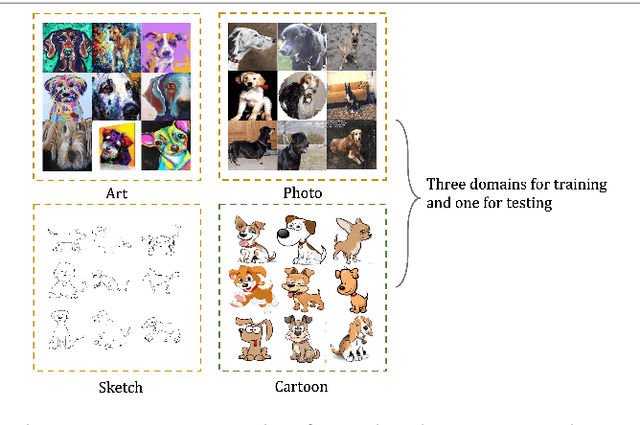

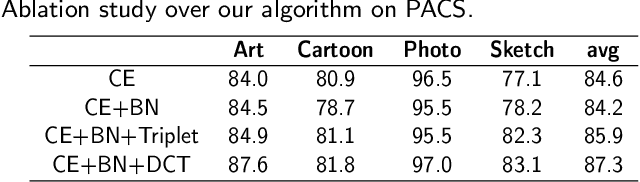

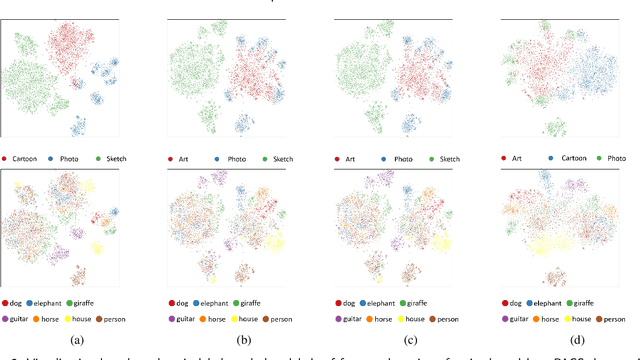

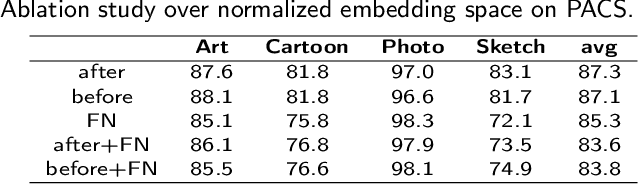

Despite much progress in object recognition driven by advances in deep learning, several factors still degrade the performance of deep learning models. Domain shift is one of these factors and is caused by discrepancies between the distributions of the testing and training data. In this paper, we focus on the problem of compact feature clustering in domain generalization to help optimize the embedding space from multi-domain data. We design a domain-aware triplet loss for domain generalization that helps the model not only cluster similar semantic features but also disperse features arising from the domain. Unlike previous methods focusing on distribution alignment, our algorithm is designed to disperse domain information in the embedding space. The basic idea is motivated by the assumption that embedding features can be clustered based on domain information, which is mathematically and empirically supported in this paper. In addition, during our exploration of feature clustering in domain generalization, we note that factors affecting the convergence of metric learning loss in domain generalization are more important than the pre-defined domains. To solve this issue, we utilize two methods to normalize the embedding space, reducing the internal covariate shift of the embedding features. The ablation study demonstrates the effectiveness of our algorithm. Moreover, the experiments on the benchmark datasets, including PACS, VLCS and Office-Home, show that our method outperforms related methods focusing on domain discrepancy. In particular, our results on RegnetY-16 are significantly better than state-of-the-art methods on the benchmark datasets. Our code will be released at https://github.com/workerbcd/DCT
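For readers unfamiliar with the loss family this abstract builds on, here is a minimal sketch of the standard margin-based triplet loss. It is not the paper's domain-aware variant, whose exact form the abstract does not give; the function names and the default margin of 0.2 are illustrative assumptions.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Standard triplet loss: max(0, d(a, p) - d(a, n) + margin).

    The loss is zero once the negative is at least `margin` farther
    from the anchor than the positive is.
    NOTE: generic formulation only; the domain-aware loss in the paper
    may choose positives and negatives differently (assumption).
    """
    d_pos = euclidean(anchor, positive)
    d_neg = euclidean(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)
```

In a domain generalization setting, one would presumably pick positives and negatives using both class and domain labels, but that selection rule is specific to the paper and is not reproduced here.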

Recent Advances towards Safe, Responsible, and Moral Dialogue Systems: A Survey

Feb 18, 2023

With the development of artificial intelligence, dialogue systems have been endowed with amazing chit-chat capabilities, and there is widespread interest and discussion about whether the generated contents are socially beneficial. In this paper, we present a new perspective of research scope towards building a safe, responsible, and moral dialogue system, including 1) abusive and toxic contents, 2) unfairness and discrimination, 3) ethics and morality issues, and 4) risk of misleading and privacy information. Besides, we review the mainstream methods for evaluating the safety of large models from the perspectives of exposure and detection of safety issues. The recent advances in methodologies for the safety improvement of both end-to-end dialogue systems and pipeline-based models are further introduced. Finally, we discuss six existing challenges towards responsible AI: explainable safety monitoring, continuous learning of safety issues, robustness against malicious attacks, multimodal information processing, unified research framework, and multidisciplinary theory integration. We hope this survey will inspire further research toward safer dialogue systems.

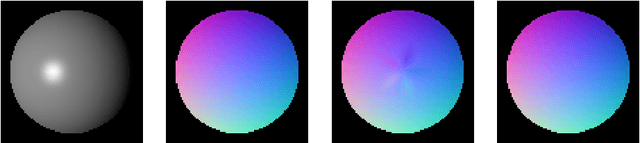

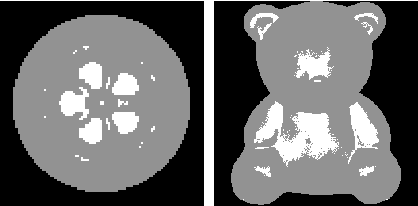

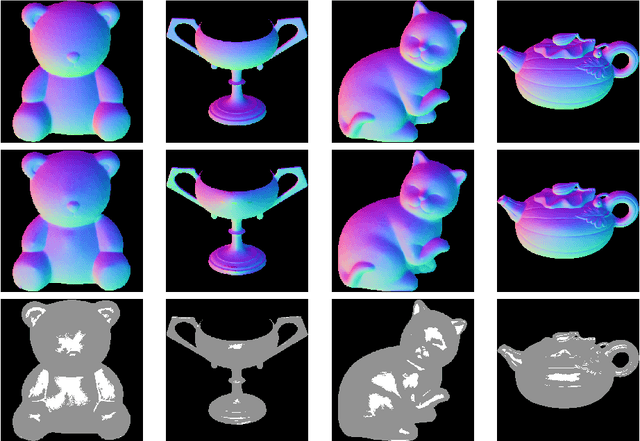

On the Regularising Levenberg-Marquardt Method for Blinn-Phong Photometric Stereo

Feb 17, 2023

Photometric stereo refers to the process of computing the 3D shape of an object using information on illumination and reflectance from several input images taken from the same point of view. The most often used reflectance model is Lambertian reflectance; however, this does not account for specular highlights in input images. In this paper we consider the non-linear optimisation problem that arises when employing Blinn-Phong reflectance for modeling specular effects. To this end we focus on the regularising Levenberg-Marquardt scheme. We show how to derive an explicit bound that gives information on the convergence reliability of the method depending on given data, and we show how to gain experimental evidence of numerical correctness of the iteration by making use of the Scherzer condition. The theoretical investigations that are at the heart of this paper are supplemented by some tests with real-world imagery.
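To illustrate why Blinn-Phong reflectance makes the problem non-linear, the sketch below evaluates the textbook Blinn-Phong intensity for one surface point: a Lambertian diffuse term plus a specular term raised to a shininess exponent. The parameter names (`k_d`, `k_s`, `shininess`) are illustrative assumptions, and the paper's exact parametrisation may differ.

```python
import math


def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def blinn_phong(normal, light, view, k_d, k_s, shininess):
    """Textbook Blinn-Phong intensity at a single surface point.

    diffuse  = k_d * max(0, n . l)              (Lambertian part)
    specular = k_s * max(0, n . h) ** shininess (h is the half vector)
    Assumes light and view are not exactly opposite, otherwise the
    half vector has zero length.
    """
    n = normalize(normal)
    l = normalize(light)
    v = normalize(view)
    # Half vector between the light and viewing directions.
    h = normalize([li + vi for li, vi in zip(l, v)])
    diffuse = k_d * max(0.0, dot(n, l))
    specular = k_s * max(0.0, dot(n, h)) ** shininess
    return diffuse + specular
```

Setting `k_s` to zero recovers the Lambertian model, which is linear in the scaled normal; the exponentiated specular term is what introduces the non-linearity in the unknown normal.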

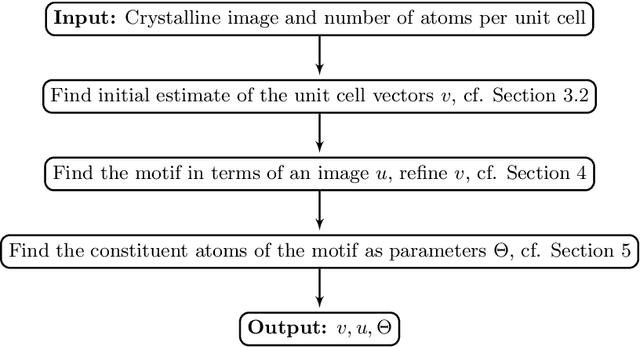

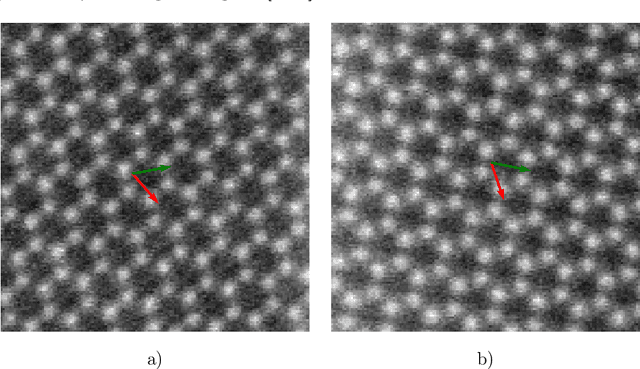

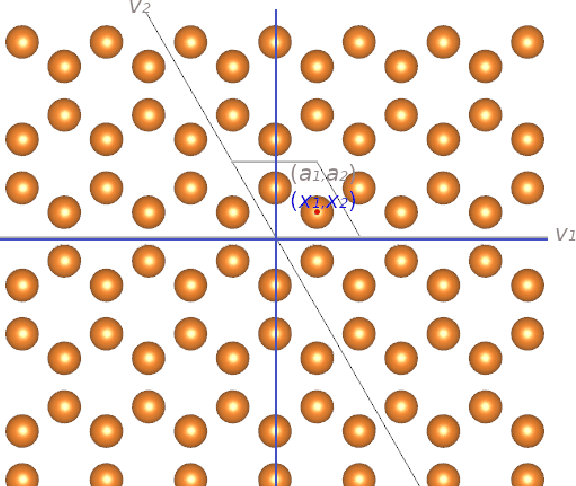

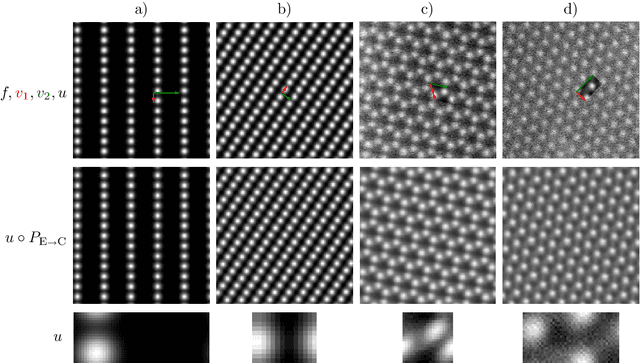

Direct Motif Extraction from High Resolution Crystalline STEM Images

Mar 13, 2023

During the last decade, automatic data analysis methods concerning different aspects of crystal analysis have been developed, e.g., unsupervised primitive unit cell extraction and automated detection of crystal distortions and defects. However, an automatic, unsupervised motif extraction method is still not widely available. Here, we propose and demonstrate a novel method for automatic motif extraction in real space from crystalline images based on a variational approach involving the unit cell projection operator. Due to the non-convex nature of the resulting minimization problem, a multi-stage algorithm is used. First, we determine the primitive unit cell in the form of two lattice vectors. Second, a motif image is estimated using the unit cell information. Finally, the motif is determined in terms of atom positions inside the unit cell. The method was tested on various synthetic and experimental HAADF STEM images. The results are a representation of the motif in the form of an image, atomic positions, primitive unit cell vectors, and a denoised and a modeled reconstruction of the input image. The method was applied to extract the primitive cells of complex $\mu$-phase structures Nb$_\text{6.4}$Co$_\text{6.6}$ and Nb$_\text{7}$Co$_\text{6}$, where subtle differences between their interplanar spacings were determined.

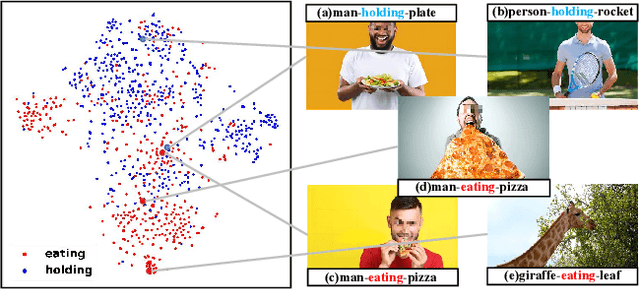

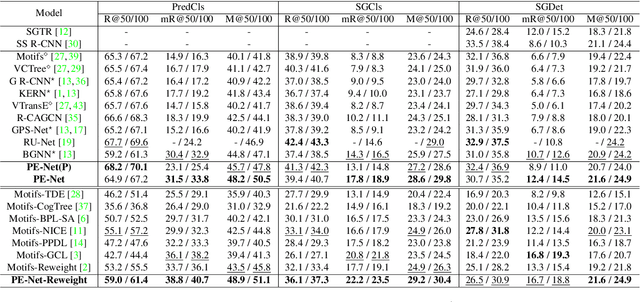

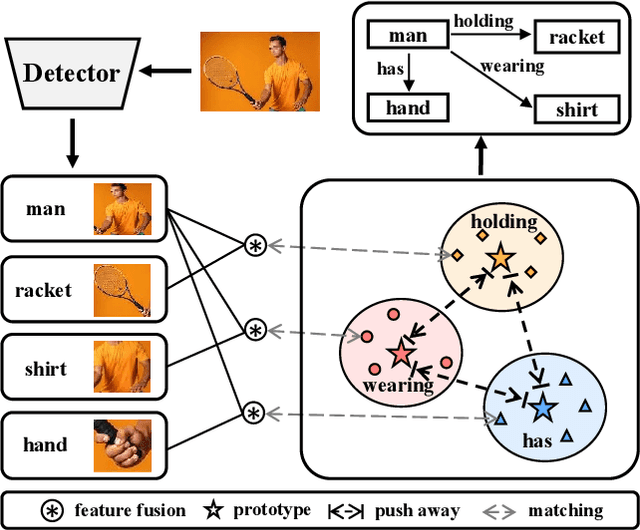

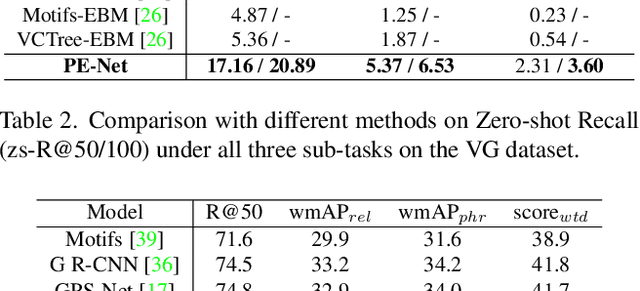

Prototype-based Embedding Network for Scene Graph Generation

Mar 13, 2023

Current Scene Graph Generation (SGG) methods explore contextual information to predict relationships among entity pairs. However, due to the diverse visual appearance of numerous possible subject-object combinations, there is a large intra-class variation within each predicate category, e.g., "man-eating-pizza, giraffe-eating-leaf", and severe inter-class similarity between different classes, e.g., "man-holding-plate, man-eating-pizza", in the model's latent space. These challenges prevent current SGG methods from acquiring robust features for reliable relation prediction. In this paper, we claim that the predicate's category-inherent semantics can serve as class-wise prototypes in the semantic space for relieving the challenges. To this end, we propose the Prototype-based Embedding Network (PE-Net), which models entities/predicates with prototype-aligned compact and distinctive representations and thereby establishes matching between entity pairs and predicates in a common embedding space for relation recognition. Moreover, Prototype-guided Learning (PL) is introduced to help PE-Net efficiently learn such entity-predicate matching, and Prototype Regularization (PR) is devised to relieve the ambiguous entity-predicate matching caused by the predicate's semantic overlap. Extensive experiments demonstrate that our method gains superior relation recognition capability on SGG, achieving new state-of-the-art performances on both Visual Genome and Open Images datasets.

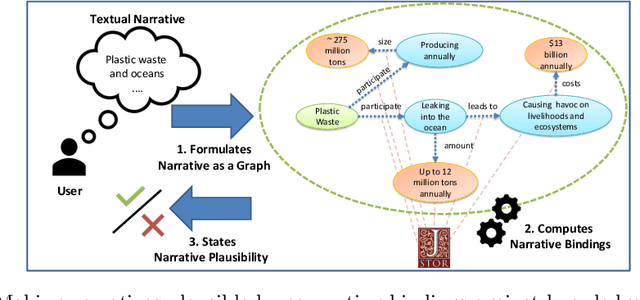

On Dimensions of Plausibility for Narrative Information Access to Digital Libraries

Aug 22, 2022

Designing keyword-based access paths is a common practice in digital libraries. They are easy to use and accepted by users and come with moderate costs for content providers. However, users usually have to break down the search into pieces if they search for stories of interest that are more complex than searching for a few keywords. After searching for every piece one by one, information must then be reassembled manually. In previous work we recommended narrative information access, i.e., users can precisely state their information needs as graph patterns called narratives. Then a system takes a narrative and searches for evidence for each of its parts. If the whole query, i.e., every part, can be bound against data, the narrative is considered plausible and, thus, the query is answered. But is it as easy as that? In this work we perform case studies to analyze the process of making a given narrative plausible. Therefore, we summarize conceptual problems and challenges to face. Moreover, we contribute a set of dimensions that must be considered when realizing narrative information access in digital libraries.

Sources of Richness and Ineffability for Phenomenally Conscious States

Feb 13, 2023

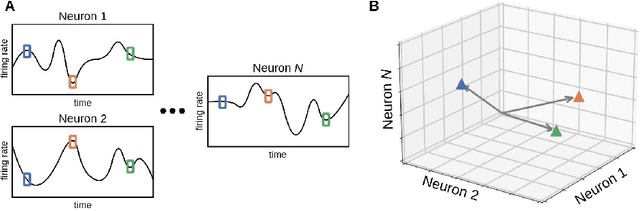

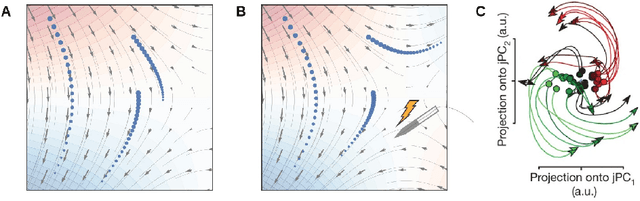

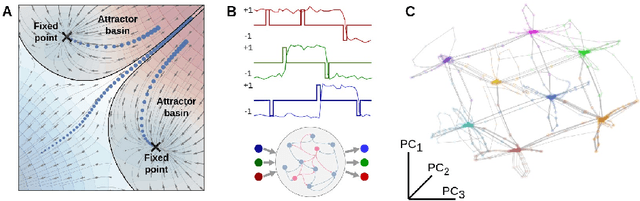

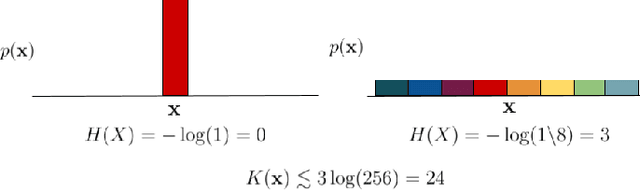

Conscious states (states that there is something it is like to be in) seem both rich or full of detail, and ineffable or hard to fully describe or recall. The problem of ineffability, in particular, is a longstanding issue in philosophy that partly motivates the explanatory gap: the belief that consciousness cannot be reduced to underlying physical processes. Here, we provide an information theoretic dynamical systems perspective on the richness and ineffability of consciousness. In our framework, the richness of conscious experience corresponds to the amount of information in a conscious state and ineffability corresponds to the amount of information lost at different stages of processing. We describe how attractor dynamics in working memory would induce impoverished recollections of our original experiences, how the discrete symbolic nature of language is insufficient for describing the rich and high-dimensional structure of experiences, and how similarity in the cognitive function of two individuals relates to improved communicability of their experiences to each other. While our model may not settle all questions relating to the explanatory gap, it makes progress toward a fully physicalist explanation of the richness and ineffability of conscious experience: two important aspects that seem to be part of what makes qualitative character so puzzling.

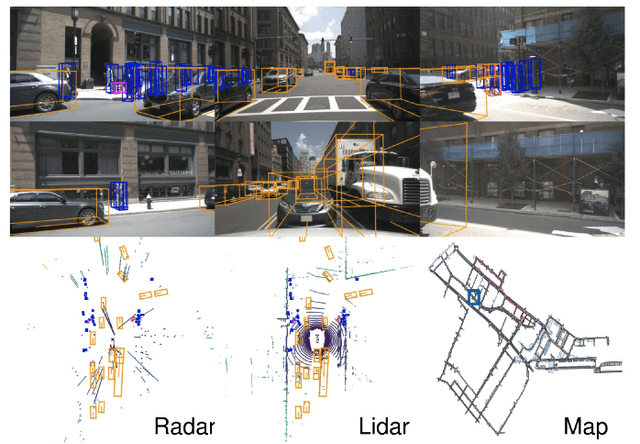

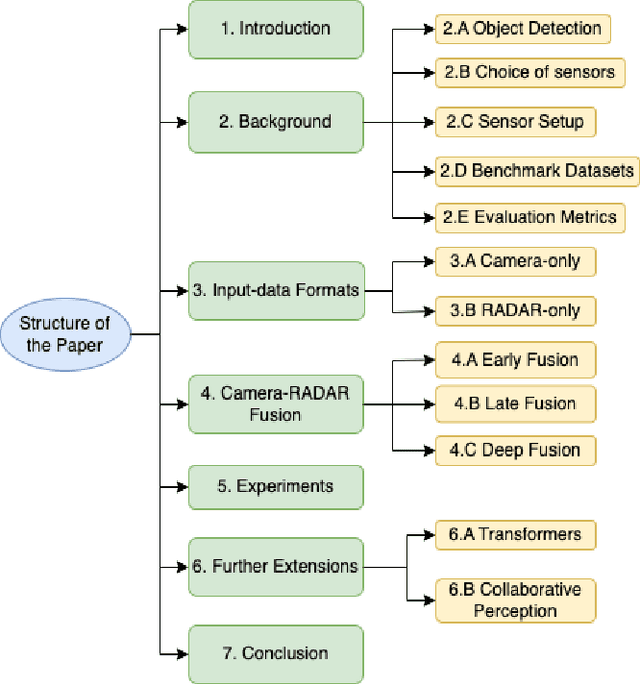

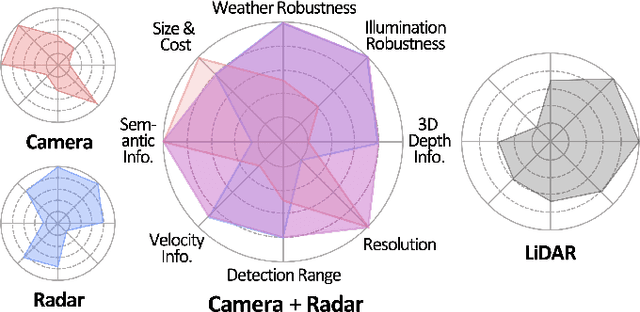

Vision-RADAR fusion for Robotics BEV Detections: A Survey

Feb 13, 2023

Due to the growing need to build autonomous robotic perception systems, sensor fusion has attracted a lot of attention amongst researchers and engineers seeking to make the best use of cross-modality information. However, in order to build a robotic platform at scale, we also need to consider the bring-up cost of the autonomous robot platform. Cameras and radars, which inherently provide complementary perception information, have the potential to enable autonomous robotic platforms at scale. However, there is limited work on radar fused with vision compared to LiDAR fused with vision. In this paper, we address this gap with a survey on Vision-Radar fusion approaches for a BEV object detection system. First we go through the background information, viz., object detection tasks, choice of sensors, sensor setup, benchmark datasets and evaluation metrics for a robotic perception system. Later, we cover per-modality (Camera and RADAR) data representation, then we go into detail about sensor fusion techniques based on sub-groups, viz., early-fusion, deep-fusion, and late-fusion, to make the pros and cons of each method easy to understand. Finally, we propose possible future trends for vision-radar fusion to inform future research. A regularly updated summary can be found at: https://github.com/ApoorvRoboticist/Vision-RADAR-Fusion-BEV-Survey

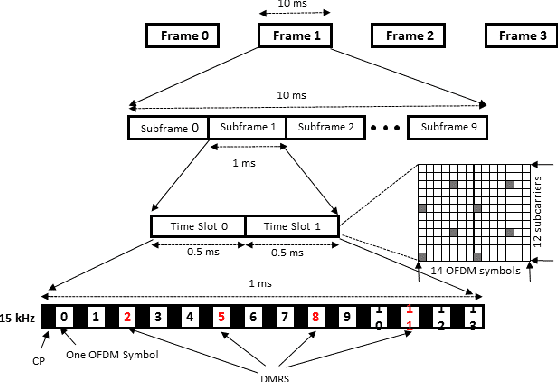

Effect of Variable Physical Numerologies on Link-Level Performance of 5G NR V2X

Mar 17, 2023

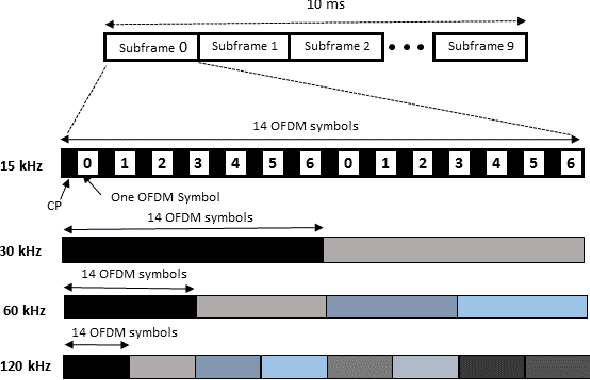

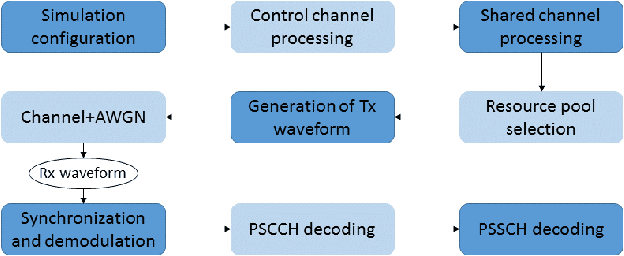

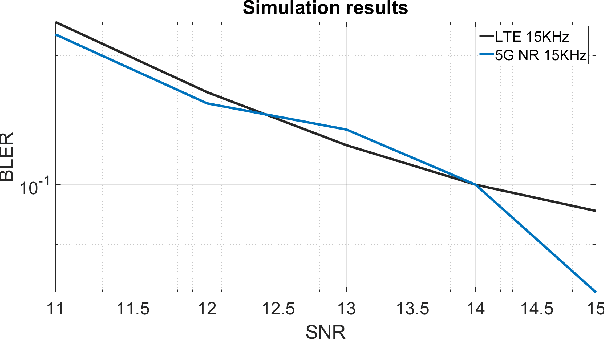

With technological and societal development, the 5th generation wireless communication (5G) contributes significantly to different sectors such as industry and academia. Vehicle-to-Everything (V2X) communication technology has been one of the leading services for 5G and has been applied in vehicles. Vehicles use it to exchange status information with other vehicles and traffic participants to increase traffic safety and efficiency. Cellular-V2X (C-V2X) is one of the emerging technologies to enable V2X communications. The first Long-Term Evolution (LTE) based C-V2X was released in the 3rd Generation Partnership Project (3GPP) standard. 3GPP is working towards the development of New Radio (NR) systems, called 5G NR V2X. One single numerology in LTE cannot satisfy most performance requirements because of the variety of deployment options and scenarios. For this reason, in order to meet the diverse requirements, the 5G NR Physical Layer (PHY) is designed to provide a highly flexible framework. Scalable Orthogonal Frequency-Division Multiplexing (OFDM) numerologies make this flexibility possible. The term numerology refers to the PHY waveform parametrization and allows different Subcarrier Spacings (SCSs), symbol durations, and slot durations. This paper implements Link-Level (LL) simulations of LTE C-V2X communication and 5G NR V2X communication, and the simulation results are used to compare similarities and differences between LTE and 5G NR. We examine the effect of variable PHY numerologies of 5G NR on the LL performance of V2X. The simulation results show that the performance of 5G NR improves when variable numerologies are used.
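As a concrete view of what "scalable numerology" means, the sketch below derives the basic timing parameters from the numerology index μ using the standard 5G NR relations: subcarrier spacing of 15 kHz × 2^μ, 2^μ slots per 1 ms subframe, and 14 OFDM symbols per slot with normal cyclic prefix. The function name and the restriction to μ = 0..4 are assumptions made for illustration, and the abstract does not state which numerologies the paper simulates.

```python
def numerology(mu):
    """Basic 5G NR timing parameters for numerology index mu.

    Uses the standard scaling rules: SCS = 15 kHz * 2**mu and
    2**mu slots per 1 ms subframe (normal cyclic prefix assumed).
    Only mu = 0..4 is accepted here (illustrative restriction).
    """
    if mu not in range(5):
        raise ValueError("mu must be an integer between 0 and 4")
    slots_per_subframe = 2 ** mu
    return {
        "scs_khz": 15 * 2 ** mu,
        "slots_per_subframe": slots_per_subframe,
        "slot_duration_ms": 1.0 / slots_per_subframe,
        "symbols_per_slot": 14,  # normal cyclic prefix
    }


if __name__ == "__main__":
    for mu in range(5):
        print(mu, numerology(mu))
```

LTE corresponds to the fixed case μ = 0 (15 kHz spacing, 1 ms slot), which is why a single LTE numerology cannot adapt slot duration to different deployment scenarios.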

Stat-weight: Improving the Estimator of Interleaved Methods Outcomes with Statistical Hypothesis Testing

Mar 17, 2023

Interleaving is an online evaluation approach for information retrieval systems that compares the effectiveness of ranking functions in interpreting the users' implicit feedback. Previous work such as Hofmann et al (2011) has evaluated the most promising interleaved methods at the time, on uniform distributions of queries. In the real world, ordinarily, there is an unbalanced distribution of repeated queries that follows a long-tailed users' search demand curve. The more a query is executed, by different users (or in different sessions), the higher the probability of collecting implicit feedback (interactions/clicks) on the related search results. This paper first aims to replicate the Team Draft Interleaving accuracy evaluation on uniform query distributions and then focuses on assessing how this method generalizes to long-tailed real-world scenarios. The reproducibility work raised interesting considerations on how the winning ranking function for each query should impact the overall winner for the entire evaluation. Based on what was observed, we propose that not all the queries should contribute to the final decision in equal proportion. As a result of these insights, we designed two variations of the $\Delta_{AB}$ score winner estimator that assign to each query a credit based on statistical hypothesis testing. To replicate, reproduce and extend the original work, we have developed from scratch a system that simulates a search engine and users' interactions from datasets from the industry. Our experiments confirm our intuition and show that our methods are promising in terms of accuracy, sensitivity, and robustness to noise.

* This preprint has not undergone peer review (when applicable) or any post-submission improvements or corrections. The Version of Record of this contribution is published in Advances in Information Retrieval 45th European Conference on Information Retrieval, ECIR 2023, Dublin, Ireland, April, 2023, Proceedings, Part III, and is available online at https://doi.org/10.1007/978-3-031-28241-6_2
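The Team Draft Interleaving method and the $\Delta_{AB}$ score named in the abstract above can be sketched as follows. This is a hedged illustration of the commonly described baseline procedure and the usual unweighted $\Delta_{AB}$ definition, not the paper's stat-weight variants; all function names are hypothetical.

```python
import random


def first_unseen(ranking, seen):
    """Highest-ranked document of `ranking` not yet in `seen`, or None."""
    return next((doc for doc in ranking if doc not in seen), None)


def team_draft_interleave(ranking_a, ranking_b, length, rng=None):
    """Build an interleaved list plus the team ("A"/"B") credited per slot.

    The team with fewer picks goes next; ties are broken by a coin flip.
    Stops early if either ranking has no unseen document left.
    """
    rng = rng or random.Random()
    interleaved, teams = [], []
    while len(interleaved) < length:
        next_a = first_unseen(ranking_a, interleaved)
        next_b = first_unseen(ranking_b, interleaved)
        if next_a is None or next_b is None:
            break
        count_a, count_b = teams.count("A"), teams.count("B")
        a_turn = count_a < count_b or (count_a == count_b and rng.random() < 0.5)
        interleaved.append(next_a if a_turn else next_b)
        teams.append("A" if a_turn else "B")
    return interleaved, teams


def delta_ab(wins_a, wins_b, ties):
    """Unweighted Delta_AB: (wins_a + ties / 2) / total - 0.5.

    Positive values favour ranker A, negative values favour ranker B.
    Every query counts equally here; the paper's variants instead
    weight queries via hypothesis testing (not shown).
    """
    total = wins_a + wins_b + ties
    if total == 0:
        return 0.0
    return (wins_a + 0.5 * ties) / total - 0.5
```

A click on a slot is credited to the team that contributed it; the ranker with more credited clicks wins that query, and the per-query outcomes feed `delta_ab`.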
