Zihang Zou

Towards Lawful Autonomous Driving: Deriving Scenario-Aware Driving Requirements from Traffic Laws and Regulations

Apr 27, 2026

Abstract: Driving in compliance with traffic laws and regulations is a basic requirement for human drivers, yet autonomous vehicles (AVs) can violate these requirements in diverse real-world scenarios. To encode law compliance into AV systems, conventional approaches use formal logic languages to explicitly specify behavioral constraints, but this process is labor-intensive, hard to scale, and costly to maintain. With recent advances in artificial intelligence, large language models (LLMs) offer a promising way to derive legal requirements from traffic laws and regulations. However, without explicit grounding and reasoning in structured traffic scenarios, LLMs often retrieve irrelevant provisions or miss applicable ones, yielding imprecise requirements. To address this, we propose a novel pipeline that grounds LLM reasoning in a traffic scenario taxonomy through node-wise anchors that encode hierarchical semantics. On Chinese traffic laws and the OnSite dataset (5,897 scenarios), our method improves law-scenario matching by 29.1% and increases the accuracy of derived mandatory and prohibitive requirements by 36.9% and 38.2%, respectively. We further demonstrate real-world applicability by constructing a law-compliance layer for AV navigation and developing an onboard, real-time compliance monitor for in-field testing, providing a solid foundation for future AV development, deployment, and regulatory oversight.
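The abstract does not say how the node-wise anchors are built, so the following is only an illustrative sketch of one possible reading: each node of a scenario taxonomy receives an anchor string that spells out its full path from the root, so the hierarchy is visible in the text given to an LLM. The class `ScenarioNode`, the function `build_anchors`, and the example taxonomy are all hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ScenarioNode:
    """Hypothetical taxonomy node: a name plus child nodes."""
    name: str
    children: List["ScenarioNode"] = field(default_factory=list)


def build_anchors(node: ScenarioNode, prefix: Tuple[str, ...] = ()) -> Dict[str, str]:
    """Map each node name to an anchor that encodes its path from the root."""
    path = prefix + (node.name,)
    anchors = {node.name: " > ".join(path)}
    for child in node.children:
        # Each child inherits the parent's path, so the anchor carries
        # the hierarchical context down the tree.
        anchors.update(build_anchors(child, path))
    return anchors


# Made-up taxonomy for illustration only (assumes unique node names).
taxonomy = ScenarioNode("road", [
    ScenarioNode("intersection", [
        ScenarioNode("signalized"),
        ScenarioNode("unsignalized"),
    ]),
    ScenarioNode("highway", [ScenarioNode("merging")]),
])

anchors = build_anchors(taxonomy)
for name, anchor in anchors.items():
    print(f"{name}: {anchor}")
```

In this sketch a leaf such as `signalized` is never matched against legal text in isolation; its anchor also names the parent categories it belongs to.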

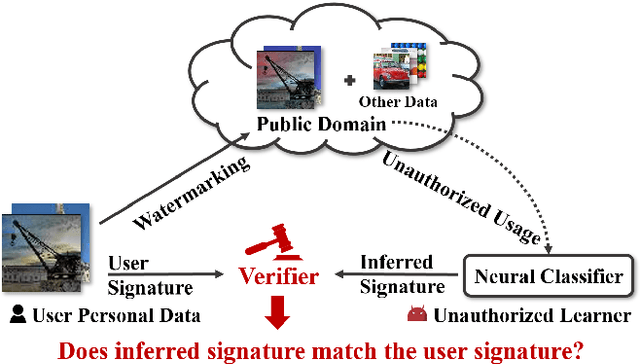

Anti-Neuron Watermarking: Protecting Personal Data Against Unauthorized Neural Model Training

Sep 18, 2021

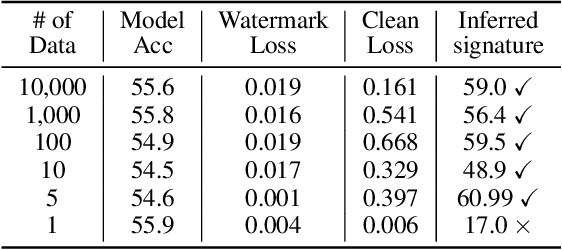

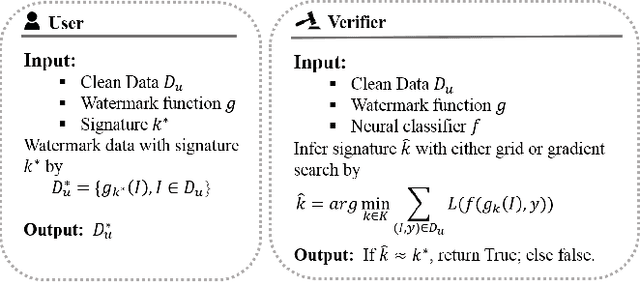

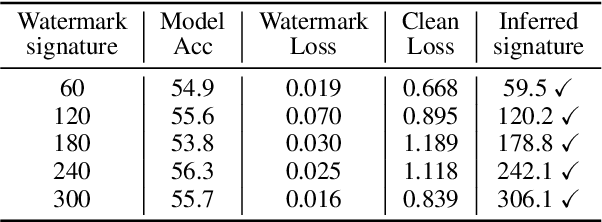

Abstract: In this paper, we raise an emerging personal data protection problem in which users' personal data (e.g., images) could be inappropriately exploited to train deep neural network models without authorization. To solve this problem, we revisit traditional watermarking in advanced machine learning settings. When a watermarking signature is embedded in user images through a specialized linear color transformation, neural models are imprinted with that signature if their training data include the watermarked images. A third-party verifier can then check for potential unauthorized usage by inferring the watermark signature from the neural models. We further explore the properties that the watermarking and signature space need for convincing verification. Through extensive experiments, we show empirically that linear color transformation is effective in protecting users' personal images in various realistic settings. To the best of our knowledge, this is the first work to protect users' personal data from unauthorized usage in neural network training.
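To make the embedding step concrete, here is a minimal sketch of a generic linear (affine) color transformation applied per pixel. The abstract does not specify the "specialized" transformation, so the signature format (a 3x3 matrix plus a bias derived from a seed), the `strength` parameter, and the function names are assumptions for illustration, not the authors' method. Verification from a trained model is not shown.

```python
import random


def make_signature(seed, strength=0.05):
    """Hypothetical signature: a near-identity 3x3 color matrix plus a small bias."""
    rng = random.Random(seed)
    matrix = [
        [(1.0 if r == c else 0.0) + strength * rng.uniform(-1, 1) for c in range(3)]
        for r in range(3)
    ]
    bias = [strength * rng.uniform(-1, 1) for _ in range(3)]
    return matrix, bias


def transform_pixel(pixel, matrix, bias):
    """Apply out = matrix @ pixel + bias to one RGB pixel, clipped to [0, 1]."""
    out = []
    for r in range(3):
        value = sum(matrix[r][c] * pixel[c] for c in range(3)) + bias[r]
        out.append(min(1.0, max(0.0, value)))
    return tuple(out)


def watermark_image(image, matrix, bias):
    """Apply the same color transformation to every pixel of a nested-list image."""
    return [[transform_pixel(p, matrix, bias) for p in row] for row in image]


# A tiny 2x2 RGB image with channel values in [0, 1].
image = [
    [(0.2, 0.4, 0.6), (0.9, 0.1, 0.5)],
    [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)],
]
matrix, bias = make_signature(seed=42)
watermarked = watermark_image(image, matrix, bias)
```

Because one transformation is applied to every pixel of every image a user owns, the signature is a property of the whole image set rather than of any single picture, which is consistent with the abstract's claim that a model trained on those images can carry a detectable imprint.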
