Zachary Charles

Improving the convergence of SGD through adaptive batch sizes

Oct 18, 2019

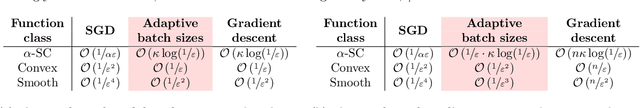

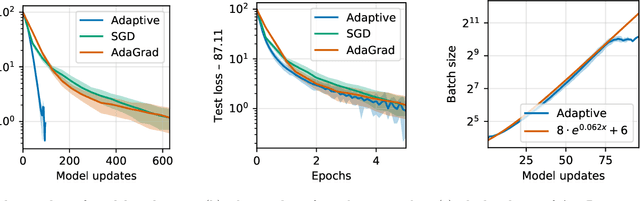

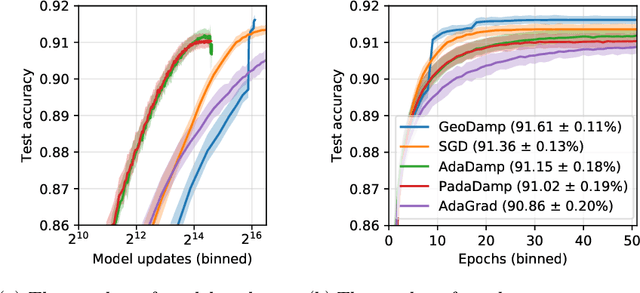

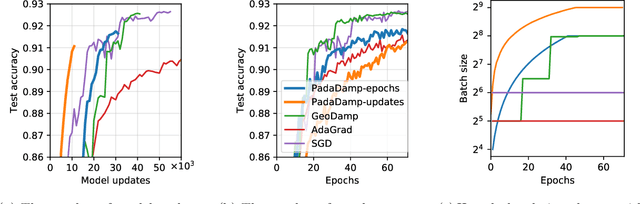

Abstract: Mini-batch stochastic gradient descent (SGD) approximates the gradient of an objective function with the average gradient of some batch of constant size. While small batch sizes can yield high-variance gradient estimates that prevent the model from learning well, large batches may require more data and computational effort. This work presents a method to change the batch size adaptively with model quality. We show that our method requires the same number of model updates as full-batch gradient descent while requiring the same total number of gradient computations as SGD. While this method requires evaluating the objective function, we present a passive approximation that eliminates this constraint and improves computational efficiency. We provide extensive experiments illustrating that our methods require far fewer model updates without increasing the total amount of computation.
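As a rough illustration of the idea of tying batch size to model quality, the sketch below grows the batch as the loss shrinks on a toy one-dimensional least-squares problem. It is not the paper's algorithm: the rule `ceil(c / loss)`, the constant `c`, the toy data, and all function names are assumptions made for this example.

```python
import math
import random


def loss_and_grad(w, batch):
    """Mean squared error and its gradient for the 1-D model y = w * x."""
    n = len(batch)
    loss = sum((w * x - y) ** 2 for x, y in batch) / n
    grad = sum(2 * (w * x - y) * x for x, y in batch) / n
    return loss, grad


def adaptive_batch_size(loss, c=1.0, min_size=1, max_size=256):
    """Hypothetical rule: batch size inversely proportional to the loss."""
    if loss <= 0:
        return max_size
    return max(min_size, min(max_size, math.ceil(c / loss)))


def train(data, steps=200, lr=0.05, seed=0):
    rng = random.Random(seed)
    w = 0.0
    sizes = []
    for _ in range(steps):
        # Evaluating the full objective is the extra cost the abstract mentions.
        full_loss, _ = loss_and_grad(w, data)
        size = adaptive_batch_size(full_loss, max_size=len(data))
        batch = rng.sample(data, size)
        _, grad = loss_and_grad(w, batch)
        w -= lr * grad
        sizes.append(size)
    return w, sizes


# Toy data: y = 3x plus a little Gaussian noise (an assumption for this demo).
rng = random.Random(1)
data = [(x, 3.0 * x + rng.gauss(0, 0.1))
        for x in (rng.uniform(-1, 1) for _ in range(256))]
w, sizes = train(data)
```

In this toy run the early updates use very small batches while the loss is large, and the batch grows as the fit improves, so most gradient computations are spent late in training.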

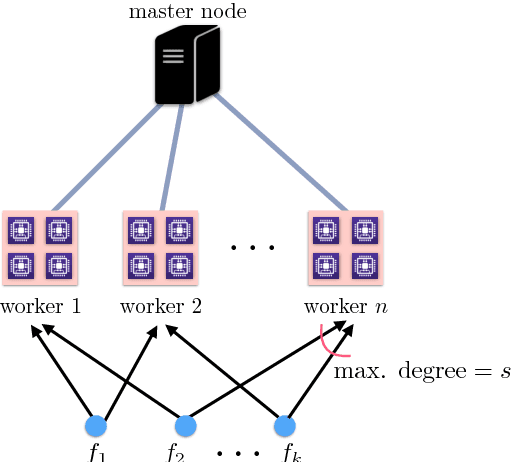

DETOX: A Redundancy-based Framework for Faster and More Robust Gradient Aggregation

Jul 29, 2019

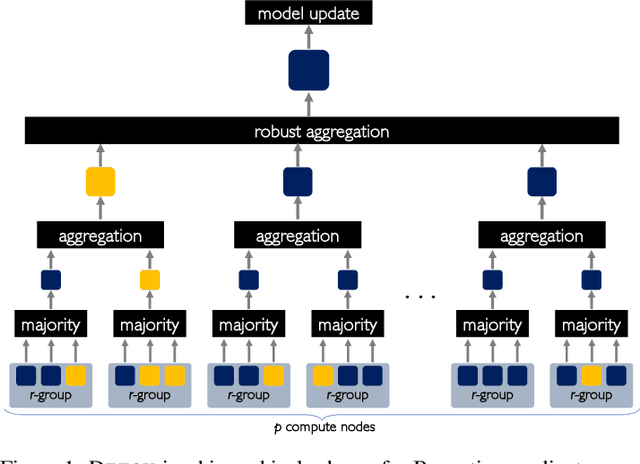

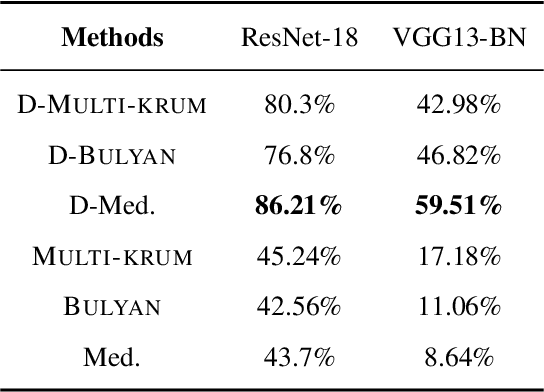

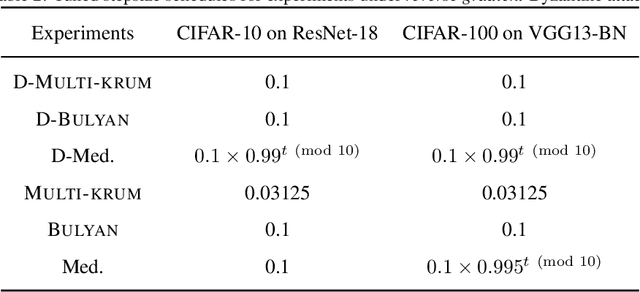

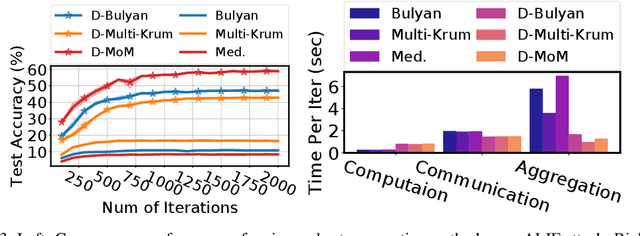

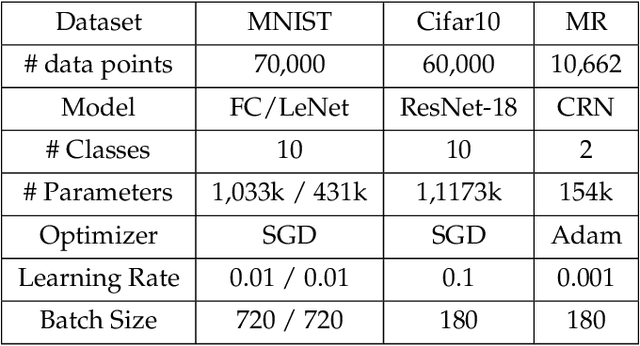

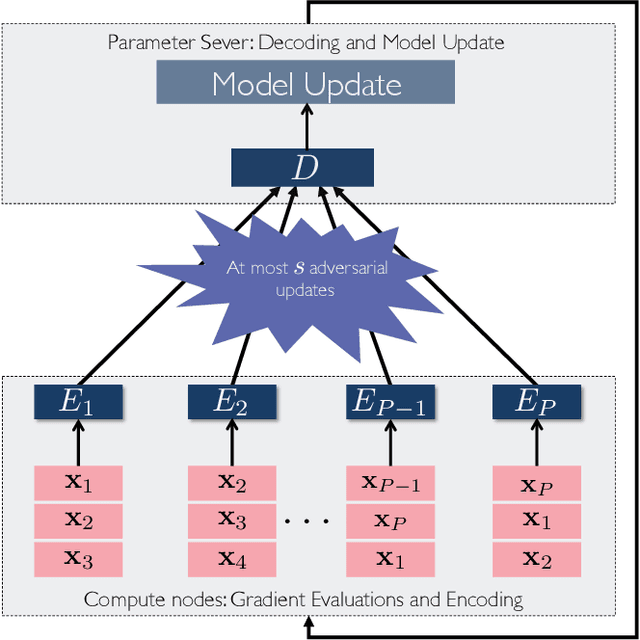

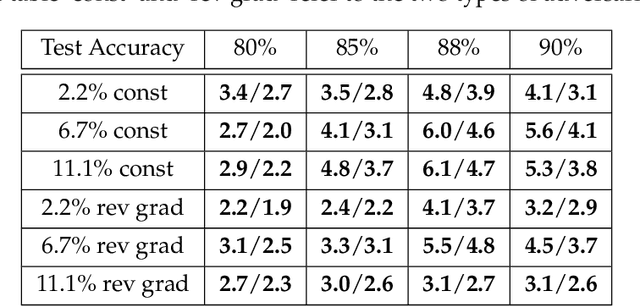

Abstract: To improve the resilience of distributed training to worst-case, or Byzantine, node failures, several recent approaches have replaced gradient averaging with robust aggregation methods. Such techniques can have high computational costs, often quadratic in the number of compute nodes, and only have limited robustness guarantees. Other methods have instead used redundancy to guarantee robustness, but can only tolerate a limited number of Byzantine failures. In this work, we present DETOX, a Byzantine-resilient distributed training framework that combines algorithmic redundancy with robust aggregation. DETOX operates in two steps: a filtering step that uses limited redundancy to significantly reduce the effect of Byzantine nodes, and a hierarchical aggregation step that can be used in tandem with any state-of-the-art robust aggregation method. We show theoretically that this leads to a substantial increase in robustness, and has a per-iteration runtime that can be nearly linear in the number of compute nodes. We provide extensive experiments over real distributed setups across a variety of large-scale machine learning tasks, showing that DETOX leads to orders-of-magnitude accuracy and speedup improvements over many state-of-the-art Byzantine-resilient approaches.

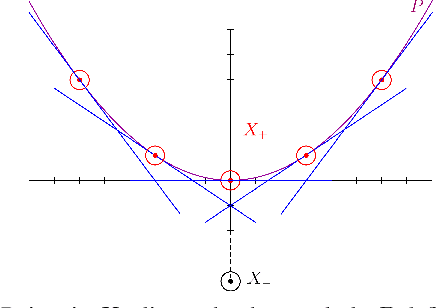

Convergence and Margin of Adversarial Training on Separable Data

May 22, 2019

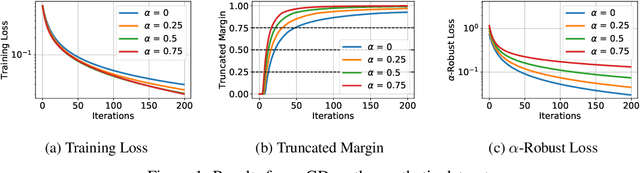

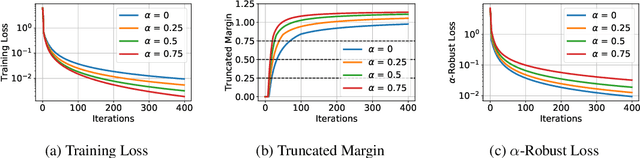

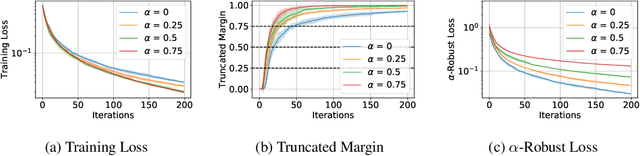

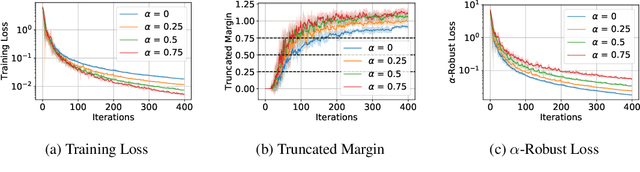

Abstract: Adversarial training is a technique for training robust machine learning models. To encourage robustness, it iteratively computes adversarial examples for the model, and then re-trains on these examples via some update rule. This work analyzes the performance of adversarial training on linearly separable data, and provides bounds on the number of iterations required for large margin. We show that when the update rule is given by an arbitrary empirical risk minimizer, adversarial training may require exponentially many iterations to obtain large margin. However, if gradient or stochastic gradient update rules are used, only polynomially many iterations are required to find a large-margin separator. By contrast, without the use of adversarial examples, gradient methods may require exponentially many iterations to achieve large margin. Our results are derived by showing that adversarial training with gradient updates minimizes a robust version of the empirical risk at an $\mathcal{O}(\ln(t)^2/t)$ rate, despite non-smoothness. We corroborate our theory empirically.
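The loop described above (compute adversarial examples, then take a gradient step on them) can be sketched for a linear classifier as follows. This is a minimal illustration under stated assumptions, not the paper's exact setup: it assumes $\ell_2$-bounded perturbations of radius `eps`, the logistic loss, full-batch gradient descent, and a tiny hand-made dataset; all names are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def adversarial_example(w, x, y, eps):
    """Worst-case l2 perturbation of radius eps for the linear score y * <w, x>."""
    norm = math.sqrt(dot(w, w))
    if norm == 0.0:
        return list(x)  # with w = 0 the score is 0 for every perturbation
    return [xi - eps * y * wi / norm for xi, wi in zip(x, w)]


def adversarial_gd(points, labels, eps, steps=500, lr=0.5):
    """Gradient descent on the logistic loss evaluated at adversarial examples."""
    w = [0.0] * len(points[0])
    for _ in range(steps):
        grad = [0.0] * len(w)
        for x, y in zip(points, labels):
            x_adv = adversarial_example(w, x, y, eps)
            margin = y * dot(w, x_adv)
            # Derivative of log(1 + exp(-margin)) in w, treating x_adv as fixed.
            coeff = -y / (1.0 + math.exp(margin))
            for j, xj in enumerate(x_adv):
                grad[j] += coeff * xj / len(points)
        w = [wj - lr * gj for wj, gj in zip(w, grad)]
    return w


def min_margin(w, points, labels):
    """Smallest signed distance from a training point to the separator."""
    norm = math.sqrt(dot(w, w))
    return min(y * dot(w, x) for x, y in zip(points, labels)) / norm


# Linearly separable toy data (an assumption for this demo).
points = [[2.0, 0.3], [1.5, -0.4], [-2.0, 0.1], [-1.0, -0.2]]
labels = [1, 1, -1, -1]
w = adversarial_gd(points, labels, eps=0.5)
```

Calling `min_margin(w, points, labels)` afterwards gives the margin of the learned separator, which can be compared against `eps`.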

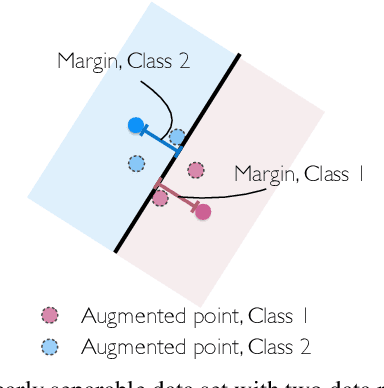

Does Data Augmentation Lead to Positive Margin?

May 08, 2019

Abstract: Data augmentation (DA) is commonly used during model training, as it significantly improves test error and model robustness. DA artificially expands the training set by applying random noise, rotations, crops, or even adversarial perturbations to the input data. Although DA is widely used, its capacity to provably improve robustness is not fully understood. In this work, we analyze the robustness that DA begets by quantifying the margin that DA enforces on empirical risk minimizers. We first focus on linear separators, and then a class of nonlinear models whose labeling is constant within small convex hulls of data points. We present lower bounds on the number of augmented data points required for non-zero margin, and show that commonly used DA techniques may only introduce significant margin after adding exponentially many points to the data set.

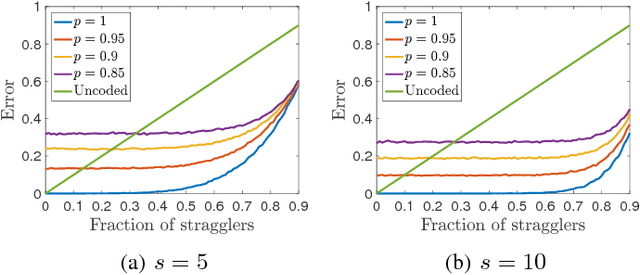

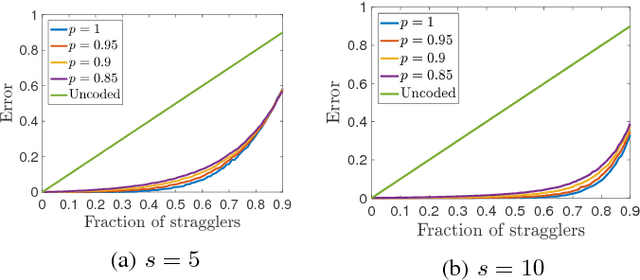

ErasureHead: Distributed Gradient Descent without Delays Using Approximate Gradient Coding

Jan 28, 2019

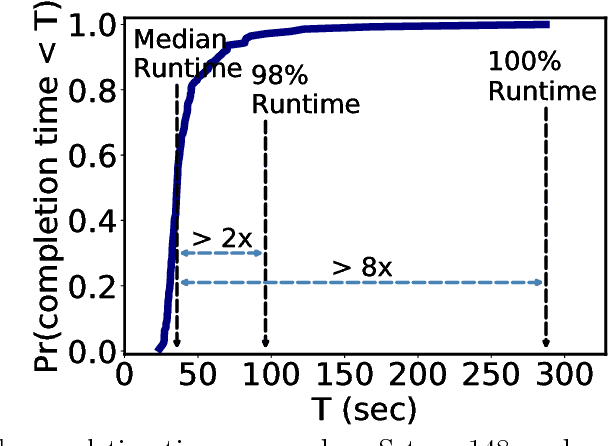

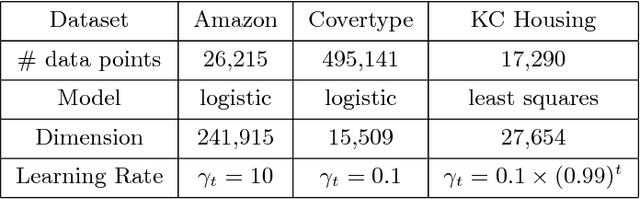

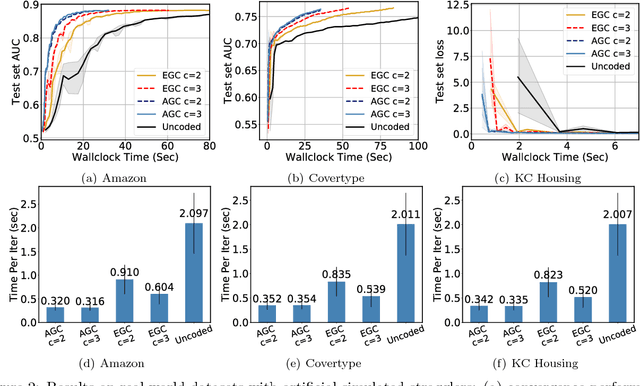

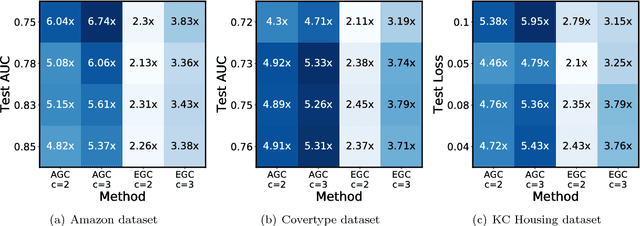

Abstract: We present ErasureHead, a new approach for distributed gradient descent (GD) that mitigates system delays by employing approximate gradient coding. Gradient coded distributed GD uses redundancy to exactly recover the gradient at each iteration from a subset of compute nodes. ErasureHead instead uses approximate gradient codes to recover an inexact gradient at each iteration, but with higher delay tolerance. Unlike prior work on gradient coding, we provide a performance analysis that combines both delay and convergence guarantees. We establish that down to a small noise floor, ErasureHead converges as quickly as distributed GD and has faster overall runtime under a probabilistic delay model. We conduct extensive experiments on real-world datasets and distributed clusters and demonstrate that our method can lead to significant speedups over both standard and gradient coded GD.

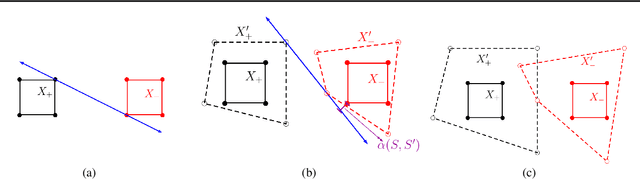

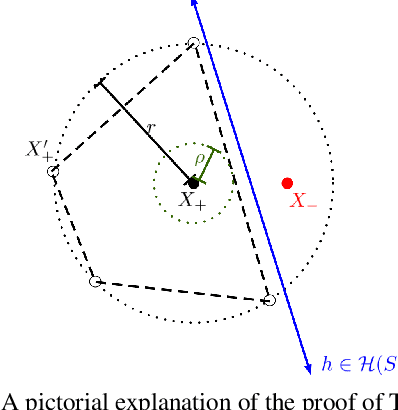

A Geometric Perspective on the Transferability of Adversarial Directions

Nov 08, 2018

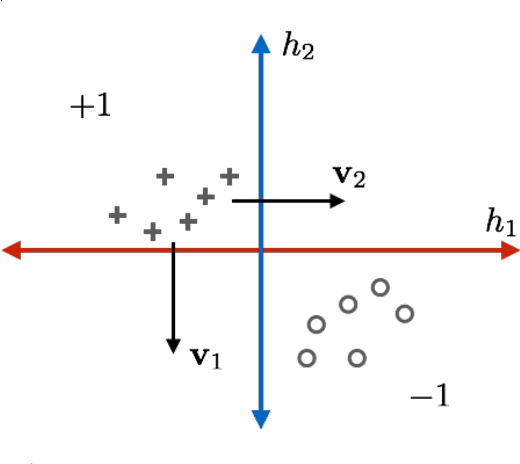

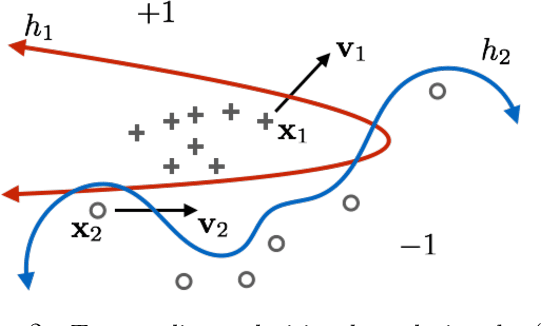

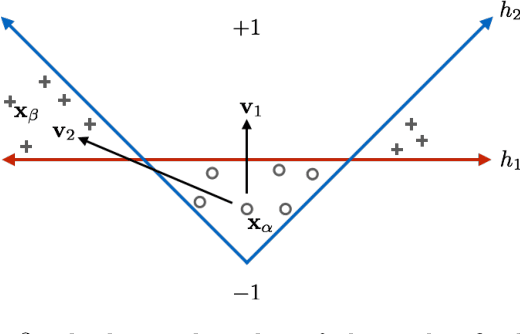

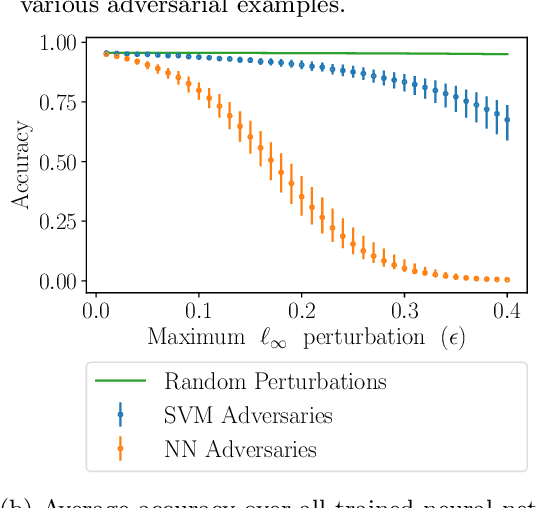

Abstract: State-of-the-art machine learning models frequently misclassify inputs that have been perturbed in an adversarial manner. Adversarial perturbations generated for a given input and a specific classifier often seem to be effective on other inputs and even different classifiers. In other words, adversarial perturbations seem to transfer between different inputs, models, and even different neural network architectures. In this work, we show that in the context of linear classifiers and two-layer ReLU networks, there provably exist directions that give rise to adversarial perturbations for many classifiers and data points simultaneously. We show that these "transferable adversarial directions" are guaranteed to exist for linear separators of a given set, and will exist with high probability for linear classifiers trained on independent sets drawn from the same distribution. We extend our results to large classes of two-layer ReLU networks. We further show that adversarial directions for ReLU networks transfer to linear classifiers while the reverse need not hold, suggesting that adversarial perturbations for more complex models are more likely to transfer to other classifiers. We validate our findings empirically, even for deeper ReLU networks.

ATOMO: Communication-efficient Learning via Atomic Sparsification

Jun 24, 2018

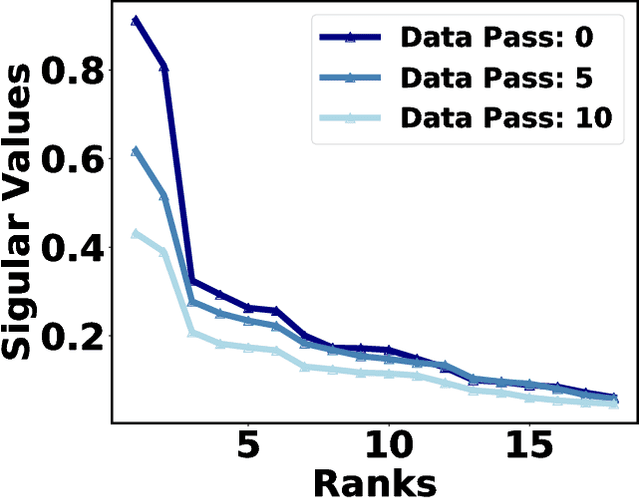

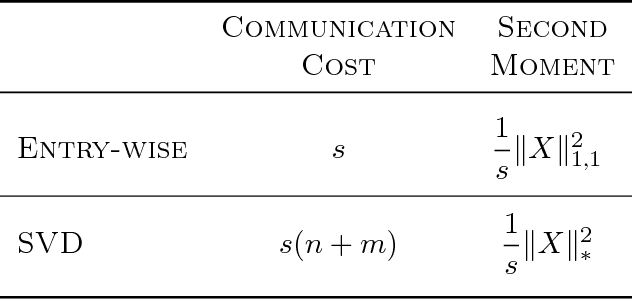

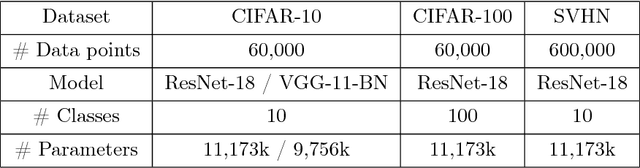

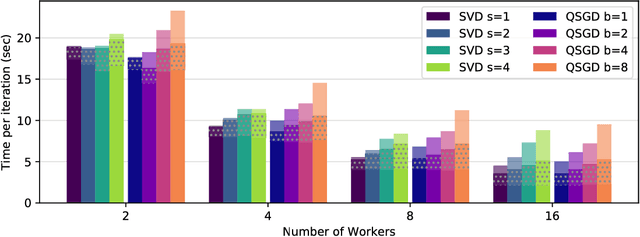

Abstract: Distributed model training suffers from communication overheads due to frequent gradient updates transmitted between compute nodes. To mitigate these overheads, several studies propose the use of sparsified stochastic gradients. We argue that these are facets of a general sparsification method that can operate on any possible atomic decomposition. Notable examples include element-wise, singular value, and Fourier decompositions. We present ATOMO, a general framework for atomic sparsification of stochastic gradients. Given a gradient, an atomic decomposition, and a sparsity budget, ATOMO gives a random unbiased sparsification of the atoms minimizing variance. We show that methods such as QSGD and TernGrad are special cases of ATOMO and show that sparsifying gradients in their singular value decomposition (SVD), rather than the coordinate-wise one, can lead to significantly faster distributed training.
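To make "random unbiased sparsification under a sparsity budget" concrete, the sketch below keeps each atom coefficient with some probability and rescales the survivors by the inverse of that probability, so the expectation is unchanged. The probability rule used here (proportional to the coefficient magnitude, capped at 1, with the leftover budget redistributed) is an assumption for illustration and should not be read as the paper's exact procedure; the function names are hypothetical.

```python
import random


def sparsification_probabilities(coeffs, budget):
    """Keep-probabilities proportional to |coefficient|, capped at 1."""
    probs = [0.0] * len(coeffs)
    active = [i for i, c in enumerate(coeffs) if c != 0]
    remaining = float(budget)
    while active and remaining > 0:
        total = sum(abs(coeffs[i]) for i in active)
        capped = [i for i in active if remaining * abs(coeffs[i]) >= total]
        if not capped:
            for i in active:
                probs[i] = remaining * abs(coeffs[i]) / total
            break
        # Atoms whose proportional share would reach 1 are always kept.
        for i in capped:
            probs[i] = 1.0
        remaining -= len(capped)
        active = [i for i in active if i not in capped]
    return probs


def sparsify(coeffs, budget, rng):
    """Keep atom i with probability p_i and rescale it by 1 / p_i (unbiased)."""
    probs = sparsification_probabilities(coeffs, budget)
    return [c / p if p > 0 and rng.random() < p else 0.0
            for c, p in zip(coeffs, probs)]


# Coefficients of a gradient in some atomic decomposition, e.g. its entries
# or its singular values (toy numbers, assumed for this demo).
coeffs = [4.0, 1.0, 0.5, 0.5, 0.0]
probs = sparsification_probabilities(coeffs, budget=2)
print(probs)  # [1.0, 0.5, 0.25, 0.25, 0.0]
sparse = sparsify(coeffs, budget=2, rng=random.Random(0))
```

The probabilities sum to the budget, so on average two atoms survive, and each surviving coefficient `c` is transmitted as `c / p`, which keeps the estimate unbiased.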

DRACO: Byzantine-resilient Distributed Training via Redundant Gradients

Jun 22, 2018

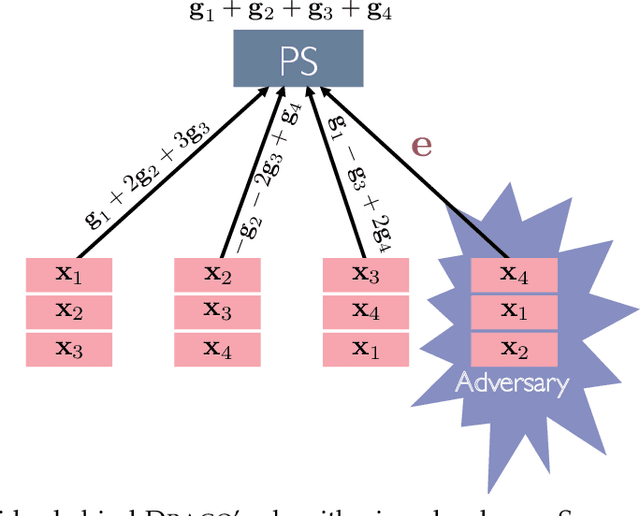

Abstract: Distributed model training is vulnerable to Byzantine system failures and adversarial compute nodes, i.e., nodes that use malicious updates to corrupt the global model stored at a parameter server (PS). To guarantee some form of robustness, recent work suggests using variants of the geometric median as an aggregation rule, in place of gradient averaging. Unfortunately, median-based rules can incur a prohibitive computational overhead in large-scale settings, and their convergence guarantees often require strong assumptions. In this work, we present DRACO, a scalable framework for robust distributed training that uses ideas from coding theory. In DRACO, each compute node evaluates redundant gradients that are used by the parameter server to eliminate the effects of adversarial updates. DRACO comes with problem-independent robustness guarantees, and the model that it trains is identical to the one trained in the adversary-free setup. We provide extensive experiments on real datasets and distributed setups across a variety of large-scale models, where we show that DRACO is several times to orders of magnitude faster than median-based approaches.
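One simple way redundant gradients can cancel adversarial updates is a repetition scheme with majority voting at the parameter server, sketched below. This is a simplified illustration, not DRACO's actual encoder or decoder: it assumes each data partition is assigned to three nodes, that honest nodes return bitwise-identical gradients, and that fewer than half the nodes in any group are adversarial. All names are hypothetical.

```python
from collections import Counter


def majority_vote(replicas):
    """Return the gradient reported by most replicas of one partition."""
    counts = Counter(tuple(g) for g in replicas)
    value, _ = counts.most_common(1)[0]
    return list(value)


def robust_average(groups):
    """Average the majority-voted gradient of each group of redundant nodes."""
    voted = [majority_vote(replicas) for replicas in groups]
    dim = len(voted[0])
    return [sum(g[j] for g in voted) / len(voted) for j in range(dim)]


# Two data partitions, each computed by 3 nodes; one node per group lies.
groups = [
    [[1.0, 2.0], [1.0, 2.0], [100.0, -100.0]],
    [[3.0, 4.0], [-50.0, 50.0], [3.0, 4.0]],
]
print(robust_average(groups))  # [2.0, 3.0]
```

Because the vote recovers the honest gradient exactly, the averaged result matches what an adversary-free run would produce, which is the property the abstract highlights.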

Gradient Coding via the Stochastic Block Model

May 25, 2018

Abstract: Gradient descent and its many variants, including mini-batch stochastic gradient descent, form the algorithmic foundation of modern large-scale machine learning. Due to the size and scale of modern data, gradient computations are often distributed across multiple compute nodes. Unfortunately, such distributed implementations can face significant delays caused by straggler nodes, i.e., nodes that are much slower than average. Gradient coding is a new technique for mitigating the effect of stragglers via algorithmic redundancy. While effective, previously proposed gradient codes can be computationally expensive to construct, inaccurate, or susceptible to adversarial stragglers. In this work, we present the stochastic block code (SBC), a gradient code based on the stochastic block model. We show that SBCs are efficient, accurate, and that under certain settings, adversarial straggler selection becomes as hard as detecting a community structure in the multiple community, block stochastic graph model.

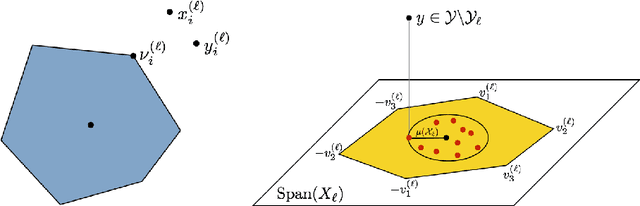

Subspace Clustering with Missing and Corrupted Data

Jan 15, 2018

Abstract: Given full or partial information about a collection of points that lie close to a union of several subspaces, subspace clustering refers to the process of clustering the points according to their subspace and identifying the subspaces. One popular approach, sparse subspace clustering (SSC), represents each sample as a weighted combination of the other samples, with weights of minimal $\ell_1$ norm, and then uses those learned weights to cluster the samples. SSC is stable in settings where each sample is contaminated by a relatively small amount of noise. However, when there is a significant amount of additive noise, or a considerable number of entries are missing, theoretical guarantees are scarce. In this paper, we study a robust variant of SSC and establish clustering guarantees in the presence of corrupted or missing data. We give explicit bounds on the amount of noise and missing data that the algorithm can tolerate, both in deterministic settings and in a random generative model. Notably, our approach provides guarantees for higher tolerance to noise and missing data than existing analyses for this method. By design, the results hold even when we do not know the locations of the missing data, e.g., as in presence-only data.
