Xinhao Tao

Diverse Image Harmonization

Jul 22, 2024

Abstract:Image harmonization aims to adjust the foreground illumination in a composite image to make it harmonious. The existing harmonization methods can only produce one deterministic result for a composite image, ignoring that a composite image could have multiple plausible harmonization results due to multiple plausible reflectances. In this work, we first propose a reflectance-guided harmonization network, which can achieve better performance with the guidance of ground-truth foreground reflectance. Then, we also design a diverse reflectance generation network to predict multiple plausible foreground reflectances, leading to multiple plausible harmonization results. The extensive experiments on the benchmark datasets demonstrate the effectiveness of our method.

Shadow Generation for Composite Image Using Diffusion model

Mar 22, 2024Abstract:In the realm of image composition, generating realistic shadow for the inserted foreground remains a formidable challenge. Previous works have developed image-to-image translation models which are trained on paired training data. However, they are struggling to generate shadows with accurate shapes and intensities, hindered by data scarcity and inherent task complexity. In this paper, we resort to foundation model with rich prior knowledge of natural shadow images. Specifically, we first adapt ControlNet to our task and then propose intensity modulation modules to improve the shadow intensity. Moreover, we extend the small-scale DESOBA dataset to DESOBAv2 using a novel data acquisition pipeline. Experimental results on both DESOBA and DESOBAv2 datasets as well as real composite images demonstrate the superior capability of our model for shadow generation task. The dataset, code, and model are released at https://github.com/bcmi/Object-Shadow-Generation-Dataset-DESOBAv2.

Deep Image Harmonization with Globally Guided Feature Transformation and Relation Distillation

Aug 01, 2023

Abstract:Given a composite image, image harmonization aims to adjust the foreground illumination to be consistent with background. Previous methods have explored transforming foreground features to achieve competitive performance. In this work, we show that using global information to guide foreground feature transformation could achieve significant improvement. Besides, we propose to transfer the foreground-background relation from real images to composite images, which can provide intermediate supervision for the transformed encoder features. Additionally, considering the drawbacks of existing harmonization datasets, we also contribute a ccHarmony dataset which simulates the natural illumination variation. Extensive experiments on iHarmony4 and our contributed dataset demonstrate the superiority of our method. Our ccHarmony dataset is released at https://github.com/bcmi/Image-Harmonization-Dataset-ccHarmony.

RdSOBA: Rendered Shadow-Object Association Dataset

Jun 30, 2023

Abstract:Image composition refers to inserting a foreground object into a background image to obtain a composite image. In this work, we focus on generating plausible shadows for the inserted foreground object to make the composite image more realistic. To supplement the existing small-scale dataset DESOBA, we created a large-scale dataset called RdSOBA with 3D rendering techniques. Specifically, we place a group of 3D objects in the 3D scene, and get the images without or with object shadows using controllable rendering techniques. Dataset is available at https://github.com/bcmi/Rendered-Shadow-Generation-Dataset-RdSOBA.

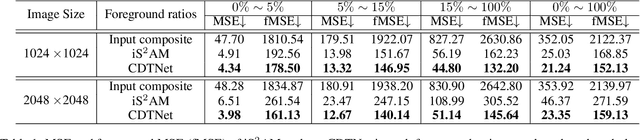

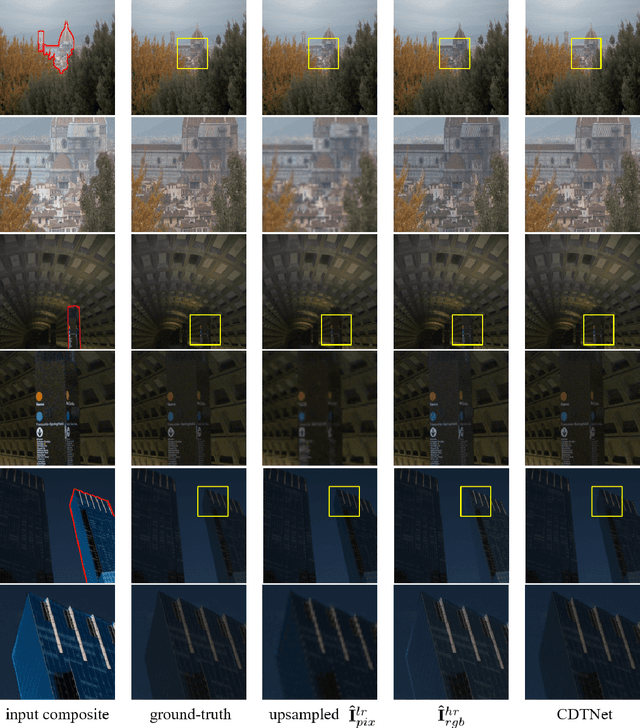

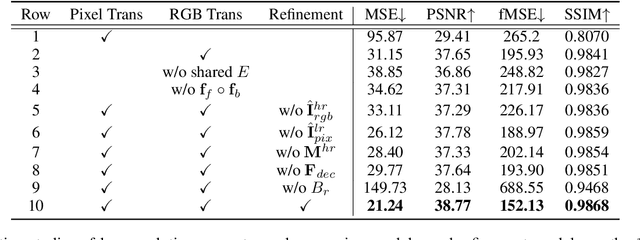

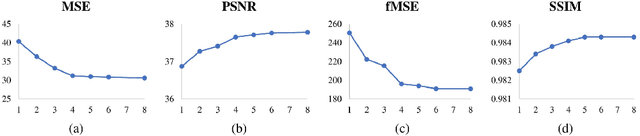

High-Resolution Image Harmonization via Collaborative Dual Transformations

Sep 14, 2021

Abstract:Given a composite image, image harmonization aims to adjust the foreground to make it compatible with the background. High-resolution image harmonization is in high demand, but still remains unexplored. Conventional image harmonization methods learn global RGB-to-RGB transformation which could effortlessly scale to high resolution, but ignore diverse local context. Recent deep learning methods learn the dense pixel-to-pixel transformation which could generate harmonious outputs, but are highly constrained in low resolution. In this work, we propose a high-resolution image harmonization network with Collaborative Dual Transformation (CDTNet) to combine pixel-to-pixel transformation and RGB-to-RGB transformation coherently in an end-to-end framework. Our CDTNet consists of a low-resolution generator for pixel-to-pixel transformation, a color mapping module for RGB-to-RGB transformation, and a refinement module to take advantage of both. Extensive experiments on high-resolution image harmonization dataset demonstrate that our CDTNet strikes a good balance between efficiency and effectiveness.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge