Xinglong Mao

ActFER: Agentic Facial Expression Recognition via Active Tool-Augmented Visual Reasoning

Apr 10, 2026

Abstract: Recent advances in Multimodal Large Language Models (MLLMs) have created new opportunities for facial expression recognition (FER), moving it beyond pure label prediction toward reasoning-based affect understanding. However, existing MLLM-based FER methods still follow a passive paradigm: they rely on externally prepared facial inputs and perform single-pass reasoning over fixed visual evidence, without the capability for active facial perception. To address this limitation, we propose ActFER, an agentic framework that reformulates FER as active visual evidence acquisition followed by multimodal reasoning. Specifically, ActFER dynamically invokes tools for face detection and alignment, selectively zooms into informative local regions, and reasons over facial Action Units (AUs) and emotions through a visual Chain-of-Thought. To realize such behavior, we further develop Utility-Calibrated GRPO (UC-GRPO), a reinforcement learning algorithm tailored to agentic FER. UC-GRPO uses AU-grounded multi-level verifiable rewards to densify supervision, query-conditional contrastive utility estimation to enable sample-aware dynamic credit assignment for local inspection, and emotion-aware EMA calibration to reduce noisy utility estimates while capturing emotion-wise inspection tendencies. This algorithm enables ActFER to learn both when local inspection is beneficial and how to reason over the acquired evidence. Comprehensive experiments show that ActFER trained with UC-GRPO consistently outperforms passive MLLM-based FER baselines and substantially improves AU prediction accuracy.
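The abstract names three ingredients without giving formulas: group-relative advantages (the standard GRPO normalization), a contrastive utility estimate for local inspection, and a per-emotion EMA that smooths that estimate. The sketch below is only an illustration under stated assumptions, not the authors' algorithm: the function and class names, the decay value of 0.9, and the choice to define utility as the mean reward gap between rollouts with and without a zoom step are all hypothetical.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Standard GRPO-style normalization: center and scale rewards within one rollout group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def contrastive_utility(rewards_with_zoom, rewards_without_zoom):
    """Assumed utility of local inspection for one query: mean reward gap
    between rollouts that zoomed in and rollouts that did not."""
    return mean(rewards_with_zoom) - mean(rewards_without_zoom)


class EmotionEMA:
    """Assumed per-emotion exponential moving average used to smooth noisy utility estimates."""

    def __init__(self, decay=0.9):
        self.decay = decay  # hypothetical value, not taken from the paper
        self.value = {}

    def update(self, emotion, utility):
        if emotion not in self.value:
            # First observation for this emotion initializes the average.
            self.value[emotion] = utility
        else:
            self.value[emotion] = (
                self.decay * self.value[emotion] + (1.0 - self.decay) * utility
            )
        return self.value[emotion]


if __name__ == "__main__":
    ema = EmotionEMA()
    u = contrastive_utility([1.0, 1.0], [0.0, 1.0])
    print(ema.update("surprise", u))
    print(group_relative_advantages([1.0, 0.0, 1.0, 0.0]))
```

Keeping one running average per emotion label is one plausible reading of "emotion-wise inspection tendencies": emotions whose rollouts repeatedly benefit from zooming accumulate a higher smoothed utility.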

MELLM: Exploring LLM-Powered Micro-Expression Understanding Enhanced by Subtle Motion Perception

May 11, 2025

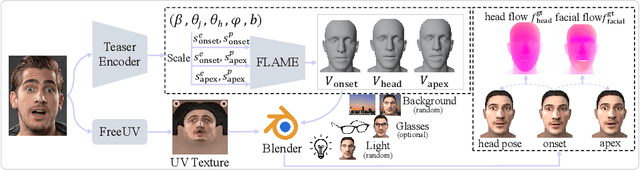

Abstract: Micro-expressions (MEs) are crucial psychological responses with significant potential for affective computing. However, current automatic micro-expression recognition (MER) research primarily focuses on discrete emotion classification, neglecting a convincing analysis of the subtle dynamic movements and inherent emotional cues. The rapid progress in multimodal large language models (MLLMs), known for their strong multimodal comprehension and language generation abilities, offers new possibilities. MLLMs have shown success in various vision-language tasks, indicating their potential to understand MEs comprehensively, including both fine-grained motion patterns and underlying emotional semantics. Nevertheless, challenges remain due to the subtle intensity and short duration of MEs, as existing MLLMs are not designed to capture such delicate frame-level facial dynamics. In this paper, we propose a novel Micro-Expression Large Language Model (MELLM), which incorporates a subtle facial motion perception strategy with the strong inference capabilities of MLLMs, representing the first exploration of MLLMs in the domain of ME analysis. Specifically, to explicitly guide the MLLM toward motion-sensitive regions, we construct an interpretable motion-enhanced color map by fusing onset-apex optical flow dynamics with the corresponding grayscale onset frame as the model input. Additionally, specialized fine-tuning strategies are incorporated to further enhance the model's visual perception of MEs. Furthermore, we construct an instruction-description dataset based on Facial Action Coding System (FACS) annotations and emotion labels to train our MELLM. Comprehensive evaluations across multiple benchmark datasets demonstrate that our model exhibits superior robustness and generalization capabilities in ME understanding (MEU). Code is available at https://github.com/zyzhangUstc/MELLM.
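The abstract describes fusing onset-apex optical flow with the grayscale onset frame into a motion-enhanced color map, but does not give the fusion rule. One common way to do this, shown here purely as an assumption, is an HSV encoding: flow direction becomes hue, normalized flow magnitude becomes saturation, and the grayscale onset frame becomes brightness. The function names and the HSV choice are hypothetical, and the optical flow is assumed to be precomputed by any dense flow estimator.

```python
import numpy as np


def hsv_to_rgb(h, s, v):
    """Vectorized HSV -> RGB conversion for arrays with values in [0, 1]."""
    h6 = h * 6.0
    i = np.floor(h6).astype(int) % 6  # hue sector index, 0..5
    f = h6 - np.floor(h6)             # position inside the sector
    p = v * (1.0 - s)
    q = v * (1.0 - f * s)
    t = v * (1.0 - (1.0 - f) * s)
    r = np.choose(i, [v, q, p, p, t, v])
    g = np.choose(i, [t, v, v, q, p, p])
    b = np.choose(i, [p, p, t, v, v, q])
    return np.stack([r, g, b], axis=-1)


def motion_enhanced_map(flow, gray_onset, eps=1e-8):
    """Fuse a dense onset-to-apex flow field (H, W, 2) with a grayscale
    onset frame (H, W) in [0, 1] into an RGB image (H, W, 3).

    Assumed encoding: hue = flow direction, saturation = relative flow
    magnitude, value = grayscale intensity of the onset frame.
    """
    dx, dy = flow[..., 0], flow[..., 1]
    magnitude = np.hypot(dx, dy)
    hue = (np.arctan2(dy, dx) + np.pi) / (2.0 * np.pi)
    saturation = magnitude / (magnitude.max() + eps)
    return hsv_to_rgb(hue, saturation, gray_onset)


if __name__ == "__main__":
    flow = np.zeros((4, 4, 2))
    flow[1, 2] = (1.0, 0.0)  # a single moving pixel
    gray = np.full((4, 4), 0.5)
    print(motion_enhanced_map(flow, gray).shape)
```

Under this encoding, static regions keep the plain grayscale appearance of the onset frame, while moving regions pick up color, which is one way to make the map interpretable at a glance.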

More is Better: A Database for Spontaneous Micro-Expression with High Frame Rates

Jan 03, 2023

Abstract: As one of the most important psychological stress reactions, micro-expressions (MEs) are spontaneous and transient facial expressions that can reveal the genuine emotions of human beings. Thus, automatic ME recognition (MER) is becoming increasingly crucial in the field of affective computing, and provides essential technical support for lie detection, psychological analysis, and other areas. However, the lack of abundant ME data seriously restricts the development of cutting-edge data-driven MER models. Despite recent efforts to alleviate this problem with several spontaneous ME datasets, the amount of available data remains small. To address this ME data hunger, we construct a dynamic spontaneous ME dataset with the largest current ME data scale, called DFME (Dynamic Facial Micro-expressions), which includes 7,526 well-labeled ME videos elicited from 671 participants and annotated by more than 20 annotators over three years. We then apply four classical spatiotemporal feature learning models to DFME to perform MER experiments that objectively verify the validity of the DFME dataset. In addition, we explore different solutions to the class imbalance and key-frame sequence sampling problems in dynamic MER on DFME, so as to provide a valuable reference for future research. The comprehensive experimental results show that our DFME dataset can facilitate research on automatic MER and provide a new benchmark for MER. DFME will be published via https://mea-lab-421.github.io.
