Wenzhao Jiang

MixTTE: Multi-Level Mixture-of-Experts for Scalable and Adaptive Travel Time Estimation

Jan 06, 2026

Abstract:Accurate Travel Time Estimation (TTE) is critical for ride-hailing platforms, where errors directly impact user experience and operational efficiency. While existing production systems excel at holistic route-level dependency modeling, they struggle to capture city-scale traffic dynamics and long-tail scenarios, leading to unreliable predictions in large urban networks. In this paper, we propose MixTTE, a scalable and adaptive framework that synergistically integrates link-level modeling with industrial route-level TTE systems. Specifically, we propose a spatio-temporal external attention module to capture global traffic dynamic dependencies across million-scale road networks efficiently. Moreover, we construct a stabilized graph mixture-of-experts network to handle heterogeneous traffic patterns while maintaining inference efficiency. Furthermore, an asynchronous incremental learning strategy is tailored to enable real-time and stable adaptation to dynamic traffic distribution shifts. Experiments on real-world datasets validate that MixTTE significantly reduces prediction errors compared to seven baselines. MixTTE has been deployed in DiDi, substantially improving the accuracy and stability of the TTE service.

Simplified Mamba with Disentangled Dependency Encoding for Long-Term Time Series Forecasting

Aug 22, 2024

Abstract:Recently, many deep learning models have been proposed for Long-term Time Series Forecasting (LTSF). Based on previous literature, we identify three critical patterns that can improve forecasting accuracy: the order and semantic dependencies in the time dimension as well as cross-variate dependency. However, little effort has been made to simultaneously consider order and semantic dependencies when developing forecasting models. Moreover, existing approaches utilize cross-variate dependency by mixing information from different timestamps and variates, which may introduce irrelevant or harmful cross-variate information to the time dimension and largely hinder forecasting performance. To overcome these limitations, we investigate the potential of Mamba for LTSF and discover two key advantages benefiting forecasting: (i) the selection mechanism makes Mamba focus on or ignore specific inputs and learn semantic dependency easily, and (ii) Mamba preserves order dependency by processing sequences recursively. After that, we empirically find that the non-linear activation used in Mamba is unnecessary for semantically sparse time series data. Therefore, we further propose SAMBA, a Simplified Mamba with disentangled dependency encoding. Specifically, we first remove the non-linearities of Mamba to make it more suitable for LTSF. Furthermore, we propose a disentangled dependency encoding strategy to endow Mamba with cross-variate dependency modeling capabilities while reducing the interference between time and variate dimensions. Extensive experimental results on seven real-world datasets demonstrate the effectiveness of SAMBA over state-of-the-art forecasting models.

Interpretable Cascading Mixture-of-Experts for Urban Traffic Congestion Prediction

Jun 14, 2024

Abstract:Rapid urbanization has significantly escalated traffic congestion, underscoring the need for advanced congestion prediction services to bolster intelligent transportation systems. As one of the world's largest ride-hailing platforms, DiDi places great emphasis on the accuracy of congestion prediction to enhance the effectiveness and reliability of its real-time services, such as travel time estimation and route planning. Although numerous efforts have been made on congestion prediction, most of them fall short in handling heterogeneous and dynamic spatio-temporal dependencies (e.g., periodic and non-periodic congestions), particularly in the presence of noisy and incomplete traffic data. In this paper, we introduce a Congestion Prediction Mixture-of-Experts, CP-MoE, to address the above challenges. We first propose a sparsely-gated Mixture of Adaptive Graph Learners (MAGLs) with congestion-aware inductive biases to improve the model capacity for efficiently capturing complex spatio-temporal dependencies in varying traffic scenarios. Then, we devise two specialized experts to help identify stable trends and periodic patterns within the traffic data, respectively. By cascading these experts with MAGLs, CP-MoE delivers congestion predictions in a more robust and interpretable manner. Furthermore, an ordinal regression strategy is adopted to facilitate effective collaboration among diverse experts. Extensive experiments on real-world datasets demonstrate the superiority of our proposed method compared with state-of-the-art spatio-temporal prediction models. More importantly, CP-MoE has been deployed in DiDi to improve the accuracy and reliability of the travel time estimation system.

Survey on Trustworthy Graph Neural Networks: From A Causal Perspective

Dec 19, 2023

Abstract:Graph Neural Networks (GNNs) have emerged as powerful representation learning tools for capturing complex dependencies within diverse graph-structured data. Despite their success in a wide range of graph mining tasks, GNNs have raised serious concerns regarding their trustworthiness, including susceptibility to distribution shift, biases towards certain populations, and lack of explainability. Recently, integrating causal learning techniques into GNNs has sparked numerous ground-breaking studies since most of the trustworthiness issues can be alleviated by capturing the underlying data causality rather than superficial correlations. In this survey, we provide a comprehensive review of recent research efforts on causality-inspired GNNs. Specifically, we first present the key trustworthiness risks of existing GNN models through the lens of causality. Moreover, we introduce a taxonomy of Causality-Inspired GNNs (CIGNNs) based on the type of causal learning capability they are equipped with, i.e., causal reasoning and causal representation learning. Besides, we systematically discuss typical methods within each category and demonstrate how they mitigate trustworthiness risks. Finally, we summarize useful resources and discuss several future directions, hoping to shed light on new research opportunities in this emerging field. The representative papers, along with open-source data and code, are available at https://github.com/usail-hkust/Causality-Inspired-GNNs.

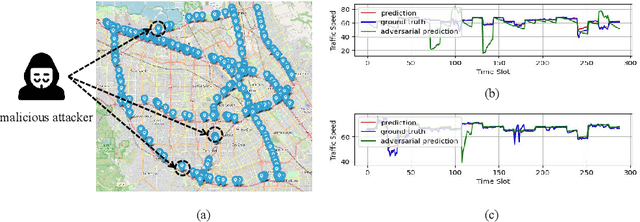

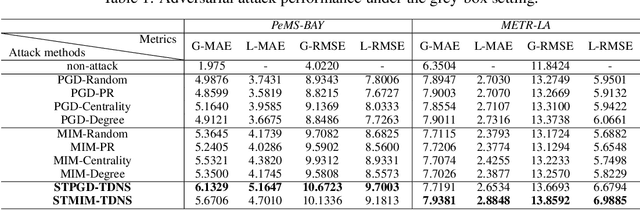

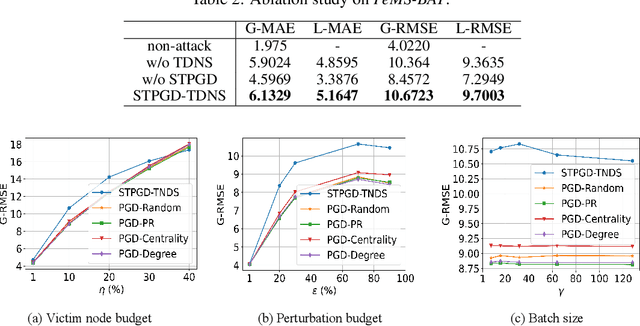

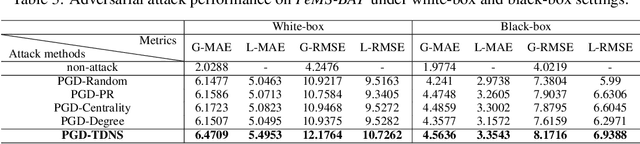

Practical Adversarial Attacks on Spatiotemporal Traffic Forecasting Models

Oct 05, 2022

Abstract:Machine learning based traffic forecasting models leverage sophisticated spatiotemporal auto-correlations to provide accurate predictions of city-wide traffic states. However, existing methods assume a reliable and unbiased forecasting environment, which is not always available in the wild. In this work, we investigate the vulnerability of spatiotemporal traffic forecasting models and propose a practical adversarial spatiotemporal attack framework. Specifically, instead of simultaneously attacking all geo-distributed data sources, an iterative gradient-guided node saliency method is proposed to identify the time-dependent set of victim nodes. Furthermore, we devise a spatiotemporal gradient descent based scheme to generate real-valued adversarial traffic states under a perturbation constraint. Meanwhile, we theoretically demonstrate the worst performance bound of adversarial traffic forecasting attacks. Extensive experiments on two real-world datasets show that the proposed two-step framework achieves up to 67.8% performance degradation on various advanced spatiotemporal forecasting models. Remarkably, we also show that adversarial training with our proposed attacks can significantly improve the robustness of spatiotemporal traffic forecasting models. Our code is available at https://github.com/luckyfan-cs/ASTFA.

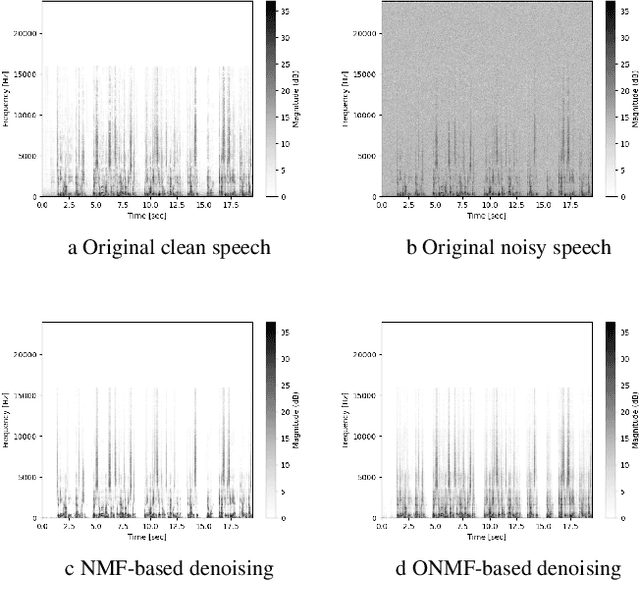

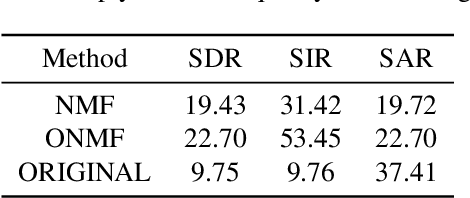

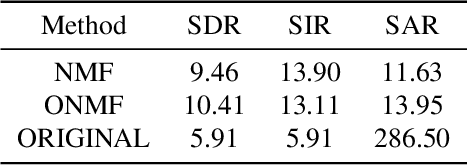

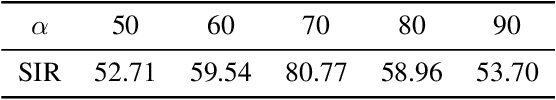

On audio enhancement via online non-negative matrix factorization

Oct 07, 2021

Abstract:We propose a method for noise reduction, the task of producing a clean audio signal from a recording corrupted by additive noise. Many common approaches to this problem are based upon applying non-negative matrix factorization to spectrogram measurements. These methods use a noiseless recording, which is believed to be similar in structure to the signal of interest, and a pure-noise recording to learn dictionaries for the true signal and the noise. One may then construct an approximation of the true signal by projecting the corrupted recording onto the clean dictionary. In this work, we build upon these methods by proposing the use of online non-negative matrix factorization for this problem. This method is more memory efficient than traditional non-negative matrix factorization and also has potential applications to real-time denoising.
