Usama Zafar

Uppsala University

Bayesian Robust Aggregation for Federated Learning

May 05, 2025Abstract:Federated Learning enables collaborative training of machine learning models on decentralized data. This scheme, however, is vulnerable to adversarial attacks, when some of the clients submit corrupted model updates. In real-world scenarios, the total number of compromised clients is typically unknown, with the extent of attacks potentially varying over time. To address these challenges, we propose an adaptive approach for robust aggregation of model updates based on Bayesian inference. The mean update is defined by the maximum of the likelihood marginalized over probabilities of each client to be `honest'. As a result, the method shares the simplicity of the classical average estimators (e.g., sample mean or geometric median), being independent of the number of compromised clients. At the same time, it is as effective against attacks as methods specifically tailored to Federated Learning, such as Krum. We compare our approach with other aggregation schemes in federated setting on three benchmark image classification data sets. The proposed method consistently achieves state-of-the-art performance across various attack types with static and varying number of malicious clients.

Robust Federated Learning Against Poisoning Attacks: A GAN-Based Defense Framework

Mar 26, 2025

Abstract:Federated Learning (FL) enables collaborative model training across decentralized devices without sharing raw data, but it remains vulnerable to poisoning attacks that compromise model integrity. Existing defenses often rely on external datasets or predefined heuristics (e.g. number of malicious clients), limiting their effectiveness and scalability. To address these limitations, we propose a privacy-preserving defense framework that leverages a Conditional Generative Adversarial Network (cGAN) to generate synthetic data at the server for authenticating client updates, eliminating the need for external datasets. Our framework is scalable, adaptive, and seamlessly integrates into FL workflows. Extensive experiments on benchmark datasets demonstrate its robust performance against a variety of poisoning attacks, achieving high True Positive Rate (TPR) and True Negative Rate (TNR) of malicious and benign clients, respectively, while maintaining model accuracy. The proposed framework offers a practical and effective solution for securing federated learning systems.

Reward-Based 1-bit Compressed Federated Distillation on Blockchain

Jun 27, 2021

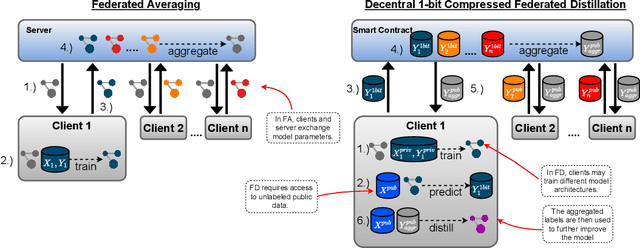

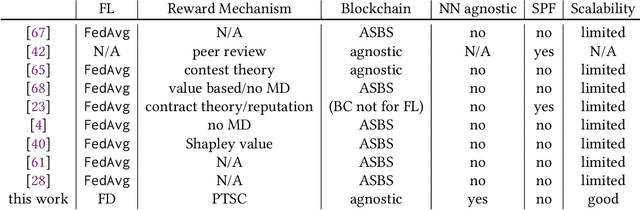

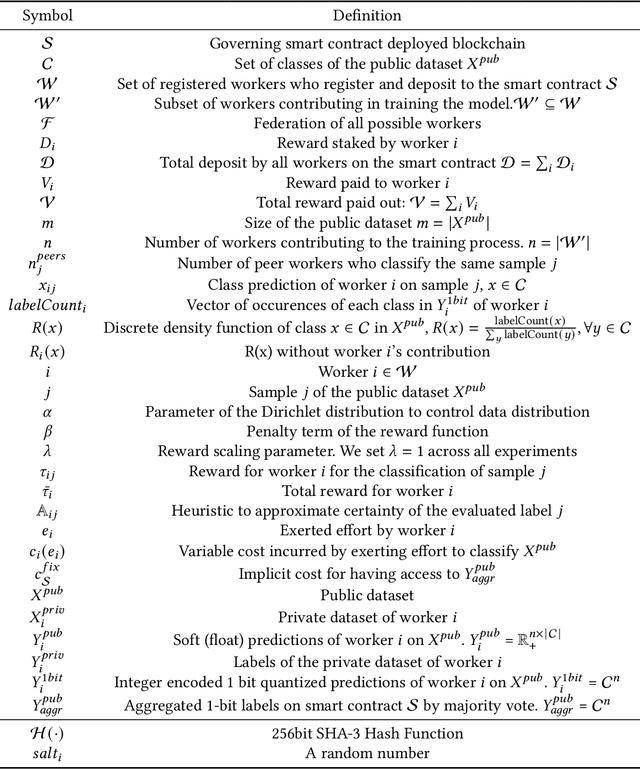

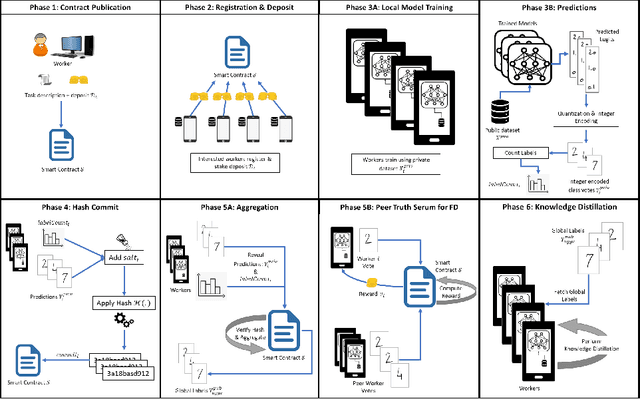

Abstract:The recent advent of various forms of Federated Knowledge Distillation (FD) paves the way for a new generation of robust and communication-efficient Federated Learning (FL), where mere soft-labels are aggregated, rather than whole gradients of Deep Neural Networks (DNN) as done in previous FL schemes. This security-per-design approach in combination with increasingly performant Internet of Things (IoT) and mobile devices opens up a new realm of possibilities to utilize private data from industries as well as from individuals as input for artificial intelligence model training. Yet in previous FL systems, lack of trust due to the imbalance of power between workers and a central authority, the assumption of altruistic worker participation and the inability to correctly measure and compare contributions of workers hinder this technology from scaling beyond small groups of already entrusted entities towards mass adoption. This work aims to mitigate the aforementioned issues by introducing a novel decentralized federated learning framework where heavily compressed 1-bit soft-labels, resembling 1-hot label predictions, are aggregated on a smart contract. In a context where workers' contributions are now easily comparable, we modify the Peer Truth Serum for Crowdsourcing mechanism (PTSC) for FD to reward honest participation based on peer consistency in an incentive compatible fashion. Due to heavy reductions of both computational complexity and storage, our framework is a fully on-blockchain FL system that is feasible on simple smart contracts and therefore blockchain agnostic. We experimentally test our new framework and validate its theoretical properties.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge