Tongzhou Jiang

Trajectory Tracking Using Frenet Coordinates with Deep Deterministic Policy Gradient

Nov 21, 2024Abstract:This paper studies the application of the DDPG algorithm in trajectory-tracking tasks and proposes a trajectorytracking control method combined with Frenet coordinate system. By converting the vehicle's position and velocity information from the Cartesian coordinate system to Frenet coordinate system, this method can more accurately describe the vehicle's deviation and travel distance relative to the center line of the road. The DDPG algorithm adopts the Actor-Critic framework, uses deep neural networks for strategy and value evaluation, and combines the experience replay mechanism and target network to improve the algorithm's stability and data utilization efficiency. Experimental results show that the DDPG algorithm based on Frenet coordinate system performs well in trajectory-tracking tasks in complex environments, achieves high-precision and stable path tracking, and demonstrates its application potential in autonomous driving and intelligent transportation systems. Keywords- DDPG; path tracking; robot navigation

Prioritized experience replay-based DDQN for Unmanned Vehicle Path Planning

Jun 25, 2024

Abstract:Path planning module is a key module for autonomous vehicle navigation, which directly affects its operating efficiency and safety. In complex environments with many obstacles, traditional planning algorithms often cannot meet the needs of intelligence, which may lead to problems such as dead zones in unmanned vehicles. This paper proposes a path planning algorithm based on DDQN and combines it with the prioritized experience replay method to solve the problem that traditional path planning algorithms often fall into dead zones. A series of simulation experiment results prove that the path planning algorithm based on DDQN is significantly better than other methods in terms of speed and accuracy, especially the ability to break through dead zones in extreme environments. Research shows that the path planning algorithm based on DDQN performs well in terms of path quality and safety. These research results provide an important reference for the research on automatic navigation of autonomous vehicles.

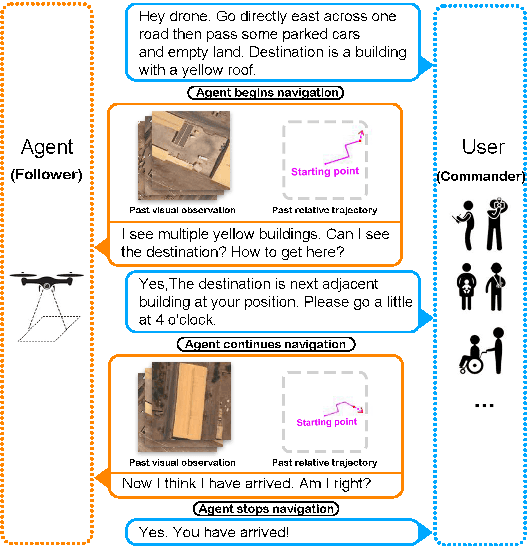

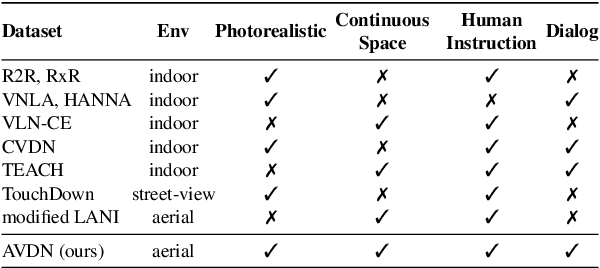

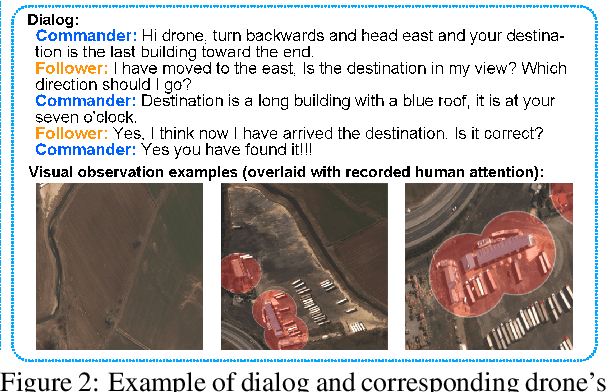

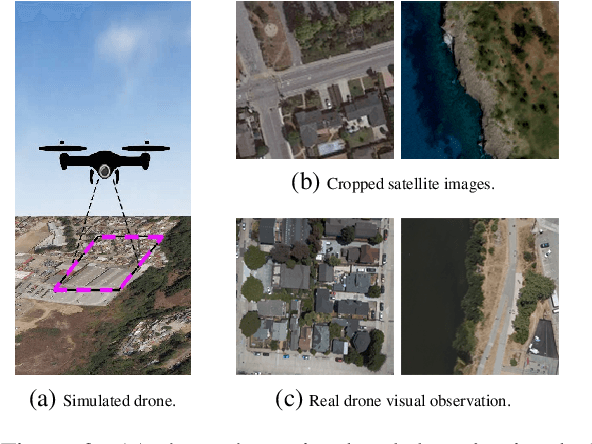

Aerial Vision-and-Dialog Navigation

May 24, 2022

Abstract:The ability to converse with humans and follow commands in natural language is crucial for intelligent unmanned aerial vehicles (a.k.a. drones). It can relieve people's burden of holding a controller all the time, allow multitasking, and make drone control more accessible for people with disabilities or with their hands occupied. To this end, we introduce Aerial Vision-and-Dialog Navigation (AVDN), to navigate a drone via natural language conversation. We build a drone simulator with a continuous photorealistic environment and collect a new AVDN dataset of over 3k recorded navigation trajectories with asynchronous human-human dialogs between commanders and followers. The commander provides initial navigation instruction and further guidance by request, while the follower navigates the drone in the simulator and asks questions when needed. During data collection, followers' attention on the drone's visual observation is also recorded. Based on the AVDN dataset, we study the tasks of aerial navigation from (full) dialog history and propose an effective Human Attention Aided (HAA) baseline model, which learns to predict both navigation waypoints and human attention. Dataset and code will be released.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge