Tetsuya Narita

PLATO Hand: Shaping Contact Behavior with Fingernails for Precise Manipulation

Feb 05, 2026Abstract:We present the PLATO Hand, a dexterous robotic hand with a hybrid fingertip that embeds a rigid fingernail within a compliant pulp. This design shapes contact behavior to enable diverse interaction modes across a range of object geometries. We develop a strain-energy-based bending-indentation model to guide the fingertip design and to explain how guided contact preserves local indentation while suppressing global bending. Experimental results show that the proposed robotic hand design demonstrates improved pinching stability, enhanced force observability, and successful execution of edge-sensitive manipulation tasks, including paper singulation, card picking, and orange peeling. Together, these results show that coupling structured contact geometry with a force-motion transparent mechanism provides a principled, physically embodied approach to precise manipulation.

Human-Exoskeleton Kinematic Calibration to Improve Hand Tracking for Dexterous Teleoperation

Jul 31, 2025Abstract:Hand exoskeletons are critical tools for dexterous teleoperation and immersive manipulation interfaces, but achieving accurate hand tracking remains a challenge due to user-specific anatomical variability and donning inconsistencies. These issues lead to kinematic misalignments that degrade tracking performance and limit applicability in precision tasks. We propose a subject-specific calibration framework for exoskeleton-based hand tracking that uses redundant joint sensing and a residual-weighted optimization strategy to estimate virtual link parameters. Implemented on the Maestro exoskeleton, our method improves joint angle and fingertip position estimation across users with varying hand geometries. We introduce a data-driven approach to empirically tune cost function weights using motion capture ground truth, enabling more accurate and consistent calibration across participants. Quantitative results from seven subjects show substantial reductions in joint and fingertip tracking errors compared to uncalibrated and evenly weighted models. Qualitative visualizations using a Unity-based virtual hand further confirm improvements in motion fidelity. The proposed framework generalizes across exoskeleton designs with closed-loop kinematics and minimal sensing, and lays the foundation for high-fidelity teleoperation and learning-from-demonstration applications.

Few-shot transfer of tool-use skills using human demonstrations with proximity and tactile sensing

Jul 17, 2025

Abstract:Tools extend the manipulation abilities of robots, much like they do for humans. Despite human expertise in tool manipulation, teaching robots these skills faces challenges. The complexity arises from the interplay of two simultaneous points of contact: one between the robot and the tool, and another between the tool and the environment. Tactile and proximity sensors play a crucial role in identifying these complex contacts. However, learning tool manipulation using these sensors remains challenging due to limited real-world data and the large sim-to-real gap. To address this, we propose a few-shot tool-use skill transfer framework using multimodal sensing. The framework involves pre-training the base policy to capture contact states common in tool-use skills in simulation and fine-tuning it with human demonstrations collected in the real-world target domain to bridge the domain gap. We validate that this framework enables teaching surface-following tasks using tools with diverse physical and geometric properties with a small number of demonstrations on the Franka Emika robot arm. Our analysis suggests that the robot acquires new tool-use skills by transferring the ability to recognise tool-environment contact relationships from pre-trained to fine-tuned policies. Additionally, combining proximity and tactile sensors enhances the identification of contact states and environmental geometry.

Strategic Jenga Play via Graph Based Dynamics Modeling

May 14, 2025

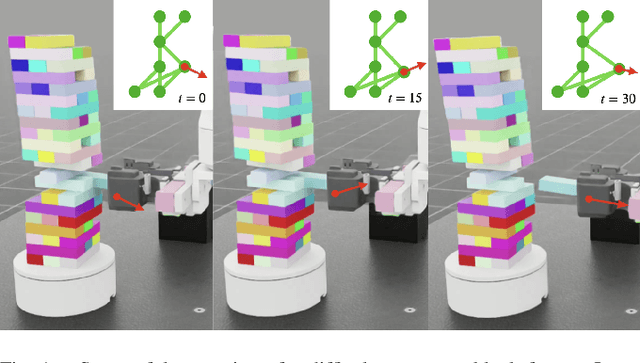

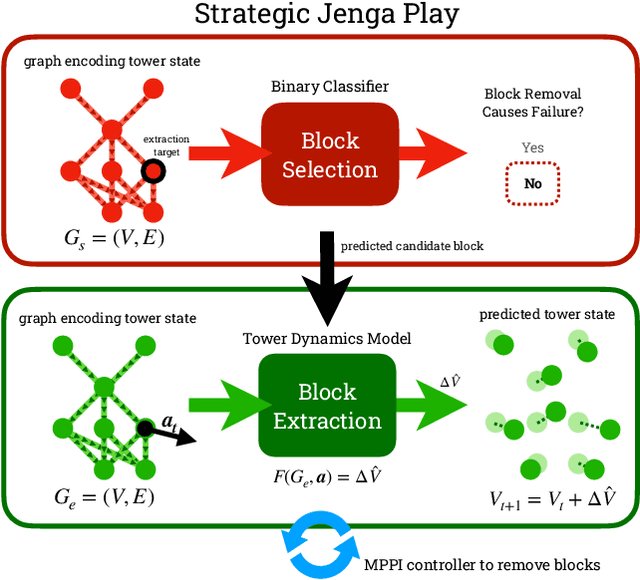

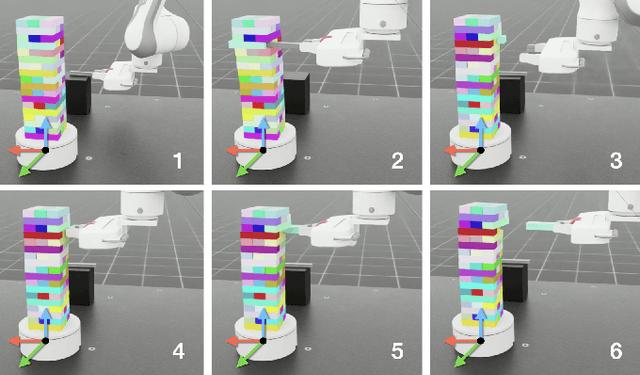

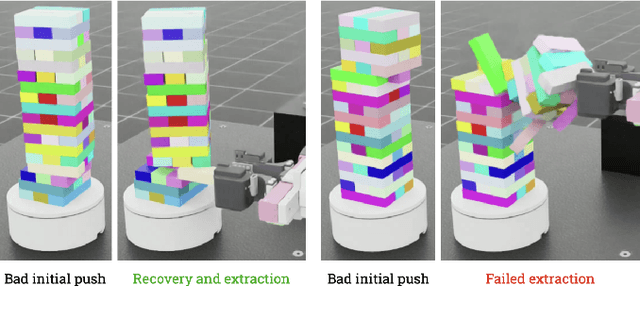

Abstract:Controlled manipulation of multiple objects whose dynamics are closely linked is a challenging problem within contact-rich manipulation, requiring an understanding of how the movement of one will impact the others. Using the Jenga game as a testbed to explore this problem, we graph-based modeling to tackle two different aspects of the task: 1) block selection and 2) block extraction. For block selection, we construct graphs of the Jenga tower and attempt to classify, based on the tower's structure, whether removing a given block will cause the tower to collapse. For block extraction, we train a dynamics model that predicts how all the blocks in the tower will move at each timestep in an extraction trajectory, which we then use in a sampling-based model predictive control loop to safely pull blocks out of the tower with a general-purpose parallel-jaw gripper. We train and evaluate our methods in simulation, demonstrating promising results towards block selection and block extraction on a challenging set of full-sized Jenga towers, even at advanced stages of the game.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge