Tat-Jun Chin

The University of Adelaide

BPnP: Further Empowering End-to-End Learning with Back-Propagatable Geometric Optimization

Sep 13, 2019

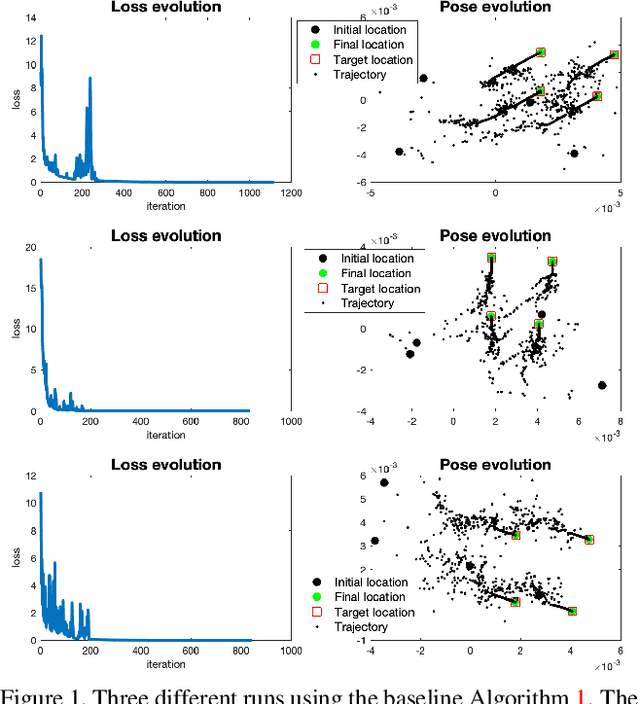

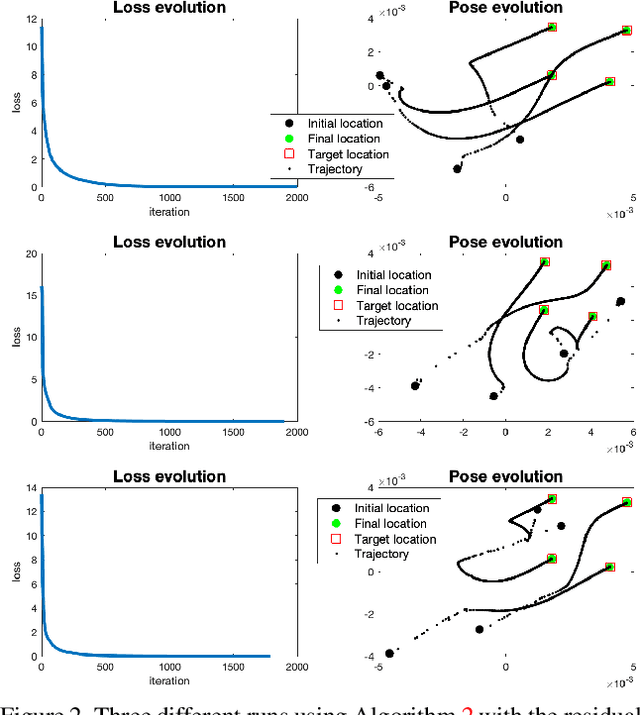

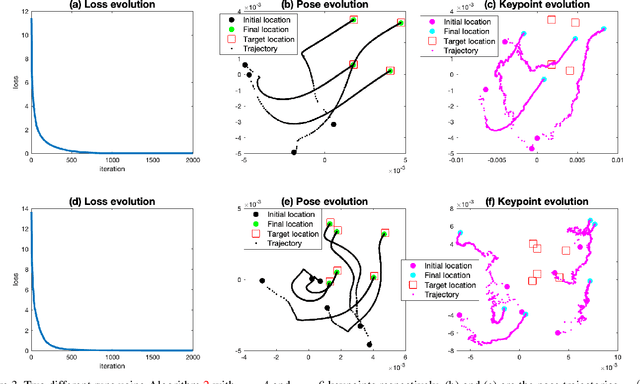

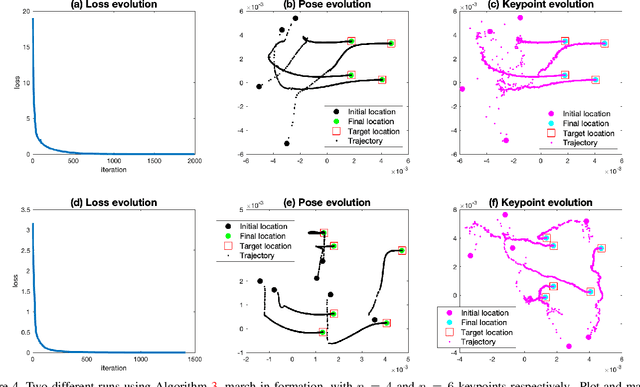

Abstract: In this paper we present BPnP, a novel method for back-propagating through a PnP solver. We show that the gradients of such a geometric optimization process can be computed using the Implicit Function Theorem, as if the process were differentiable. Furthermore, we develop a residual-conformity trick to make end-to-end pose regression using BPnP smooth and stable. We also propose a "march in formation" algorithm which successfully uses BPnP for keypoint regression. Our invention opens a door to vast possibilities. The ability to incorporate geometric optimization in end-to-end learning will greatly boost its power and promote innovations in various computer vision tasks.
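The Implicit Function Theorem idea mentioned in this abstract can be illustrated on a toy problem. The sketch below is not the BPnP implementation: it assumes a hypothetical scalar objective `f(x, theta) = (x - theta)**2 + 0.1 * x**4` in place of a PnP reprojection cost, solves for the minimiser with Newton's method, and then obtains the derivative of the minimiser with respect to `theta` from the stationarity condition, without differentiating through the solver iterations. All function names are illustrative.

```python
# Toy objective (an assumption for illustration): f(x, theta) = (x - theta)**2 + 0.1 * x**4

def grad_x(x, theta):
    """First derivative of f with respect to x."""
    return 2.0 * (x - theta) + 0.4 * x**3

def hess_xx(x, theta):
    """Second derivative of f with respect to x (always positive here)."""
    return 2.0 + 1.2 * x**2

def hess_xtheta(x, theta):
    """Mixed second derivative of f with respect to x and theta."""
    return -2.0

def solve(theta, iters=50):
    """Find x*(theta) = argmin_x f(x, theta) with Newton's method."""
    x = theta
    for _ in range(iters):
        x -= grad_x(x, theta) / hess_xx(x, theta)
    return x

def implicit_grad(theta):
    """dx*/dtheta from the Implicit Function Theorem applied to grad_x(x*, theta) = 0."""
    x = solve(theta)
    return -hess_xtheta(x, theta) / hess_xx(x, theta)

def finite_diff_grad(theta, eps=1e-6):
    """Central finite difference through the solver, used only as a check."""
    return (solve(theta + eps) - solve(theta - eps)) / (2.0 * eps)

print(implicit_grad(1.5), finite_diff_grad(1.5))
```

The two printed values should agree closely, which suggests that the solver can be treated as a differentiable layer even though its iterations are never unrolled.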

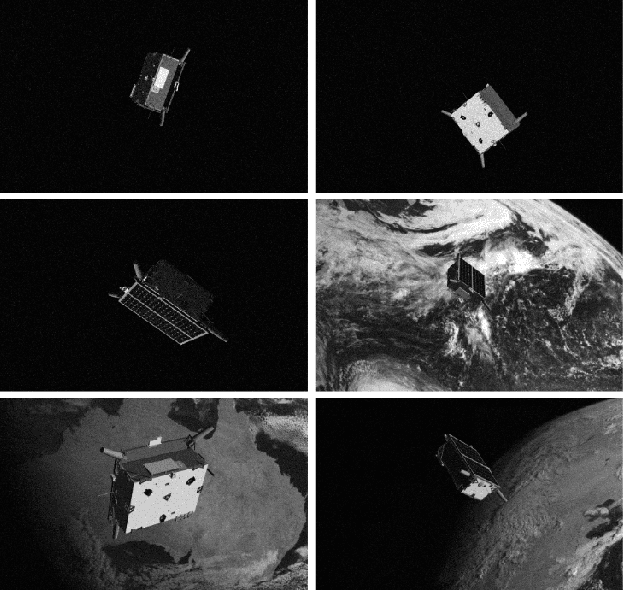

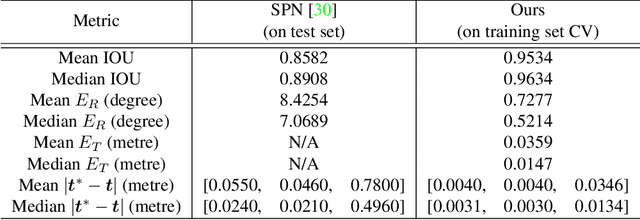

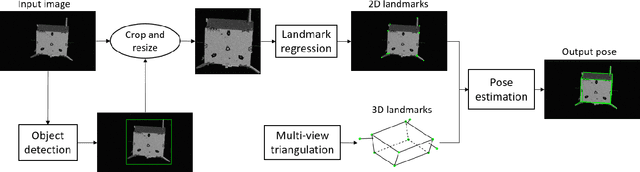

Satellite Pose Estimation with Deep Landmark Regression and Nonlinear Pose Refinement

Aug 30, 2019

Abstract: We propose an approach to estimate the 6DOF pose of a satellite, relative to a canonical pose, from a single image. Such a problem is crucial in many space proximity operations, such as docking, debris removal, and inter-spacecraft communications. Our approach combines machine learning and geometric optimisation, by predicting the coordinates of a set of landmarks in the input image, associating the landmarks with their corresponding 3D points on an a priori reconstructed 3D model, then solving for the object pose using non-linear optimisation. Our approach is not only novel for this specific pose estimation task, which helps to further open up a relatively new domain for machine learning and computer vision, but it also demonstrates superior accuracy and won first place in the recent Kelvins Pose Estimation Challenge organised by the European Space Agency (ESA).

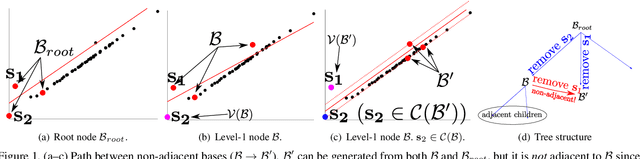

Consensus Maximization Tree Search Revisited

Aug 25, 2019

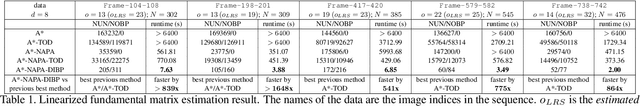

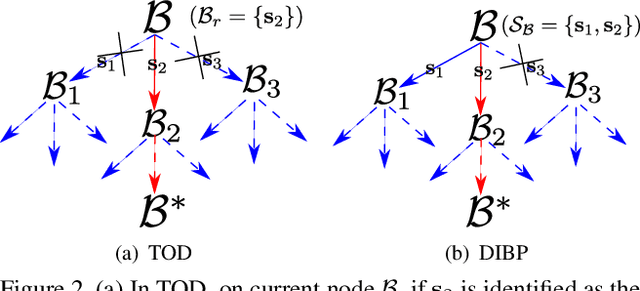

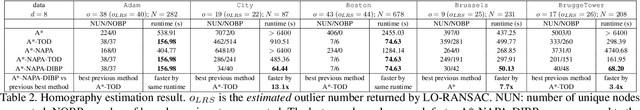

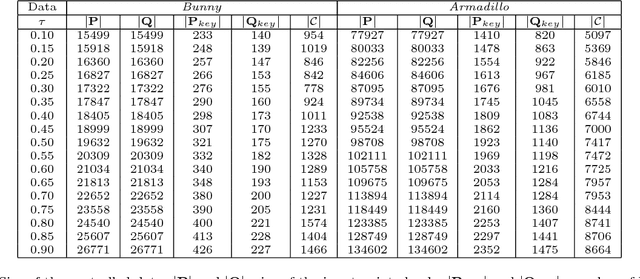

Abstract: Consensus maximization is widely used for robust fitting in computer vision. However, solving it exactly, i.e., finding the globally optimal solution, is intractable. A* tree search, which has been shown to be fixed-parameter tractable, is one of the most efficient exact methods, though it is still limited to small inputs. We make two key contributions towards improving A* tree search. First, we show that the consensus maximization tree structure used previously actually contains paths that connect nodes at both adjacent and non-adjacent levels. Crucially, paths connecting non-adjacent levels are redundant for tree search, but they were not avoided previously. We propose a new acceleration strategy that avoids such redundant paths. In the second contribution, we show that the existing branch pruning technique also deteriorates quickly with the problem dimension. We then propose a new branch pruning technique that is less dimension-sensitive to address this issue. Experiments show that both new techniques can significantly accelerate A* tree search, making it reasonably efficient on inputs that were previously out of reach.
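To make the consensus maximization objective concrete, here is a minimal sketch for 2D line fitting. It is not the A* tree search from the paper and is not an exact solver: as a simplifying assumption it only tries lines passing through pairs of input points and counts how many points fall within a tolerance `eps`. The data, `eps`, and function names are made up for illustration.

```python
from itertools import combinations

def consensus(model, points, eps):
    """Count points whose vertical residual to the line y = a*x + b is at most eps."""
    a, b = model
    return sum(1 for x, y in points if abs(y - (a * x + b)) <= eps)

def best_line_by_pairs(points, eps):
    """Try the line through every pair of points; keep the one with the largest consensus."""
    best_model, best_count = None, -1
    for (x1, y1), (x2, y2) in combinations(points, 2):
        if x1 == x2:
            continue  # vertical line cannot be written as y = a*x + b
        a = (y2 - y1) / (x2 - x1)
        b = y1 - a * x1
        count = consensus((a, b), points, eps)
        if count > best_count:
            best_model, best_count = (a, b), count
    return best_model, best_count

# Four points on y = x and one gross outlier.
points = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (3.0, 3.0), (4.0, 10.0)]
model, count = best_line_by_pairs(points, eps=0.1)
print(model, count)
```

An exact method has to search over all subsets that can form the consensus set, which is where the intractability discussed in the abstract comes from.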

Scalable Place Recognition Under Appearance Change for Autonomous Driving

Aug 01, 2019

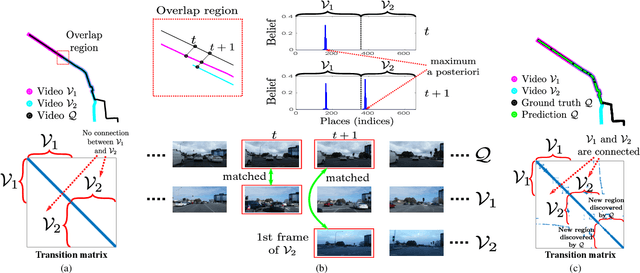

Abstract: A major challenge in place recognition for autonomous driving is to be robust against appearance changes due to short-term (e.g., weather, lighting) and long-term (seasons, vegetation growth, etc.) environmental variations. A promising solution is to continuously accumulate images to maintain an adequate sample of the conditions and incorporate new changes into the place recognition decision. However, this demands a place recognition technique that is scalable on an ever growing dataset. To this end, we propose a novel place recognition technique that can be efficiently retrained and compressed, such that the recognition of new queries can exploit all available data (including recent changes) without suffering from visible growth in computational cost. Underpinning our method is a novel temporal image matching technique based on Hidden Markov Models. Our experiments show that, compared to state-of-the-art techniques, our method has much greater potential for large-scale place recognition for autonomous driving.
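As a rough illustration of temporal matching with a Hidden Markov Model, the sketch below runs the standard HMM forward (filtering) recursion over a handful of discrete places. It is a generic textbook filter, not the technique proposed in the paper; the three-place loop, the transition matrix, and the per-frame likelihoods are all assumed values.

```python
def forward_step(belief, transition, likelihood):
    """One HMM filtering step: predict with the transition model, then weight by the observation."""
    n = len(belief)
    predicted = [sum(belief[i] * transition[i][j] for i in range(n)) for j in range(n)]
    unnormalised = [predicted[j] * likelihood[j] for j in range(n)]
    total = sum(unnormalised)
    return [u / total for u in unnormalised]

# Assumed motion model: at each frame the vehicle stays put or moves to the next place (cyclic).
transition = [
    [0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5],
    [0.5, 0.0, 0.5],
]

# Assumed image-matching likelihoods for two consecutive query frames.
observations = [
    [0.8, 0.1, 0.1],
    [0.1, 0.8, 0.1],
]

belief = [1.0 / 3.0] * 3  # uniform prior over places
for likelihood in observations:
    belief = forward_step(belief, transition, likelihood)

most_likely_place = max(range(len(belief)), key=belief.__getitem__)
print(belief, most_likely_place)
```

The belief after each frame combines the image evidence with where the vehicle could plausibly have moved, which is the benefit of matching sequences rather than single images.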

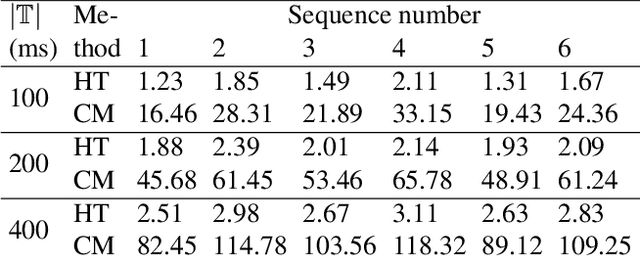

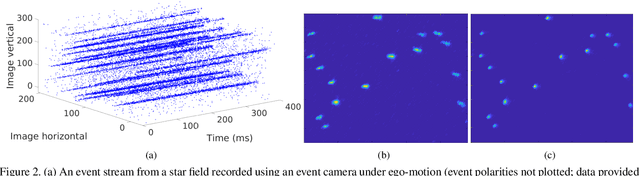

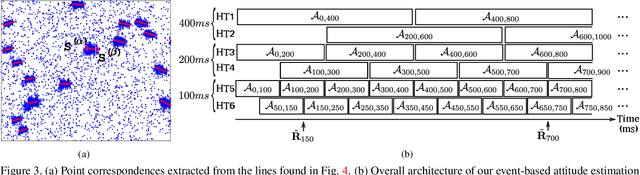

Event-based Star Tracking via Multiresolution Progressive Hough Transforms

Jun 19, 2019

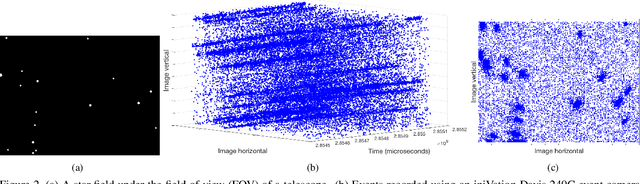

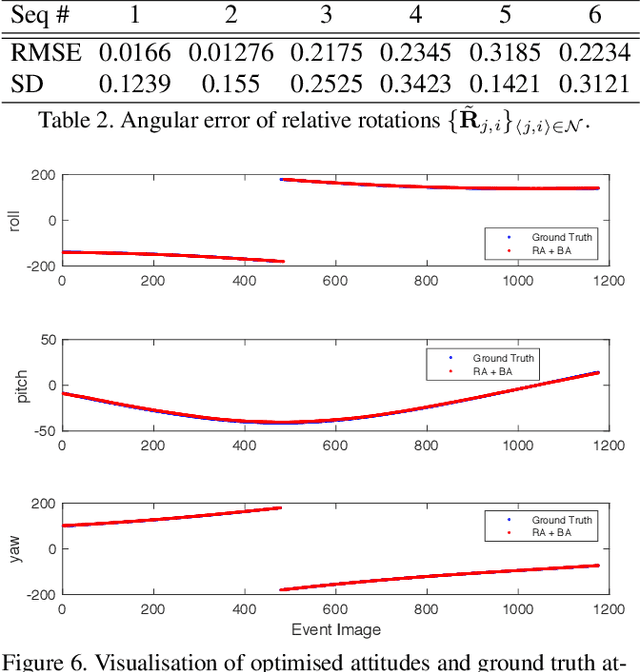

Abstract: Star trackers are state-of-the-art attitude estimation devices which function by recognising and tracking star patterns. Most commercial star trackers use conventional optical sensors. A recent alternative is to use event sensors, which could enable more energy efficient and faster star trackers. However, this demands new algorithms that can efficiently cope with high-speed asynchronous data, and are feasible on resource-constrained computing platforms. To this end, we propose an event-based processing approach for star tracking. Our technique operates on the event stream from a star field, by using multiresolution Hough Transforms to time-progressively integrate event data and produce accurate relative rotations. Optimisation via rotation averaging is then used to fuse the relative rotations and jointly refine the absolute orientations. Our technique is designed to be feasible for asynchronous operation on standard hardware. Moreover, compared to state-of-the-art event-based motion estimation schemes, our technique is much more efficient and accurate.

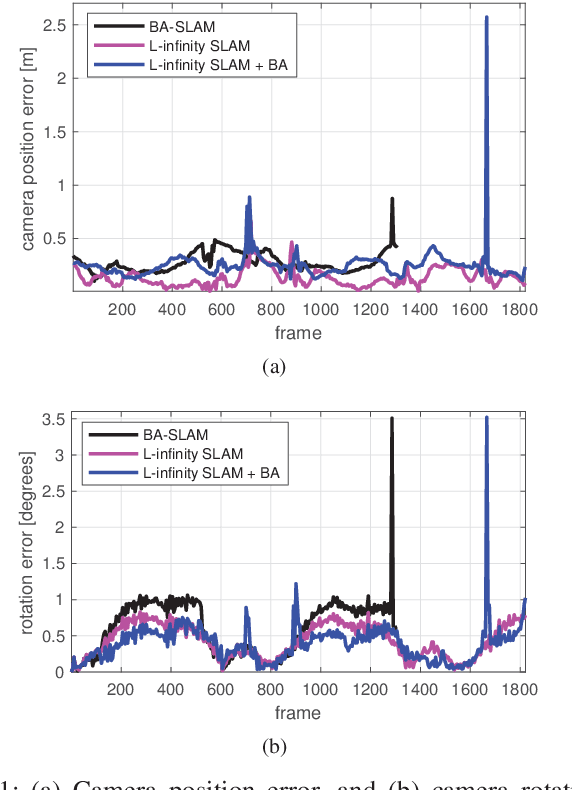

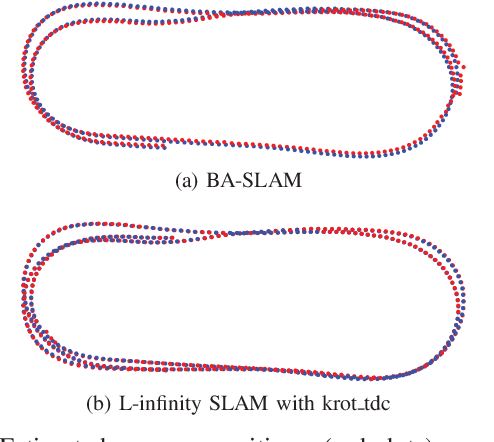

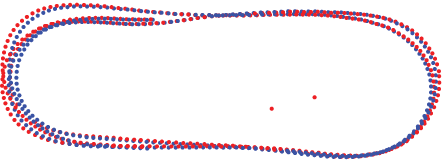

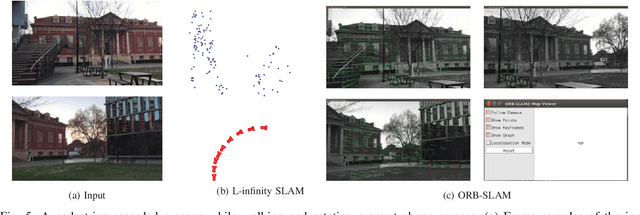

Visual SLAM: Why Bundle Adjust?

Feb 11, 2019

Abstract: Bundle adjustment plays a vital role in feature-based monocular SLAM. In many modern SLAM pipelines, bundle adjustment is performed to estimate the 6DOF camera trajectory and 3D map (3D point cloud) from the input feature tracks. However, two fundamental weaknesses plague SLAM systems based on bundle adjustment. First, the need to carefully initialise bundle adjustment means that all variables, in particular the map, must be estimated as accurately as possible and maintained over time, which makes the overall algorithm cumbersome. Second, since estimating the 3D structure (which requires sufficient baseline) is inherent in bundle adjustment, the SLAM algorithm will encounter difficulties during periods of slow motion or pure rotational motion. We propose a different SLAM optimisation core: instead of bundle adjustment, we conduct rotation averaging to incrementally optimise only camera orientations. Given the orientations, we estimate the camera positions and 3D points via a quasi-convex formulation that can be solved efficiently and globally optimally. Our approach not only obviates the need to estimate and maintain the positions and 3D map at keyframe rate (which enables simpler SLAM systems), it is also more capable of handling slow motions or pure rotational motions.
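Rotation averaging recovers absolute orientations from pairwise relative rotations. The sketch below shows the idea in a heavily simplified setting that is an assumption of this example, not the paper's formulation: rotations are planar (a single angle each), measurements are noise-free, and the solver is a plain coordinate-wise update in which each camera's angle is replaced by the circular mean of the values its neighbours predict for it. Camera 0 is fixed at angle 0 to remove the global rotation ambiguity.

```python
import math

def circular_mean(angles):
    """Mean direction of a list of angles, computed via unit vectors."""
    return math.atan2(sum(math.sin(a) for a in angles),
                      sum(math.cos(a) for a in angles))

def average_rotations(n, relative, sweeps=100):
    """Estimate n absolute angles from relative[(i, j)] = theta_j - theta_i measurements."""
    theta = [0.0] * n  # camera 0 stays at 0 (gauge fixing)
    for _ in range(sweeps):
        for k in range(1, n):
            predictions = []
            for (i, j), r in relative.items():
                if j == k:
                    predictions.append(theta[i] + r)  # neighbour i predicts theta_k
                elif i == k:
                    predictions.append(theta[j] - r)  # neighbour j predicts theta_k
            if predictions:
                theta[k] = circular_mean(predictions)
    return theta

# Hypothetical ground truth, used only to synthesise relative measurements.
truth = [0.0, 0.3, 0.7, 1.2]
relative = {(i, j): truth[j] - truth[i] for i in range(4) for j in range(i + 1, 4)}

estimate = average_rotations(4, relative)
print(estimate)
```

Note that no 3D points or camera positions appear anywhere in this optimisation, which is the property the abstract relies on for handling slow or purely rotational motion.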

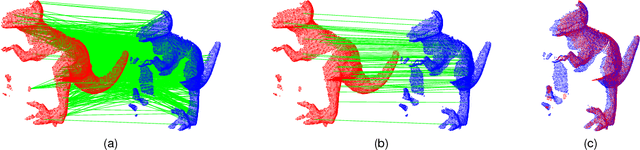

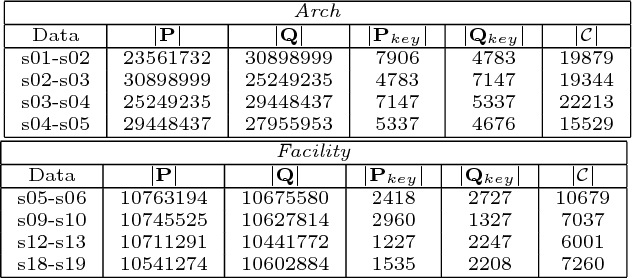

A Practical Maximum Clique Algorithm for Matching with Pairwise Constraints

Feb 05, 2019

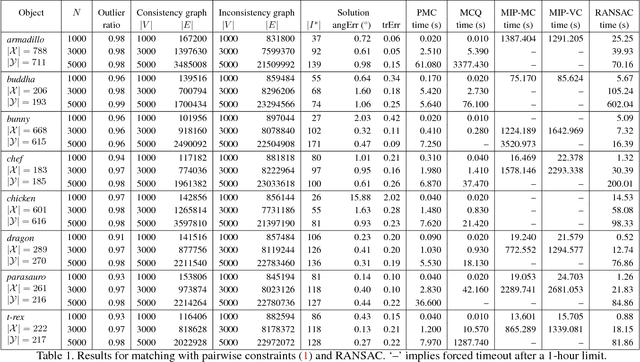

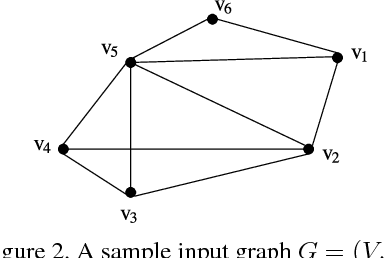

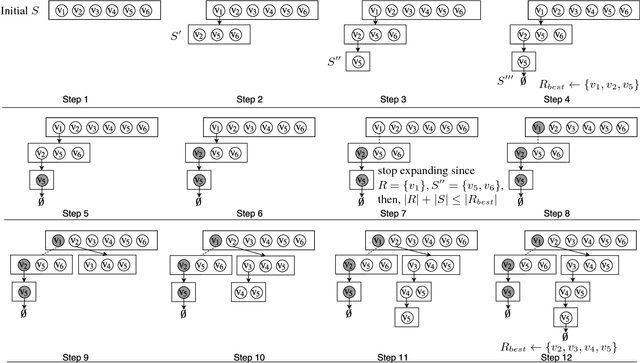

Abstract: A popular paradigm for 3D point cloud registration is to extract 3D keypoint correspondences, then estimate the registration function from the correspondences using a robust algorithm. However, many existing 3D keypoint techniques tend to produce large proportions of erroneous correspondences or outliers, which significantly increases the cost of robust estimation. An alternative approach is to directly search for the subset of correspondences that are pairwise consistent, without optimising the registration function. This gives rise to the combinatorial problem of matching with pairwise constraints. In this paper, we propose a very efficient maximum clique algorithm to solve matching with pairwise constraints. Our technique combines tree searching with efficient bounding and pruning based on graph colouring. We demonstrate that, despite the theoretical intractability, many real problem instances can be solved exactly and quickly (seconds to minutes) with our algorithm, which makes our approach an excellent alternative to standard robust techniques for 3D registration.
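The combination of tree search with a graph-colouring bound can be sketched with a classic textbook-style branch-and-bound for maximum clique. This is a generic illustration and should not be read as the paper's algorithm. It assumes a graph whose vertices stand for candidate correspondences and whose edges mark pairwise-consistent pairs; the small graph below is invented for the example.

```python
def greedy_colour_order(cands, adj):
    """Greedily colour cands; return vertices grouped by colour and the colour count so far."""
    order, bounds = [], []
    uncoloured = list(cands)
    colour = 0
    while uncoloured:
        colour += 1
        available = list(uncoloured)
        while available:
            v = available.pop(0)
            uncoloured.remove(v)
            # Vertices adjacent to v cannot share its colour.
            available = [u for u in available if u not in adj[v]]
            order.append(v)
            bounds.append(colour)
    return order, bounds

def max_clique(adj):
    """Exact maximum clique by tree search, pruned with the colouring upper bound."""
    best = []

    def expand(clique, cands):
        nonlocal best
        order, bounds = greedy_colour_order(cands, adj)
        for i in range(len(order) - 1, -1, -1):
            # A clique inside order[:i+1] has at most bounds[i] vertices.
            if len(clique) + bounds[i] <= len(best):
                return
            v = order[i]
            new_clique = clique + [v]
            new_cands = [u for u in order[:i] if u in adj[v]]
            if new_cands:
                expand(new_clique, new_cands)
            elif len(new_clique) > len(best):
                best = new_clique

    expand([], sorted(adj))
    return sorted(best)

# Hypothetical consistency graph: vertices 0-3 are mutually consistent, 4 and 5 are not.
adj = {
    0: {1, 2, 3},
    1: {0, 2, 3},
    2: {0, 1, 3},
    3: {0, 1, 2, 4},
    4: {3, 5},
    5: {4},
}
print(max_clique(adj))
```

The colouring bound works because vertices in a clique must all receive different colours, so the number of colours used on a candidate set limits how large a clique it can contain.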

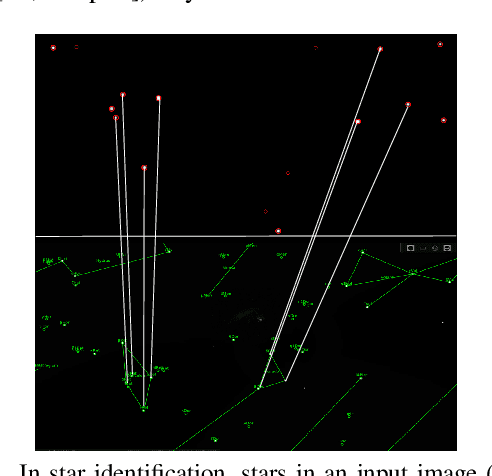

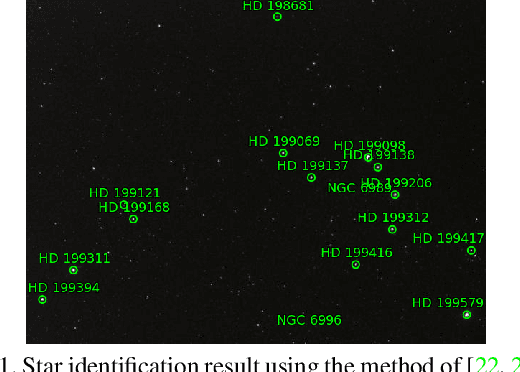

Star Tracking using an Event Camera

Dec 07, 2018

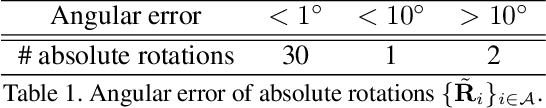

Abstract: Star trackers are primarily optical devices that are used to estimate the attitude of a spacecraft by recognising and tracking star patterns. Currently, most star trackers use conventional optical sensors. In this application paper, we propose the usage of event sensors for star tracking. There are potentially two benefits of using event sensors for star tracking: lower power consumption and higher operating speeds. Our main contribution is to formulate an algorithmic pipeline for star tracking from event data that includes novel formulations of rotation averaging and bundle adjustment. In addition, we also release with this paper a dataset for star tracking using event cameras. With this work, we introduce the problem of star tracking using event cameras to the computer vision community, whose expertise in SLAM and geometric optimisation can be brought to bear on this commercially important application.

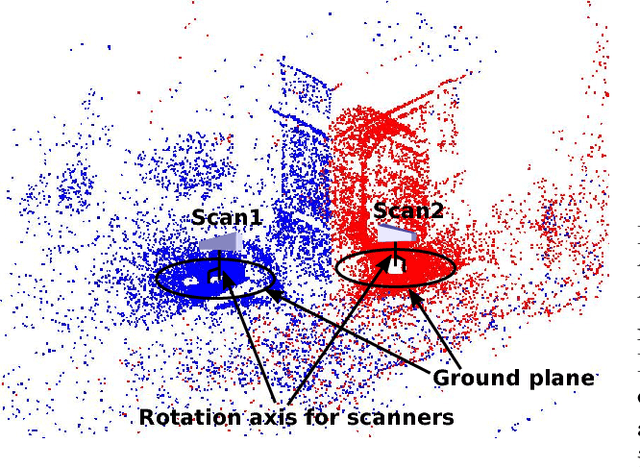

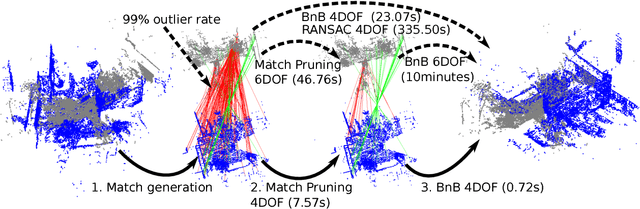

Practical optimal registration of terrestrial LiDAR scan pairs

Nov 30, 2018

Abstract: Point cloud registration is a fundamental problem in 3D scanning. In this paper, we address the frequent special case of registering terrestrial LiDAR scans (or, more generally, levelled point clouds). Many current solutions still rely on the Iterative Closest Point (ICP) method or other heuristic procedures, which require good initializations to succeed and/or provide no guarantees of success. On the other hand, exact or optimal registration algorithms can compute the best possible solution without requiring initializations; however, they are currently too slow to be practical in realistic applications. Existing optimal approaches ignore the fact that in routine use the relative rotations between scans are constrained to the azimuth, via the built-in level compensation in LiDAR scanners. We propose a novel, optimal and computationally efficient registration method for this 4DOF scenario. Our approach operates on candidate 3D keypoint correspondences, and contains two main steps: (1) a deterministic selection scheme that significantly reduces the candidate correspondence set in a way that is guaranteed to preserve the optimal solution; and (2) a fast branch-and-bound (BnB) algorithm with a novel polynomial-time subroutine for 1D rotation search, that quickly finds the optimal alignment for the reduced set. We demonstrate the practicality of our method on realistic point clouds from multiple LiDAR surveys.

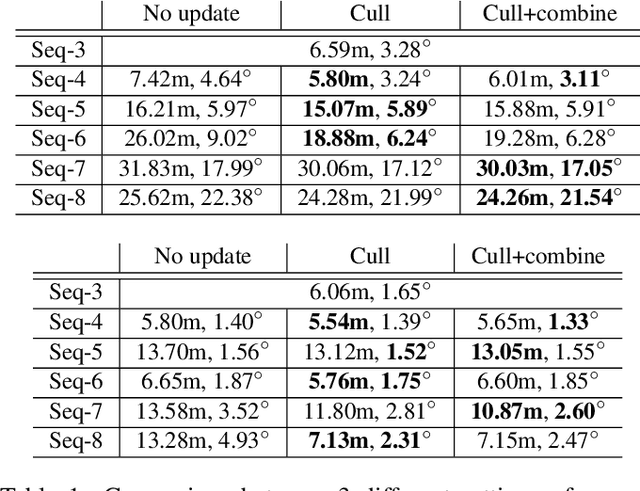

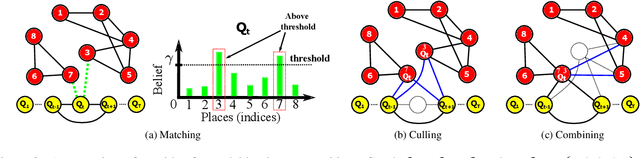

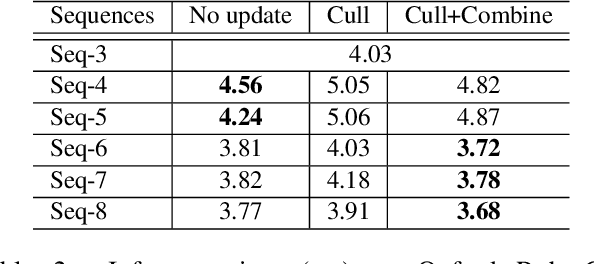

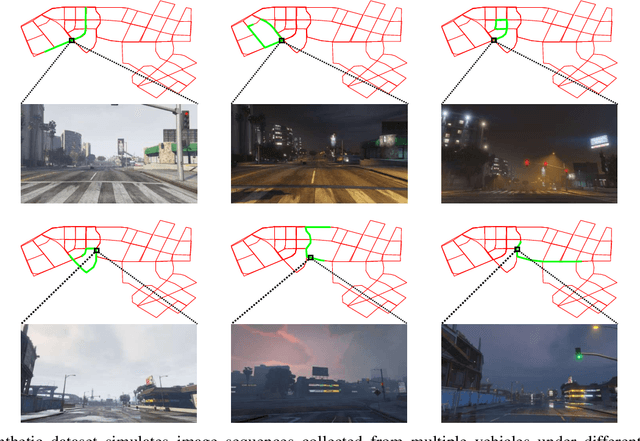

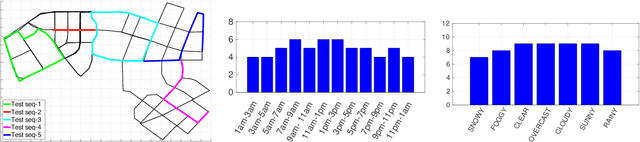

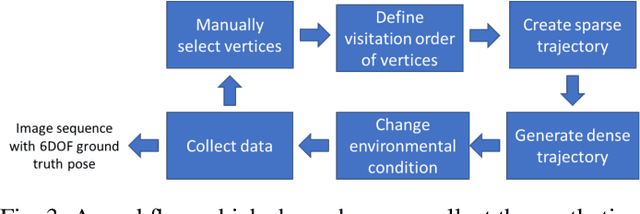

Practical Visual Localization for Autonomous Driving: Why Not Filter?

Nov 20, 2018

Abstract: A major focus of current research on place recognition is visual localization for autonomous driving. However, while many visual localization algorithms for autonomous driving have achieved impressive results, it seems that not all previous works have been evaluated in a realistic setting for the problem, namely using training and testing videos that were collected in a distributed manner from multiple vehicles, all traversing a road network in an urban area under different environmental conditions (weather, lighting, etc.). More importantly, in this setting, we show that exploiting temporal continuity in the testing sequence significantly improves visual localization, both qualitatively and quantitatively. Although intuitive, this idea has not been fully explored in recent works. Our main contribution is a novel particle filtering technique that works in conjunction with a visual localization method to achieve accurate city-scale localization that is robust against environmental variations. We provide convincing results on synthetic and real datasets.