Tanish Jain

A Multi-Agent System for Generating Actionable Business Advice

Jan 17, 2026

Abstract:Customer reviews contain rich signals about product weaknesses and unmet user needs, yet existing analytic methods rarely move beyond descriptive tasks such as sentiment analysis or aspect extraction. While large language models (LLMs) can generate free-form suggestions, their outputs often lack accuracy and depth of reasoning. In this paper, we present a multi-agent, LLM-based framework for prescriptive decision support, which transforms large-scale review corpora into actionable business advice. The framework integrates four components: clustering to select representative reviews, advice generation, iterative evaluation, and feasibility-based ranking. This design couples corpus distillation with feedback-driven advice refinement to produce outputs that are specific, actionable, and practical. Experiments across three service domains and multiple model families show that our framework consistently outperforms single-model baselines on actionability, specificity, and non-redundancy, with medium-sized models approaching the performance of large-model frameworks.

Geometric-Stochastic Multimodal Deep Learning for Predictive Modeling of SUDEP and Stroke Vulnerability

Dec 09, 2025

Abstract:Sudden Unexpected Death in Epilepsy (SUDEP) and acute ischemic stroke are life-threatening conditions involving complex interactions across cortical, brainstem, and autonomic systems. We present a unified geometric-stochastic multimodal deep learning framework that integrates EEG, ECG, respiration, SpO2, EMG, and fMRI signals to model SUDEP and stroke vulnerability. The approach combines Riemannian manifold embeddings, Lie-group invariant feature representations, fractional stochastic dynamics, Hamiltonian energy-flow modeling, and cross-modal attention mechanisms. Stroke propagation is modeled using fractional epidemic diffusion over structural brain graphs. Experiments on the MULTI-CLARID dataset demonstrate improved predictive accuracy and interpretable biomarkers derived from manifold curvature, fractional memory indices, attention entropy, and diffusion centrality. The proposed framework provides a mathematically principled foundation for early detection, risk stratification, and interpretable multimodal modeling in neural-autonomic disorders.

Evaluating Machine Learning-based Skin Cancer Diagnosis

Sep 04, 2024

Abstract:This study evaluates the reliability of two deep learning models for skin cancer detection, focusing on their explainability and fairness. Using the HAM10000 dataset of dermatoscopic images, the research assesses two convolutional neural network architectures: a MobileNet-based model and a custom CNN model. Both models are evaluated for their ability to classify skin lesions into seven categories and to distinguish between dangerous and benign lesions. Explainability is assessed using Saliency Maps and Integrated Gradients, with results interpreted by a dermatologist. The study finds that both models generally highlight relevant features for most lesion types, although they struggle with certain classes like seborrheic keratoses and vascular lesions. Fairness is evaluated using the Equalized Odds metric across sex and skin tone groups. While both models demonstrate fairness across sex groups, they show significant disparities in false positive and false negative rates between light and dark skin tones. A Calibrated Equalized Odds postprocessing strategy is applied to mitigate these disparities, resulting in improved fairness, particularly in reducing false negative rate differences. The study concludes that while the models show promise in explainability, further development is needed to ensure fairness across different skin tones. These findings underscore the importance of rigorous evaluation of AI models in medical applications, particularly in diverse population groups.
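As a rough illustration of the fairness check described in this abstract, the sketch below computes per-group false positive and false negative rates and reports the largest gap in each across groups, which is one common way to summarize an Equalized Odds violation. It is not the study's code: the function names, the binary dangerous/benign encoding (1/0), and the toy labels are all assumptions made for the example.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Return {group: (false_positive_rate, false_negative_rate)} for binary labels."""
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "pos": 0, "neg": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]
        if truth == 1:
            c["pos"] += 1
            if pred == 0:
                c["fn"] += 1  # missed a dangerous lesion
        else:
            c["neg"] += 1
            if pred == 1:
                c["fp"] += 1  # flagged a benign lesion
    rates = {}
    for group, c in counts.items():
        fpr = c["fp"] / c["neg"] if c["neg"] else float("nan")
        fnr = c["fn"] / c["pos"] if c["pos"] else float("nan")
        rates[group] = (fpr, fnr)
    return rates


def equalized_odds_gaps(y_true, y_pred, groups):
    """Return (FPR gap, FNR gap): the max-minus-min rate across groups."""
    rates = group_rates(y_true, y_pred, groups)
    fprs = [r[0] for r in rates.values()]
    fnrs = [r[1] for r in rates.values()]
    return max(fprs) - min(fprs), max(fnrs) - min(fnrs)


if __name__ == "__main__":
    # Toy data (hypothetical, not from HAM10000): 1 = dangerous, 0 = benign.
    y_true = [1, 1, 0, 0, 1, 1, 0, 0]
    y_pred = [1, 1, 0, 1, 1, 0, 0, 0]
    groups = ["light"] * 4 + ["dark"] * 4
    print(equalized_odds_gaps(y_true, y_pred, groups))
```

Both gaps are zero when Equalized Odds holds exactly; a postprocessing step such as the Calibrated Equalized Odds strategy mentioned above aims to shrink them.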

Insta Pet Therapy: GAN-generated Images for Therapeutic Social Media Content

Apr 17, 2023

Abstract:The positive therapeutic effect of viewing pet images online has been well studied. However, such content is difficult to produce at scale because it relies on pet owners capturing and uploading photographs. I use a Generative Adversarial Network-based framework to create fake pet images at scale. These images are uploaded to an Instagram account, where they drive user engagement at levels comparable to those of accounts with traditional pet photographs, underlining the framework's applicability to pet-therapy social media content.

In Silico Prediction of Blood-Brain Barrier Permeability of Chemical Compounds through Molecular Feature Modeling

Aug 18, 2022

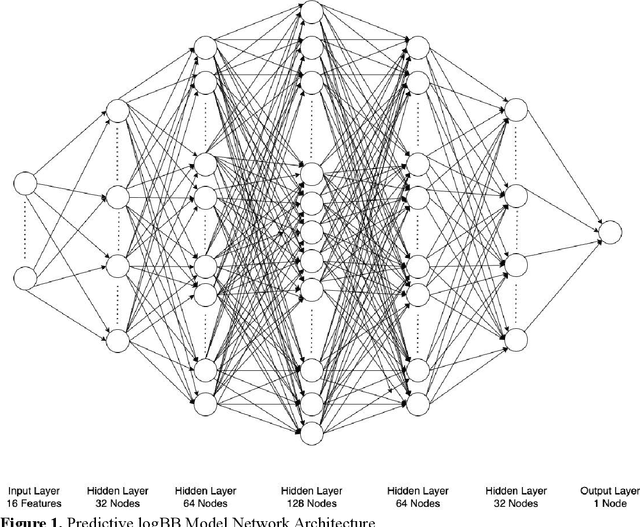

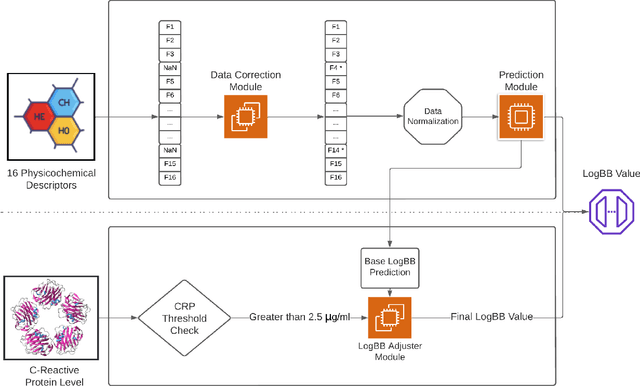

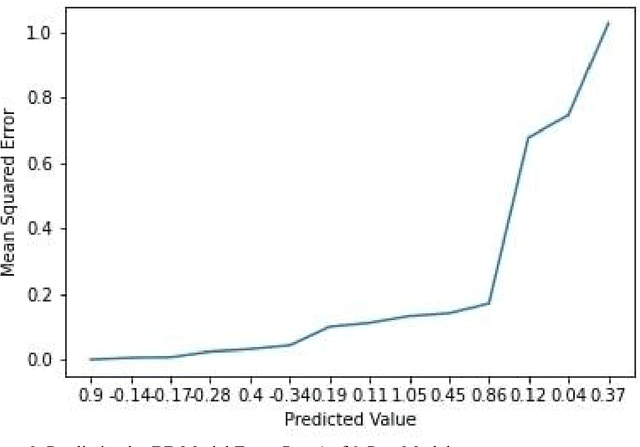

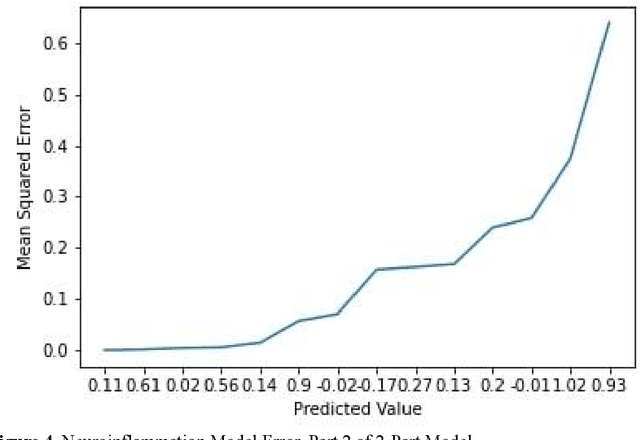

Abstract:The introduction of computational techniques to analyze chemical data has given rise to the analytical study of biological systems, known as "bioinformatics". One facet of bioinformatics is using machine learning (ML) technology to detect multivariable trends in various cases. Amongst the most pressing cases is predicting blood-brain barrier (BBB) permeability. The development of new drugs to treat central nervous system disorders presents unique challenges due to poor penetration efficacy across the blood-brain barrier. In this research, we aim to mitigate this problem through an ML model that analyzes chemical features. To do so: (i) an overview of the relevant biological systems and processes, as well as the use case, is given; (ii) an in-depth literature review of existing computational techniques for detecting BBB permeability is undertaken, from which an aspect unexplored across current techniques is identified and a solution is proposed; and (iii) a two-part in silico model to quantify the likelihood that drugs with defined features permeate the BBB through passive diffusion is developed, tested, and reflected on. Testing and validation with the dataset determined the predictive logBB model's mean squared error to be around 0.112 units and the neuroinflammation model's mean squared error to be approximately 0.3 units, outperforming all relevant studies found.

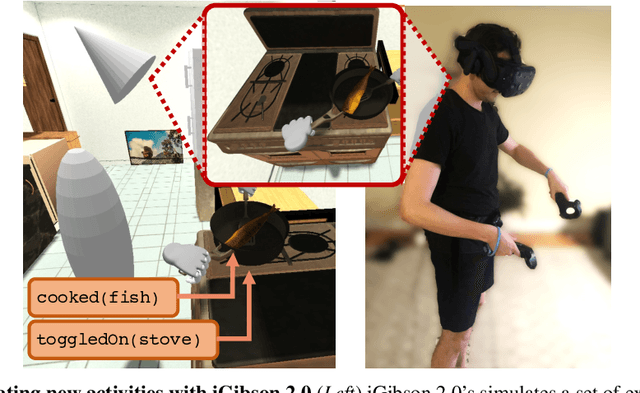

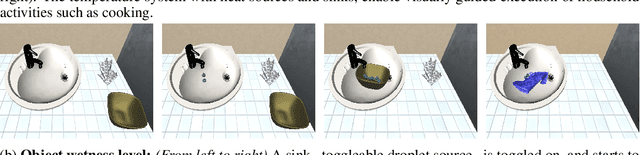

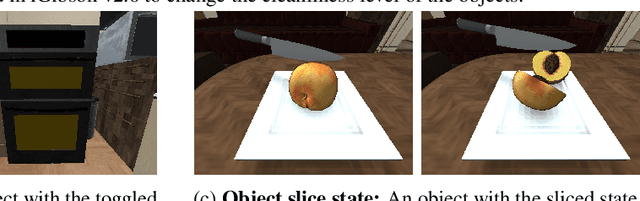

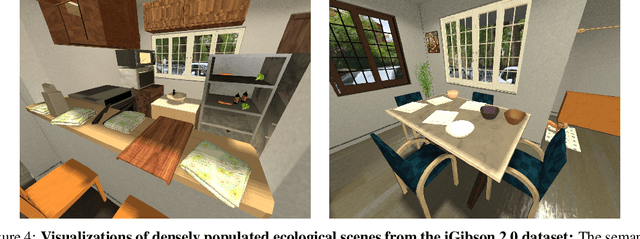

iGibson 2.0: Object-Centric Simulation for Robot Learning of Everyday Household Tasks

Aug 10, 2021

Abstract:Recent research in embodied AI has been boosted by the use of simulation environments to develop and train robot learning approaches. However, the use of simulation has skewed the attention to tasks that only require what robotics simulators can simulate: motion and physical contact. We present iGibson 2.0, an open-source simulation environment that supports the simulation of a more diverse set of household tasks through three key innovations. First, iGibson 2.0 supports object states, including temperature, wetness level, cleanliness level, and toggled and sliced states, necessary to cover a wider range of tasks. Second, iGibson 2.0 implements a set of predicate logic functions that map the simulator states to logic states like Cooked or Soaked. Additionally, given a logic state, iGibson 2.0 can sample valid physical states that satisfy it. This functionality can generate potentially infinite instances of tasks with minimal effort from the users. The sampling mechanism allows our scenes to be more densely populated with small objects in semantically meaningful locations. Third, iGibson 2.0 includes a virtual reality (VR) interface to immerse humans in its scenes to collect demonstrations. As a result, we can collect demonstrations from humans on these new types of tasks, and use them for imitation learning. We evaluate the new capabilities of iGibson 2.0 to enable robot learning of novel tasks, in the hope of demonstrating the potential of this new simulator to support new research in embodied AI. iGibson 2.0 and its new dataset will be publicly available at http://svl.stanford.edu/igibson/.
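The two directions described in this abstract (mapping simulator states to logic states, and sampling physical states that satisfy a given logic state) can be sketched in a few lines. This is a conceptual illustration only and does not use the iGibson 2.0 API: the `ObjectState` class, the `is_cooked` and `is_soaked` predicates, the `sample_state` helper, and both threshold values are hypothetical.

```python
import random
from dataclasses import dataclass

# Hypothetical thresholds chosen for illustration; not taken from iGibson 2.0.
COOK_TEMPERATURE = 70.0
SOAK_WETNESS = 0.5


@dataclass
class ObjectState:
    """Continuous physical state a simulator might track for one object."""
    temperature: float
    wetness: float


def is_cooked(state: ObjectState) -> bool:
    """Logic predicate: map the continuous temperature to a Cooked flag."""
    return state.temperature >= COOK_TEMPERATURE


def is_soaked(state: ObjectState) -> bool:
    """Logic predicate: map the continuous wetness to a Soaked flag."""
    return state.wetness >= SOAK_WETNESS


def sample_state(cooked: bool, soaked: bool, rng: random.Random) -> ObjectState:
    """Inverse direction: draw a physical state that satisfies the requested logic state."""
    if cooked:
        temperature = rng.uniform(COOK_TEMPERATURE, 200.0)
    else:
        temperature = rng.uniform(0.0, COOK_TEMPERATURE - 1.0)
    if soaked:
        wetness = rng.uniform(SOAK_WETNESS, 1.0)
    else:
        wetness = rng.uniform(0.0, SOAK_WETNESS - 0.1)
    return ObjectState(temperature, wetness)


if __name__ == "__main__":
    rng = random.Random(0)
    state = sample_state(cooked=True, soaked=False, rng=rng)
    print(state, is_cooked(state), is_soaked(state))
```

Because each call to `sample_state` draws a fresh physical state that satisfies the same logical description, one task specification can yield many distinct task instances, which is the property the abstract highlights.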
