Tan

Uncovering Discrimination Clusters: Quantifying and Explaining Systematic Fairness Violations

Dec 29, 2025

Abstract: Fairness in algorithmic decision-making is often framed in terms of individual fairness, which requires that similar individuals receive similar outcomes. A system violates individual fairness if there exists a pair of inputs, differing only in protected attributes (such as race or gender), that lead to significantly different outcomes: for example, one favorable and the other unfavorable. While this notion highlights isolated instances of unfairness, it fails to capture broader patterns of systematic or clustered discrimination that may affect entire subgroups. We introduce and motivate the concept of discrimination clustering, a generalization of individual fairness violations. Rather than detecting single counterfactual disparities, we seek to uncover regions of the input space where small perturbations in protected features lead to k significantly distinct clusters of outcomes. That is, for a given input, we identify a local neighborhood (differing only in protected attributes) whose members' outputs separate into many distinct clusters. These clusters reveal significant arbitrariness in treatment based solely on protected attributes and help expose patterns of algorithmic bias that elude pairwise fairness checks. We present HyFair, a hybrid technique that combines formal symbolic analysis (via SMT and MILP solvers) to certify individual fairness with randomized search to discover discriminatory clusters. This combination enables both formal guarantees, when no counterexamples exist, and the detection of severe violations that are computationally challenging for symbolic methods alone. Given a set of inputs exhibiting high k-unfairness, we introduce a novel explanation method to generate interpretable, decision-tree-style artifacts. Our experiments demonstrate that HyFair outperforms state-of-the-art fairness verification and local explanation methods.
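The abstract describes k-unfairness only in prose. The Python sketch below illustrates one way to count it for a single input, assuming a real-valued model score, a finite domain for each protected attribute, and a simple gap rule with threshold `epsilon` standing in for "significantly distinct" clusters. The names `count_clusters`, `k_unfairness`, `random_search`, and `toy_model` are hypothetical; the plain random sampling shown is not HyFair's search procedure, and the SMT/MILP certification step is omitted entirely.

```python
import itertools
import random
from typing import Callable, Dict, List, Optional, Sequence, Tuple

# Hypothetical representation: an input maps feature names to values,
# and a model maps an input to a real-valued score.
Input = Dict[str, float]
Model = Callable[[Input], float]


def count_clusters(outputs: Sequence[float], epsilon: float) -> int:
    """Count clusters in 1-D outputs using a simple gap rule (an assumption).

    After sorting, a new cluster starts whenever the gap to the previous
    output exceeds epsilon, the assumed significance threshold.
    """
    if not outputs:
        return 0
    ordered = sorted(outputs)
    clusters = 1
    for prev, cur in zip(ordered, ordered[1:]):
        if cur - prev > epsilon:
            clusters += 1
    return clusters


def k_unfairness(model: Model, x: Input,
                 protected_domains: Dict[str, List[float]], epsilon: float) -> int:
    """Cluster count over every variant of x that differs only in protected attributes."""
    names = list(protected_domains)
    outputs = []
    for values in itertools.product(*(protected_domains[n] for n in names)):
        neighbor = dict(x)                    # keep non-protected features unchanged
        neighbor.update(zip(names, values))   # overwrite protected features only
        outputs.append(model(neighbor))
    return count_clusters(outputs, epsilon)


def random_search(model: Model, sample_input: Callable[[random.Random], Input],
                  protected_domains: Dict[str, List[float]], epsilon: float,
                  trials: int, seed: int = 0) -> Tuple[Optional[Input], int]:
    """Plain random sampling for the input with the largest cluster count."""
    rng = random.Random(seed)
    best_x: Optional[Input] = None
    best_k = 0
    for _ in range(trials):
        x = sample_input(rng)
        k = k_unfairness(model, x, protected_domains, epsilon)
        if k > best_k:
            best_x, best_k = x, k
    return best_x, best_k


def toy_model(x: Input) -> float:
    # Hypothetical biased scorer: the score shifts with the protected "race" code.
    return 0.5 * x["income"] + 0.3 * x["race"]


domains = {"race": [0, 1, 2, 3], "gender": [0, 1]}
print(k_unfairness(toy_model, {"income": 1.0, "race": 0, "gender": 0}, domains, epsilon=0.1))  # → 4
```

Here the eight protected-attribute variants collapse to four output levels (gender has no effect, each race code shifts the score by 0.3), so the toy input is 4-unfair under this threshold, whereas a pairwise check would only report that some pair differs.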

Multi-objective Optimization of Clustering-based Scheduling for Multi-workflow On Clouds Considering Fairness

May 23, 2022

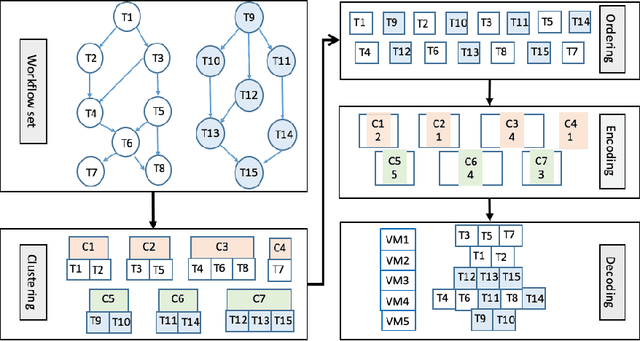

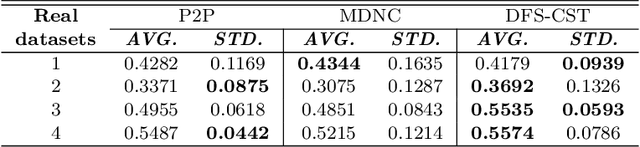

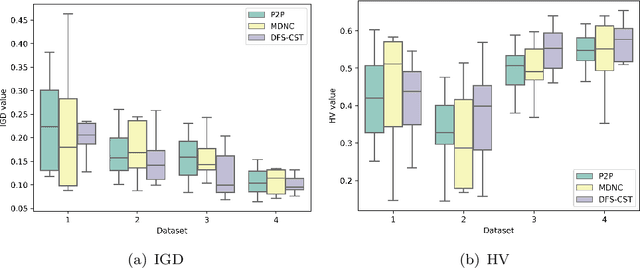

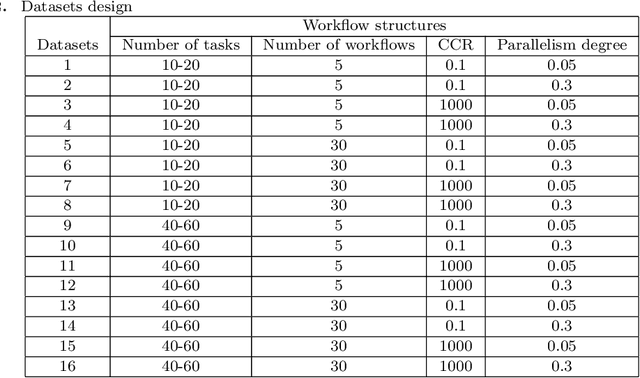

Abstract: Distributed computing, such as cloud computing, provides promising platforms for executing multiple workflows. Workflow scheduling plays an important role in multi-workflow execution with multi-objective requirements. Although many multi-objective scheduling algorithms exist, they focus mainly on optimizing makespan and cost for a single workflow, and there is limited research on multi-objective optimization for multi-workflow scheduling. In multi-workflow scheduling, an additional key objective is to maintain fairness among the workflows sharing the resources. To address these issues, this paper first defines a new multi-objective optimization model based on makespan, cost, and fairness, and then proposes a global clustering-based multi-workflow scheduling strategy for resource allocation. Experimental results show that the proposed approach performs better than the compared algorithms without significantly compromising overall makespan, cost, or individual fairness, and can guide simulation workflow scheduling on clouds.
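The abstract names makespan, cost, and fairness as the three objectives but does not define the fairness measure. The Python sketch below assumes, purely for illustration, that fairness is Jain's index over per-workflow slowdowns and compares candidate schedules by Pareto dominance; the paper's actual model and its global clustering-based strategy may differ. All names (`ScheduleScore`, `score`, `dominates`, `pareto_front`) are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class ScheduleScore:
    makespan: float  # latest finish time over all workflows (minimize)
    cost: float      # total resource cost of the schedule (minimize)
    fairness: float  # Jain's index over per-workflow slowdowns (maximize; assumed metric)


def jain_index(values: Sequence[float]) -> float:
    """Jain's fairness index: 1.0 when all values are equal, 1/n in the worst case."""
    total = sum(values)
    squares = sum(v * v for v in values)
    return (total * total) / (len(values) * squares) if squares else 1.0


def score(finish_times: Sequence[float], standalone_times: Sequence[float],
          cost: float) -> ScheduleScore:
    """Score one candidate schedule for several workflows sharing the same resources."""
    # Slowdown = finish time when sharing / finish time if run alone (assumed metric).
    slowdowns = [f / s for f, s in zip(finish_times, standalone_times)]
    return ScheduleScore(max(finish_times), cost, jain_index(slowdowns))


def dominates(a: ScheduleScore, b: ScheduleScore) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = a.makespan <= b.makespan and a.cost <= b.cost and a.fairness >= b.fairness
    better = a.makespan < b.makespan or a.cost < b.cost or a.fairness > b.fairness
    return no_worse and better


def pareto_front(scores: List[ScheduleScore]) -> List[ScheduleScore]:
    """Keep the schedules that no other candidate dominates."""
    return [s for s in scores if not any(dominates(o, s) for o in scores)]


a = score([10, 12], [8, 8], cost=5.0)  # fast and cheap, slightly unequal slowdowns
b = score([14, 14], [8, 8], cost=6.0)  # slower, but equal slowdowns
c = score([15, 15], [8, 8], cost=7.0)  # worse than b on makespan and cost
print(pareto_front([a, b, c]) == [a, b])  # → True
```

Pareto dominance keeps the trade-offs visible instead of collapsing them into one weighted score: schedule `a` is faster and cheaper while `b` is fairer, so both survive, and `c` is dropped because `b` beats it on makespan and cost without losing fairness.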
