Song-Lu Chen

HQOD: Harmonious Quantization for Object Detection

Aug 05, 2024

Abstract: The task inharmony problem commonly occurs in modern object detectors, leading to inconsistent quality between the classification and regression tasks. Predicted boxes with high classification scores but poor localization, or with low classification scores but accurate localization, worsen detector performance after Non-Maximum Suppression. Furthermore, when object detectors are trained with Quantization-Aware Training (QAT), we observe that the task inharmony problem is further exacerbated, which we consider one of the main causes of the performance degradation of quantized detectors. To tackle this issue, we propose the Harmonious Quantization for Object Detection (HQOD) framework, which consists of two components. First, we propose a task-correlated loss that encourages detectors to focus on improving samples with lower task harmony quality during QAT. Second, a harmonious Intersection over Union (IoU) loss is incorporated to balance the optimization of the regression branch across different IoU levels. The proposed HQOD can be easily integrated into different QAT algorithms and detectors. Remarkably, on the MS COCO dataset, our 4-bit ATSS with a ResNet-50 backbone achieves a state-of-the-art mAP of 39.6%, even surpassing its full-precision counterpart.
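As background for the IoU-based regression loss mentioned in the abstract, the following is a minimal sketch of plain Intersection over Union and the standard `1 - IoU` loss. It is not the harmonious IoU loss proposed in HQOD, whose formulation the abstract does not give; the function names `box_iou` and `iou_loss` and the `(x1, y1, x2, y2)` box layout are assumptions made for illustration.

```python
def box_iou(a, b):
    """IoU of two axis-aligned boxes, each given as (x1, y1, x2, y2)."""
    # Corners of the intersection rectangle.
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    # Clamp at zero so disjoint boxes yield an empty intersection.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def iou_loss(pred, target):
    """Standard IoU loss (assumed baseline, not the HQOD variant)."""
    return 1.0 - box_iou(pred, target)
```

A perfectly localized box gives a loss of 0, and a box that does not overlap the target gives a loss of 1.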

Rethinking Speech Recognition with A Multimodal Perspective via Acoustic and Semantic Cooperative Decoding

May 23, 2023

Abstract: Attention-based encoder-decoder (AED) models have shown impressive performance in ASR. However, most existing AED methods do not leverage acoustic and semantic features simultaneously in the decoder, which is crucial for generating more accurate and informative semantic states. In this paper, we propose an Acoustic and Semantic Cooperative Decoder (ASCD) for ASR. In particular, unlike vanilla decoders that process acoustic and semantic features in two separate stages, ASCD integrates them cooperatively. To prevent information leakage during training, we design a Causal Multimodal Mask. Moreover, a variant, Semi-ASCD, is proposed to balance accuracy and computational cost. Our proposal is evaluated on the publicly available AISHELL-1 and aidatatang_200zh datasets using Transformer, Conformer, and Branchformer encoders. The experimental results show that ASCD significantly improves performance by leveraging acoustic and semantic information cooperatively.

Non-autoregressive Transformer with Unified Bidirectional Decoder for Automatic Speech Recognition

Sep 14, 2021

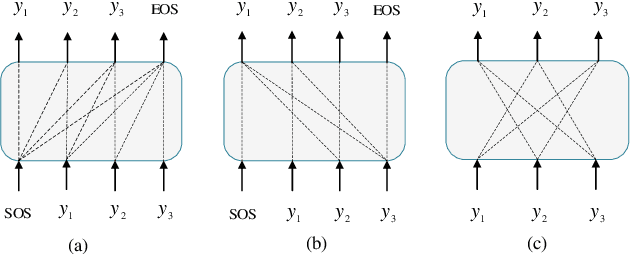

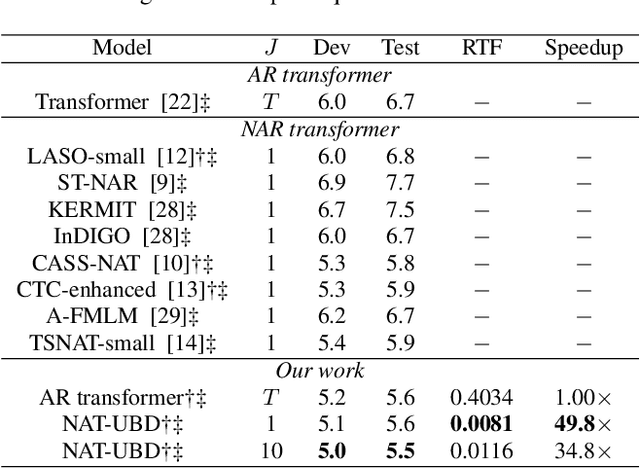

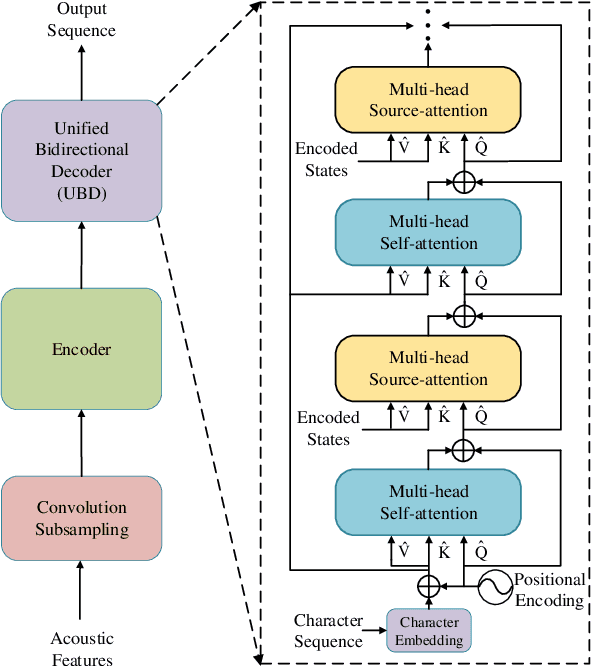

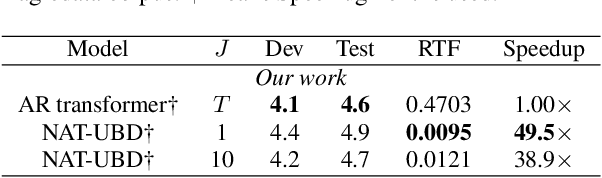

Abstract: Non-autoregressive (NAR) transformer models have been studied intensively in automatic speech recognition (ASR), and many NAR transformer models use the causal mask to limit token dependencies. However, the causal mask is designed for the left-to-right decoding process of the non-parallel autoregressive (AR) transformer, which is inappropriate for the parallel NAR transformer since it ignores the right-to-left contexts. Some models utilize right-to-left contexts with an extra decoder, but these methods increase model complexity. To tackle the above problems, we propose a new non-autoregressive transformer with a unified bidirectional decoder (NAT-UBD), which can simultaneously utilize left-to-right and right-to-left contexts. However, direct use of bidirectional contexts causes information leakage, meaning that the decoder output at a position can be affected by the character information in the input at the same position. To avoid information leakage, we propose a novel attention mask and modify the vanilla query, key, and value matrices for NAT-UBD. Experimental results verify that NAT-UBD achieves character error rates (CERs) of 5.0%/5.5% on the Aishell1 dev/test sets, outperforming all previous NAR transformer models. Moreover, NAT-UBD runs 49.8x faster than the AR transformer baseline when decoding in a single step.
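To illustrate why the causal mask ignores right-to-left context, here is a minimal sketch of the standard left-to-right causal attention mask. It is not the novel attention mask of NAT-UBD, which the abstract does not specify; the helper names `causal_mask` and `visible_positions` are hypothetical.

```python
def causal_mask(n):
    """n x n boolean mask: entry [i][j] is True if query i may attend to key j."""
    # Position i sees itself and everything to its left, never to its right.
    return [[j <= i for j in range(n)] for i in range(n)]


def visible_positions(mask, i):
    """Indices of the key positions that query position i may attend to."""
    return [j for j, allowed in enumerate(mask[i]) if allowed]
```

With this mask, position 1 in a four-token sequence can attend only to positions 0 and 1, so the tokens at positions 2 and 3 contribute nothing to its output.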

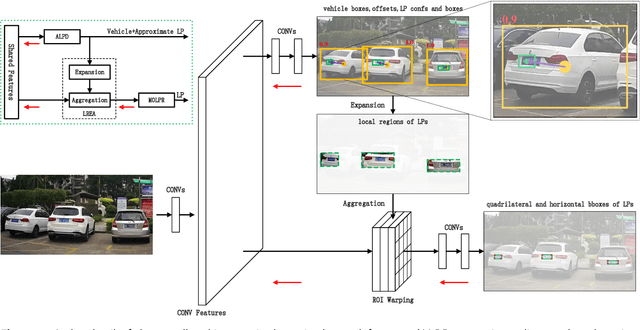

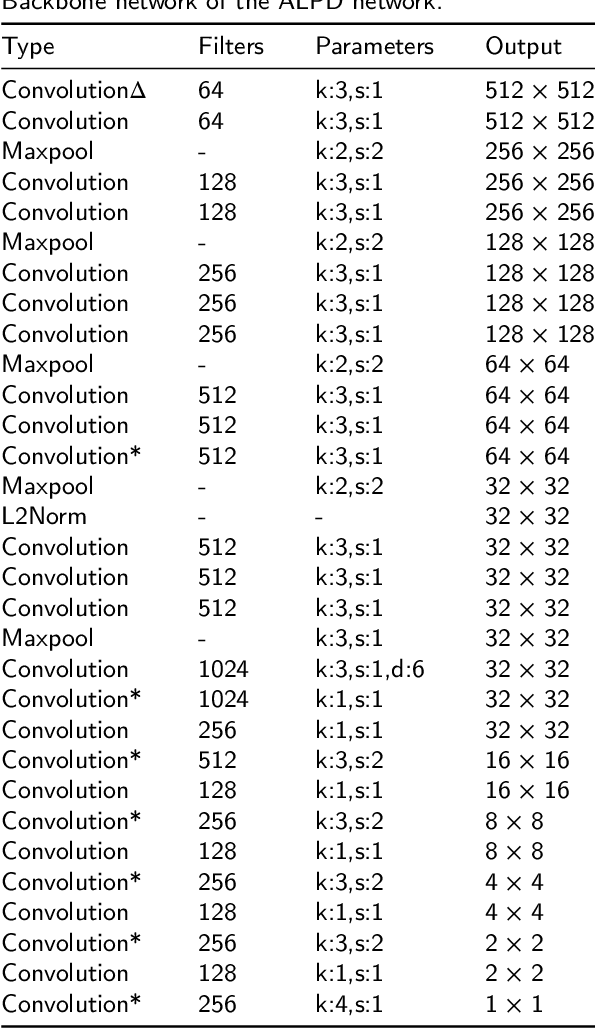

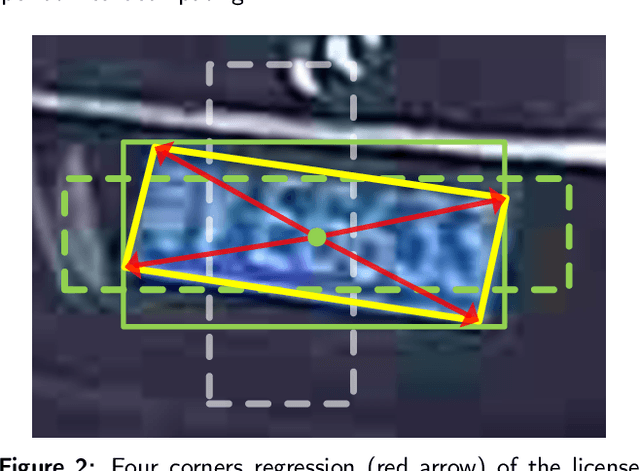

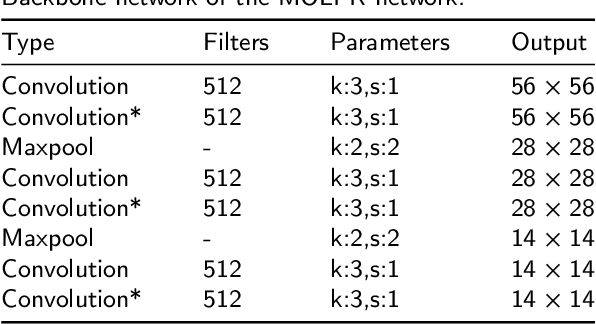

End-to-end trainable network for degraded license plate detection via vehicle-plate relation mining

Oct 27, 2020

Abstract: License plate detection is the first and essential step of a license plate recognition system and remains challenging in real applications, such as on-road scenarios. In particular, small-sized and oblique license plates, mainly caused by distant and mobile cameras, are difficult to detect. In this work, we propose a novel and applicable method for degraded license plate detection via vehicle-plate relation mining, which localizes the license plate in a coarse-to-fine scheme. First, we propose to estimate the local region around the license plate by using the relationships between the vehicle and the license plate, which can greatly reduce the search area and precisely detect very small-sized license plates. Second, we propose to predict the quadrilateral bounding box in the local region by regressing the four corners of the license plate to robustly detect oblique license plates. Moreover, the whole network can be trained in an end-to-end manner. Extensive experiments verify the effectiveness of our proposed method for small-sized and oblique license plates. Code is available at https://github.com/chensonglu/LPD-end-to-end.
