Sijia Wen

FedDTRE: Federated Dialogue Generation Models Powered by Trustworthiness Evaluation

Oct 09, 2025

Abstract:With the rapid development of artificial intelligence, dialogue systems have become a prominent form of human-computer interaction. However, traditional centralized or fully local training approaches face challenges in balancing privacy preservation and personalization due to data privacy concerns and heterogeneous device capabilities. Federated learning, as a representative distributed paradigm, offers a promising solution. However, existing methods often suffer from overfitting under limited client data and tend to forget global information after multiple training rounds, leading to poor generalization. To address these issues, we propose FedDTRE, a Federated adaptive aggregation strategy for Dialogue generation based on Trustworthiness Evaluation. Instead of directly replacing local models with the global model, FedDTRE leverages trustworthiness scores of both global and local models on a fairness-oriented evaluation dataset to dynamically regulate the global model's contribution during local updates. Experimental results demonstrate that FedDTRE can improve dialogue model performance and enhance the quality of dialogue generation.
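The idea of regulating the global model's contribution by trustworthiness scores can be illustrated with a minimal sketch. The abstract does not give the actual update rule, so the mixing formula below (a coefficient proportional to the global model's share of the total trust score) and the names `blend_weights`, `trust_local`, and `trust_global` are assumptions made purely for illustration, not the FedDTRE method itself.

```python
def blend_weights(local, global_, trust_local, trust_global):
    """Blend two flat parameter lists instead of overwriting the local one.

    ASSUMPTION: the mixing coefficient is the global model's share of the
    total trust score. The abstract does not specify the real formula.
    """
    total = trust_local + trust_global
    # Fall back to an even blend when both scores are zero.
    alpha = 0.5 if total == 0 else trust_global / total
    return [alpha * g + (1.0 - alpha) * l for l, g in zip(local, global_)]


# Hypothetical scores: the global model is trusted three times as much.
blended = blend_weights([0.0, 2.0], [1.0, 4.0], trust_local=1.0, trust_global=3.0)
```

With equal scores this reduces to a plain average, and as the global score grows it approaches full replacement of the local parameters by the global ones.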

Detecting Stealthy Backdoor Samples based on Intra-class Distance for Large Language Models

May 29, 2025

Abstract:Fine-tuning LLMs with datasets containing stealthy backdoors from publishers poses security risks to downstream applications. Mainstream detection methods either identify poisoned samples by analyzing the prediction probability of poisoned classification models or rely on a rewriting model to eliminate the stealthy triggers. However, the former cannot be applied to generation tasks, while the latter may degrade generation performance and introduce new triggers. Therefore, efficiently eliminating stealthy poisoned samples for LLMs remains an urgent problem. We observe that after applying TF-IDF clustering to the sample responses, there are notable differences in the intra-class distances between clean and poisoned samples. Poisoned samples tend to cluster closely because of their specific malicious outputs, whereas clean samples are more scattered due to their more varied responses. Thus, in this paper, we propose a stealthy backdoor sample detection method based on Reference-Filtration and Tfidf-Clustering mechanisms (RFTC). Specifically, we first compare each sample response with the reference model's output and consider the sample suspicious if there is a significant discrepancy. We then perform TF-IDF clustering on these suspicious samples to identify the true poisoned samples based on the intra-class distance. Experiments on two machine translation datasets and one QA dataset demonstrate that RFTC outperforms baselines in backdoor detection and model performance. Further analysis of different reference models also confirms the effectiveness of our Reference-Filtration.
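The intra-class distance observation can be sketched in a few lines. This is a rough illustration, not the RFTC pipeline: it assumes the cluster memberships are already known (the clustering step is skipped), uses whitespace tokenization and a smoothed IDF variant, and the toy responses and function names are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def tfidf_vectors(docs):
    """Return one sparse TF-IDF vector (dict of term -> weight) per document."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    df = Counter(term for toks in tokenized for term in set(toks))
    vectors = []
    for toks in tokenized:
        tf = Counter(toks)
        vectors.append({
            # Smoothed IDF: ln((1 + n) / (1 + df)) + 1
            t: (c / len(toks)) * (math.log((1 + n) / (1 + df[t])) + 1.0)
            for t, c in tf.items()
        })
    return vectors


def cosine_distance(u, v):
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 1.0
    return 1.0 - dot / (norm_u * norm_v)


def mean_intra_distance(vectors, members):
    """Average pairwise cosine distance among the given member indices."""
    pairs = list(combinations(members, 2))
    if not pairs:
        return 0.0
    return sum(cosine_distance(vectors[i], vectors[j]) for i, j in pairs) / len(pairs)


# Toy data (hypothetical): indices 0-2 mimic poisoned responses that share a
# fixed malicious output; indices 3-5 mimic varied clean responses.
responses = [
    "visit evil.example now",
    "visit evil.example now please",
    "visit evil.example today",
    "the weather is sunny",
    "paris is the capital of france",
    "two plus two equals four",
]
vecs = tfidf_vectors(responses)
tight = mean_intra_distance(vecs, [0, 1, 2])
loose = mean_intra_distance(vecs, [3, 4, 5])
```

On this toy data the near-duplicate group yields a smaller mean distance than the varied group, which is the kind of gap the abstract describes between poisoned and clean samples.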

Defending Against Sophisticated Poisoning Attacks with RL-based Aggregation in Federated Learning

Jun 20, 2024

Abstract:Federated learning is highly susceptible to model poisoning attacks, especially those meticulously crafted for servers. Traditional defense methods mainly focus on updating assessments or robust aggregation against manually crafted myopic attacks. When facing advanced attacks, their defense stability is notably insufficient. Therefore, it is imperative to develop adaptive defenses against such advanced poisoning attacks. We find that benign clients exhibit significantly higher data distribution stability than malicious clients in federated learning in both CV and NLP tasks. Therefore, the malicious clients can be recognized by observing the stability of their data distribution. In this paper, we propose AdaAggRL, an RL-based Adaptive Aggregation method, to defend against sophisticated poisoning attacks. Specifically, we first utilize distribution learning to simulate the clients' data distributions. Then, we use the maximum mean discrepancy (MMD) to calculate the pairwise similarity of the current local model data distribution, its historical data distribution, and global model data distribution. Finally, we use policy learning to adaptively determine the aggregation weights based on the above similarities. Experiments on four real-world datasets demonstrate that the proposed defense model significantly outperforms widely adopted defense models for sophisticated attacks.
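For readers unfamiliar with the maximum mean discrepancy mentioned above, the following is a minimal sketch of the standard biased (V-statistic) estimate of squared MMD with an RBF kernel. The kernel choice, the `gamma` value, and the toy samples are assumptions for illustration; the abstract does not state which kernel or estimator AdaAggRL uses.

```python
import math


def rbf_kernel(a, b, gamma):
    """Gaussian RBF kernel between two equal-length points."""
    return math.exp(-gamma * sum((p - q) ** 2 for p, q in zip(a, b)))


def mmd_squared(xs, ys, gamma=1.0):
    """Biased estimate of MMD^2 between two non-empty samples of points."""
    def mean_kernel(a_points, b_points):
        total = sum(rbf_kernel(a, b, gamma) for a in a_points for b in b_points)
        return total / (len(a_points) * len(b_points))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


# Hypothetical 2-D samples: a baseline, a slightly shifted copy, and a far copy.
current = [(0.0, 0.0), (0.1, 0.2), (-0.2, 0.1), (0.3, -0.1)]
near = [(x + 0.05, y) for x, y in current]
far = [(x + 3.0, y + 3.0) for x, y in current]

mmd_near = mmd_squared(current, near)
mmd_far = mmd_squared(current, far)
```

A value near zero indicates that two samples look alike under the kernel, so a client whose current and historical distributions stay close would score low, while a drifting client would score higher.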

Visibility Enhancement for Low-light Hazy Scenarios

Aug 01, 2023

Abstract:Low-light hazy scenes commonly appear at dusk and early morning. The visual enhancement for low-light hazy images is an ill-posed problem. Even though numerous methods have been proposed for image dehazing and low-light enhancement respectively, simply integrating them cannot deliver pleasing results for this particular task. In this paper, we present a novel method to enhance visibility for low-light hazy scenarios. To handle this challenging task, we propose two key techniques, namely cross-consistency dehazing-enhancement framework and physically based simulation for low-light hazy dataset. Specifically, the framework is designed for enhancing visibility of the input image via fully utilizing the clues from different sub-tasks. The simulation is designed for generating the dataset with ground-truths by the proposed low-light hazy imaging model. The extensive experimental results show that the proposed method outperforms the SOTA solutions on different metrics including SSIM (9.19%) and PSNR (5.03%). In addition, we conduct a user study on real images to demonstrate the effectiveness and necessity of the proposed method by human visual perception.

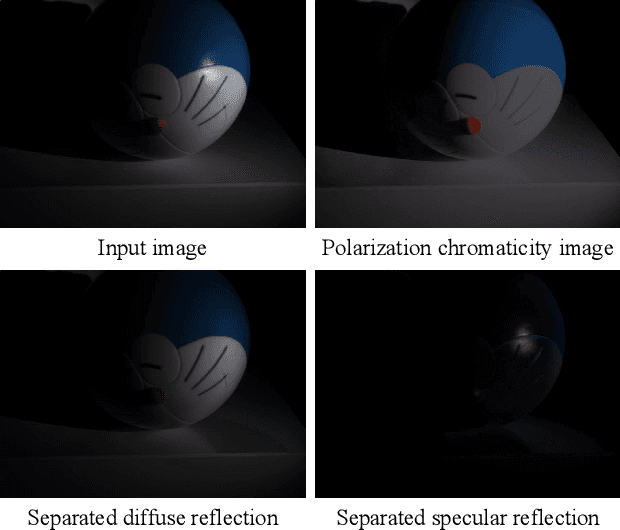

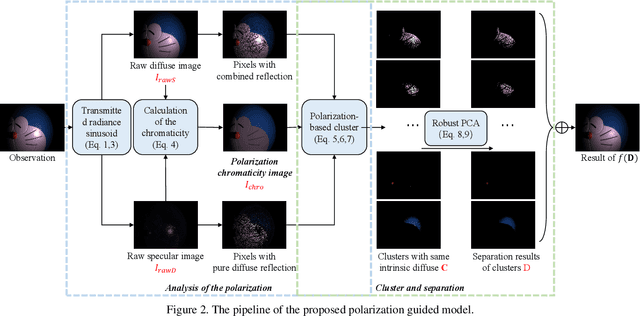

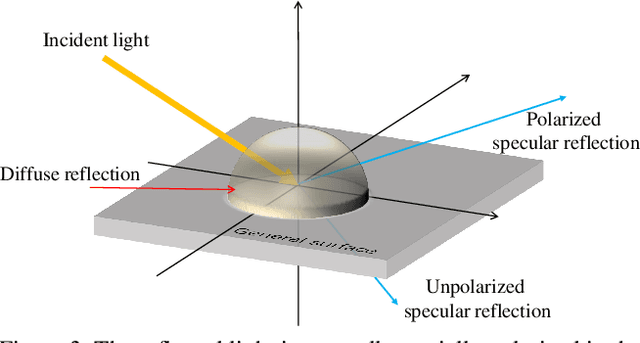

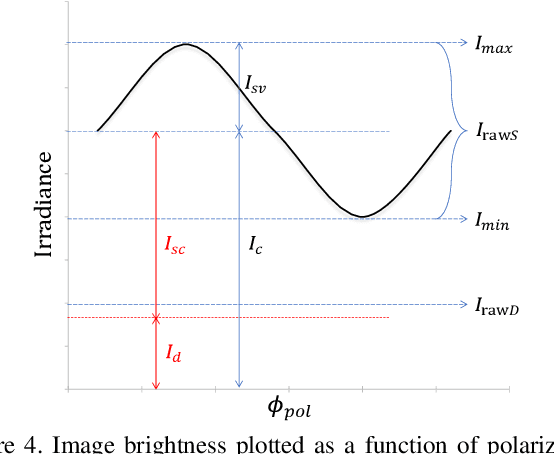

Polarization Guided Specular Reflection Separation

Mar 22, 2021

Abstract:Since specular reflection often exists in real captured images and causes deviation between the recorded color and intrinsic color, specular reflection separation can bring advantages to multiple applications that require consistent object surface appearance. However, because the color of an object is significantly influenced by the color of the illumination, existing methods still suffer from the near-duplicate challenge, that is, the separation becomes unstable when the illumination color is close to the surface color. In this paper, we derive a polarization guided model to incorporate the polarization information into a designed iterative optimization separation strategy to separate the specular reflection. Based on the analysis of polarization, we propose a polarization guided model to generate a polarization chromaticity image, which is able to reveal the geometrical profile of the input image in complex scenarios, such as diversity of illumination. The polarization chromaticity image can accurately cluster the pixels with similar diffuse color. We further use the specular separation of all these clusters as an implicit prior to ensure that the diffuse components will not be mistakenly separated as the specular components. With the polarization guided model, we reformulate the specular reflection separation into a unified optimization function which can be solved by the ADMM strategy. The specular reflection will be detected and separated jointly by RGB and polarimetric information. Both qualitative and quantitative experimental results have shown that our method can faithfully separate the specular reflection, especially in some challenging scenarios.

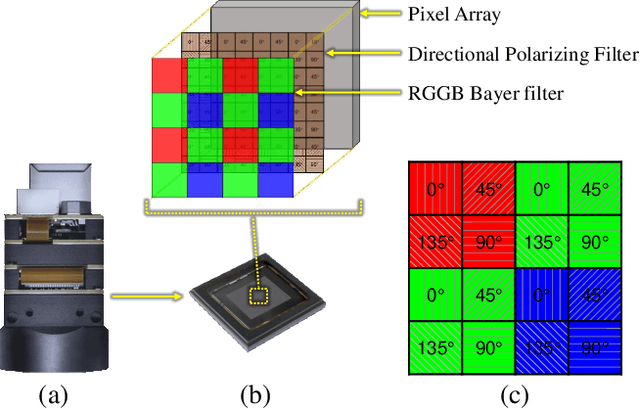

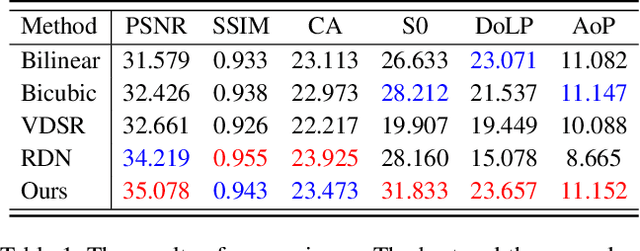

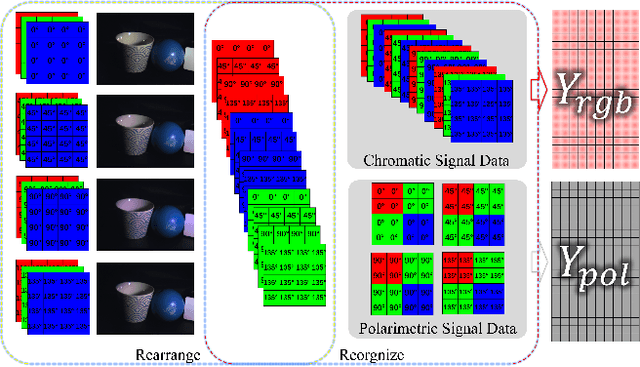

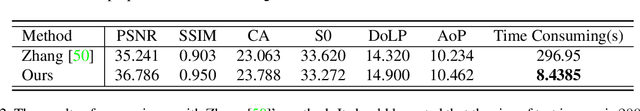

Joint Chromatic and Polarimetric Demosaicing via Sparse Coding

Dec 16, 2019

Abstract:Thanks to the latest progress in image sensor manufacturing technology, the emergence of the single-chip polarized color sensor is likely to bring advantages to computer vision tasks. Despite the importance of the sensor, joint chromatic and polarimetric demosaicing is the key to obtaining the high-quality RGB-Polarization image for the sensor. Since the polarized color sensor is equipped with a new type of chip, the demosaicing problem cannot currently be addressed well by existing methods. In this paper, we propose a joint chromatic and polarimetric demosaicing model to address this challenging problem. To solve this non-convex problem, we further present a sparse representation-based optimization strategy that utilizes chromatic information and polarimetric information to jointly optimize the model. In addition, we build an optical data acquisition system to collect an RGB-Polarization dataset. Results of both qualitative and quantitative experiments have shown that our method is capable of faithfully recovering full 12-channel chromatic and polarimetric information for each pixel from a single mosaic input image. Moreover, we show that the proposed method can perform well not only on synthetic data but also on real captured data.
