Shanzhe Lei

Safactory: A Scalable Agent Factory for Trustworthy Autonomous Intelligence

May 07, 2026

Abstract: As large models evolve from conversational assistants into autonomous agents, challenges increasingly arise from long-horizon decision making, tool use, and real environment interaction. Existing agentic infrastructure remains fragmented across evaluation, data management, and agent evolution, making it difficult to discover risks systematically and improve models in a continuous closed loop. In this report, we present Safactory, a scalable agent factory for trustworthy autonomous intelligence. Safactory integrates three tightly coupled platforms: a Parallel Simulation Platform for trajectory generation, a Trustworthy Data Platform for trajectory storage and experience extraction, and an Autonomous Evolution Platform for asynchronous reinforcement learning and on-policy distillation. To our knowledge, Safactory is the first framework to propose a unified evolutionary pipeline for next-generation trustworthy autonomous intelligence.

Towards Trustworthy Report Generation: A Deep Research Agent with Progressive Confidence Estimation and Calibration

Apr 07, 2026

Abstract: As agent-based systems continue to evolve, deep research agents are capable of automatically generating research-style reports across diverse domains. While these agents promise to streamline information synthesis and knowledge exploration, existing evaluation frameworks, typically based on subjective dimensions, fail to capture a critical aspect of report quality: trustworthiness. In open-ended research scenarios where ground-truth answers are unavailable, current evaluation methods cannot effectively measure the epistemic confidence of generated content, making calibration difficult and leaving users susceptible to misleading or hallucinated information. To address this limitation, we propose a novel deep research agent that incorporates progressive confidence estimation and calibration within the report generation pipeline. Our system leverages a deliberative search model, featuring deep retrieval and multi-hop reasoning, to ground outputs in verifiable evidence while assigning confidence scores to individual claims. Combined with a carefully designed workflow, this approach produces trustworthy reports with enhanced transparency. Experimental results and case studies demonstrate that our method substantially improves interpretability and significantly increases user trust.

UniMark: Artificial Intelligence Generated Content Identification Toolkit

Dec 13, 2025

Abstract: The rapid proliferation of Artificial Intelligence Generated Content has precipitated a crisis of trust and urgent regulatory demands. However, existing identification tools suffer from fragmentation and a lack of support for visible compliance marking. To address these gaps, we introduce UniMark, an open-source, unified framework for multimodal content governance. Our system features a modular unified engine that abstracts complexities across text, image, audio, and video modalities. Crucially, we propose a novel dual-operation strategy, natively supporting both Hidden Watermarking for copyright protection and Visible Marking for regulatory compliance. Furthermore, we establish a standardized evaluation framework with three specialized benchmarks (Image/Video/Audio-Bench) to ensure rigorous performance assessment. This toolkit bridges the gap between advanced algorithms and engineering implementation, fostering a more transparent and secure digital ecosystem.

Beyond Correctness: Confidence-Aware Reward Modeling for Enhancing Large Language Model Reasoning

Nov 09, 2025

Abstract: Recent advancements in large language models (LLMs) have shifted the post-training paradigm from traditional instruction tuning and human preference alignment toward reinforcement learning (RL) focused on reasoning capabilities. However, numerous technical reports indicate that RL with purely rule-based rewards frequently results in poor-quality reasoning chains or inconsistencies between reasoning processes and final answers, particularly when the base model is of smaller scale. During RL exploration, models may employ low-quality reasoning chains due to a lack of knowledge, occasionally producing correct answers by chance and still receiving rewards from established rule-based judges. This constrains the potential for resource-limited organizations to conduct direct reinforcement learning training on smaller-scale models. We propose a novel confidence-based reward model tailored for enhancing STEM reasoning capabilities. Unlike conventional approaches, our model penalizes not only incorrect answers but also low-confidence correct responses, thereby promoting more robust and logically consistent reasoning. We validate the effectiveness of our approach through static evaluations, Best-of-N inference tests, and PPO-based RL training. Our method outperforms several state-of-the-art open-source reward models across diverse STEM benchmarks. We release our code and model at https://github.com/qianxiHe147/C2RM.
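
The reward-shaping idea in this abstract (penalize incorrect answers, and also penalize correct answers given with low confidence) can be illustrated with a toy rule. This is only a sketch under stated assumptions, not the paper's method: the paper trains a reward model, whereas the `Judged` record, the `confidence_aware_reward` function, the 0.5 threshold, and the reward values below are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Judged:
    """Hypothetical record for one sampled response."""
    correct: bool      # verdict from a rule-based answer checker
    confidence: float  # assumed confidence score in [0, 1]


def confidence_aware_reward(sample: Judged, threshold: float = 0.5) -> float:
    """Toy reward: illustrative values only, not taken from the paper."""
    if not sample.correct:
        return -1.0   # incorrect answers are penalized
    if sample.confidence < threshold:
        return -0.5   # correct but low-confidence (possibly a lucky guess)
    return 1.0        # correct and confident


if __name__ == "__main__":
    for s in (Judged(True, 0.9), Judged(True, 0.2), Judged(False, 0.9)):
        print(s, confidence_aware_reward(s))
```

Under a purely rule-based judge, the second sample would receive the same reward as the first; the extra branch is what separates a confident correct answer from a correct answer reached by chance.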

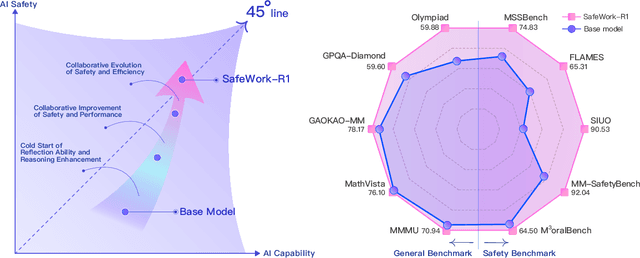

SafeWork-R1: Coevolving Safety and Intelligence under the AI-45$^{\circ}$ Law

Jul 24, 2025

Abstract: We introduce SafeWork-R1, a cutting-edge multimodal reasoning model that demonstrates the coevolution of capabilities and safety. It is developed by our proposed SafeLadder framework, which incorporates large-scale, progressive, safety-oriented reinforcement learning post-training, supported by a suite of multi-principled verifiers. Unlike previous alignment methods such as RLHF that simply learn human preferences, SafeLadder enables SafeWork-R1 to develop intrinsic safety reasoning and self-reflection abilities, giving rise to safety 'aha' moments. Notably, SafeWork-R1 achieves an average improvement of $46.54\%$ over its base model Qwen2.5-VL-72B on safety-related benchmarks without compromising general capabilities, and delivers state-of-the-art safety performance compared to leading proprietary models such as GPT-4.1 and Claude Opus 4. To further bolster its reliability, we implement two distinct inference-time intervention methods and a deliberative search mechanism, enforcing step-level verification. Finally, we also develop SafeWork-R1-InternVL3-78B, SafeWork-R1-DeepSeek-70B, and SafeWork-R1-Qwen2.5VL-7B. All resulting models demonstrate that safety and capability can co-evolve synergistically, highlighting the generalizability of our framework in building robust, reliable, and trustworthy general-purpose AI.

CredID: Credible Multi-Bit Watermark for Large Language Models Identification

Dec 04, 2024

Abstract: Large Language Models (LLMs) are widely used in complex natural language processing tasks but raise privacy and security concerns due to the lack of identity recognition. This paper proposes a multi-party credible watermarking framework (CredID) involving a trusted third party (TTP) and multiple LLM vendors to address these issues. In the watermark embedding stage, vendors request a seed from the TTP to generate watermarked text without sending the user's prompt. In the extraction stage, the TTP coordinates each vendor to extract and verify the watermark from the text. This provides a credible watermarking scheme while preserving vendor privacy. Furthermore, current watermarking algorithms struggle with text quality, information capacity, and robustness, making it challenging to meet the diverse identification needs of LLMs. Thus, we propose a novel multi-bit watermarking algorithm and an open-source toolkit to facilitate research. Experiments show that CredID enhances watermark credibility and efficiency without compromising text quality. Additionally, we used this framework to achieve highly accurate identification among multiple LLM vendors.
