Raphael Hoffmann

Gemini Embedding: Generalizable Embeddings from Gemini

Mar 10, 2025Abstract:In this report, we introduce Gemini Embedding, a state-of-the-art embedding model leveraging the power of Gemini, Google's most capable large language model. Capitalizing on Gemini's inherent multilingual and code understanding capabilities, Gemini Embedding produces highly generalizable embeddings for text spanning numerous languages and textual modalities. The representations generated by Gemini Embedding can be precomputed and applied to a variety of downstream tasks including classification, similarity, clustering, ranking, and retrieval. Evaluated on the Massive Multilingual Text Embedding Benchmark (MMTEB), which includes over one hundred tasks across 250+ languages, Gemini Embedding substantially outperforms prior state-of-the-art models, demonstrating considerable improvements in embedding quality. Achieving state-of-the-art performance across MMTEB's multilingual, English, and code benchmarks, our unified model demonstrates strong capabilities across a broad selection of tasks and surpasses specialized domain-specific models.

Gemini: A Family of Highly Capable Multimodal Models

Dec 19, 2023Abstract:This report introduces a new family of multimodal models, Gemini, that exhibit remarkable capabilities across image, audio, video, and text understanding. The Gemini family consists of Ultra, Pro, and Nano sizes, suitable for applications ranging from complex reasoning tasks to on-device memory-constrained use-cases. Evaluation on a broad range of benchmarks shows that our most-capable Gemini Ultra model advances the state of the art in 30 of 32 of these benchmarks - notably being the first model to achieve human-expert performance on the well-studied exam benchmark MMLU, and improving the state of the art in every one of the 20 multimodal benchmarks we examined. We believe that the new capabilities of Gemini models in cross-modal reasoning and language understanding will enable a wide variety of use cases and we discuss our approach toward deploying them responsibly to users.

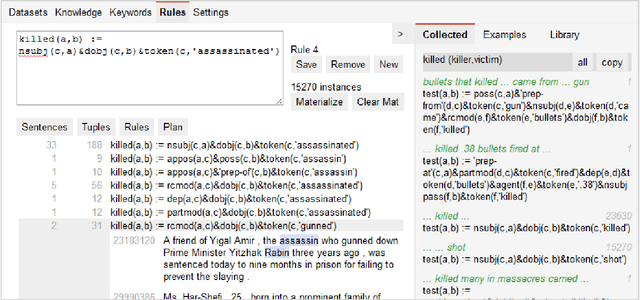

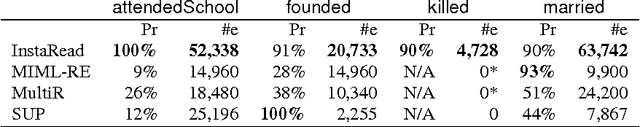

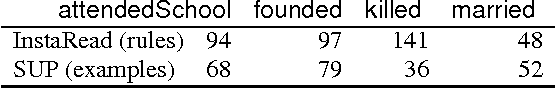

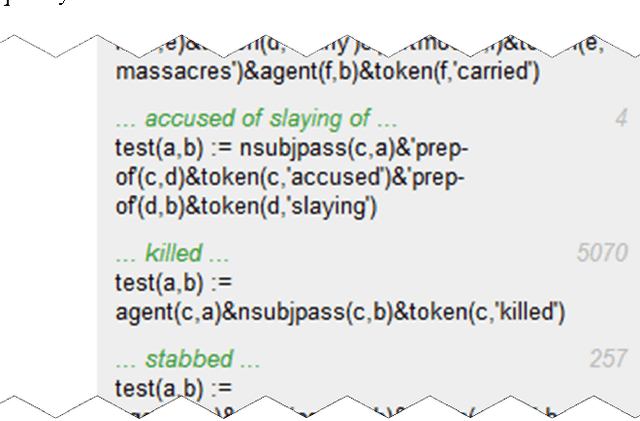

Extreme Extraction: Only One Hour per Relation

Jun 21, 2015

Abstract:Information Extraction (IE) aims to automatically generate a large knowledge base from natural language text, but progress remains slow. Supervised learning requires copious human annotation, while unsupervised and weakly supervised approaches do not deliver competitive accuracy. As a result, most fielded applications of IE, as well as the leading TAC-KBP systems, rely on significant amounts of manual engineering. Even "Extreme" methods, such as those reported in Freedman et al. 2011, require about 10 hours of expert labor per relation. This paper shows how to reduce that effort by an order of magnitude. We present a novel system, InstaRead, that streamlines authoring with an ensemble of methods: 1) encoding extraction rules in an expressive and compositional representation, 2) guiding the user to promising rules based on corpus statistics and mined resources, and 3) introducing a new interactive development cycle that provides immediate feedback --- even on large datasets. Experiments show that experts can create quality extractors in under an hour and even NLP novices can author good extractors. These extractors equal or outperform ones obtained by comparably supervised and state-of-the-art distantly supervised approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge