Rama K. Vasudevan

Human-AI collaborative autonomous synthesis with pulsed laser deposition for remote epitaxy

Nov 14, 2025

Abstract:Autonomous laboratories typically rely on data-driven decision-making, occasionally with human-in-the-loop oversight to inject domain expertise. Fully leveraging AI agents, however, requires tightly coupled, collaborative workflows spanning hypothesis generation, experimental planning, execution, and interpretation. To address this, we develop and deploy a human-AI collaborative (HAIC) workflow that integrates large language models for hypothesis generation and analysis, with collaborative policy updates driving autonomous pulsed laser deposition (PLD) experiments for remote epitaxy of BaTiO$_3$/graphene. HAIC accelerated the hypothesis formation and experimental design and efficiently mapped the growth space to graphene-damage. In situ Raman spectroscopy reveals that chemistry drives degradation while the highest energy plume components seed defects, identifying a low-O$_2$ pressure low-temperature synthesis window that preserves graphene but is incompatible with optimal BaTiO$_3$ growth. Thus, we show a two-step Ar/O$_2$ deposition is required to exfoliate ferroelectric BaTiO$_3$ while maintaining a monolayer graphene interlayer. HAIC stages human insight with AI reasoning between autonomous batches to drive rapid scientific progress, providing an evolution to many existing human-in-the-loop autonomous workflows.

Bayesian Co-navigation: Dynamic Designing of the Materials Digital Twins via Active Learning

Apr 19, 2024

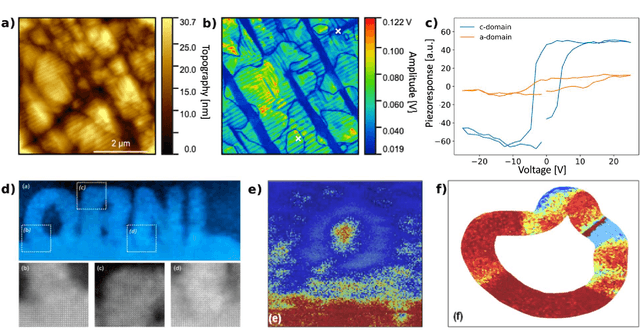

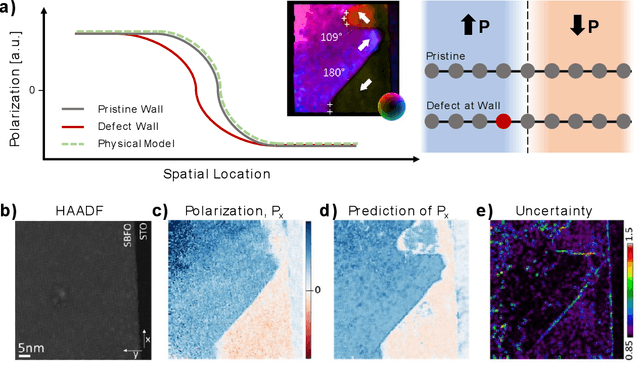

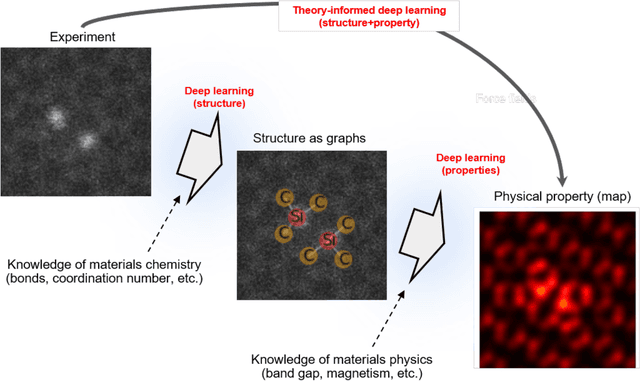

Abstract:Scientific advancement is universally based on the dynamic interplay between theoretical insights, modelling, and experimental discoveries. However, this feedback loop is often slow, including delayed community interactions and the gradual integration of experimental data into theoretical frameworks. This challenge is particularly exacerbated in domains dealing with high-dimensional object spaces, such as molecules and complex microstructures. Hence, the integration of theory within automated and autonomous experimental setups, or theory in the loop automated experiment, is emerging as a crucial objective for accelerating scientific research. The critical aspect is not only to use theory but also on-the-fly theory updates during the experiment. Here, we introduce a method for integrating theory into the loop through Bayesian co-navigation of theoretical model space and experimentation. Our approach leverages the concurrent development of surrogate models for both simulation and experimental domains at the rates determined by latencies and costs of experiments and computation, alongside the adjustment of control parameters within theoretical models to minimize epistemic uncertainty over the experimental object spaces. This methodology facilitates the creation of digital twins of material structures, encompassing both the surrogate model of behavior that includes the correlative part and the theoretical model itself. While demonstrated here within the context of functional responses in ferroelectric materials, our approach holds promise for broader applications, including the exploration of optical properties in nanoclusters, microstructure-dependent properties in complex materials, and properties of molecular systems. The analysis code that supports the findings is publicly available at https://github.com/Slautin/2024_Co-navigation/tree/main

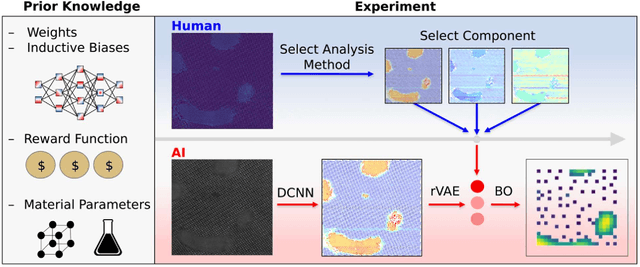

A dynamic Bayesian optimized active recommender system for curiosity-driven Human-in-the-loop automated experiments

Apr 05, 2023

Abstract:Optimization of experimental materials synthesis and characterization through active learning methods has been growing over the last decade, with examples ranging from measurements of diffraction on combinatorial alloys at synchrotrons, to searches through chemical space with automated synthesis robots for perovskites. In virtually all cases, the target property of interest for optimization is defined a priori with limited human feedback during operation. In contrast, here we present the development of a new type of human-in-the-loop experimental workflow, via a Bayesian optimized active recommender system (BOARS), to shape targets on the fly, employing human feedback. We showcase examples of this framework applied to pre-acquired piezoresponse force spectroscopy of a ferroelectric thin film, and then implement this in real time on an atomic force microscope, where the optimization proceeds to find symmetric piezoresponse amplitude hysteresis loops. It is found that such features appear more affected by subsurface defects than the local domain structure. This work shows the utility of human-augmented machine learning approaches for curiosity-driven exploration of systems across experimental domains. The analysis reported here is summarized in Colab Notebook for the purpose of tutorial and application to other data: https://github.com/arpanbiswas52/varTBO

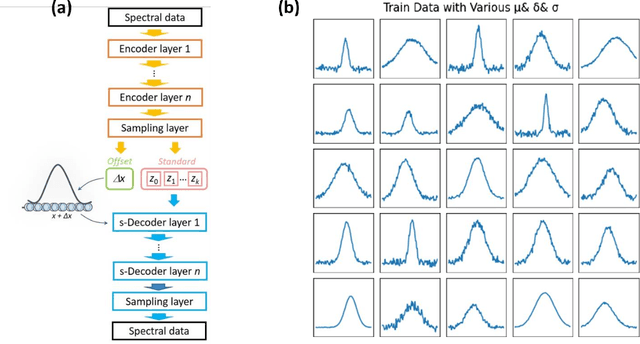

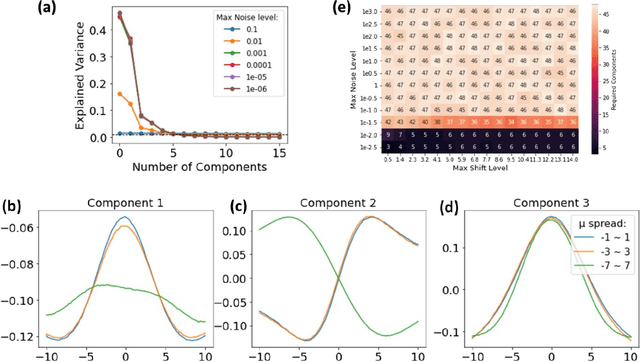

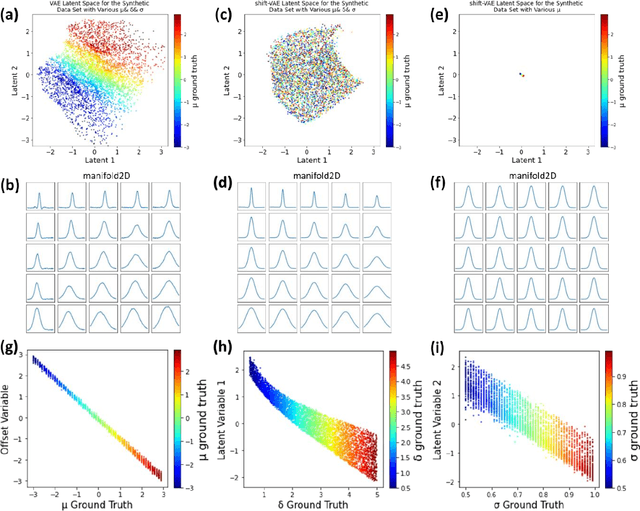

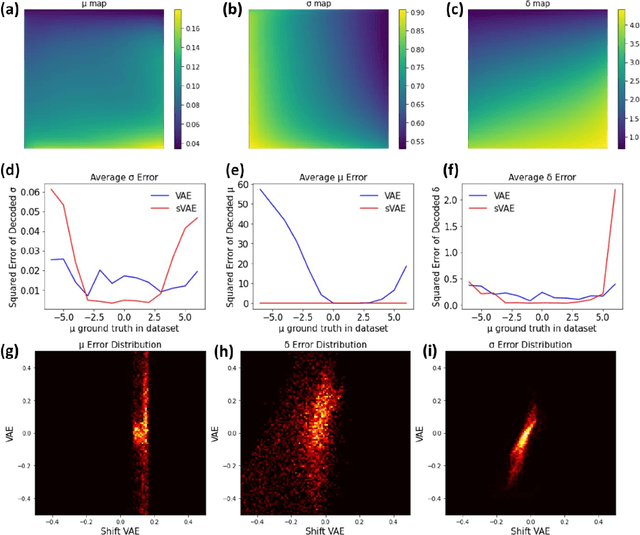

Decoding the shift-invariant data: applications for band-excitation scanning probe microscopy

Apr 20, 2021

Abstract:A shift-invariant variational autoencoder (shift-VAE) is developed as an unsupervised method for the analysis of spectral data in the presence of shifts along the parameter axis, disentangling the physically-relevant shifts from other latent variables. Using synthetic data sets, we show that the shift-VAE latent variables closely match the ground truth parameters. The shift VAE is extended towards the analysis of band-excitation piezoresponse force microscopy (BE-PFM) data, disentangling the resonance frequency shifts from the peak shape parameters in a model-free unsupervised manner. The extensions of this approach towards denoising of data and model-free dimensionality reduction in imaging and spectroscopic data are further demonstrated. This approach is universal and can also be extended to analysis of X-ray diffraction, photoluminescence, Raman spectra, and other data sets.

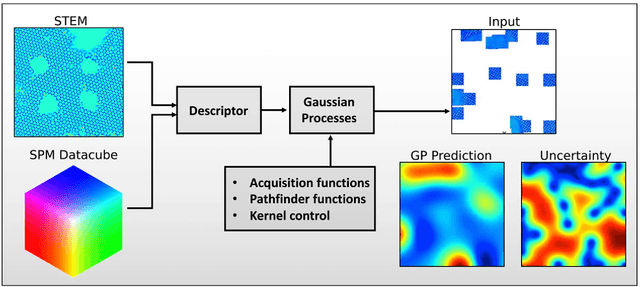

Automated and Autonomous Experiment in Electron and Scanning Probe Microscopy

Mar 22, 2021

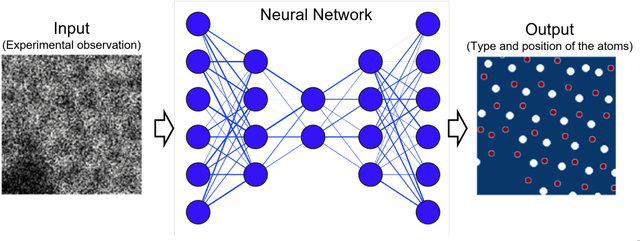

Abstract:Machine learning and artificial intelligence (ML/AI) are rapidly becoming an indispensable part of physics research, with domain applications ranging from theory and materials prediction to high-throughput data analysis. In parallel, the recent successes in applying ML/AI methods for autonomous systems from robotics through self-driving cars to organic and inorganic synthesis are generating enthusiasm for the potential of these techniques to enable automated and autonomous experiment (AE) in imaging. Here, we aim to analyze the major pathways towards AE in imaging methods with sequential image formation mechanisms, focusing on scanning probe microscopy (SPM) and (scanning) transmission electron microscopy ((S)TEM). We argue that automated experiments should necessarily be discussed in a broader context of the general domain knowledge that both informs the experiment and is increased as the result of the experiment. As such, this analysis should explore the human and ML/AI roles prior to and during the experiment, and consider the latencies, biases, and knowledge priors of the decision-making process. Similarly, such discussion should include the limitations of the existing imaging systems, including intrinsic latencies, non-idealities and drifts comprising both correctable and stochastic components. We further pose that the role of the AE in microscopy is not the exclusion of human operators (as is the case for autonomous driving), but rather automation of routine operations such as microscope tuning, etc., prior to the experiment, and conversion of low latency decision making processes on the time scale spanning from image acquisition to human-level high-order experiment planning.

Off-the-shelf deep learning is not enough: parsimony, Bayes and causality

May 04, 2020

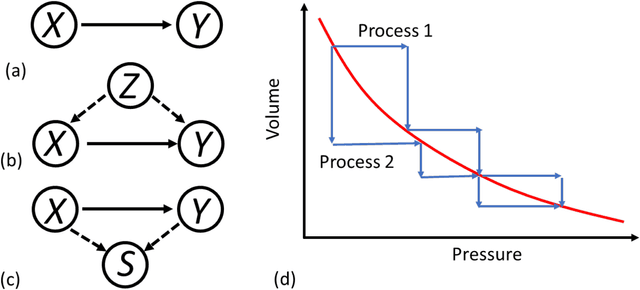

Abstract:Deep neural networks ("deep learning") have emerged as a technology of choice to tackle problems in natural language processing, computer vision, speech recognition and gameplay, and in just a few years have led to superhuman level performance and ushered in a new wave of "AI." Buoyed by these successes, researchers in the physical sciences have made steady progress in incorporating deep learning into their respective domains. However, such adoption brings substantial challenges that need to be recognized and confronted. Here, we discuss both opportunities and roadblocks to implementation of deep learning within materials science, focusing on the relationship between the correlative nature of machine learning and the causal, hypothesis-driven nature of the physical sciences. We argue that deep learning and AI are now well positioned to revolutionize fields where causal links are known, as is the case for applications in theory. When confounding factors are frozen or change only weakly, this leaves open the pathway for effective deep learning solutions in experimental domains. Similarly, these methods offer a pathway towards understanding the physics of real-world systems, either via deriving reduced representations, deducing algorithmic complexity, or recovering generative physical models. However, extending deep learning and "AI" to models with unclear causal relationships can produce misleading and potentially incorrect results. Here, we argue that the broad adoption of Bayesian methods incorporating prior knowledge, development of DL solutions with incorporated physical constraints, and ultimately adoption of causal models, offers a path forward for fundamental and applied research. Most notably, while these advances can change the way science is carried out in ways we cannot imagine, machine learning is not going to substitute for science any time soon.
