Qiang Cui

CausPref: Causal Preference Learning for Out-of-Distribution Recommendation

Feb 09, 2022

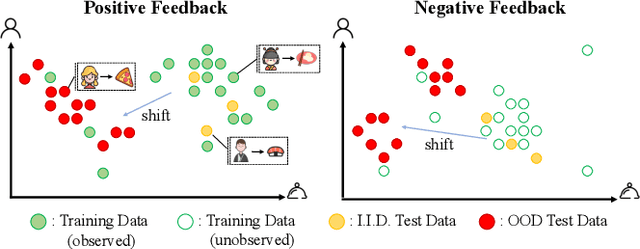

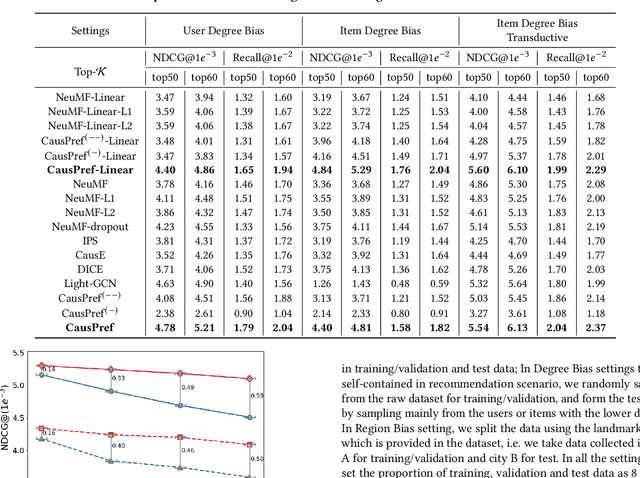

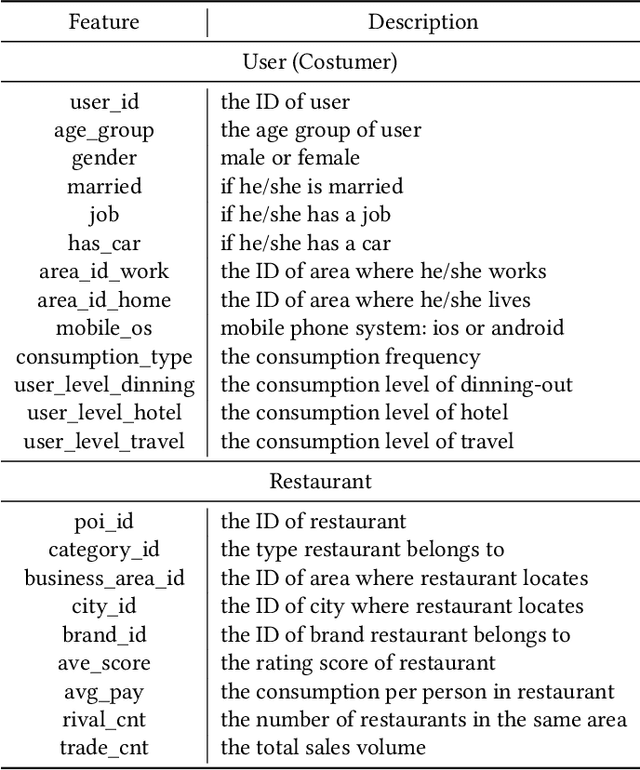

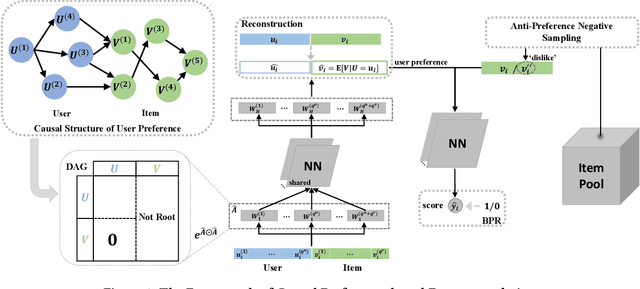

Abstract: Despite the rapid development of recommender systems driven by recent advances in machine learning, current recommender systems remain vulnerable to distribution shifts of users and items in realistic scenarios, which leads to a sharp decline in performance in testing environments. The problem is even more severe in many common applications where only implicit feedback from sparse data is available. Hence, it is crucial to improve the performance stability of recommendation methods across different environments. In this work, we first thoroughly analyze the implicit recommendation problem from the viewpoint of out-of-distribution (OOD) generalization. Then, guided by our theoretical analysis, we propose to incorporate a recommendation-specific DAG learner into a novel causal preference-based recommendation framework named CausPref, which mainly consists of causal learning of invariant user preference and anti-preference negative sampling to deal with implicit feedback. Extensive experimental results on real-world datasets clearly demonstrate that our approach significantly surpasses the benchmark models under various types of out-of-distribution settings and shows impressive interpretability.

CANS-Net: Context-Aware Non-Successive Modeling Network for Next Point-of-Interest Recommendation

Apr 06, 2021

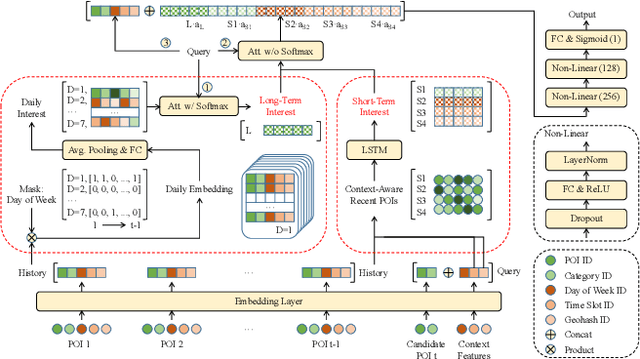

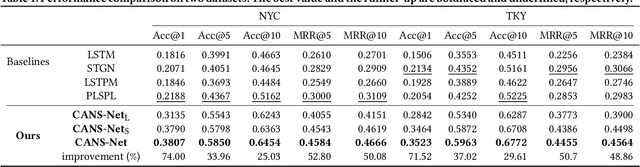

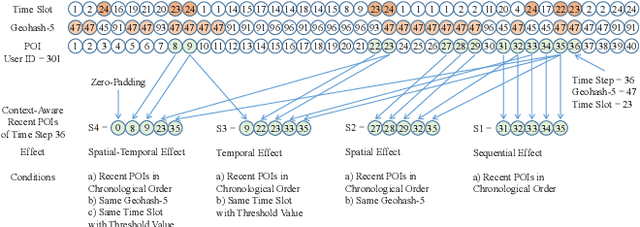

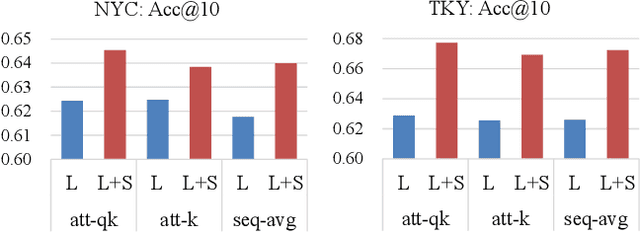

Abstract: Point-of-Interest (POI) recommendation is an important task in location-based social networks. It facilitates sharing between users and locations. Recently, researchers have tended to recommend POIs based on long- and short-term interests. However, existing models are mostly based on sequential modeling of successive POIs to capture transitional regularities. A few works try to capture users' mobility periodicity or POIs' geographical influence, but they omit other spatial-temporal factors. To this end, we propose to jointly model various spatial-temporal factors through context-aware non-successive modeling. In the long-term module, we split all of a user's historical check-ins into seven sequences by day of week to obtain daily interests, then combine them by attention, which captures the temporal effect. In the short-term module, we construct four short-term sequences to capture sequential, spatial, temporal, and spatial-temporal effects, respectively. Interest-level attention is used to combine all factors and interests. Experiments on two real-world datasets demonstrate the state-of-the-art performance of our method.
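The long-term module described in the abstract (split check-ins by day of week, then combine daily interests by attention) can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `split_by_weekday` and `attend`, the `(timestamp, poi_id)` check-in format, and the use of plain dot-product attention over fixed interest vectors are all assumptions made for illustration. How the paper actually encodes each daily sequence into an interest vector is not stated in the abstract.

```python
import math
from datetime import datetime


def split_by_weekday(checkins):
    """Group (timestamp, poi_id) check-ins into seven POI sequences.

    Index 0 is Monday and index 6 is Sunday (datetime.weekday() convention).
    Check-ins are ordered by time within each sequence.
    """
    sequences = [[] for _ in range(7)]
    for ts, poi in sorted(checkins):
        sequences[ts.weekday()].append(poi)
    return sequences


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(interests, query):
    """Combine interest vectors with dot-product attention (an assumed form).

    Each vector is scored by its dot product with the query; the result is
    the softmax-weighted sum of the vectors.
    """
    scores = [sum(a * b for a, b in zip(vec, query)) for vec in interests]
    weights = softmax(scores)
    dim = len(query)
    return [sum(w * vec[i] for w, vec in zip(weights, interests)) for i in range(dim)]


# Hypothetical check-in history for one user.
history = [
    (datetime(2021, 4, 5, 9), "cafe"),     # Monday
    (datetime(2021, 4, 6, 12), "gym"),     # Tuesday
    (datetime(2021, 4, 12, 8), "office"),  # Monday, one week later
]
daily_sequences = split_by_weekday(history)
```

In a full model, each of the seven sequences would first be encoded into a daily interest vector (for example by a sequence encoder), and `attend` would then merge those seven vectors into one long-term representation.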
