Norimichi Ukita

SAMIDARE: Advanced Tracking-by-Segmentation for Dense Scenarios

Apr 24, 2026

Abstract:Automated sports analysis demands robust multi-object tracking (MOT), yet segmentation-based methods often struggle with mask errors and ID switches in dense scenes. We propose SAMIDARE, a framework that enhances SAM2MOT for crowded scenes through three key components: (1) density-aware mask re-generation and (2) selective memory updates, both for adaptive mask control to preserve target feature integrity, and (3) state-aware association and new track initialization, which improves robustness under mutual occlusions and frequent frame-out events. Evaluated on the SportsMOT dataset, SAMIDARE achieves state-of-the-art performance, outperforming the baseline by 2.5 HOTA and 4.2 IDF1 points on the validation set. These results demonstrate that adaptive feature management using mask control and state-aware association provide a robust and efficient solution for dense sports tracking. Code is available at https://github.com/ZabuZabuZabu/SAMIDARE

Group-DINOmics: Incorporating People Dynamics into DINO for Self-supervised Group Activity Feature Learning

Apr 06, 2026

Abstract:This paper proposes Group Activity Feature (GAF) learning without group activity annotations. Unlike prior work, which uses low-level static local features to learn GAFs, we propose leveraging dynamics-aware and group-aware pretext tasks, along with local and global features provided by DINO, for group-dynamics-aware GAF learning. To adapt DINO and GAF learning to local dynamics and global group features, our pretext tasks use person flow estimation and group-relevant object location estimation, respectively. Person flow estimation is used to represent the local motion of each person, which is an important cue for understanding group activities. In contrast, group-relevant object location estimation encourages GAFs to learn scene context (e.g., spatial relations of people and objects) as global features. Comprehensive experiments on public datasets demonstrate the state-of-the-art performance of our method in group activity retrieval and recognition. Our ablation studies verify the effectiveness of each component in our method. Code: https://github.com/tezuka0001/Group-DINOmics.

End-to-End Shared Attention Estimation via Group Detection with Feedback Refinement

Apr 02, 2026

Abstract:This paper proposes an end-to-end shared attention estimation method via group detection. Most previous methods estimate shared attention (SA) without detecting the actual group of people focusing on it, or assume that there is a single SA point in a given image. These issues limit the applicability of SA detection in practice and impact performance. To address them, we propose to simultaneously achieve group detection and shared attention estimation using a two-step process: (i) the generation of SA heatmaps relying on individual gaze attention heatmaps and group membership scalars estimated in a group inference; (ii) a refinement of the initial group memberships that accounts for the initial SA heatmaps, followed by the final prediction of the SA heatmap. Experiments demonstrate that our method outperforms other methods in group detection and shared attention estimation. Additional analyses validate the effectiveness of the proposed components. Code: https://github.com/chihina/sagd-CVPRW2026.

Multi-Person Pose Estimation Evaluation Using Optimal Transportation and Improved Pose Matching

Mar 11, 2026

Abstract:In Multi-Person Pose Estimation, many metrics place importance on the ranking of pose detection confidence scores. Current metrics tend to disregard false-positive poses with low confidence, focusing primarily on a larger number of high-confidence poses. Consequently, these metrics may yield high scores even when many false-positive poses with low confidence are detected. For fair evaluation that takes into account the tradeoff between true-positive and false-positive poses, this paper proposes Optimal Correction Cost for pose (OCpose), which evaluates detected poses against pose annotations as an optimal transportation problem. To keep this tradeoff fair, OCpose evaluates all detected poses equally, regardless of their confidence scores. The confidence score of each pose is instead used to improve the reliability of the matching scores between the estimated poses and the pose annotations. As a result, OCpose provides an assessment from a different perspective than other confidence ranking-based metrics.
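
The idea of scoring every detection through an optimal matching, whatever its confidence, can be illustrated with a toy sketch. This is not the OCpose definition from the paper: the keypoint cost `pose_distance`, the flat `miss_cost` penalty, and the function names are all assumptions made for illustration, and the matching is solved by brute force rather than by an optimal transport solver.

```python
from itertools import permutations
from math import dist


def pose_distance(pose_a, pose_b):
    """Mean Euclidean distance over corresponding keypoints (hypothetical cost)."""
    return sum(dist(p, q) for p, q in zip(pose_a, pose_b)) / len(pose_a)


def matching_cost(detections, annotations, miss_cost=1.0):
    """Minimum total cost of a one-to-one matching between poses.

    Each unmatched detection (false positive) or unmatched annotation
    (miss) adds `miss_cost`, independent of any confidence score.
    `miss_cost` is an assumed constant, not a value from the paper.
    """
    n = max(len(detections), len(annotations))
    # Pad the shorter side with None so the matching is square.
    dets = list(detections) + [None] * (n - len(detections))
    anns = list(annotations) + [None] * (n - len(annotations))

    def pair_cost(det, ann):
        if det is None or ann is None:
            return miss_cost
        return pose_distance(det, ann)

    # Brute force over all assignments; only practical for a handful of poses.
    return min(
        sum(pair_cost(d, a) for d, a in zip(dets, perm))
        for perm in permutations(anns)
    )


# One annotated pose with two keypoints, plus one spurious detection.
gt = [[(0.0, 0.0), (1.0, 1.0)]]
false_positive = [(5.0, 5.0), (6.0, 6.0)]
cost_clean = matching_cost(gt, gt)
cost_with_fp = matching_cost(gt + [false_positive], gt)
```

In this sketch the spurious detection raises the cost by `miss_cost` even if its confidence were low, which is the behavior the abstract contrasts with ranking-based metrics. For more than a few poses, a polynomial-time solver such as `scipy.optimize.linear_sum_assignment` would replace the permutation loop.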

Human-in-the-loop Adaptation in Group Activity Feature Learning for Team Sports Video Retrieval

Feb 03, 2026

Abstract:This paper proposes human-in-the-loop adaptation for Group Activity Feature Learning (GAFL) without group activity annotations. This human-in-the-loop adaptation is employed in a group-activity video retrieval framework to improve its retrieval performance. Our method initially pre-trains the GAF space based on the similarity of group activities in a self-supervised manner, unlike prior work that classifies videos into pre-defined group activity classes in a supervised learning manner. Our interactive fine-tuning process updates the GAF space to allow a user to better retrieve videos similar to query videos given by the user. In this fine-tuning, our proposed data-efficient video selection process selects several videos from a video database and presents them to the user, who manually labels them as positive or negative. These labeled videos are used to update (i.e., fine-tune) the GAF space through contrastive learning, so that the positive and negative videos move closer to and farther away from the query videos, respectively. Our comprehensive experimental results on two team sports datasets validate that our method significantly improves the retrieval performance. Ablation studies also demonstrate that several components in our human-in-the-loop adaptation contribute to the improvement of the retrieval performance. Code: https://github.com/chihina/GAFL-FINE-CVIU.

* Accepted to Computer Vision and Image Understanding (CVIU)

MMCM: Multimodality-aware Metric using Clustering-based Modes for Probabilistic Human Motion Prediction

Nov 19, 2025

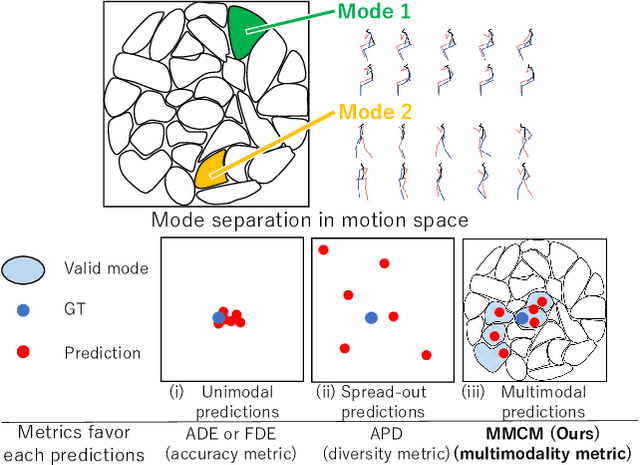

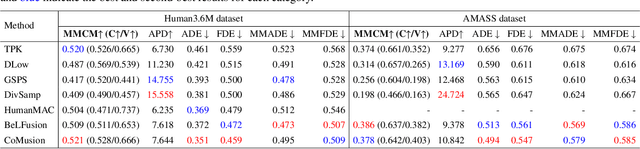

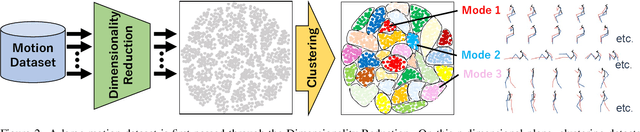

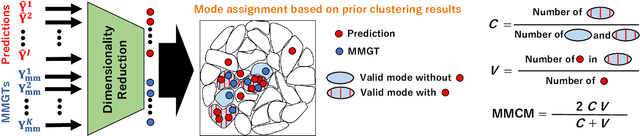

Abstract:This paper proposes a novel metric for Human Motion Prediction (HMP). Since a single past sequence can lead to multiple possible futures, a probabilistic HMP method predicts such multiple motions. While a single motion predicted by a deterministic method is evaluated only with the difference from its ground truth motion, multiple predicted motions should also be evaluated based on their distribution. For this evaluation, this paper focuses on the following two criteria. (a) Coverage: motions should be distributed among multiple motion modes to cover diverse possibilities. (b) Validity: motions should be kinematically valid as future motions observable from a given past motion. However, existing metrics simply appreciate widely distributed motions even if these motions are observed in a single mode and kinematically invalid. To resolve these disadvantages, this paper proposes a Multimodality-aware Metric using Clustering-based Modes (MMCM). For (a) coverage, MMCM divides a motion space into several clusters, each of which is regarded as a mode. These modes are used to explicitly evaluate whether predicted motions are distributed among multiple modes. For (b) validity, MMCM identifies valid modes by collecting possible future motions from a motion dataset. Our experiments validate that our clustering yields sensible mode definitions and that MMCM accurately scores multimodal predictions. Code: https://github.com/placerkyo/MMCM
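
The two criteria can be made concrete with a toy sketch: assign each predicted motion to its nearest cluster center (its mode) and count how many valid modes receive at least one prediction. This is not the MMCM formula from the paper. The flattened motion vectors, the precomputed `centers`, the `valid_modes` set, and the function names are assumptions made for illustration.

```python
from math import dist


def nearest_mode(motion, centers):
    """Index of the cluster center closest to a flattened motion vector."""
    return min(range(len(centers)), key=lambda k: dist(motion, centers[k]))


def mode_coverage(predictions, centers, valid_modes):
    """Fraction of valid modes hit by at least one predicted motion.

    Predictions that fall into modes outside `valid_modes` earn no credit,
    so spreading samples widely is not rewarded on its own.
    """
    if not valid_modes:
        return 0.0
    hit = {nearest_mode(m, centers) for m in predictions}
    return len(hit & set(valid_modes)) / len(valid_modes)


# Three hypothetical modes; only modes 0 and 1 are valid for this past motion.
centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
valid = {0, 1}
single_mode = mode_coverage([(-1.0, 0.0), (1.0, 0.0), (0.0, 0.5)], centers, valid)
both_modes = mode_coverage([(0.0, 0.0), (9.0, 0.0)], centers, valid)
invalid_only = mode_coverage([(0.0, 9.0)], centers, valid)
```

Under these assumptions, predictions clustered in one mode cover half of the valid modes, predictions in both valid modes cover all of them, and a prediction in the invalid mode covers none.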

Dynamic Group Detection using VLM-augmented Temporal Groupness Graph

Sep 05, 2025

Abstract:This paper proposes dynamic human group detection in videos. For detecting complex groups, not only the local appearance features of in-group members but also the global context of the scene are important. Such local and global appearance features in each frame are extracted using a Vision-Language Model (VLM) augmented for group detection in our method. For further improvement, the group structure should be consistent over time. While previous methods are stabilized on the assumption that groups are not changed in a video, our method detects dynamically changing groups by global optimization using a graph with all frames' groupness probabilities estimated by our groupness-augmented CLIP features. Our experimental results demonstrate that our method outperforms state-of-the-art group detection methods on public datasets. Code: https://github.com/irajisamurai/VLM-GroupDetection.git

CacheFlow: Fast Human Motion Prediction by Cached Normalizing Flow

May 19, 2025

Abstract:Many density estimation techniques for 3D human motion prediction require a significant amount of inference time, often exceeding the duration of the predicted time horizon. To address the need for faster density estimation for 3D human motion prediction, we introduce a novel flow-based method for human motion prediction called CacheFlow. Unlike previous conditional generative models that suffer from poor time efficiency, CacheFlow takes advantage of an unconditional flow-based generative model that transforms a Gaussian mixture into the density of future motions. The results of the computation of the flow-based generative model can be precomputed and cached. Then, for conditional prediction, we seek a mapping from historical trajectories to samples in the Gaussian mixture. This mapping can be done by a much more lightweight model, thus saving significant computation overhead compared to a typical conditional flow model. In such a two-stage fashion and by caching results from the slow flow model computation, we build our CacheFlow without loss of prediction accuracy and model expressiveness. This inference process is completed in approximately one millisecond, making it 4 times faster than previous VAE methods and 30 times faster than previous diffusion-based methods on standard benchmarks such as Human3.6M and AMASS datasets. Furthermore, our method demonstrates improved density estimation accuracy and comparable prediction accuracy to a SOTA method on Human3.6M. Our code and models will be publicly available.

Human Motion Prediction via Test-domain-aware Adaptation with Easily-available Human Motions Estimated from Videos

May 13, 2025

Abstract:In 3D Human Motion Prediction (HMP), conventional methods train HMP models with expensive motion capture data. However, the data collection cost of such motion capture data limits the data diversity, which leads to poor generalizability to unseen motions or subjects. To address this issue, this paper proposes to enhance HMP with additional learning using estimated poses from easily available videos. The 2D poses estimated from the monocular videos are carefully transformed into motion capture-style 3D motions through our pipeline. By additional learning with the obtained motions, the HMP model is adapted to the test domain. The experimental results demonstrate the quantitative and qualitative impact of our method.

Robot Motion Planning using One-Step Diffusion with Noise-Optimized Approximate Motions

Apr 28, 2025

Abstract:This paper proposes an image-based robot motion planning method using a one-step diffusion model. While the diffusion model allows for high-quality motion generation, its computational cost is too expensive to control a robot in real time. To achieve high quality and efficiency simultaneously, our one-step diffusion model takes an approximately generated motion, which is predicted directly from input images. This approximate motion is optimized by additive noise provided by our novel noise optimizer. Unlike general isotropic noise, our noise optimizer adjusts noise anisotropically depending on the uncertainty of each motion element. Our experimental results demonstrate that our method outperforms state-of-the-art methods while maintaining its efficiency by one-step diffusion.
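
The contrast between isotropic and anisotropic noise can be shown with a minimal sketch. In the paper the per-element noise comes from a learned noise optimizer. Here the `sigmas` list is simply given by hand, and `add_noise` is a hypothetical helper, so this only illustrates the shape of the operation, not the method itself.

```python
import random


def add_noise(motion, sigmas, rng=None):
    """Add zero-mean Gaussian noise with a separate scale per motion element.

    Passing the same value for every entry of `sigmas` gives isotropic
    noise; varying the entries gives anisotropic noise.
    """
    if len(motion) != len(sigmas):
        raise ValueError("motion and sigmas must have the same length")
    rng = rng or random.Random()
    return [x + rng.gauss(0.0, s) for x, s in zip(motion, sigmas)]


# Hypothetical approximate motion: the first two elements are treated as
# certain (scale 0), the last as uncertain (scale 0.5).
approx_motion = [1.0, 2.0, 3.0]
noisy = add_noise(approx_motion, [0.0, 0.0, 0.5], random.Random(0))
```

Elements with a zero scale pass through unchanged, so the perturbation is concentrated on the elements assumed to be uncertain.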
