Minghua Zhao

VADMamba++: Efficient Video Anomaly Detection via Hybrid Modeling in Grayscale Space

Apr 01, 2026

Abstract: VADMamba pioneered the introduction of Mamba to Video Anomaly Detection (VAD), achieving high accuracy and fast inference through hybrid proxy tasks. Nevertheless, its heavy reliance on optical flow as auxiliary input and on inter-task fusion scoring constrains its applicability to a single proxy task. In this paper, we introduce VADMamba++, an efficient VAD method based on the Gray-to-RGB paradigm, which enforces a single-channel to three-channel reconstruction mapping, is designed for a single proxy task, and operates without auxiliary inputs. This paradigm compels the model to infer color appearance from grayscale structure, allowing anomalies to be revealed more effectively through dual inconsistencies between structural and chromatic cues. Specifically, VADMamba++ reconstructs grayscale frames into the RGB space to simultaneously discriminate structural geometry and chromatic fidelity, thereby enhancing sensitivity to explicit visual anomalies. We further design a hybrid modeling backbone that integrates Mamba, CNN, and Transformer modules to capture diverse normal patterns while suppressing the appearance of anomalies. Furthermore, an intra-task fusion scoring strategy integrates explicit future-frame prediction errors with implicit quantized feature errors, further improving accuracy under a single-task setting. Extensive experiments on three benchmark datasets demonstrate that VADMamba++ outperforms state-of-the-art methods while balancing detection performance and efficiency, especially under a strict single-task setting with only frame-level inputs.
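The intra-task fusion of an explicit prediction error with an implicit quantized feature error can be illustrated with a minimal sketch. The abstract does not give the normalization or the weighting, so the per-video min-max scaling, the function names, and the `weight` parameter below are all assumptions made for illustration, not the authors' scoring rule.

```python
def min_max(values):
    """Rescale a sequence to [0, 1]; a constant sequence maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def fuse_scores(pred_err, feat_err, weight=0.5):
    """Weighted sum of two normalized per-frame error sequences.

    `weight` (hypothetical) is the share given to the explicit prediction
    error; the remainder goes to the quantized feature error.
    """
    if len(pred_err) != len(feat_err):
        raise ValueError("both error sequences need one value per frame")
    p, f = min_max(pred_err), min_max(feat_err)
    return [weight * a + (1.0 - weight) * b for a, b in zip(p, f)]


# Toy per-frame errors for a four-frame clip (made-up numbers).
pred_err = [0.10, 0.12, 0.50, 0.11]
feat_err = [1.0, 1.0, 3.0, 2.0]
scores = fuse_scores(pred_err, feat_err, weight=0.5)
most_anomalous = scores.index(max(scores))  # frame with the highest fused score
```

Normalizing each error stream before mixing keeps one stream from dominating merely because of its scale; other normalizations would serve the same purpose.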

Forward Consistency Learning with Gated Context Aggregation for Video Anomaly Detection

Jan 26, 2026

Abstract: As a crucial element of public security, video anomaly detection (VAD) aims to measure deviations from normal patterns for various events in real-time surveillance systems. However, most existing VAD methods rely on large-scale models to pursue extreme accuracy, limiting their feasibility on resource-limited edge devices. Moreover, mainstream prediction-based VAD detects anomalies using only single-frame future prediction errors, overlooking the richer constraints from longer-term temporal forward information. In this paper, we introduce FoGA, a lightweight VAD model that performs Forward consistency learning with Gated context Aggregation, containing about 2M parameters and tailored for potential edge devices. Specifically, we propose a Unet-based method that performs feature extraction on consecutive frames to generate both immediate and forward predictions. Then, we introduce a gated context aggregation module into the skip connections to dynamically fuse encoder and decoder features at the same spatial scale. Finally, the model is jointly optimized with a novel forward consistency loss, and a hybrid anomaly measurement strategy is adopted to integrate errors from both immediate and forward frames for more accurate detection. Extensive experiments demonstrate the effectiveness of the proposed method, which substantially outperforms state-of-the-art competing methods while running at up to 155 FPS. Hence, FoGA achieves an excellent trade-off between detection performance and efficiency.
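As a rough illustration of gated fusion in a skip connection, the sketch below blends encoder and decoder features element-wise with a sigmoid gate. The abstract does not specify how FoGA computes its gate, so the scalar parameters `w_enc`, `w_dec`, and `bias` are hypothetical stand-ins for what would be learned layers in a real network.

```python
import math


def sigmoid(x):
    """Logistic function mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def gated_fuse(enc, dec, w_enc=1.0, w_dec=1.0, bias=0.0):
    """Blend two equally sized feature lists with an input-dependent gate.

    For each position: g = sigmoid(w_enc * e + w_dec * d + bias) and
    out = g * e + (1 - g) * d. The scalar weights are illustrative only.
    """
    if len(enc) != len(dec):
        raise ValueError("encoder and decoder features must match in size")
    fused = []
    for e, d in zip(enc, dec):
        g = sigmoid(w_enc * e + w_dec * d + bias)
        fused.append(g * e + (1.0 - g) * d)
    return fused


# With zero weights and zero bias the gate is 0.5 everywhere, so the
# result is the plain average of the two inputs.
averaged = gated_fuse([1.0, 2.0], [3.0, 4.0], w_enc=0.0, w_dec=0.0)
```

Because the gate lies strictly between 0 and 1, each fused value is a convex combination of the two inputs, which is what lets the network favor either path per position.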

VADMamba: Exploring State Space Models for Fast Video Anomaly Detection

Mar 27, 2025

Abstract: Video anomaly detection (VAD) methods are mostly CNN-based or Transformer-based, achieving impressive results, but the focus on detection accuracy often comes at the expense of inference speed. The emergence of state space models in computer vision, exemplified by the Mamba model, demonstrates improved computational efficiency through selective scans and showcases the great potential for long-range modeling. Our study pioneers the application of Mamba to VAD, dubbed VADMamba, which is based on multi-task learning for frame prediction and optical flow reconstruction. Specifically, we propose the VQ-Mamba Unet (VQ-MaU) framework, which incorporates a Vector Quantization (VQ) layer and Mamba-based Non-negative Visual State Space (NVSS) block. Furthermore, two individual VQ-MaU networks separately predict frames and reconstruct corresponding optical flows, further boosting accuracy through a clip-level fusion evaluation strategy. Experimental results validate the efficacy of the proposed VADMamba across three benchmark datasets, demonstrating superior performance in inference speed compared to previous work. Code is available at https://github.com/jLooo/VADMamba.
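The core operation of a vector quantization layer, replacing each feature vector with its nearest codebook entry, can be sketched in a few lines. This is a generic nearest-neighbor lookup under squared Euclidean distance, not the VQ-MaU implementation; the toy codebook and the helper names are assumptions, and training details such as codebook updates are omitted.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(vector, codebook):
    """Return (index, code) for the codebook entry closest to `vector`."""
    index = min(range(len(codebook)), key=lambda i: sq_dist(vector, codebook[i]))
    return index, codebook[index]


def quantization_error(vectors, codebook):
    """Mean squared distance between vectors and their assigned codes."""
    total = sum(sq_dist(v, quantize(v, codebook)[1]) for v in vectors)
    return total / len(vectors)


# Toy codebook with three 2-D codes (illustrative values only).
codebook = [[0.0, 0.0], [1.0, 1.0], [4.0, 4.0]]
index, code = quantize([0.9, 1.2], codebook)
error = quantization_error([[0.0, 0.0], [1.0, 2.0]], codebook)
```

Features far from every learned code yield a large quantization error, which is one plausible reason such an error can act as an anomaly cue.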

Appearance Blur-driven AutoEncoder and Motion-guided Memory Module for Video Anomaly Detection

Sep 26, 2024

Abstract: Video anomaly detection (VAD) often learns the distribution of normal samples and detects anomalies by measuring significant deviations, but undesired generalization may allow some anomalies to be reconstructed well, thus suppressing those deviations. Meanwhile, most VAD methods cannot cope with cross-dataset validation on new target domains, and few-shot methods must laboriously rely on model tuning in the target domain to complete domain adaptation. To address these problems, we propose a novel VAD method with a motion-guided memory module to achieve zero-shot cross-dataset validation. First, we add Gaussian blur to the raw appearance images, thereby constructing the global pseudo-anomaly, which serves as the input to the network. Then, we propose multi-scale residual channel attention to deblur the pseudo-anomaly in normal samples. Next, memory items are obtained by recording the motion features in the training phase, which are used to retrieve the motion features from the raw information in the testing phase. Lastly, our method can ignore the blurred real anomaly through attention and rely on motion memory items to increase the normality gap between normal and abnormal motion. Extensive experiments on three benchmark datasets demonstrate the effectiveness of the proposed method. Compared with cross-domain methods, our method achieves competitive performance without adaptation during testing.
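The first step, blurring a raw frame to build a pseudo-anomalous input, can be sketched with a separable Gaussian filter. The abstract does not state the kernel size or standard deviation, so `sigma`, `radius`, and the edge-clamping border handling below are assumptions, and a single-channel image stored as nested lists stands in for a real frame.

```python
import math


def gaussian_kernel(sigma, radius):
    """Normalized 1-D Gaussian weights for offsets -radius..radius."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_rows(image, kernel):
    """Convolve every row with the kernel, clamping indices at the borders."""
    radius = len(kernel) // 2
    width = len(image[0])
    blurred = []
    for row in image:
        new_row = []
        for x in range(width):
            acc = 0.0
            for k, w in enumerate(kernel):
                xi = min(max(x + k - radius, 0), width - 1)
                acc += w * row[xi]
            new_row.append(acc)
        blurred.append(new_row)
    return blurred


def transpose(image):
    """Swap rows and columns of a rectangular nested list."""
    return [list(col) for col in zip(*image)]


def gaussian_blur(image, sigma=1.0, radius=2):
    """Separable blur: filter along rows, then along columns."""
    kernel = gaussian_kernel(sigma, radius)
    horizontal = blur_rows(image, kernel)
    return transpose(blur_rows(transpose(horizontal), kernel))


# A single bright pixel in a 5x5 frame gets spread over its neighbors.
frame = [[0.0] * 5 for _ in range(5)]
frame[2][2] = 1.0
pseudo_anomaly = gaussian_blur(frame, sigma=1.0, radius=2)
```

In practice an image library would do this far faster; the pure-Python version is only meant to make the operation explicit.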

Bidirectional skip-frame prediction for video anomaly detection with intra-domain disparity-driven attention

Jul 23, 2024

Abstract: With the widespread deployment of video surveillance devices and the demand for intelligent system development, video anomaly detection (VAD) has become an important part of constructing intelligent surveillance systems. Expanding the discriminative boundary between normal and abnormal events to enhance performance is the common goal and challenge of VAD. To address this problem, we propose a Bidirectional Skip-frame Prediction (BiSP) network based on a dual-stream autoencoder, from the perspective of learning the intra-domain disparity between different features. BiSP skips frames in the training phase to perform forward and backward frame prediction respectively, and in the testing phase it uses bidirectional consecutive frames to co-predict the same intermediate frames, thus expanding the degree of disparity between normal and abnormal events. BiSP also introduces variance channel attention and context spatial attention, designed from the perspectives of movement patterns and object scales respectively, to maximize the disparity between normal and abnormal events during feature extraction and delivery across different dimensions. Extensive experiments on four benchmark datasets demonstrate the effectiveness of the proposed BiSP, which substantially outperforms state-of-the-art competing methods.

Low-light Stereo Image Enhancement and De-noising in the Low-frequency Information Enhanced Image Space

Jan 15, 2024

Abstract: Unlike single-image tasks, stereo image enhancement can use information from another view, and its key stage is performing cross-view feature interaction to extract useful information from that view. However, existing methods ignore the complex noise in low-light images and its impact on subsequent feature encoding and interaction. In this paper, a method is proposed to perform enhancement and de-noising simultaneously. First, to reduce unwanted noise interference, a low-frequency information enhanced module (IEM) is proposed to suppress noise and produce a new image space. Additionally, a cross-channel and spatial context information mining module (CSM) is proposed to encode long-range spatial dependencies and to enhance inter-channel feature interaction. Relying on CSM, an encoder-decoder structure is constructed, incorporating cross-view and cross-scale feature interactions to perform enhancement in the new image space. Finally, the network is trained with the constraints of both spatial and frequency domain losses. Extensive experiments on both synthesized and real datasets show that our method obtains better detail recovery and noise removal compared with state-of-the-art methods. In addition, a real stereo image enhancement dataset is captured with a ZED2 stereo camera. The code and dataset are publicly available at: https://www.github.com/noportraits/LFENet.

CGGAN: A Context Guided Generative Adversarial Network For Single Image Dehazing

May 28, 2020

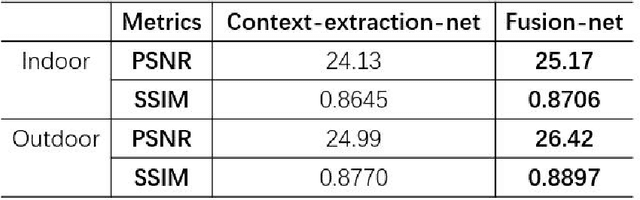

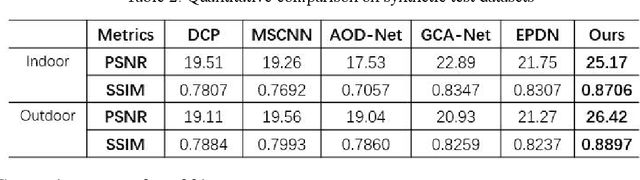

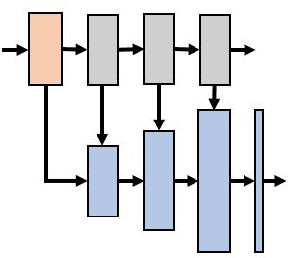

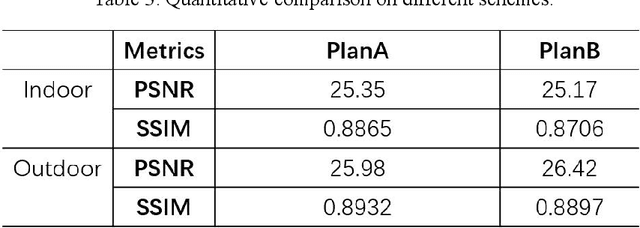

Abstract: Image haze removal is highly desired in computer vision applications. This paper proposes a novel Context Guided Generative Adversarial Network (CGGAN) for single image dehazing, in which a new encoder-decoder is employed as the generator. It consists of a feature-extraction-net, a context-extraction-net, and a fusion-net in sequence. The feature-extraction-net acts as an encoder and extracts haze features. The context-extraction-net is a multi-scale parallel pyramid decoder that extracts the deep features of the encoder and generates a coarse dehazed image. The fusion-net is a decoder that produces the final haze-free image. To obtain better results, multi-scale information obtained during the decoding process of the context-extraction decoder is used to guide the fusion decoder. By introducing an extra coarse decoder to the original encoder-decoder, the CGGAN can make better use of the deep feature information extracted by the encoder. To ensure that CGGAN works effectively across different haze scenarios, different loss functions are employed for the two decoders. Experimental results show the advantages and effectiveness of the proposed CGGAN, with evident improvements over existing state-of-the-art methods.
