Mikhail Belyaev

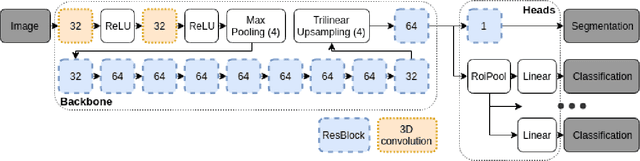

Keypoints Localization for Joint Vertebra Detection and Fracture Severity Quantification

May 25, 2020

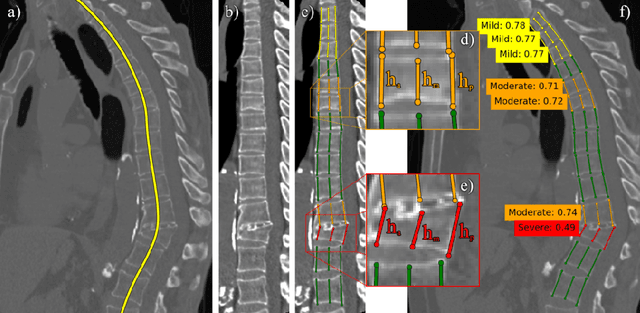

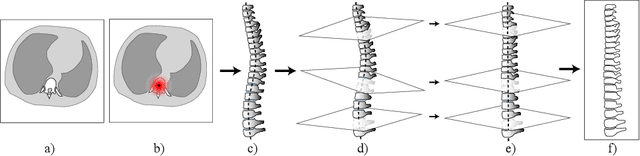

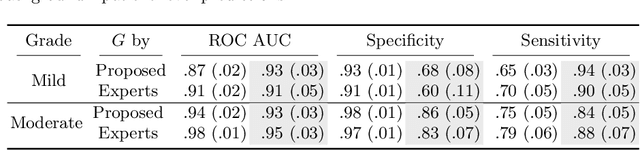

Abstract:Vertebral body compression fractures are reliable early signs of osteoporosis. Though these fractures are visible on Computed Tomography (CT) images, they are frequently missed by radiologists in clinical settings. Prior research on automatic methods of vertebral fracture classification proves its reliable quality; however, existing methods provide hard-to-interpret outputs and sometimes fail to process cases with severe abnormalities such as highly pathological vertebrae or scoliosis. We propose a new two-step algorithm to localize the vertebral column in 3D CT images and then to simultaneously detect individual vertebrae and quantify fractures in 2D. We train neural networks for both steps using a simple 6-keypoints based annotation scheme, which corresponds precisely to current medical recommendation. Our algorithm has no exclusion criteria, processes 3D CT in 2 seconds on a single GPU, and provides an intuitive and verifiable output. The method approaches expert-level performance and demonstrates state-of-the-art results in vertebrae 3D localization (the average error is 1 mm), vertebrae 2D detection (precision is 0.99, recall is 1), and fracture identification (ROC AUC at the patient level is 0.93).

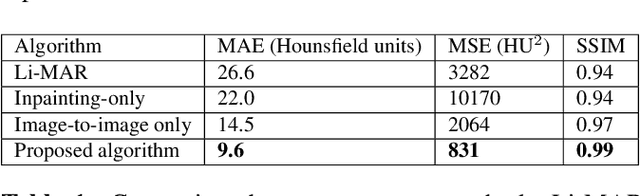

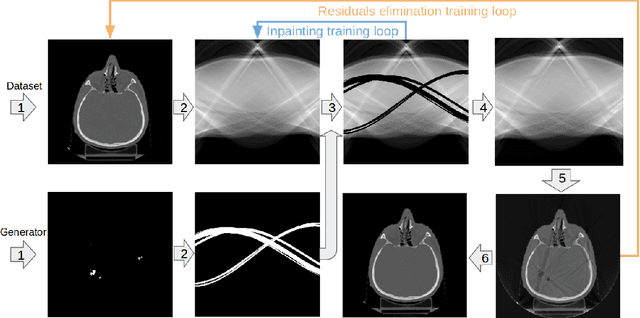

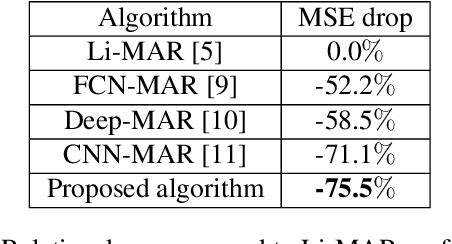

Multi-domain CT metal artifacts reduction using partial convolution based inpainting

Nov 13, 2019

Abstract:Recent CT Metal Artifacts Reduction (MAR) methods are often based on image-to-image convolutional neural networks for adjustment of corrupted sinograms or images themselves. In this paper, we are exploring the capabilities of a multi-domain method which consists of both sinogram correction (projection domain step) and restored image correction (image-domain step). Moreover, we propose a formulation of the first step problem as sinogram inpainting which allows us to use methods of this specific field such as partial convolutions. The proposed method allows to achieve state-of-the-art (-75% MSE) improvement in comparison with a classic benchmark - Li-MAR.

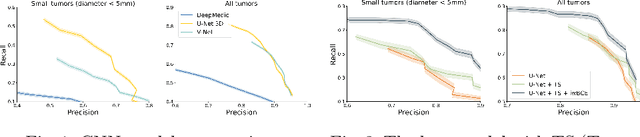

Deep Learning for Brain Tumor Segmentation in Radiosurgery: Prospective Clinical Evaluation

Sep 06, 2019

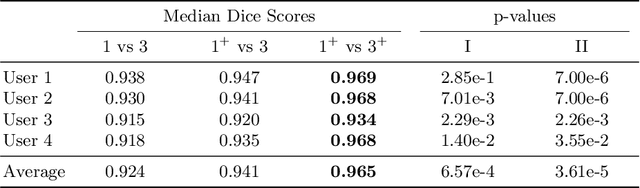

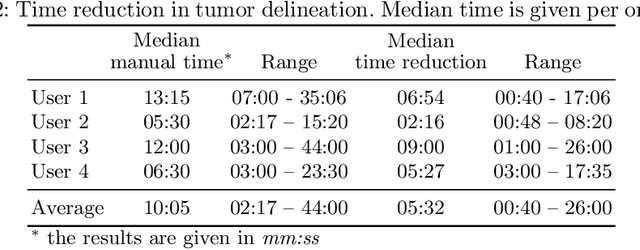

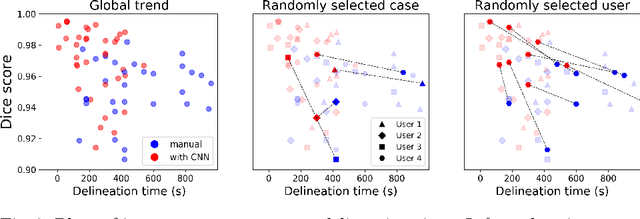

Abstract:Stereotactic radiosurgery is a minimally-invasive treatment option for a large number of patients with intracranial tumors. As part of the therapy treatment, accurate delineation of brain tumors is of great importance. However, slice-by-slice manual segmentation on T1c MRI could be time-consuming (especially for multiple metastases) and subjective (especially for meningiomas). In our work, we compared several deep convolutional networks architectures and training procedures and evaluated the best model in a radiation therapy department for three types of brain tumors: meningiomas, schwannomas and multiple brain metastases. The developed semiautomatic segmentation system accelerates the contouring process by 2.2 times on average and increases inter-rater agreement from 92% to 96.5%.

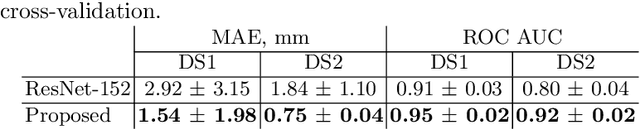

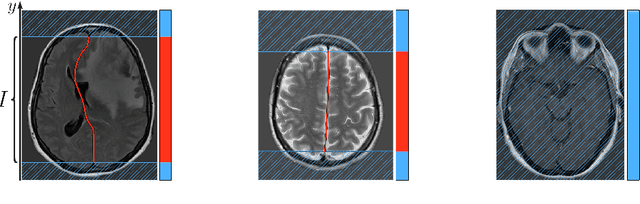

Incorporating Task-Specific Structural Knowledge into CNNs for Brain Midline Shift Detection

Aug 13, 2019

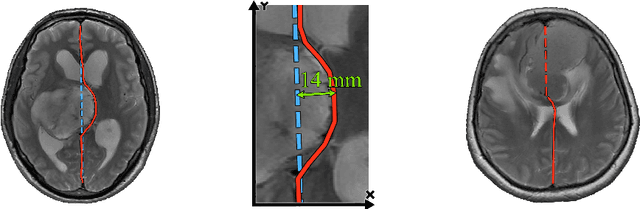

Abstract:Midline shift (MLS) is a well-established factor used for outcome prediction in traumatic brain injury, stroke and brain tumors. The importance of automatic estimation of MLS was recently highlighted by ACR Data Science Institute. In this paper we introduce a novel deep learning based approach for the problem of MLS detection, which exploits task-specific structural knowledge. We evaluate our method on a large dataset containing heterogeneous images with significant MLS and show that its mean error approaches the inter-expert variability. Finally, we show the robustness of our approach by validating it on an external dataset, acquired during routine clinical practice.

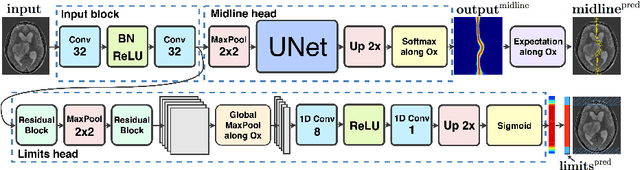

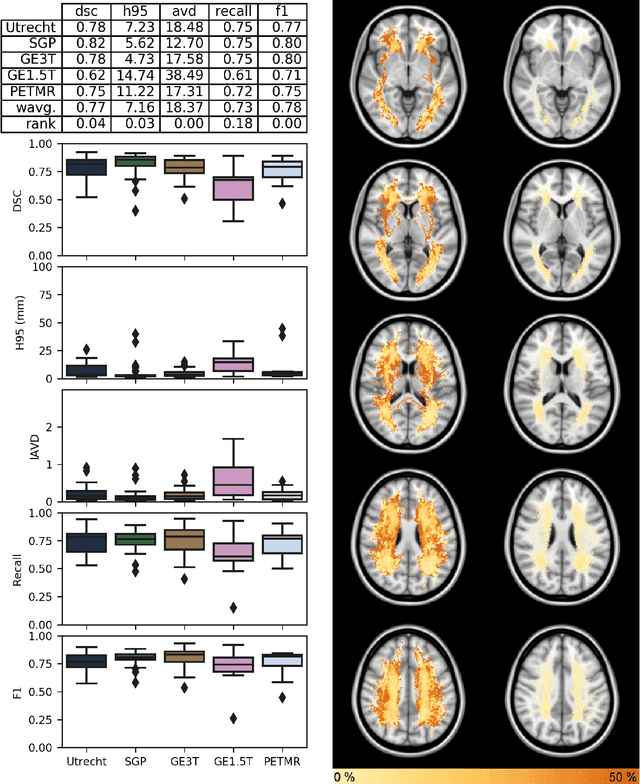

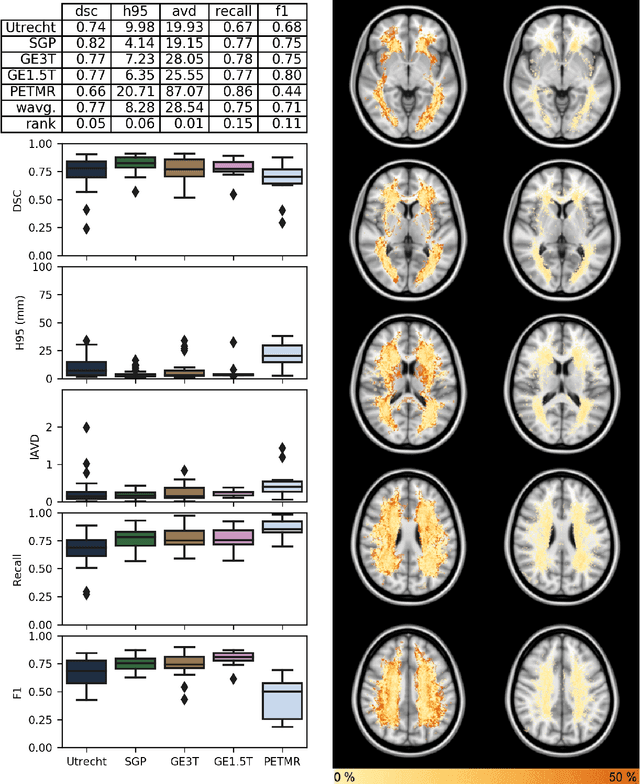

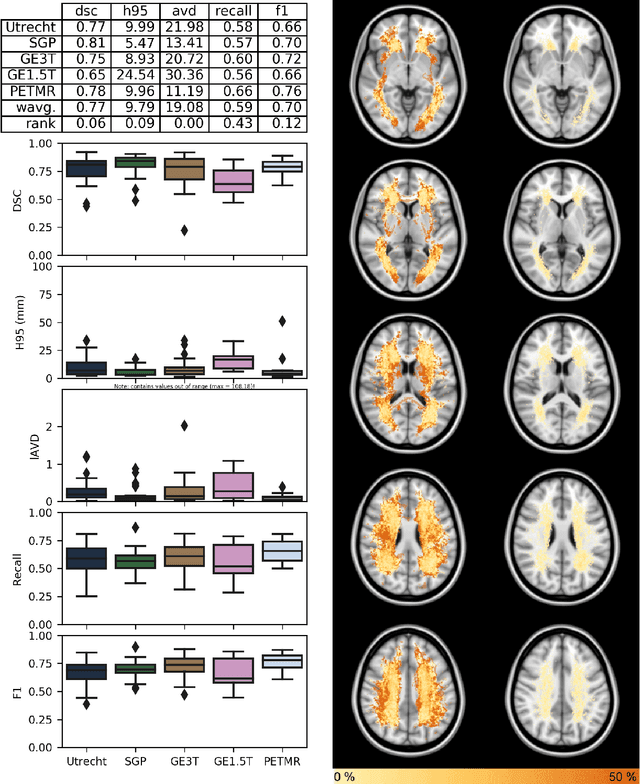

Standardized Assessment of Automatic Segmentation of White Matter Hyperintensities and Results of the WMH Segmentation Challenge

Apr 01, 2019

Abstract:Quantification of cerebral white matter hyperintensities (WMH) of presumed vascular origin is of key importance in many neurological research studies. Currently, measurements are often still obtained from manual segmentations on brain MR images, which is a laborious procedure. Automatic WMH segmentation methods exist, but a standardized comparison of the performance of such methods is lacking. We organized a scientific challenge, in which developers could evaluate their method on a standardized multi-center/-scanner image dataset, giving an objective comparison: the WMH Segmentation Challenge (https://wmh.isi.uu.nl/). Sixty T1+FLAIR images from three MR scanners were released with manual WMH segmentations for training. A test set of 110 images from five MR scanners was used for evaluation. Segmentation methods had to be containerized and submitted to the challenge organizers. Five evaluation metrics were used to rank the methods: (1) Dice similarity coefficient, (2) modified Hausdorff distance (95th percentile), (3) absolute log-transformed volume difference, (4) sensitivity for detecting individual lesions, and (5) F1-score for individual lesions. Additionally, methods were ranked on their inter-scanner robustness. Twenty participants submitted their method for evaluation. This paper provides a detailed analysis of the results. In brief, there is a cluster of four methods that rank significantly better than the other methods, with one clear winner. The inter-scanner robustness ranking shows that not all methods generalize to unseen scanners. The challenge remains open for future submissions and provides a public platform for method evaluation.

Brain Tumor Image Retrieval via Multitask Learning

Oct 22, 2018

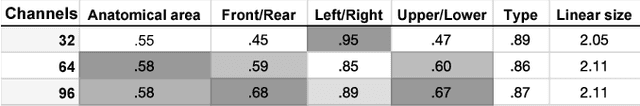

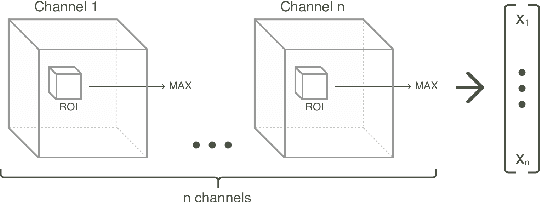

Abstract:Classification-based image retrieval systems are built by training convolutional neural networks (CNNs) on a relevant classification problem and using the distance in the resulting feature space as a similarity metric. However, in practical applications, it is often desirable to have representations which take into account several aspects of the data (e.g., brain tumor type and its localization). In our work, we extend the classification-based approach with multitask learning: we train a CNN on brain MRI scans with heterogeneous labels and implement a corresponding tumor image retrieval system. We validate our approach on brain tumor data which contains information about tumor types, shapes and localization. We show that our method allows us to build representations that contain more relevant information about tumors than single-task classification-based approaches.

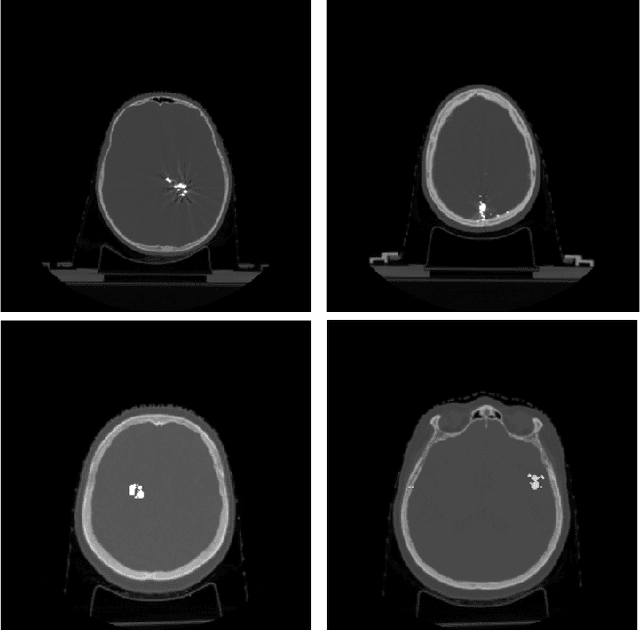

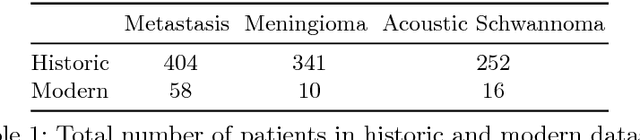

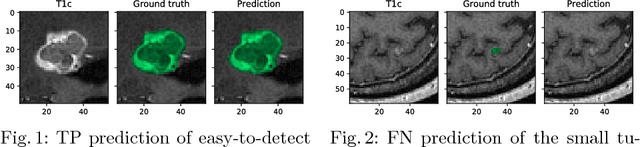

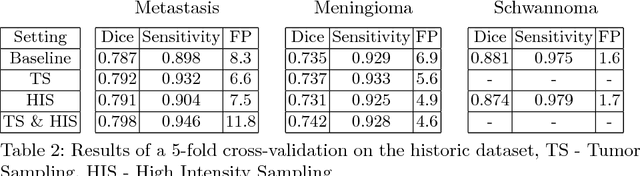

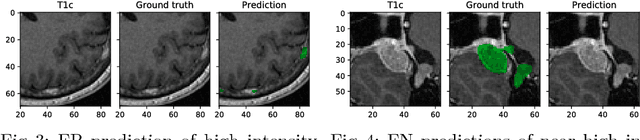

Tumor Delineation For Brain Radiosurgery by a ConvNet and Non-Uniform Patch Generation

Aug 01, 2018

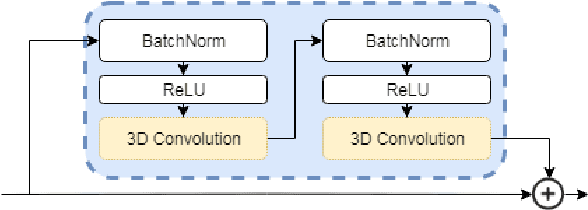

Abstract:Deep learning methods are actively used for brain lesion segmentation. One of the most popular models is DeepMedic, which was developed for segmentation of relatively large lesions like glioma and ischemic stroke. In our work, we consider segmentation of brain tumors appropriate to stereotactic radiosurgery which limits typical lesion sizes. These differences in target volumes lead to a large number of false negatives (especially for small lesions) as well as to an increased number of false positives for DeepMedic. We propose a new patch-sampling procedure to increase network performance for small lesions. We used a 6-year dataset from a stereotactic radiosurgery center. To evaluate our approach, we conducted experiments with the three most frequent brain tumors: metastasis, meningioma, schwannoma. In addition to cross-validation, we estimated quality on a hold-out test set which was collected several years later than the train one. The experimental results show solid improvements in both cases.

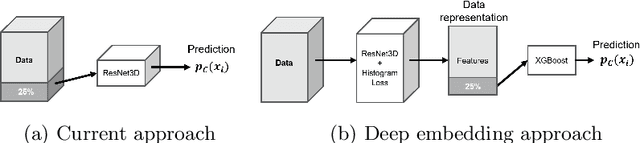

Predicting Conversion of Mild Cognitive Impairments to Alzheimer's Disease and Exploring Impact of Neuroimaging

Jul 30, 2018

Abstract:Nowadays, a lot of scientific efforts are concentrated on the diagnosis of Alzheimer's Disease (AD) applying deep learning methods to neuroimaging data. Even for 2017, there were published more than a hundred papers dedicated to AD diagnosis, whereas only a few works considered a problem of mild cognitive impairments (MCI) conversion to the AD. However, the conversion prediction is an important problem since approximately 15% of patients with MCI converges to the AD every year. In the current work, we are focusing on the conversion prediction using brain Magnetic Resonance Imaging and clinical data, such as demographics, cognitive assessments, genetic, and biochemical markers. First of all, we applied state-of-the-art deep learning algorithms on the neuroimaging data and compared these results with two machine learning algorithms that we fit using the clinical data. As a result, the models trained on the clinical data outperform the deep learning algorithms applied to the MR images. To explore the impact of neuroimaging further, we trained a deep feed-forward embedding using similarity learning with Histogram loss on all available MRIs and obtained 64-dimensional vector representation of neuroimaging data. The use of learned representation from the deep embedding allowed to increase the quality of prediction based on the neuroimaging. Finally, the current results on this dataset show that the neuroimaging does affect conversion prediction, however, cannot noticeably increase the quality of the prediction. The best results of predicting MCI-to-AD conversion are provided by XGBoost algorithm trained on the clinical and embedding data. The resulting accuracy is 0.76 +- 0.01 and the area under the ROC curve - 0.86 +- 0.01.

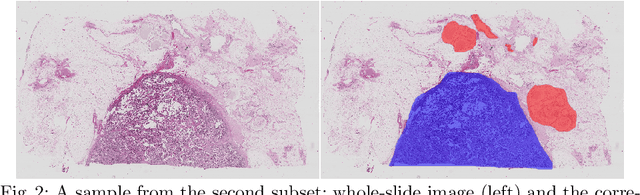

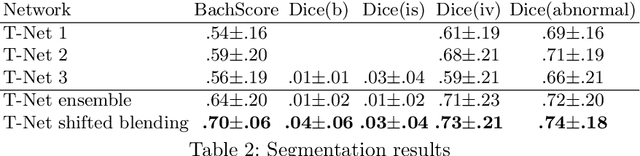

Ensembling Neural Networks for Digital Pathology Images Classification and Segmentation

Feb 03, 2018

Abstract:In the last years, neural networks have proven to be a powerful framework for various image analysis problems. However, some application domains have specific limitations. Notably, digital pathology is an example of such fields due to tremendous image sizes and quite limited number of training examples available. In this paper, we adopt state-of-the-art convolutional neural networks (CNN) architectures for digital pathology images analysis. We propose to classify image patches to increase effective sample size and then to apply an ensembling technique to build prediction for the original images. To validate the developed approaches, we conducted experiments with \textit{Breast Cancer Histology Challenge} dataset and obtained 90\% accuracy for the 4-class tissue classification task.

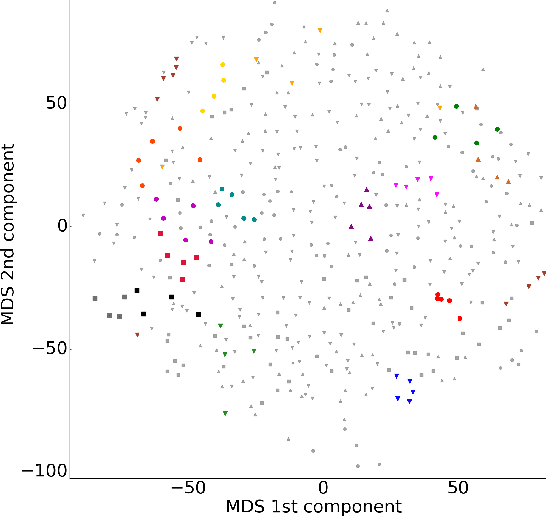

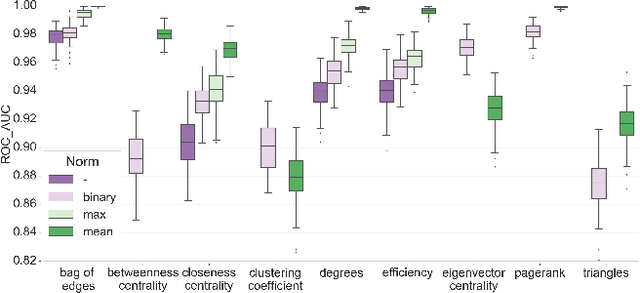

Structural Connectome Validation Using Pairwise Classification

Jan 30, 2017

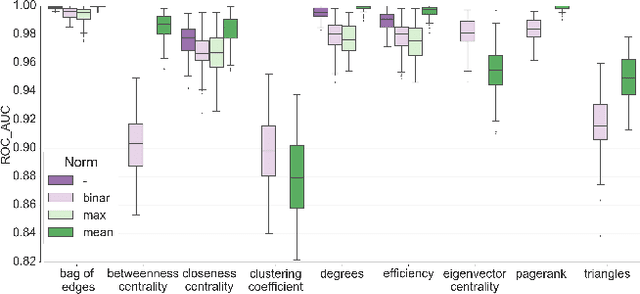

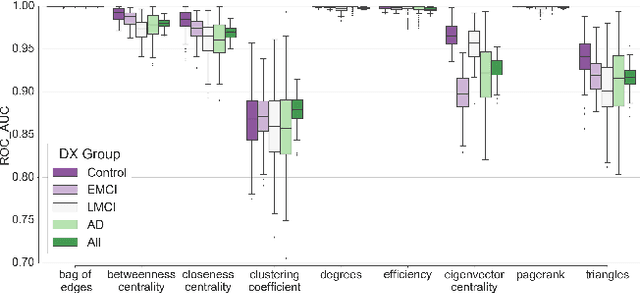

Abstract:In this work, we study the extent to which structural connectomes and topological derivative measures are unique to individual changes within human brains. To do so, we classify structural connectome pairs from two large longitudinal datasets as either belonging to the same individual or not. Our data is comprised of 227 individuals from the Alzheimer's Disease Neuroimaging Initiative (ADNI) and 226 from the Parkinson's Progression Markers Initiative (PPMI). We achieve 0.99 area under the ROC curve score for features which represent either weights or network structure of the connectomes (node degrees, PageRank and local efficiency). Our approach may be useful for eliminating noisy features as a preprocessing step in brain aging studies and early diagnosis classification problems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge