Mengjuan Liu

Promoting Open-domain Dialogue Generation through Learning Pattern Information between Contexts and Responses

Sep 06, 2023

Abstract: Recently, using deep neural networks to build open-domain dialogue models has become a hot topic. However, the responses generated by these models suffer from many problems: they are often not contextualized, and the models tend to generate generic responses that lack informative content, seriously damaging the user experience. Therefore, many studies try to introduce more information into dialogue models to make the generated responses more vivid and informative. Unlike them, this paper improves the quality of generated responses by learning the implicit pattern information between contexts and responses in the training samples. In this paper, we first build an open-domain dialogue model based on a pre-trained language model (i.e., GPT-2). Then, we propose an improved scheduled sampling method for pre-trained models, by which the ground-truth responses can guide response generation in the training phase while avoiding the exposure bias problem. More importantly, we design a response-aware mechanism for mining the implicit pattern information between contexts and responses so that the generated replies are more diverse and closer to human replies. Finally, we evaluate the proposed model (RAD) on the Persona-Chat and DailyDialog datasets, and the experimental results show that our model outperforms the baselines on most automatic and manual metrics.
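The general idea of scheduled sampling can be illustrated with a minimal sketch. It assumes a common two-pass scheme for Transformer decoders: a first teacher-forced pass produces predicted tokens, and the second pass is fed a mix of ground-truth and predicted tokens, with the probability of keeping the ground truth decaying over training. The inverse-sigmoid schedule comes from the original scheduled sampling work; the function names, the decay constant `k`, and the token IDs are hypothetical, and this is not the paper's improved method.

```python
import math
import random
from typing import List, Sequence


def inverse_sigmoid_decay(step: int, k: float = 1000.0) -> float:
    """Probability of feeding the ground-truth token at a given training step.

    Inverse-sigmoid schedule: starts close to 1 and decays towards 0 as
    training proceeds; a larger k slows the decay (k is a hypothetical default).
    """
    x = step / k
    if x > 700:  # math.exp would overflow; the probability is effectively 0 here
        return 0.0
    return k / (k + math.exp(x))


def mix_tokens(
    gold: Sequence[int],
    predicted: Sequence[int],
    p_gold: float,
    rng: random.Random,
) -> List[int]:
    """Build the decoder input for the second pass.

    Each position keeps the ground-truth token with probability p_gold and
    otherwise uses the token predicted in the first (teacher-forced) pass.
    """
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    return [g if rng.random() < p_gold else p for g, p in zip(gold, predicted)]


if __name__ == "__main__":
    rng = random.Random(0)
    gold = [10, 11, 12, 13]        # hypothetical response token IDs
    first_pass = [10, 42, 12, 99]  # hypothetical argmax predictions from pass one
    for step in (0, 5000, 10000):
        p = inverse_sigmoid_decay(step)
        print(step, round(p, 3), mix_tokens(gold, first_pass, p, rng))
```

Early in training the second pass sees almost only ground-truth tokens; later it increasingly sees the model's own predictions, which is how scheduled sampling narrows the gap between training and inference (exposure bias).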

From CNN to Transformer: A Review of Medical Image Segmentation Models

Aug 10, 2023

Abstract: Medical image segmentation is an important step in medical image analysis and a crucial prerequisite for efficient disease diagnosis and treatment. Using deep learning for image segmentation has become a prevalent trend, and the most widely adopted approaches are currently U-Net and its variants. Additionally, following the remarkable success of pre-trained models in natural language processing, transformer-based models such as TransUNet have achieved strong performance on multiple medical image segmentation datasets. In this paper, we survey four of the most representative medical image segmentation models of recent years. We theoretically analyze the characteristics of these models and quantitatively evaluate their performance on two benchmark datasets (i.e., Tuberculosis Chest X-rays and ovarian tumors). Finally, we discuss the main challenges and future trends in medical image segmentation. Our work can help researchers in related fields quickly build medical segmentation models tailored to specific regions.
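The abstract does not name the metrics used in the quantitative comparison. Dice and IoU (Jaccard) are the overlap scores most commonly reported for segmentation, so the sketch below assumes them. It computes both for binary masks stored as nested lists; the function name and the convention of scoring two empty masks as 1.0 are hypothetical choices.

```python
from typing import List, Tuple

Mask = List[List[int]]  # binary mask: 1 = foreground pixel, 0 = background


def dice_and_iou(pred: Mask, target: Mask) -> Tuple[float, float]:
    """Return (Dice, IoU) between a predicted and a ground-truth binary mask."""
    if len(pred) != len(target) or any(len(p) != len(t) for p, t in zip(pred, target)):
        raise ValueError("masks must have the same shape")
    inter = pred_area = target_area = 0
    for prow, trow in zip(pred, target):
        for p, t in zip(prow, trow):
            inter += int(p == 1 and t == 1)
            pred_area += int(p == 1)
            target_area += int(t == 1)
    if pred_area + target_area == 0:
        # Both masks empty: treated as a perfect match (a convention, not a standard).
        return 1.0, 1.0
    dice = 2 * inter / (pred_area + target_area)
    iou = inter / (pred_area + target_area - inter)  # union = |P| + |T| - |P and T|
    return dice, iou


if __name__ == "__main__":
    pred = [[1, 1], [0, 0]]
    target = [[1, 0], [0, 0]]
    print(dice_and_iou(pred, target))
```

Dice weights the overlap twice relative to the mask sizes, so it is always at least as large as IoU for the same pair of masks.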

Multi-Dimensional Self Attention based Approach for Remaining Useful Life Estimation

Dec 12, 2022

Abstract: Remaining Useful Life (RUL) estimation plays a critical role in Prognostics and Health Management (PHM). Traditional machine health maintenance systems are often costly, require sufficient prior expertise, and are difficult to adapt to highly complex and changing industrial scenarios. With the widespread deployment of sensors on industrial equipment, building the Industrial Internet of Things (IIoT) to interconnect these devices has become an inexorable trend in the development of digital factories. By feeding the device's real-time operational data collected through the IIoT into an RUL prediction algorithm, the PHM system can obtain an RUL estimate and take proactive maintenance measures for the device, thus reducing maintenance costs and the number of failures during operation. This paper studies RUL prediction models for multi-sensor devices in the IIoT scenario. We investigate the mainstream RUL prediction models and summarize the basic steps of RUL prediction modeling in this scenario. On this basis, we propose a data-driven approach for RUL estimation. It employs a multi-head attention mechanism to fuse the multi-dimensional time-series data output by multiple sensors, in which attention on features captures the interactions between features and attention on sequences learns the weights of time steps. Then, a Long Short-Term Memory (LSTM) network is applied to learn the features of the time series. We evaluate the proposed model on two benchmark datasets (C-MAPSS and PHM08), and the results demonstrate that it outperforms state-of-the-art models. Moreover, thanks to the interpretability of the multi-head attention mechanism, the proposed model can provide a preliminary explanation of engine degradation. Therefore, this approach is promising for predictive maintenance in IIoT scenarios.
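The two attention directions (over features and over time steps) can be made concrete with a minimal single-head sketch in plain Python. It assumes a sensor window of T time steps by F features, uses the window itself as queries, keys, and values (no learned projections, no multiple heads), and concatenates the time-attended and feature-attended views per time step as one hypothetical fusion choice. The downstream LSTM is omitted, and this is not the paper's architecture.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def softmax(xs: List[float]) -> List[float]:
    mx = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - mx) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix) -> Tuple[Matrix, Matrix]:
    """Scaled dot-product self-attention over the rows of x.

    Rows act as queries, keys and values at once. Returns (output, weights),
    where weights[i][j] is how much row i attends to row j.
    """
    d = len(x[0])
    weights = [
        softmax([sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x])
        for q in x
    ]
    out = [[sum(w * v[j] for w, v in zip(w_row, x)) for j in range(d)] for w_row in weights]
    return out, weights


def time_and_feature_attention(window: Matrix) -> Tuple[Matrix, Matrix, Matrix]:
    """window has shape T x F (time steps x sensor features).

    Returns (fused T x 2F, time weights T x T, feature weights F x F).
    """
    time_out, time_w = self_attention(window)               # rows = time steps
    feat_out_t, feat_w = self_attention(transpose(window))  # rows = features
    feat_out = transpose(feat_out_t)                        # back to T x F
    fused = [a + b for a, b in zip(time_out, feat_out)]     # concatenate per time step
    return fused, time_w, feat_w


if __name__ == "__main__":
    # Hypothetical normalized readings: T = 4 time steps, F = 3 sensors.
    window = [[0.1, 0.5, 0.9], [0.2, 0.4, 0.8], [0.3, 0.6, 0.7], [0.4, 0.2, 0.6]]
    fused, tw, fw = time_and_feature_attention(window)
    print(len(fused), len(fused[0]), len(tw), len(fw))
```

The feature-weight matrix (F x F) is the kind of quantity that can be inspected to see which sensors interact, which is the sense in which attention offers a degree of interpretability.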

Real-time Bidding Strategy in Display Advertising: An Empirical Analysis

Nov 30, 2022

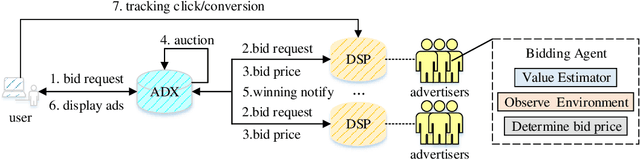

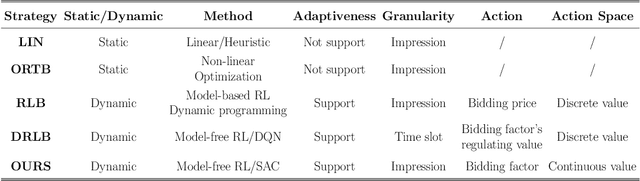

Abstract: Bidding strategies that help advertisers determine bid prices are receiving increasing attention as more and more ad impressions are sold through real-time bidding systems. This paper first describes the problem and challenges of optimizing bidding strategies for individual advertisers in real-time bidding display advertising. Then, several representative bidding strategies are introduced, with emphasis on the research advances and challenges of reinforcement learning-based bidding strategies. Further, we quantitatively evaluate the performance of several representative bidding strategies on the iPinYou dataset. Specifically, we examine the effects of the state, action, and reward function on the performance of reinforcement learning-based bidding strategies. Finally, we summarize the general steps for optimizing bidding strategies using reinforcement learning algorithms and present our suggestions.
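The state, action, and reward of an RL bidding agent can be made concrete with a sketch of an offline replay step, a setup commonly used with logged RTB data such as iPinYou. It assumes each logged impression carries the winning market price and a click label, that the auction is second-price (the winner pays the market price), that the state is the remaining budget and number of impressions, and that the reward is the click obtained. All class and field names are hypothetical, and other state and reward designs are possible.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Impression:
    market_price: float  # highest competing bid recorded in the log (assumed field)
    click: int           # 1 if the logged impression was clicked, else 0


@dataclass(frozen=True)
class BidState:
    remaining_budget: float
    remaining_impressions: int


def replay_step(state: BidState, imp: Impression, bid: float) -> Tuple[BidState, float, bool]:
    """Replay one logged impression under a second-price rule.

    The advertiser wins if its bid exceeds the market price and it can still
    afford to pay that price. Returns (next_state, reward, done).
    """
    won = bid > imp.market_price and imp.market_price <= state.remaining_budget
    cost = imp.market_price if won else 0.0
    reward = float(imp.click) if won else 0.0  # reward = clicks won (one possible design)
    nxt = BidState(state.remaining_budget - cost, state.remaining_impressions - 1)
    done = nxt.remaining_impressions <= 0 or nxt.remaining_budget <= 0
    return nxt, reward, done


if __name__ == "__main__":
    state = BidState(remaining_budget=10.0, remaining_impressions=2)
    log = [Impression(3.0, 1), Impression(8.0, 0)]
    for imp, bid in zip(log, [5.0, 5.0]):  # hypothetical bids from some policy
        state, reward, done = replay_step(state, imp, bid)
        print(state, reward, done)
```

Swapping the reward line (for example, to penalize spend) or adding features to `BidState` is where different state and reward designs would plug in.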

Bid Optimization using Maximum Entropy Reinforcement Learning

Oct 11, 2021

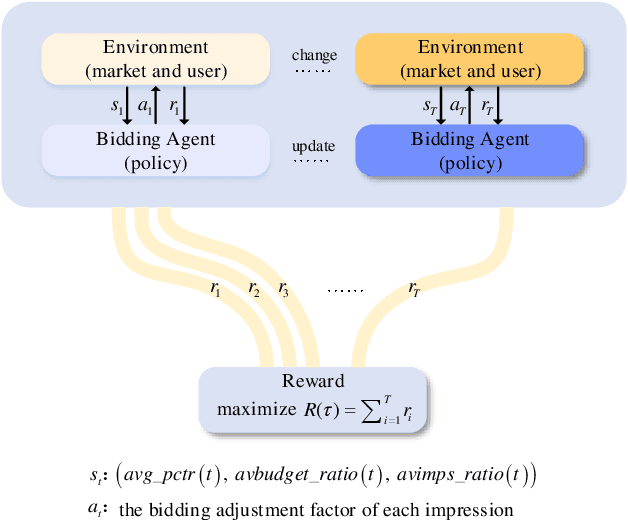

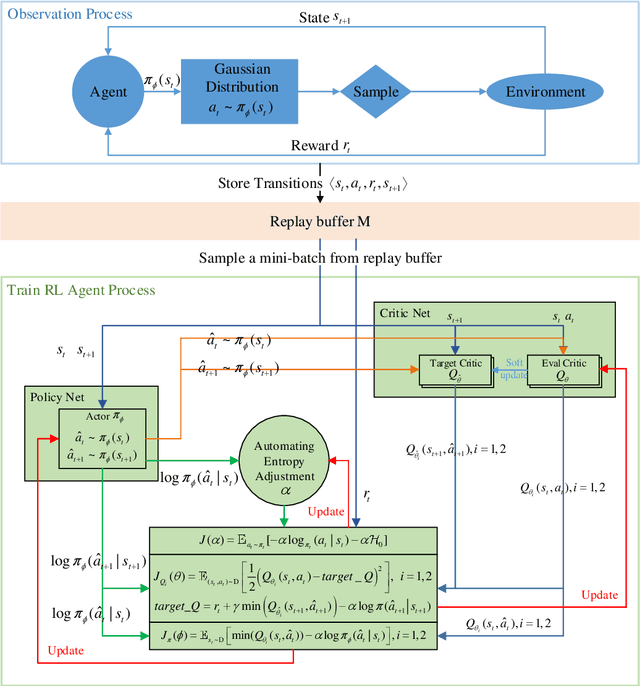

Abstract: Real-time bidding (RTB) has become a critical mechanism in online advertising. In RTB, an advertiser can bid on ad impressions to display its advertisements, and it determines each impression's bid price according to its bidding strategy. Therefore, a good bidding strategy can help advertisers improve cost efficiency. This paper focuses on optimizing a single advertiser's bidding strategy in RTB using reinforcement learning (RL). Unfortunately, optimizing the bidding strategy through RL at the impression level is challenging due to the highly dynamic nature of the RTB environment. To avoid optimizing every impression's bid price directly, we first use a widely accepted linear bidding function to compute each impression's base price and then adjust it with a mutable adjustment factor derived from the RTB auction environment. Specifically, we use a maximum entropy RL algorithm (Soft Actor-Critic) to optimize the adjustment-factor generation policy at the impression level. Finally, an empirical study on a public dataset demonstrates that the proposed bidding strategy outperforms the baselines.
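The bid computation described in this abstract can be sketched as follows. The sketch assumes the common form of the linear bidding function, in which the bid scales a base bid by the ratio of predicted CTR to average CTR, and assumes the adjustment factor is applied multiplicatively. Because Soft Actor-Critic policies typically squash actions into [-1, 1] with tanh, it also maps that range onto a factor interval. The bounds `f_min` and `f_max` and all function names are hypothetical, and the SAC training loop itself is omitted.

```python
def linear_bid(base_bid: float, pctr: float, avg_ctr: float) -> float:
    """Linear bidding function: bid proportional to predicted CTR relative to average CTR."""
    if avg_ctr <= 0:
        raise ValueError("avg_ctr must be positive")
    return base_bid * pctr / avg_ctr


def action_to_factor(action: float, f_min: float = 0.5, f_max: float = 2.0) -> float:
    """Map a tanh-squashed policy action in [-1, 1] linearly onto [f_min, f_max]."""
    a = max(-1.0, min(1.0, action))  # clip in case the action falls slightly outside
    return f_min + (a + 1.0) * 0.5 * (f_max - f_min)


def adjusted_bid(base_bid: float, pctr: float, avg_ctr: float, action: float) -> float:
    """Final bid: the linear base price scaled by the policy's adjustment factor."""
    return linear_bid(base_bid, pctr, avg_ctr) * action_to_factor(action)


if __name__ == "__main__":
    # Hypothetical numbers: base bid 50, predicted CTR 0.002, campaign average CTR 0.001.
    for action in (-1.0, 0.0, 1.0):
        print(action, adjusted_bid(50.0, 0.002, 0.001, action))
```

Keeping the policy's output to a bounded scaling factor, rather than a raw price, is what lets the agent act on every impression without having to learn absolute bid levels from scratch.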
