Matthew Tong

Coil Reweighting to Suppress Motion Artifacts in Real-Time Exercise Cine Imaging

May 26, 2024

Abstract: Background: Accelerated real-time cine (RT-Cine) imaging enables cardiac function assessment without the need for breath-holding. However, when performed during in-magnet exercise, RT-Cine images may exhibit significant motion artifacts. Methods: By projecting the time-averaged images onto the subspace spanned by the coil sensitivity maps, we propose a coil reweighting (CR) method to automatically suppress a subset of receive coils that introduces a high level of artifacts in the reconstructed image. RT-Cine data collected at rest and during exercise from ten healthy volunteers and six patients were used to assess the performance of the proposed method. One short-axis and one two-chamber RT-Cine series reconstructed with and without CR from each subject were visually scored by two cardiologists in terms of the level of artifacts on a scale of 1 (worst) to 5 (best). Results: For healthy volunteers, applying CR to RT-Cine images collected at rest did not significantly change the image quality score (p=1). In contrast, for RT-Cine images collected during exercise, CR significantly improved the score from 3.9 to 4.68 (p<0.001). Similarly, in patients, CR did not significantly change the score for images collected at rest (p=0.031) but markedly improved the score from 3.15 to 4.42 (p<0.001) for images taken during exercise. Despite lower image quality scores in the patient cohort compared to healthy subjects, likely due to larger body habitus and the difficulty of limiting body motion during exercise, CR effectively suppressed motion artifacts, with all image series from the patient cohort receiving a score of four or higher. Conclusion: Using data from healthy subjects and patients, we demonstrate that motion artifacts in reconstructed RT-Cine images can be effectively suppressed with the proposed CR method.

Accelerated Real-time Cine and Flow under In-magnet Staged Exercise

Feb 27, 2024

Abstract: Background: Cardiovascular magnetic resonance imaging (CMR) is a well-established imaging tool for diagnosing and managing cardiac conditions. The integration of exercise stress with CMR (ExCMR) can enhance its diagnostic capacity. Despite recent advances in CMR technology, ExCMR remains technically challenging due to motion artifacts and limited spatial and temporal resolution. Methods: This study investigates the feasibility of biventricular functional and hemodynamic assessment using real-time (RT) ExCMR during a staged exercise protocol in 26 healthy volunteers. We introduce a coil reweighting technique to minimize motion artifacts. In addition, we identify and analyze heartbeats from the end-expiratory phase to enhance the repeatability of cardiac function quantification. To demonstrate clinical feasibility, qualitative results from five patients are also presented. Results: Our findings indicate a consistent decrease in end-systolic volume (ESV) and stable end-diastolic volume (EDV) across exercise intensities, leading to increased stroke volume (SV) and ejection fraction (EF). Coil reweighting effectively reduces motion artifacts, improving image quality in both healthy volunteers and patients. The repeatability of cardiac function parameters, demonstrated by scan-rescan tests in nine volunteers, improves with the selection of end-expiratory beats. Conclusions: The study demonstrates that RT ExCMR with in-magnet exercise is a feasible and effective method for dynamic cardiac function monitoring during exercise. The proposed coil reweighting technique and selection of end-expiratory beats significantly enhance image quality and repeatability.
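
The reported trend (falling ESV with stable EDV, hence rising SV and EF) follows from the standard definitions of stroke volume and ejection fraction. The abstract does not state these, so they are included here as a reminder:

$$
\mathrm{SV} = \mathrm{EDV} - \mathrm{ESV}, \qquad \mathrm{EF} = \frac{\mathrm{SV}}{\mathrm{EDV}} = 1 - \frac{\mathrm{ESV}}{\mathrm{EDV}}
$$

As a purely hypothetical illustration (these values are not from the study), holding EDV at 150 mL while ESV falls from 60 mL to 45 mL raises SV from 90 mL to 105 mL and EF from 60% to 70%.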

Cardiac and respiratory motion extraction for MRI using Pilot Tone - a patient study

Jan 31, 2022

Abstract: Background: The Pilot Tone (PT) technology allows contactless monitoring of physiological motion during the MRI scan. Several studies have shown that both respiratory and cardiac motion can be extracted from the PT signal successfully. However, most of these studies were performed in healthy volunteers. In this study, we seek to evaluate the accuracy and reliability of the cardiac and respiratory signals extracted from PT in patients clinically referred for cardiovascular MRI (CMR). Methods: Twenty-three patients were included in this study, each scanned under free-breathing conditions using a balanced steady-state free-precession real-time (RT) cine sequence on a 1.5T scanner. The PT signal was generated by a built-in PT transmitter integrated within the body array coil. For comparison, ECG and BioMatrix (BM) respiratory sensor signals were also synchronously recorded. To assess the performances of PT, ECG, and BM, cardiac and respiratory signals extracted from the RT cine images were used as the ground truth. Results: The respiratory motion extracted from PT correlated positively with the image-derived respiratory signal in all cases and showed a stronger correlation (absolute coefficient: 0.95±0.09) than BM (0.72±0.24). For the cardiac signal, the precision of PT-based triggers (standard deviation of PT trigger locations relative to ECG triggers) ranged from 6.6 to 81.2 ms (median 19.5 ms). Overall, the performance of PT-based trigger extraction was comparable to that of ECG. Conclusions: This study demonstrates the potential of PT to monitor both respiratory and cardiac motion in patients clinically referred for CMR.
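
The trigger precision metric quoted above (standard deviation of PT trigger locations relative to ECG triggers) can be illustrated with a minimal Python sketch. This is not the authors' implementation: the function name `trigger_precision_ms`, the nearest-trigger pairing rule, the use of the sample standard deviation, and the example trigger times are all assumptions made for illustration.

```python
from statistics import stdev


def trigger_precision_ms(pt_triggers_ms, ecg_triggers_ms):
    """Spread (in ms) of PT trigger delays relative to ECG triggers."""
    delays = []
    for t in pt_triggers_ms:
        # Assumption: pair each PT trigger with the closest ECG trigger in time.
        nearest = min(ecg_triggers_ms, key=lambda e: abs(t - e))
        delays.append(t - nearest)
    # Assumption: sample standard deviation (n - 1 in the denominator).
    return stdev(delays)


# Hypothetical trigger times in ms, not data from the study.
ecg = [0.0, 800.0, 1600.0, 2400.0]
pt = [110.0, 930.0, 1700.0, 2520.0]
precision = trigger_precision_ms(pt, ecg)
print(f"PT trigger precision: {precision:.1f} ms")
```

A constant offset between PT and ECG triggers does not affect this value; only beat-to-beat variation in the delay does, which is why the metric is described as precision rather than accuracy.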

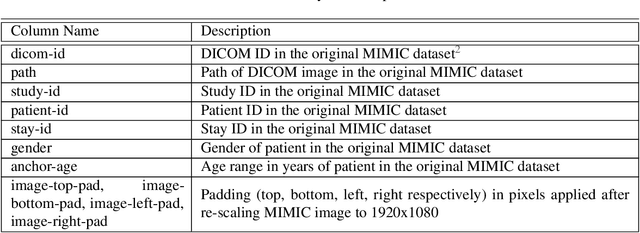

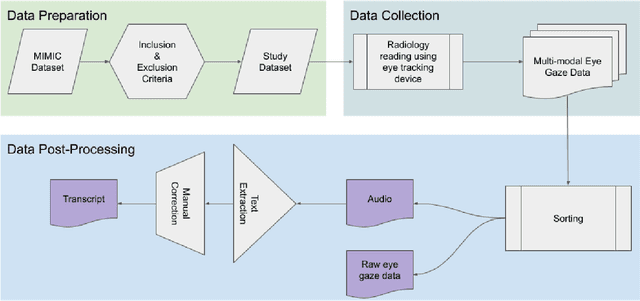

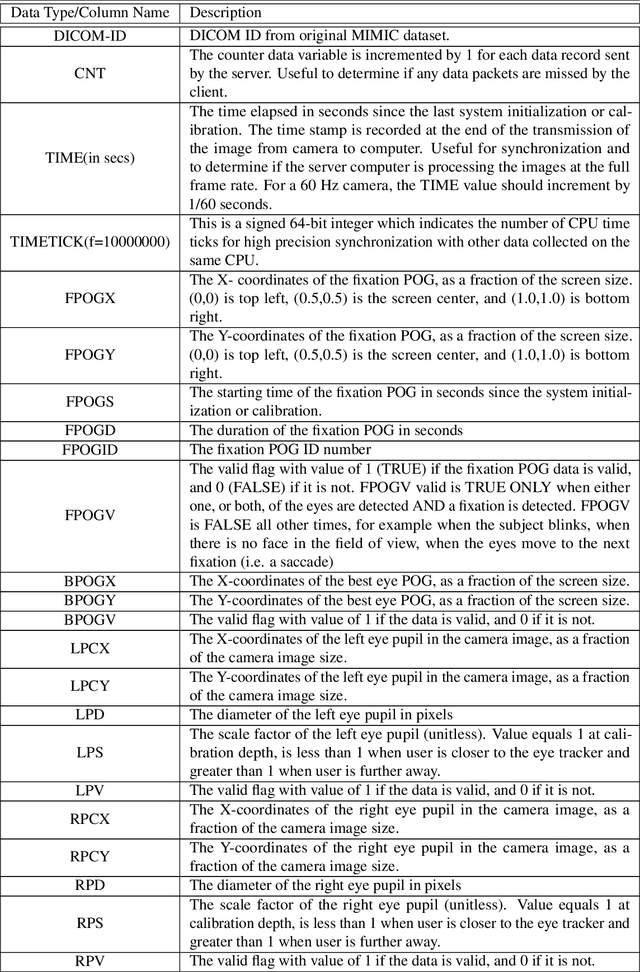

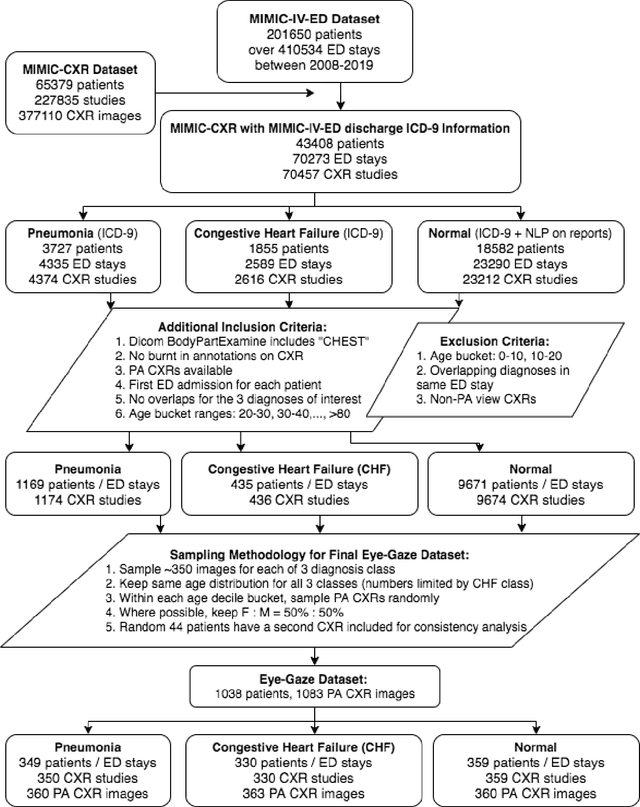

Creation and Validation of a Chest X-Ray Dataset with Eye-tracking and Report Dictation for AI Development

Oct 08, 2020

Abstract: We developed a rich dataset of Chest X-Ray (CXR) images to assist investigators in artificial intelligence. The data were collected using an eye tracking system while a radiologist reviewed and reported on 1,083 CXR images. The dataset contains the following aligned data: CXR image, transcribed radiology report text, radiologist's dictation audio, and eye gaze coordinates. We hope this dataset can contribute to various areas of research, particularly explainable and multimodal deep learning / machine learning methods. Furthermore, investigators in disease classification and localization, automated radiology report generation, and human-machine interaction can benefit from these data. We report deep learning experiments that use the attention maps produced from the eye gaze data to show the potential utility of this dataset.
