Marija Furdek

Variational Autoencoder Domain Adaptation for Cross-System Generalization in ML-Based SOP Monitoring

Apr 20, 2026

Abstract: Machine learning (ML) models trained to detect physical-layer threats on one optical fiber system often fail catastrophically when applied to a different system, due to variations in operating wavelength, fiber properties, and network architecture. To overcome this, we propose a Domain Adaptation (DA) framework based on a Variational Autoencoder (VAE) that learns a shared representation capturing event signatures common to both systems while suppressing system-specific differences. The shared encoder is first trained on the combined data from two distinct optical systems: a 21 km O-band dark-fiber testbed (System 1) and a 63.4 km C-band live metro ring (System 2). The encoder is then frozen, and a classifier is trained using labels from an individual system. The proposed approach achieves 95.3% and 73.5% cross-system accuracy when moving from System 1 to System 2 and vice versa, respectively. This corresponds to gains of 83.4% and 51% over a fully supervised Deep Neural Network (DNN) baseline trained on a single system, while preserving intra-system performance.
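For readers unfamiliar with VAEs, the sketch below shows the two standard ingredients of a VAE training objective: the reparameterized latent sample and the closed-form KL term against a standard normal prior. It is illustrative only. The abstract does not specify the paper's architecture, loss weighting, or reconstruction term, so the squared-error reconstruction, the `beta` weight, and all function names here are assumptions.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1), one value per latent dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, diag(exp(log_var))) || N(0, I) )."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, log_var))


def vae_loss(x, x_hat, mu, log_var, beta=1.0):
    """Squared-error reconstruction plus beta-weighted KL (both are assumed choices)."""
    recon = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return recon + beta * kl_to_standard_normal(mu, log_var)


if __name__ == "__main__":
    rng = random.Random(0)
    mu, log_var = [0.2, -0.1], [0.0, -0.5]
    z = reparameterize(mu, log_var, rng)
    print("latent sample:", z)
    print("KL term:", kl_to_standard_normal(mu, log_var))
```

In the workflow the abstract describes, an encoder producing `mu` and `log_var` would be trained on pooled data from both systems, then frozen before a classifier is fitted on the latent features from one labeled system.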

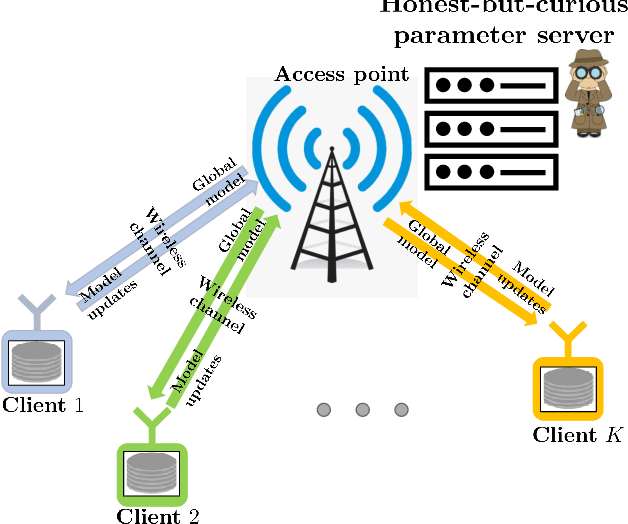

Privacy-Preserving Wireless Federated Learning Exploiting Inherent Hardware Impairments

Feb 21, 2021

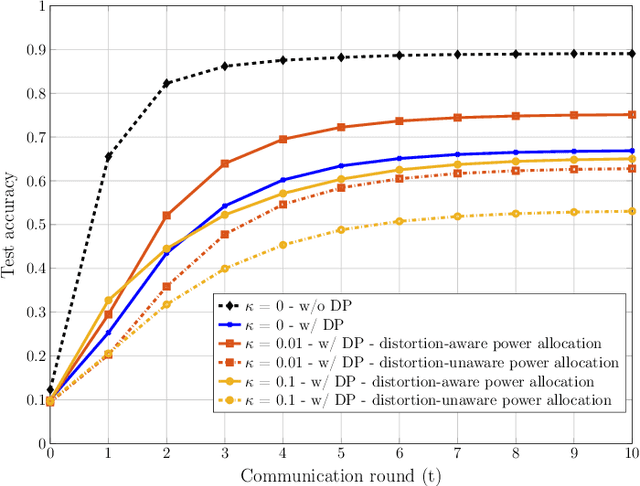

Abstract: We consider a wireless federated learning system where multiple data holder edge devices collaborate to train a global model via sharing their parameter updates with an honest-but-curious parameter server. We demonstrate that the inherent hardware-induced distortion perturbing the model updates of the edge devices can be exploited as a privacy-preserving mechanism. In particular, we model the distortion as power-dependent additive Gaussian noise and present a power allocation strategy that provides privacy guarantees within the framework of differential privacy. We conduct numerical experiments to evaluate the performance of the proposed power allocation scheme under different levels of hardware impairments.
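As background for the differential-privacy claim, the sketch below shows the classical Gaussian mechanism: clip an update to bound its sensitivity, then add Gaussian noise whose standard deviation is calibrated to a target (ε, δ). This is not the paper's power allocation scheme, and it does not model how distortion depends on transmit power. It only illustrates how much additive Gaussian noise, from whatever source, the textbook bound asks for. All function names are hypothetical.

```python
import math
import random


def gaussian_sigma(epsilon, delta, sensitivity):
    """Noise std for the classical Gaussian mechanism (bound holds for 0 < epsilon < 1)."""
    if not (0.0 < epsilon < 1.0) or not (0.0 < delta < 1.0):
        raise ValueError("classical bound assumes 0 < epsilon < 1 and 0 < delta < 1")
    return math.sqrt(2.0 * math.log(1.25 / delta)) * sensitivity / epsilon


def clip_l2(update, max_norm):
    """Scale the update so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, max_norm / norm) if norm > 0.0 else 1.0
    return [u * scale for u in update]


def perturb(update, sigma, rng):
    """Add i.i.d. zero-mean Gaussian noise with std sigma to each coordinate."""
    return [u + rng.gauss(0.0, sigma) for u in update]


if __name__ == "__main__":
    rng = random.Random(0)
    clipped = clip_l2([3.0, 4.0], max_norm=1.0)
    # The sensitivity value depends on the neighboring-dataset definition;
    # it is supplied by the caller here and set to 1.0 only for illustration.
    sigma = gaussian_sigma(epsilon=0.5, delta=1e-5, sensitivity=1.0)
    print("clipped update:", clipped)
    print("required sigma:", sigma)
    print("noisy update:", perturb(clipped, sigma, rng))
```

Under the abstract's premise, part or all of this noise would come from the device hardware itself rather than being injected artificially.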
