L. N. Vishnunandan Venkatesh

Self-Evaluation in One-Shot Learning from Demonstration of Contact-Intensive Tasks

Apr 03, 2019

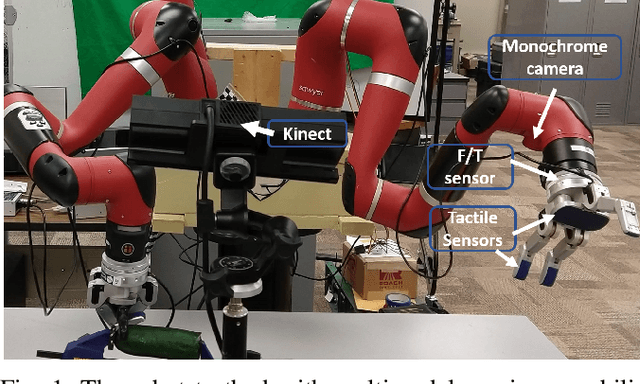

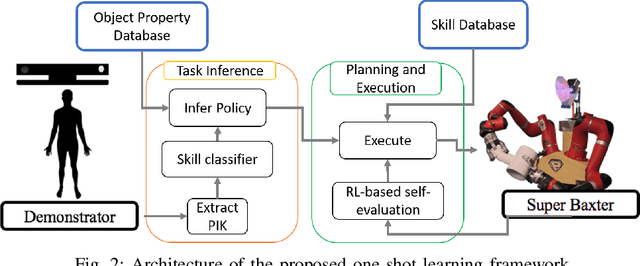

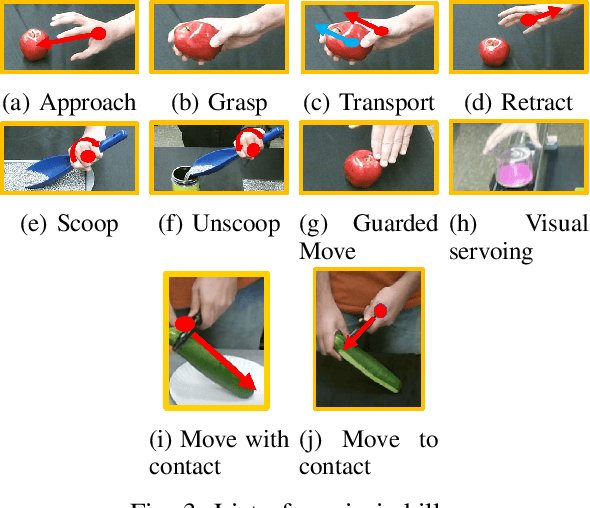

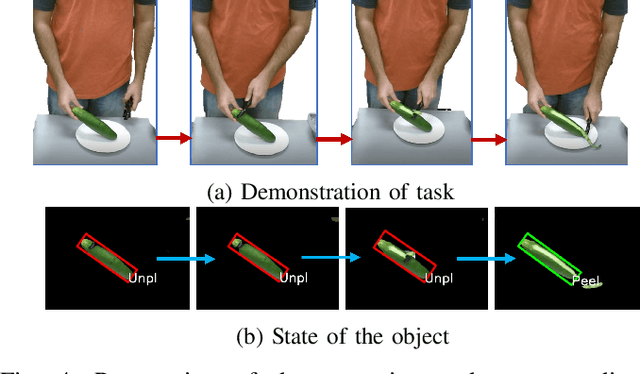

Abstract: Humans naturally "program" a fellow collaborator to perform a task by demonstrating the task a few times. It is intuitive, therefore, for a human to program a collaborative robot by demonstration, and many paradigms use a single demonstration of the task. This is a form of one-shot learning in which a single training example, plus some context of the task, is used to infer a model of the task for subsequent execution and later refinement. This paper presents a one-shot learning from demonstration framework to learn contact-intensive tasks using only visual perception of the demonstrated task. The robot learns a policy for performing the tasks in terms of a priori skills and further uses self-evaluation based on visual and tactile perception of the skill performance to learn the force correspondences for the skills. The self-evaluation is performed based on goal states detected in the demonstration with the help of task context, and the skill parameters are tuned using reinforcement learning. This approach enables the robot to learn force correspondences which cannot be inferred from a visual demonstration of the task. The effectiveness of this approach is evaluated using a vegetable peeling task.

DESK: A Robotic Activity Dataset for Dexterous Surgical Skills Transfer to Medical Robots

Mar 03, 2019

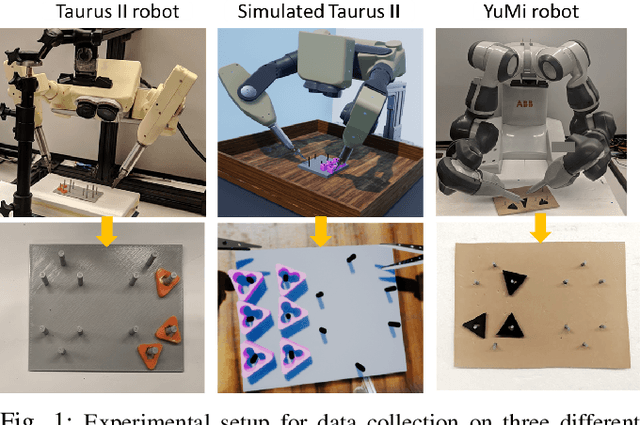

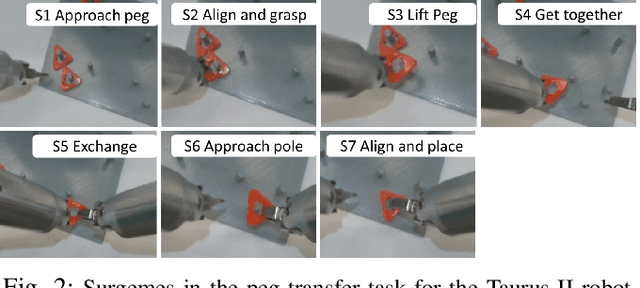

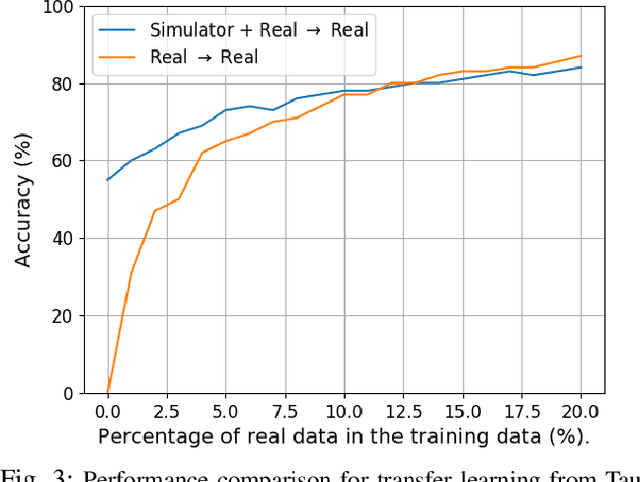

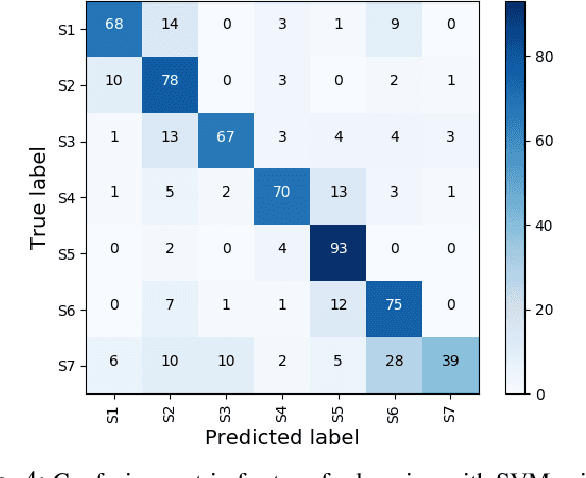

Abstract: Datasets are an essential component for training effective machine learning models. In particular, surgical robotic datasets have been key to many advances in semi-autonomous surgeries, skill assessment, and training. Simulated surgical environments can enhance the data collection process by making it faster, simpler, and cheaper than real systems. In addition, combining data from multiple robotic domains can provide rich and diverse training data for transfer learning algorithms. In this paper, we present the DESK (Dexterous Surgical Skill) dataset. It comprises a set of surgical robotic skills collected during a surgical training task using three robotic platforms: the Taurus II robot, the simulated Taurus II robot, and the YuMi robot. This dataset was used to test the idea of transferring knowledge across different domains (e.g., from the Taurus to the YuMi robot) for a surgical gesture classification task with seven gestures. We explored three different scenarios: 1) no transfer, 2) transfer from the simulated Taurus to the real Taurus, and 3) transfer from the simulated Taurus to the YuMi robot. We conducted extensive experiments with three supervised learning models and provided baselines in each of these scenarios. Results show that using simulation data during training enhances the performance on the real robot where limited real data is available. In particular, we obtained an accuracy of 55% on the real Taurus data using a model that is trained only on the simulator data. Furthermore, we achieved an accuracy improvement of 34% when 3% of the real data is added to the training process.
