Koki Ryu

What Do Vision-Language Models Encode for Personalized Image Aesthetics Assessment?

Apr 13, 2026

Abstract: Personalized image aesthetics assessment (PIAA) is an important research problem with practical real-world applications. While methods based on vision-language models (VLMs) are promising candidates for PIAA, it remains unclear whether they internally encode rich, multi-level aesthetic attributes required for effective personalization. In this paper, we first analyze the internal representations of VLMs to examine the presence and distribution of such aesthetic attributes, and then leverage them for lightweight, individual-level personalization without model fine-tuning. Our analysis reveals that VLMs encode diverse aesthetic attributes that propagate into the language decoder layers. Building on these representations, we demonstrate that simple linear models can perform PIAA effectively. We further analyze how aesthetic information is transferred across layers in different VLM architectures and across image domains. Our findings provide insights into how VLMs can be utilized for modeling subjective, individual aesthetic preferences. Our code is available at https://github.com/ynklab/vlm-latent-piaa.
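The abstract states that simple linear models on top of VLM internal representations suffice for individual-level PIAA, with the VLM itself left frozen. The following is a minimal sketch of that idea, not the authors' code: it assumes per-image feature vectors have already been extracted from some VLM layer, and it uses ridge regression as the linear model. The layer choice, the regularizer, and the helper names (`fit_user_ridge`, `predict`) are our own hypothetical choices.

```python
from typing import List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def solve(a: Matrix, b: Vector) -> Vector:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    # Augmented matrix [a | b] so row operations update both sides together.
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_user_ridge(features: Sequence[Vector], scores: Sequence[float],
                   lam: float = 1.0) -> Vector:
    """Hypothetical per-user linear probe: w = (X^T X + lam*I)^-1 X^T y.

    `features` are assumed to be frozen VLM hidden states (one vector per
    image) and `scores` one user's ratings. A bias column of 1s is appended;
    for simplicity the bias is regularized too. lam > 0 keeps the system
    positive definite, so `solve` never hits a zero pivot.
    """
    x = [list(f) + [1.0] for f in features]
    d = len(x[0])
    xtx = [[sum(row[i] * row[j] for row in x) + (lam if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    xty = [sum(row[i] * y for row, y in zip(x, scores)) for i in range(d)]
    return solve(xtx, xty)


def predict(weights: Vector, feature: Vector) -> float:
    """Score one image for the user whose weights were fitted."""
    return sum(w * v for w, v in zip(weights, list(feature) + [1.0]))


# Toy usage: made-up 2-D "VLM features" and one user's 1-5 ratings.
user_features = [[0.2, 0.9], [0.8, 0.1], [0.5, 0.5], [0.9, 0.7]]
user_scores = [4.0, 2.0, 3.0, 4.5]
w = fit_user_ridge(user_features, user_scores, lam=0.1)
print(round(predict(w, [0.6, 0.8]), 2))
```

Fitting one small linear model per user keeps the VLM untouched, which is what makes this kind of personalization lightweight; in practice a numerical library would replace the hand-written elimination.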

LLM-jp: A Cross-organizational Project for the Research and Development of Fully Open Japanese LLMs

Jul 04, 2024

Abstract: This paper introduces LLM-jp, a cross-organizational project for the research and development of Japanese large language models (LLMs). LLM-jp aims to develop open-source and strong Japanese LLMs, and as of this writing, more than 1,500 participants from academia and industry are working together for this purpose. This paper presents the background of the establishment of LLM-jp, summaries of its activities, and technical reports on the LLMs developed by LLM-jp. For the latest activities, visit https://llm-jp.nii.ac.jp/en/.

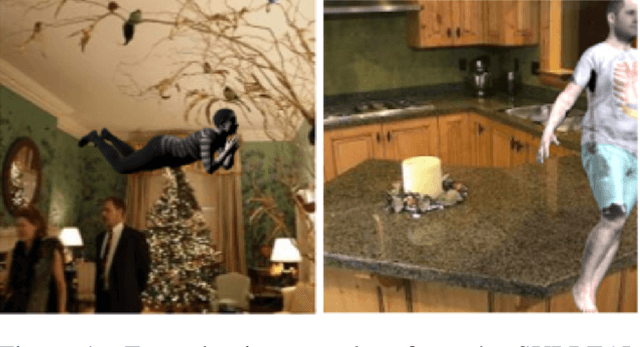

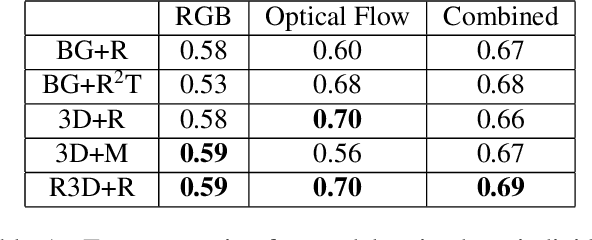

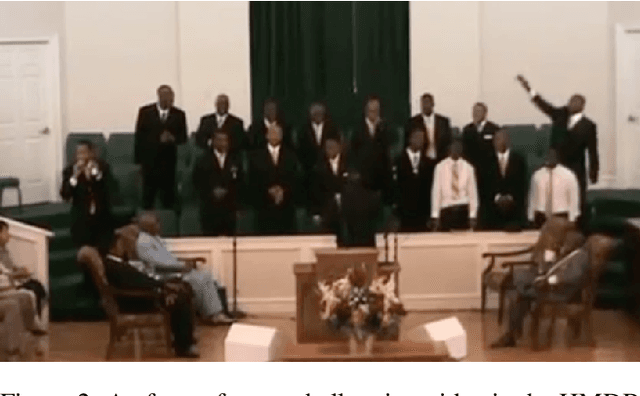

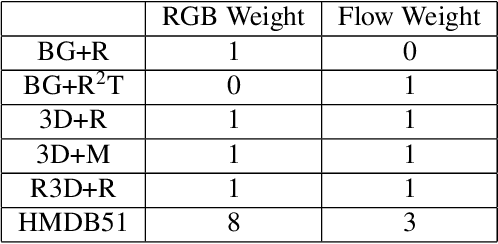

Creating a Large-scale Synthetic Dataset for Human Activity Recognition

Jul 21, 2020

Abstract: Creating and labelling datasets of videos for use in training Human Activity Recognition models is an arduous task. In this paper, we approach this by using 3D rendering tools to generate a synthetic dataset of videos, and show that a classifier trained on these videos can generalise to real videos. We use five different augmentation techniques to generate the videos, leading to a wide variety of accurately labelled unique videos. We fine-tune a pre-trained I3D model on our videos, and find that the model is able to achieve a high accuracy of 73% on the HMDB51 dataset over three classes. We also find that augmenting the HMDB training set with our dataset provides a 2% improvement in the performance of the classifier. Finally, we discuss possible extensions to the dataset, including virtual try-on and modelling the motion of people.