Kensuke Harada

Osaka University, AIST

Planning to Build Soma Blocks Using a Dual-arm Robot

Mar 02, 2020

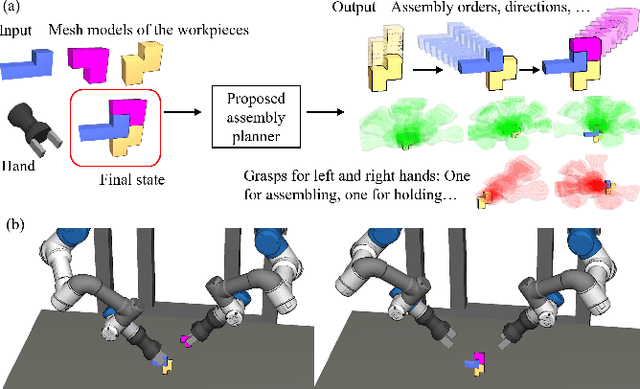

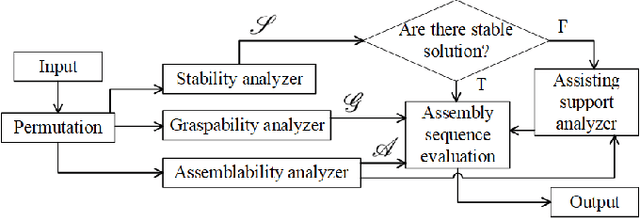

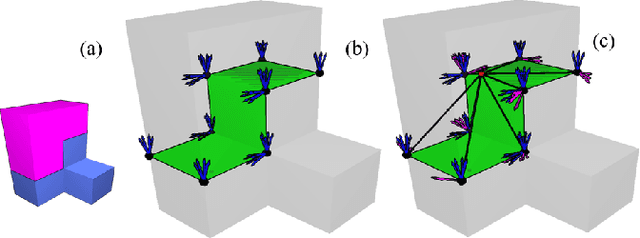

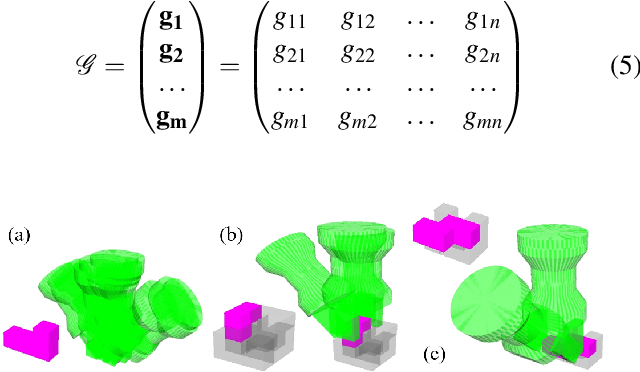

Abstract: This paper presents a planner that can automatically find an optimal assembly sequence for a dual-arm robot to assemble Soma blocks. The planner uses the mesh models of the objects and the final state of the assembly to generate all possible assembly sequences, and evaluates them to find the optimal one by considering stability, graspability, assemblability, and the need for a second arm. In particular, the need for a second arm is considered when support from worktables and other workpieces is not enough to produce a stable assembly. In that case, the planner uses an assisting grasp to hold and support the unstable components so that the robot can assemble further workpieces and finally reach a stable state. The output of the planner is the optimal assembly order, candidate grasps, assembly directions, and the assisting grasps, if any. This output can be used to guide a dual-arm robot to perform the assembly task. The planner is verified in both simulations and real-world executions.

Learning Contact-Rich Manipulation Tasks with Rigid Position-Controlled Robots: Learning to Force Control

Mar 02, 2020

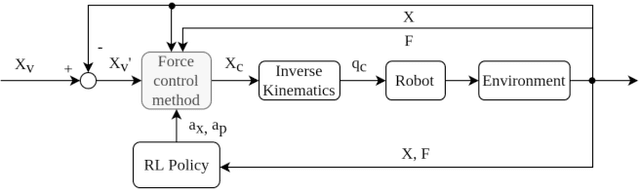

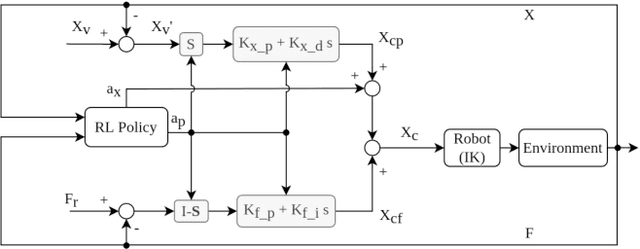

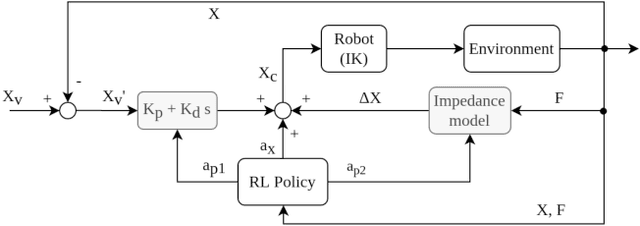

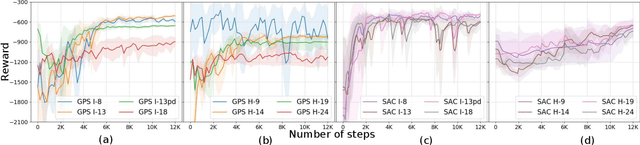

Abstract: To fully realize industrial automation, it is indispensable to give robot manipulators the ability to adapt by themselves to their surroundings and to learn to handle novel manipulation tasks. Reinforcement Learning (RL) methods have proven successful in solving manipulation tasks autonomously. However, RL is still not widely adopted on real robotic systems because working with real hardware entails additional challenges, especially when using rigid position-controlled manipulators. These challenges include the need for a robust controller to avoid undesired behavior that risks damaging the robot and its environment, and the need for constant supervision from a human operator. The main contributions of this work are as follows. First, we propose a framework for safely training an RL agent on manipulation tasks using a rigid robot. Second, to enable a position-controlled manipulator to perform contact-rich manipulation tasks, we implement two different force control schemes based on standard force feedback controllers: one is a modified hybrid position-force control, and the other is an impedance control. Third, we empirically study both control schemes when used as the action representation of an RL agent. We evaluate the trade-off between control complexity and performance by comparing several versions of the control schemes, each with a different number of force control parameters. The proposed methods are validated both in simulation and on a real robot, a UR3 e-series robotic arm, executing contact-rich manipulation tasks.

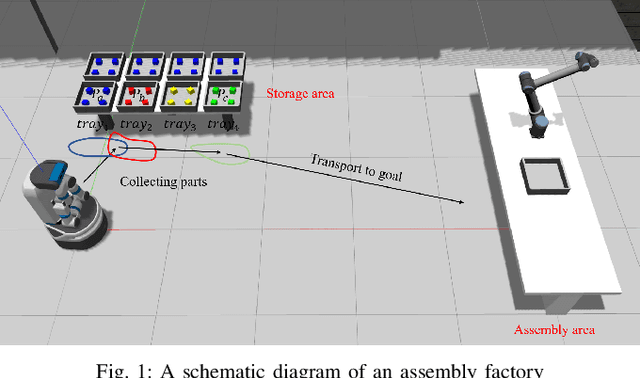

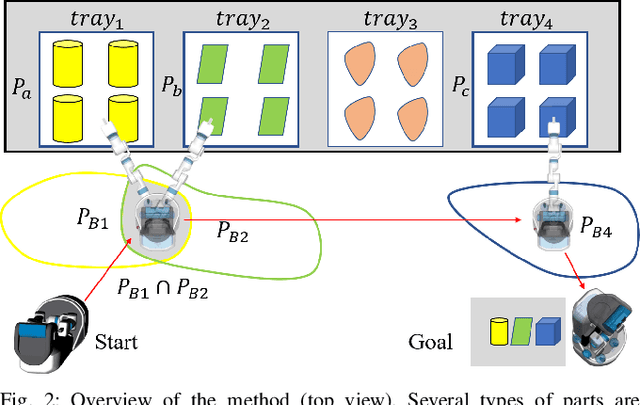

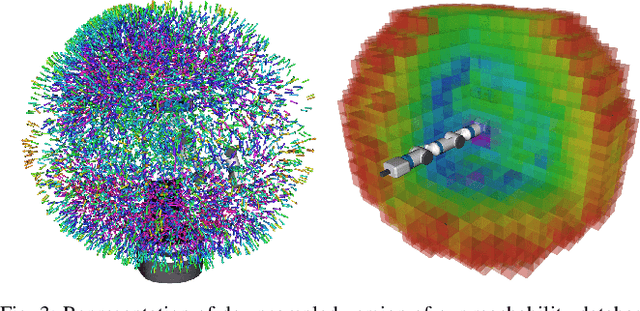

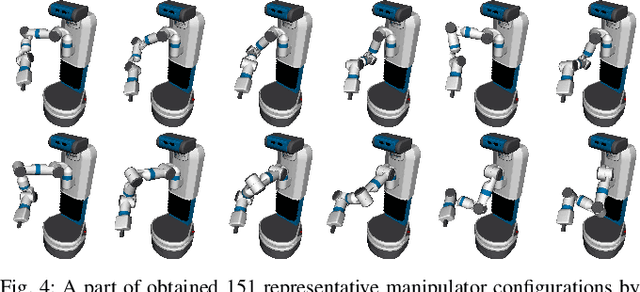

Planning an Efficient and Robust Base Sequence for a Mobile Manipulator Performing Multiple Pick-and-place Tasks

Jan 22, 2020

Abstract: In this paper, we address the problem of efficiently and robustly collecting objects stored in different trays using a mobile manipulator. A resolution-complete method, based on a precomputed reachability database, is proposed to explore collision-free inverse kinematics (IK) solutions, from which a resolution-complete set of feasible base positions can be determined. This method approximates a set of representative IK solutions that are especially helpful when solving IK and checking collisions are treated separately. For real-world applications, we take into account the base positioning uncertainty and plan a sequence of base positions that reduces the number of base movements needed to collect the target objects. The base sequence is robust in that the mobile manipulator is able to complete the part-supply task even if there is a certain deviation from the planned base positions. Our experiments demonstrate both the efficiency compared to a regular base sequence and the feasibility in real-world applications.
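As a rough illustration of what "reducing the number of base movements" can mean, the sketch below picks base positions with a greedy set-cover heuristic. This is not the method of the paper: the function name `greedy_base_sequence`, the `reachable` mapping, and the greedy strategy itself are assumptions made for illustration, and the sketch ignores base positioning uncertainty and the order in which the chosen positions are visited.

```python
def greedy_base_sequence(reachable):
    """Pick base positions until every tray is covered (greedy set cover).

    `reachable` is a hypothetical mapping from a candidate base position
    to the set of trays the arm can reach from it. Returns the chosen
    base positions in the order they were selected.
    """
    # All trays that at least one candidate base position can reach.
    remaining = set().union(*reachable.values())
    chosen = []
    while remaining:
        # Take the base position that covers the most still-uncovered trays.
        best = max(reachable, key=lambda base: len(reachable[base] & remaining))
        gained = reachable[best] & remaining
        if not gained:
            break  # Defensive guard; cannot trigger when remaining is built from reachable.
        chosen.append(best)
        remaining -= gained
    return chosen


# Hypothetical example: three candidate base positions and four trays.
candidates = {
    "A": {"tray1", "tray2"},
    "B": {"tray2", "tray3", "tray4"},
    "C": {"tray1"},
}
sequence = greedy_base_sequence(candidates)
```

Greedy set cover does not guarantee the minimum number of base positions, so it should be read only as a baseline for the idea of covering all trays with few stops.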

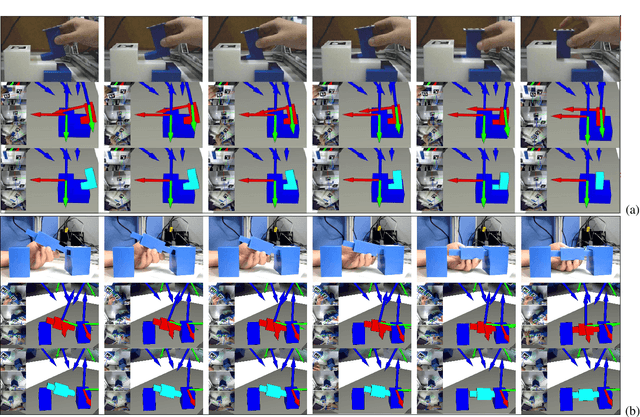

Motion Planning through Demonstration to Deal with Complex Motions in Assembly Process

Oct 04, 2019

Abstract: Complex and skillful motions in actual assembly processes are challenging for a robot to generate with existing motion planning approaches, because some key poses during human assembly can be too skillful for the robot to realize automatically. To deal with this problem, this paper develops a motion planning method that uses skillful motions from demonstration and can be applied to complete robotic assembly processes that include complex and skillful motions. To make demonstration convenient without redundant third-party devices, we attach augmented reality (AR) markers to the manipulated object to track and capture its poses during the human assembly process. These poses are employed as key poses by the motion planner. The derivative of every key pose serves as a criterion to determine the priority with which key poses are used, in order to accelerate the motion planning. The effectiveness of the presented method is verified through numerical examples and actual robot experiments.

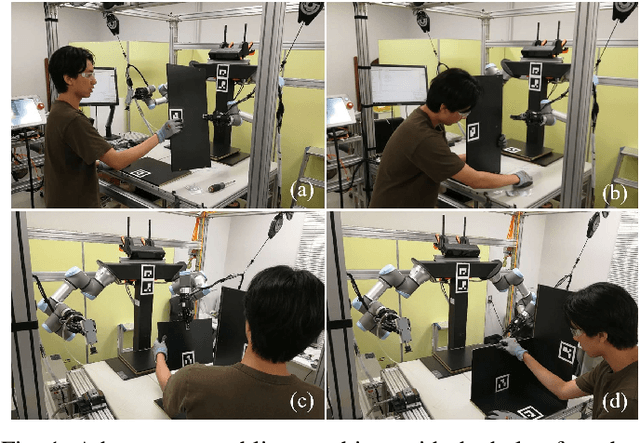

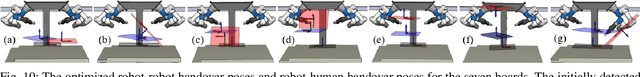

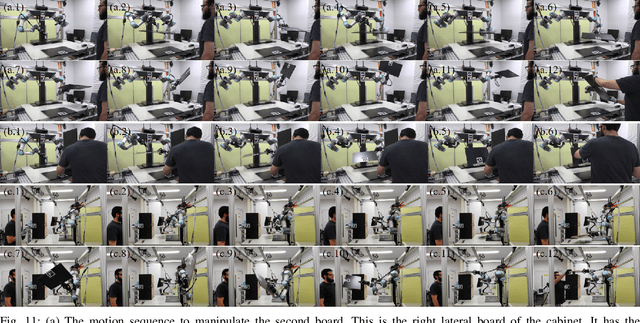

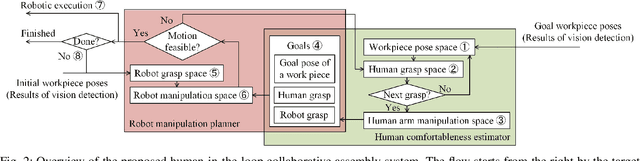

Human-in-the-loop Robotic Manipulation Planning for Collaborative Assembly

Sep 25, 2019

Abstract: This paper develops a robotic manipulation planner for human-robot collaborative assembly. Unlike previous methods, which study an independent and fully AI-equipped autonomous system, this paper explores the subtask distribution between a robot and a human and studies a human-in-the-loop robotic system for collaborative assembly. The system distributes the subtasks of an assembly to robots and humans by exploiting their advantages and avoiding their disadvantages. The robot in the system works on pick-and-place tasks and provides workpieces to humans. The human collaborator works on fine operations such as aligning, fixing, and screwing. A constraint-based incremental manipulation planning method is proposed to generate the motion for the robots. The performance of the proposed system is demonstrated by asking a human and a dual-arm robot to collaboratively assemble a cabinet. The results show that the proposed system and planner are effective and efficient, and can assist humans in finishing the assembly task comfortably.

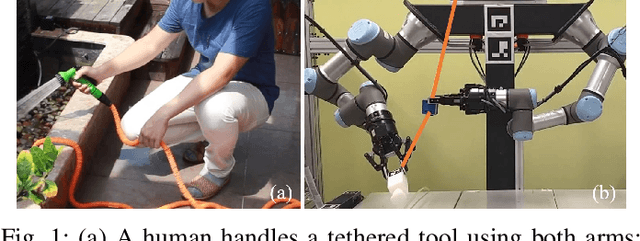

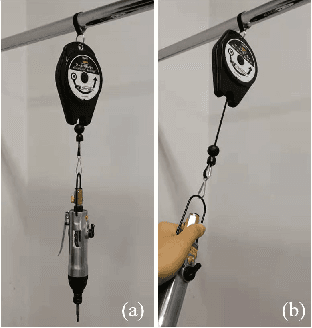

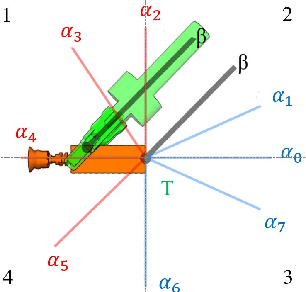

Tethered Tool Manipulation Planning with Cable Maneuvering

Sep 24, 2019

Abstract: In this paper, we present a planner for manipulating tethered tools using dual-armed robots. The planner generates robot motion sequences to maneuver a tool and its cable while avoiding robot-cable entanglements. First, the planner generates an Object Manipulation Motion Sequence (OMMS) to handle the tool and place it in desired poses. Second, the planner examines the tool movement associated with the OMMS and computes candidate positions for a cable slider, which is used to maneuver the tool cable and avoid collisions. Finally, the planner determines the optimal slider positions to avoid entanglements and generates a Cable Manipulation Motion Sequence (CMMS) to place the slider in these positions. The robot executes both the OMMS and the CMMS to handle the tool and its cable while avoiding entanglements and excess cable bending. Simulations and real-world experiments validate the proposed method.

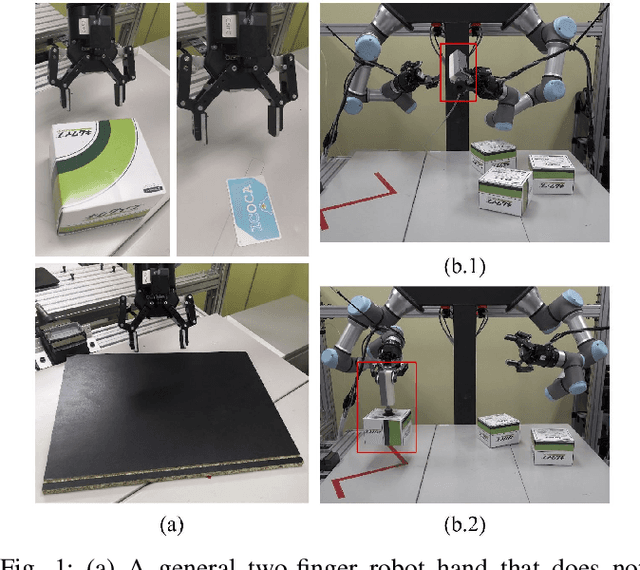

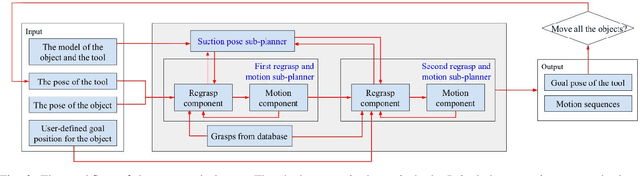

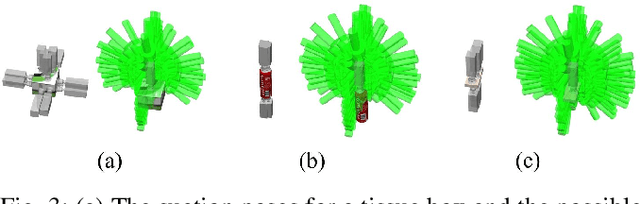

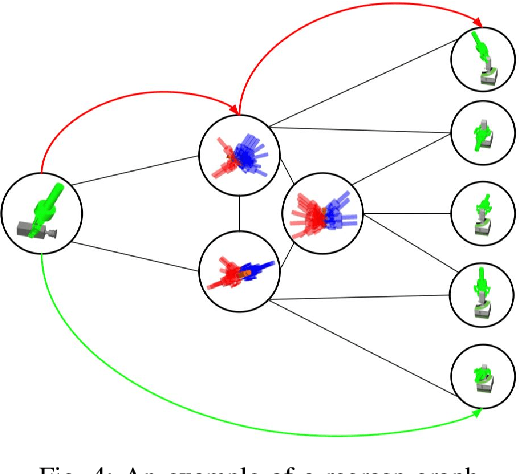

Combined Task and Motion Planning for a Dual-arm Robot to Use a Suction Cup Tool

Aug 31, 2019

Abstract: This paper proposes a combined task and motion planner for a dual-arm robot to use a suction cup tool. The planner consists of three sub-planners: a suction pose sub-planner and two regrasp and motion sub-planners. The suction pose sub-planner finds all the available poses for a suction cup tool to suck on the object, using the models of the tool and the object. Each regrasp and motion sub-planner builds a regrasp graph that represents all possible grasp sequences to reorient and move its target from an initial pose to a goal pose. Two regrasp graphs are used: one to plan for the suction cup tool alone, and one to plan for the combination of the suction cup tool and an object. The output of the proposed planner is a sequence of robot motions that uses a suction cup tool to manipulate objects following human instructions. The planner is examined and analyzed in both simulation experiments and real-world executions using several real-world tasks. The results show that the planner is efficient and robust, and can generate sequential transit and transfer robot motions to finish complicated combined task and motion planning tasks in a few seconds.

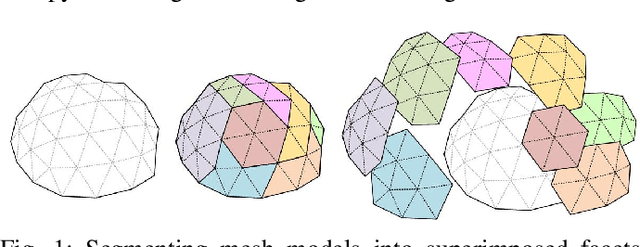

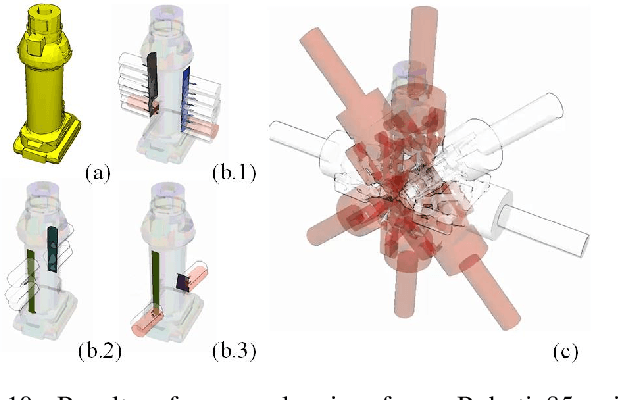

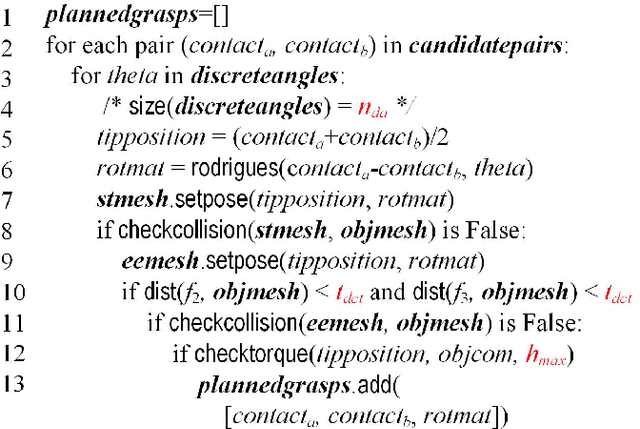

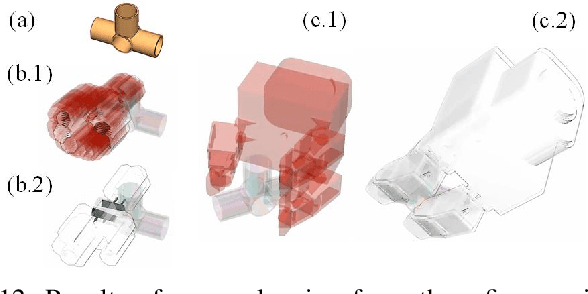

Planning Grasps for Assembly Tasks

Mar 05, 2019

Abstract: This paper develops model-based grasp planning algorithms for assembly tasks. It focuses on industrial end-effectors like grippers and suction cups, and plans grasp configurations considering CAD models of target objects. The developed algorithms are able to stably plan a large number of high-quality grasps, with high precision and little dependency on the quality of CAD models. The underlying core technique is superimposed segmentation, which pre-processes a mesh model by peeling it into facets. The algorithms use superimposed segments to locate contact points and parallel facets, and synthesize grasp poses for popular industrial end-effectors. Several tunable parameters are provided to adapt the algorithms to various requirements. The experimental section demonstrates the advantages of the algorithms by analyzing their cost and stability, the precision of the planned grasps, and the tunable parameters, using both simulations and real-world experiments. Some examples of robotic assembly systems using the proposed algorithms are also presented to demonstrate their efficacy.

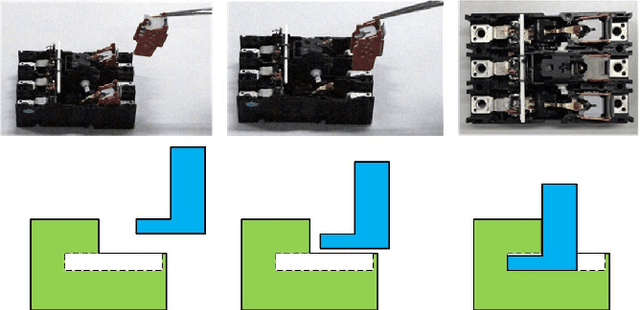

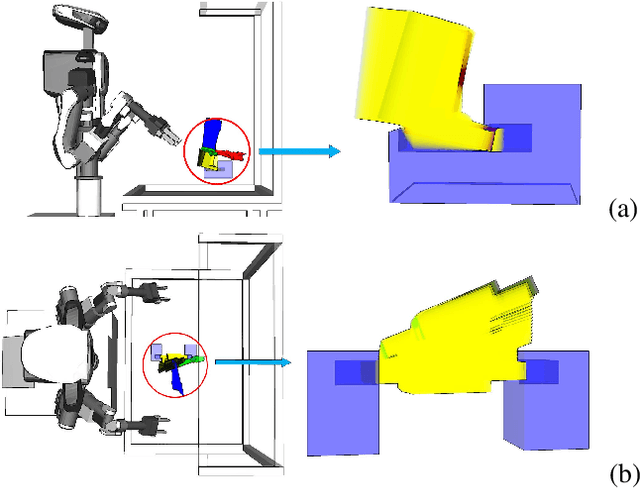

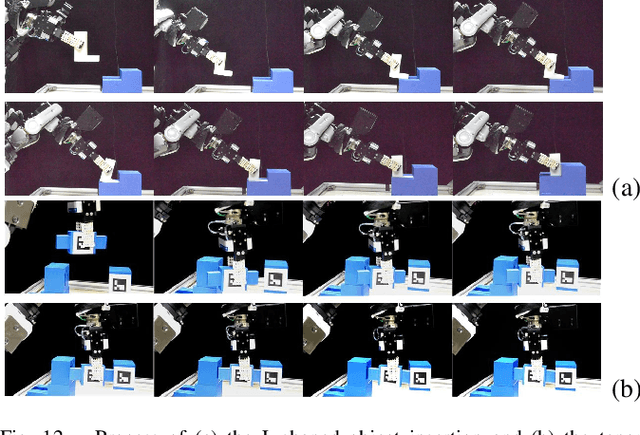

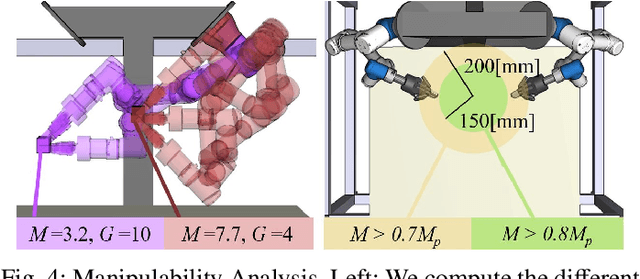

Dual-arm Assembly Planning Considering Gravitational Constraints

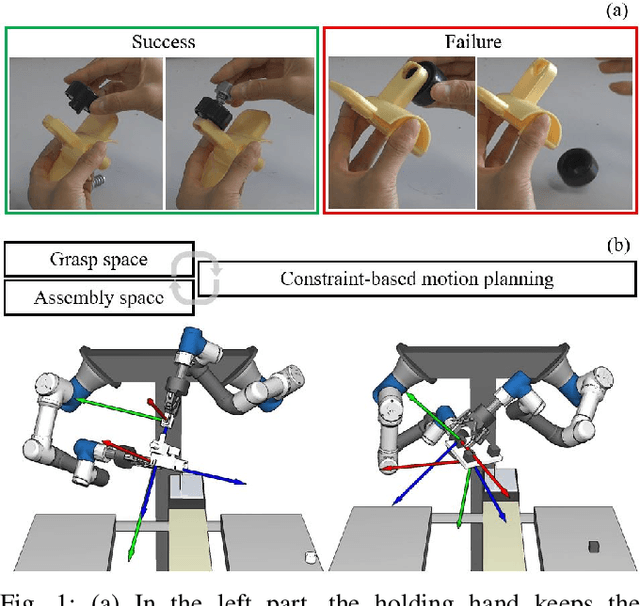

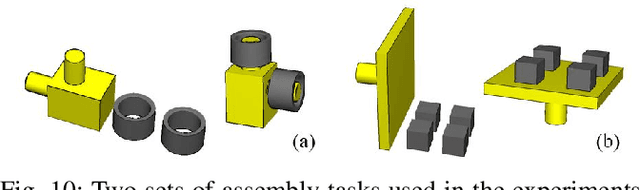

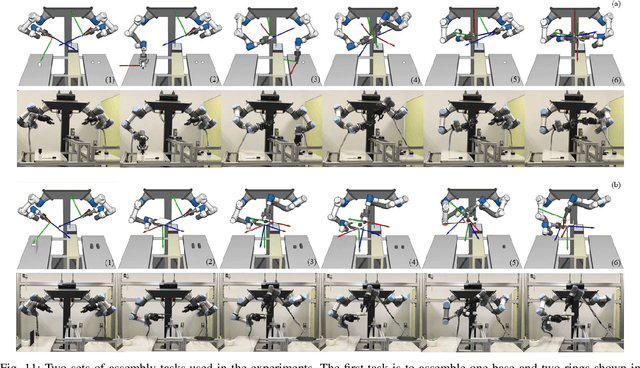

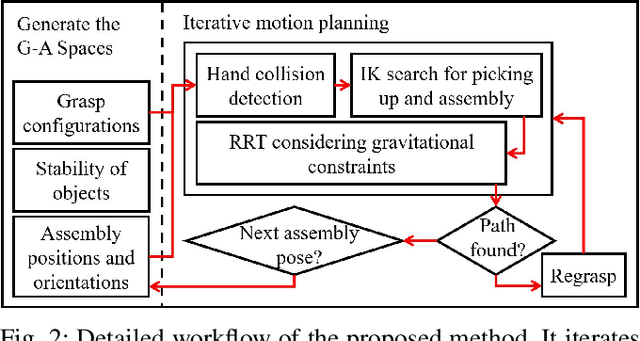

Mar 02, 2019

Abstract: Planning dual-arm assembly of more than three objects is a challenging Task and Motion Planning (TAMP) problem. The assembly planner must consider not only the pose constraints of objects and robots, but also the gravitational constraints that may break the finished part. This paper proposes a planner for the dual-arm assembly of more than three objects. It automatically generates the grasp configurations and assembly poses, and simultaneously searches and backtracks in the grasp space and the assembly space to accelerate the motion planning of the robot arms. Meanwhile, the proposed method considers gravitational constraints during robot motion planning to avoid breaking the finished part. In the experiments and analysis section, the time cost of each process and the influence of different parameters used in the proposed planner are compared and analyzed. The optimal values are used to perform real-world executions of various robotic assembly tasks. The experiments show the planner to be robust and efficient.

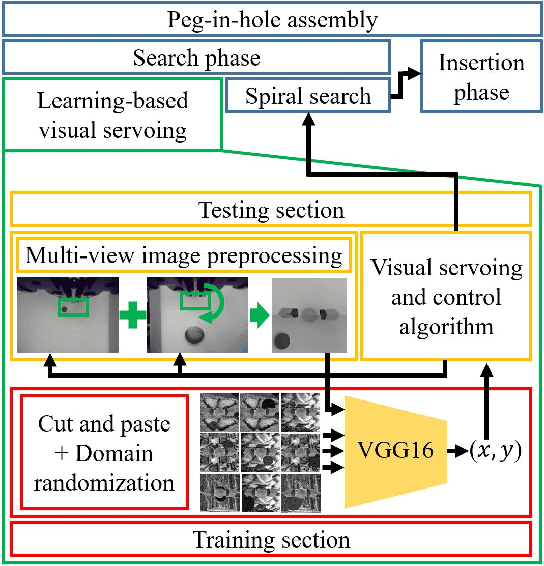

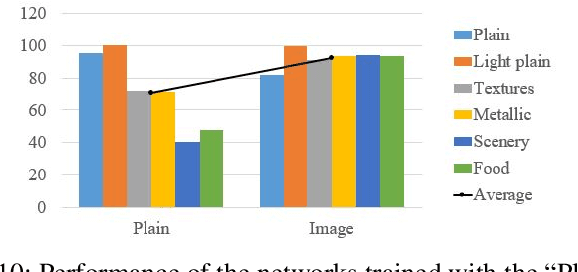

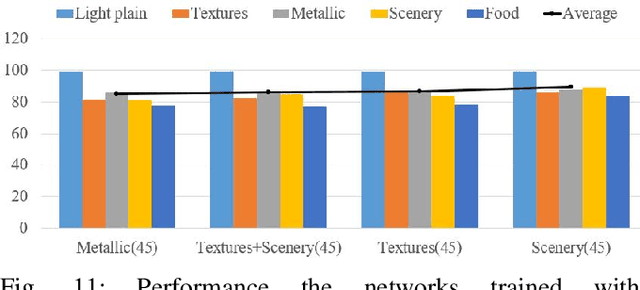

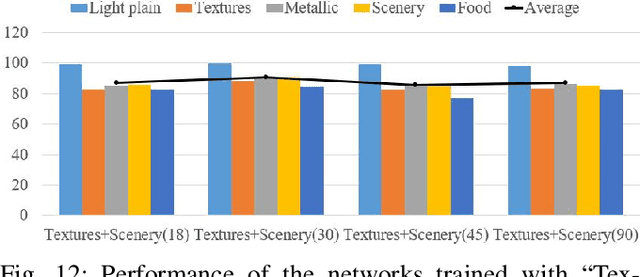

Quickly Inserting Pegs into Uncertain Holes using Multi-view Images and Deep Network Trained on Synthetic Data

Feb 25, 2019

Abstract: This paper uses robots to insert pegs into holes on surfaces with different colors and textures. It especially targets the problem of peg-in-hole assembly with initial position uncertainty. Two in-hand cameras and a force-torque sensor are used to account for the position uncertainty. A program sequence comprising learning-based visual servoing, spiral search, and impedance control is implemented to perform the peg-in-hole task with feedback from these sensors. The main contributions lie in the learning-based visual servoing step of the sequence, where a deep neural network is trained to predict where a hole is, using various sets of synthetic data generated with domain randomization. In the experiments and analysis section, the network is analyzed and compared, and a real-world robotic system that inserts pegs into holes using the proposed method is implemented. The results show that the implemented peg-in-hole assembly system can perform successful peg-in-hole insertions on surfaces with various colors and textures, and that it can generally speed up the entire peg-in-hole process.
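The spiral search step named in the abstract above is commonly realized by moving the peg tip along an Archimedean spiral around the estimated hole position until the peg drops in. The sketch below generates such waypoints. It is a generic illustration, not the implementation used in the paper: the function name `spiral_waypoints` and the parameters `pitch`, `step`, and `max_radius` are assumptions, and all lengths are taken to be in the same unit.

```python
import math


def spiral_waypoints(pitch, step, max_radius):
    """Return (x, y) offsets along an Archimedean spiral.

    The spiral is r = pitch * theta / (2 * pi), so the radius grows by
    `pitch` per turn. Consecutive points are spaced roughly `step` apart
    along the curve. Generation stops once the radius would exceed
    `max_radius`.
    """
    growth = pitch / (2.0 * math.pi)  # Radius gained per radian.
    points = [(0.0, 0.0)]             # Start at the estimated hole centre.
    theta = 0.0
    while True:
        r = growth * theta
        # Arc length is about r * dtheta; near the centre use `growth`
        # as a floor so the angular increment stays finite.
        theta += step / max(r, growth)
        r = growth * theta
        if r > max_radius:
            break
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# Hypothetical values: 1.0 pitch, 0.5 spacing, search radius 3.0.
waypoints = spiral_waypoints(pitch=1.0, step=0.5, max_radius=3.0)
```

In practice the pitch would be chosen smaller than the clearance between peg and hole so that no turn of the spiral can skip over the hole, and the force-torque signal would be monitored at each waypoint to detect insertion.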
