Kartik Talamadupula

Multiresolution Recurrent Neural Networks: An Application to Dialogue Response Generation

Jun 14, 2016

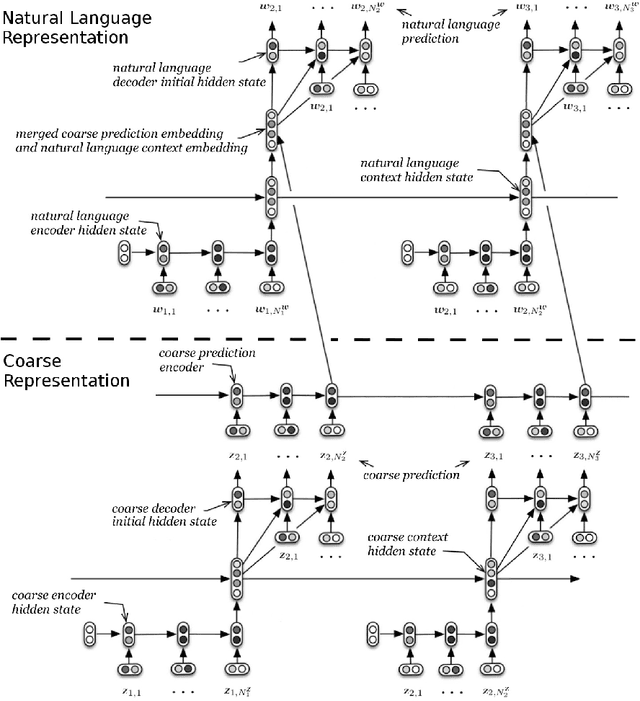

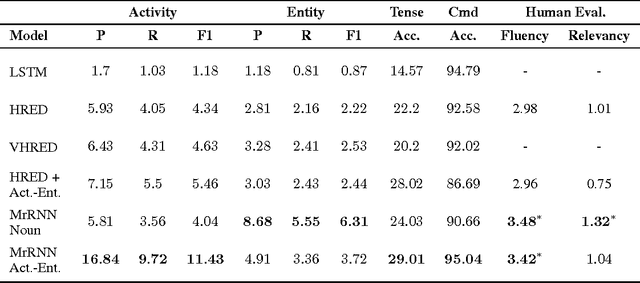

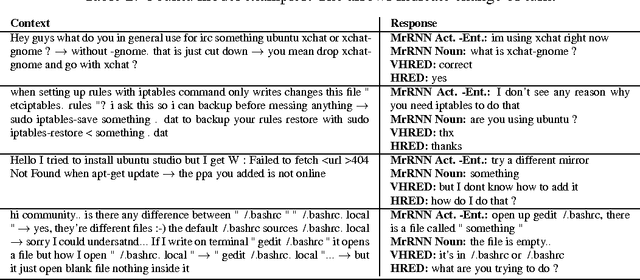

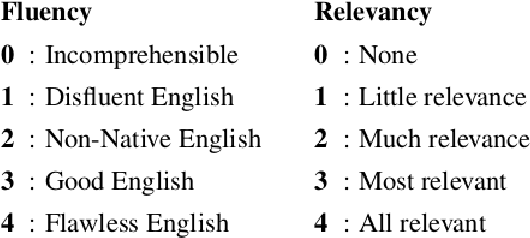

Abstract: We introduce the multiresolution recurrent neural network, which extends the sequence-to-sequence framework to model natural language generation as two parallel discrete stochastic processes: a sequence of high-level coarse tokens, and a sequence of natural language tokens. There are many ways to estimate or learn the high-level coarse tokens, but we argue that a simple extraction procedure is sufficient to capture a wealth of high-level discourse semantics. Such a procedure allows the multiresolution recurrent neural network to be trained by maximizing the exact joint log-likelihood over both sequences. In contrast to the standard log-likelihood objective w.r.t. natural language tokens (word perplexity), optimizing the joint log-likelihood biases the model towards modeling high-level abstractions. We apply the proposed model to the task of dialogue response generation in two challenging domains: the Ubuntu technical support domain, and Twitter conversations. On Ubuntu, the model outperforms competing approaches by a substantial margin, achieving state-of-the-art results according to both automatic evaluation metrics and a human evaluation study. On Twitter, the model appears to generate more relevant and on-topic responses according to automatic evaluation metrics. Finally, our experiments demonstrate that the proposed model is more adept at overcoming the sparsity of natural language and is better able to capture long-term structure.
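The contrast between the joint log-likelihood and word perplexity can be made concrete with a small calculation. This is a minimal sketch, not the model from the paper: it assumes some trained model has already assigned a probability to each observed token, and the probabilities in the usage lines are invented for illustration. Because the coarse tokens come from a deterministic extraction procedure, they are observed during training, so the chain rule log P(z, w) = log P(z) + log P(w | z) gives the joint objective exactly. The function names are hypothetical.

```python
import math
from typing import Sequence


def log_likelihood(token_probs: Sequence[float]) -> float:
    """Sum of the log-probabilities a model assigned to the observed tokens."""
    return sum(math.log(p) for p in token_probs)


def word_perplexity(word_probs: Sequence[float]) -> float:
    """exp of the average negative log-likelihood per natural language token."""
    return math.exp(-log_likelihood(word_probs) / len(word_probs))


def joint_log_likelihood(coarse_probs: Sequence[float],
                         word_probs_given_coarse: Sequence[float]) -> float:
    """Chain rule: log P(z, w) = log P(z) + log P(w | z).

    The first term scores the coarse token sequence z; the second scores the
    natural language tokens w conditioned on z. A word-only objective keeps
    just the second term, so it puts no direct pressure on modeling z.
    """
    return log_likelihood(coarse_probs) + log_likelihood(word_probs_given_coarse)


# Hypothetical per-token probabilities, invented for illustration only.
coarse_probs = [0.5, 0.25]
word_probs = [0.5, 0.5, 0.25, 0.5]

joint = joint_log_likelihood(coarse_probs, word_probs)
words_only = log_likelihood(word_probs)
print(f"joint log-likelihood: {joint:.4f}")
print(f"word-only log-likelihood: {words_only:.4f}")
print(f"word perplexity: {word_perplexity(word_probs):.4f}")
```

The extra coarse term is what gives the joint objective its bias towards the high-level sequence: a model can lower word perplexity without improving P(z) at all, but it cannot raise the joint log-likelihood that way.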

The Metrics Matter! On the Incompatibility of Different Flavors of Replanning

May 12, 2014

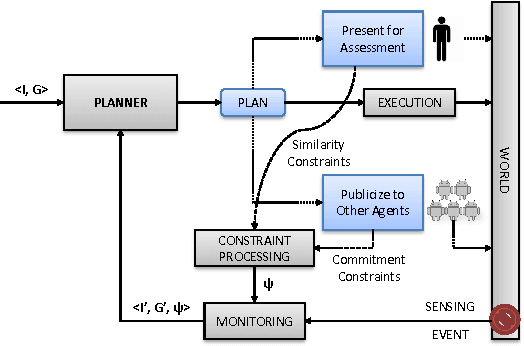

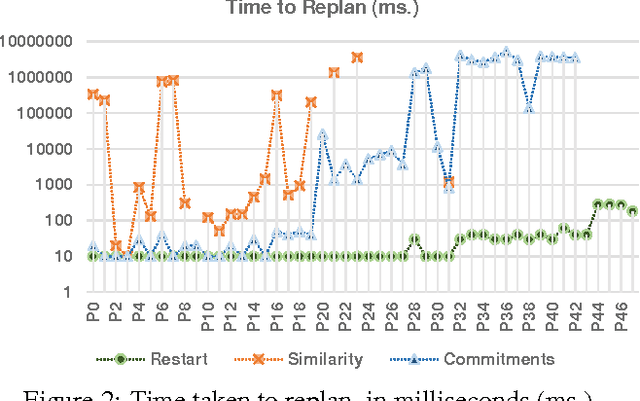

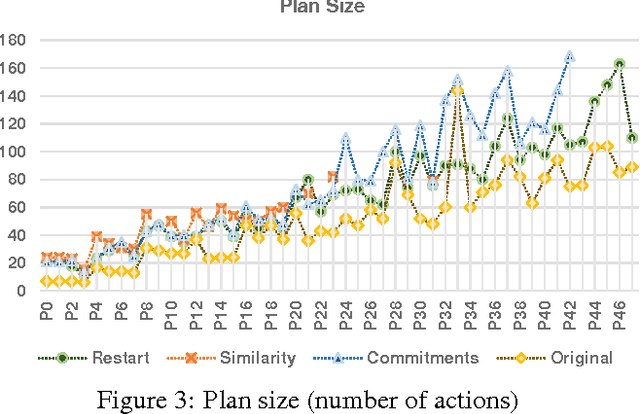

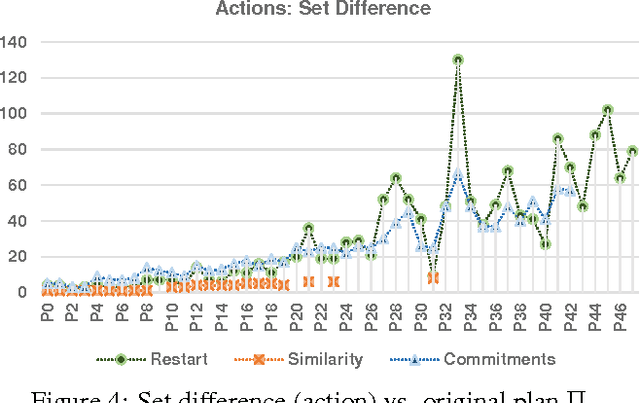

Abstract: When autonomous agents are executing in the real world, the state of the world, as well as the objectives of the agent, may change from the agent's original model. In such cases, the agent's planning process must modify the plan under execution to make it amenable to the new conditions, and then resume execution. This brings up the replanning problem and the various techniques that have been proposed to solve it. In all, three main techniques, based on three different metrics, have been proposed in prior automated planning work. An open question is whether these metrics are interchangeable; answering this requires a normalized comparison of the various replanning quality metrics. In this paper, we show that it is possible to support such a comparison by compiling all the respective techniques into a single substrate. Using this novel compilation, we demonstrate that these different metrics are not interchangeable and are not good surrogates for each other. Thus we focus attention on the incompatibility of the various replanning flavors with each other, founded in the differences between the metrics that they respectively seek to optimize.
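The claim that replanning metrics are not good surrogates for each other can be illustrated with a toy example. This is not the compilation described in the paper: it assumes two metrics chosen for illustration, total plan cost and plan stability (measured here as the number of actions by which a new plan differs from the original one), and every action name, cost, and function below is hypothetical. After a change in the world, two repaired plans are available, and the two metrics pick different winners.

```python
from collections import Counter
from typing import Dict, List

Plan = List[str]

# Hypothetical action costs for a toy logistics domain.
ACTION_COST: Dict[str, int] = {
    "load": 1, "unload": 1, "drive_a_b": 5,
    "drive_a_c": 2, "drive_c_b": 2, "repair_road": 4,
}

# The plan under execution before the world changed.
ORIGINAL: Plan = ["load", "drive_a_b", "unload"]


def plan_cost(plan: Plan) -> int:
    """Total cost of the actions in a plan."""
    return sum(ACTION_COST[action] for action in plan)


def plan_distance(original: Plan, new: Plan) -> int:
    """Stability metric: actions in one plan but not the other (multiset difference)."""
    old_counts, new_counts = Counter(original), Counter(new)
    return sum(((old_counts - new_counts) + (new_counts - old_counts)).values())


# Two candidate repairs after the road from a to b is found to be damaged.
candidates: Dict[str, Plan] = {
    "detour": ["load", "drive_a_c", "drive_c_b", "unload"],  # cost 6, distance 3
    "repair": ["load", "repair_road", "drive_a_b", "unload"],  # cost 11, distance 1
}

best_by_cost = min(candidates, key=lambda name: plan_cost(candidates[name]))
best_by_stability = min(
    candidates, key=lambda name: plan_distance(ORIGINAL, candidates[name])
)
print("lowest cost:", best_by_cost)
print("most stable:", best_by_stability)
```

Which repair counts as "better" depends entirely on the metric, so a replanning technique tuned for one metric gives no guarantee of doing well on the other.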

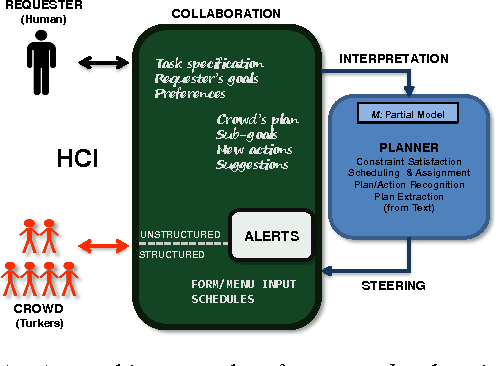

Herding the Crowd: Automated Planning for Crowdsourced Planning

Jul 29, 2013

Abstract: There has been significant interest in crowdsourcing and human computation. One subclass of human computation applications comprises those directed at tasks that involve planning (e.g., travel planning) and scheduling (e.g., conference scheduling). Much of this work appears outside the traditional automated planning forums, and at the outset it is not clear whether automated planning has much of a role to play in these human computation systems. Interestingly, however, work on these systems shows that even primitive forms of automated oversight of the human planner do help significantly improve the effectiveness of the humans/crowd. In this paper, we argue that the automated oversight used in these systems can be viewed as a primitive automated planner, and that there are several opportunities for more sophisticated automated planning in effectively steering crowdsourced planning. Straightforward adaptation of current planning technology is, however, hampered by the mismatch between the capabilities of human workers and automated planners. We identify two important challenges that need to be overcome before such adaptation of planning technology can occur: (i) interpreting the inputs of the human workers (and the requester), and (ii) steering or critiquing the plans being produced by the human workers, armed only with incomplete domain and preference models. We discuss approaches for handling these challenges, and characterize existing human computation systems in terms of the specific choices they make in handling these challenges.

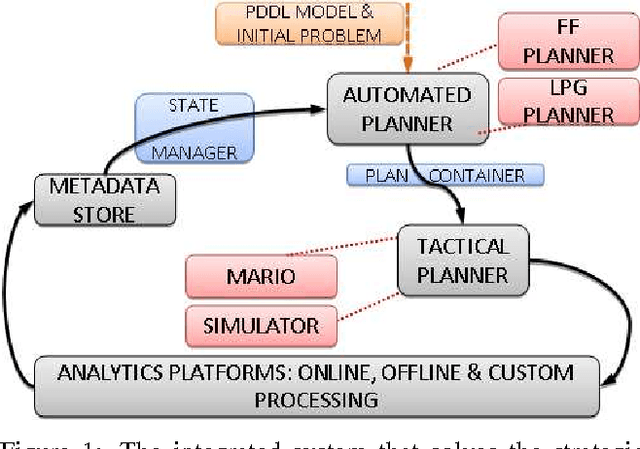

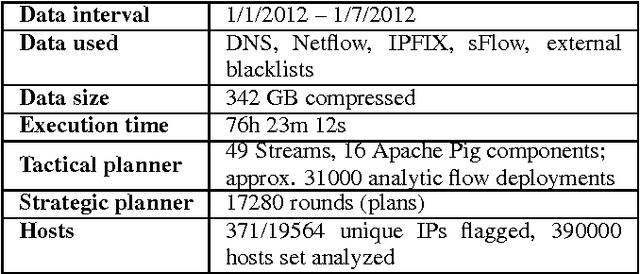

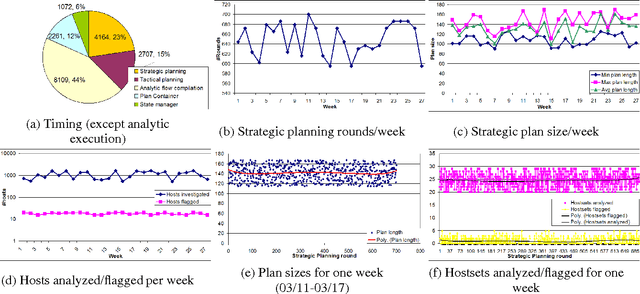

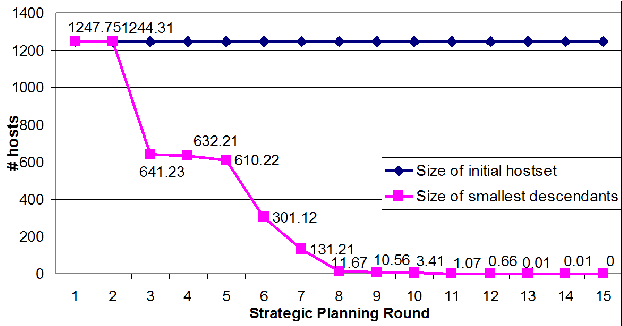

Strategic Planning for Network Data Analysis

May 12, 2013

Abstract: As network traffic monitoring software for cybersecurity, malware detection, and other critical tasks becomes increasingly automated, both the rate of alerts and supporting data gathered and the complexity of the underlying model regularly exceed human processing capabilities. Many of these applications require complex models and constituent rules in order to come up with decisions that influence the operation of entire systems. In this paper, we motivate the novel "strategic planning" problem: gathering data from the world and applying the underlying model of the domain in order to come up with decisions that will monitor the system in an automated manner. We describe our application of automated planning methods to this problem, including the technique that we used to solve it in a manner that would scale to the demands of a real-time, real-world scenario. We then present a PDDL model of one such application scenario related to network administration and monitoring, followed by a description of a novel integrated system that was built to accept generated plans and to continue the execution process. Finally, we present evaluations of two different automated planners and their different capabilities with our integrated system, both on a six-month window of network data and using a simulator.