Kam Woh Ng

Ternary Hashing

Mar 19, 2021

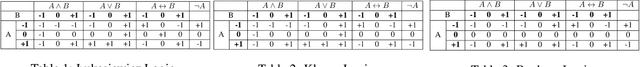

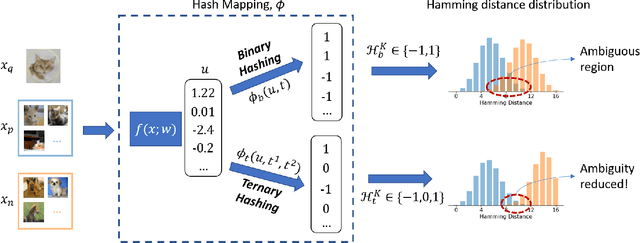

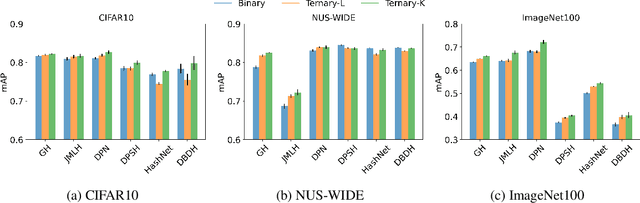

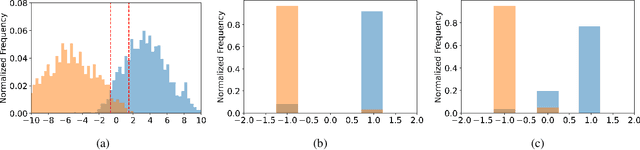

Abstract:This paper proposes a novel ternary hash encoding for learning to hash methods, which provides a principled more efficient coding scheme with performances better than those of the state-of-the-art binary hashing counterparts. Two kinds of axiomatic ternary logic, Kleene logic and {\L}ukasiewicz logic are adopted to calculate the Ternary Hamming Distance (THD) for both the learning/encoding and testing/querying phases. Our work demonstrates that, with an efficient implementation of ternary logic on standard binary machines, the proposed ternary hashing is compared favorably to the binary hashing methods with consistent improvements of retrieval mean average precision (mAP) ranging from 1\% to 5.9\% as shown in CIFAR10, NUS-WIDE and ImageNet100 datasets.

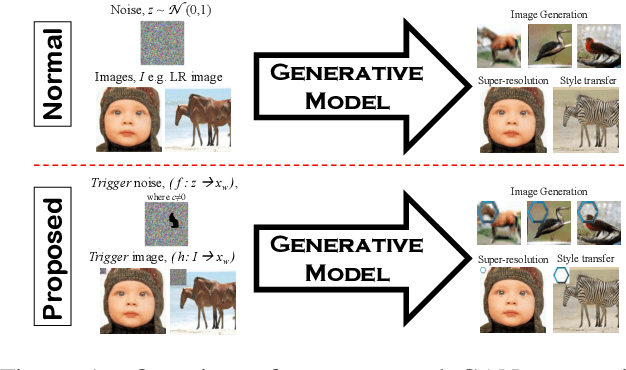

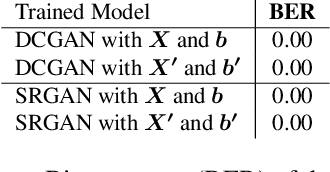

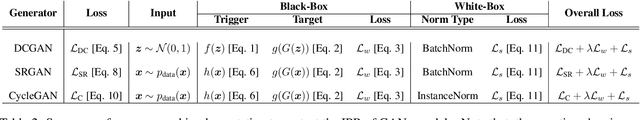

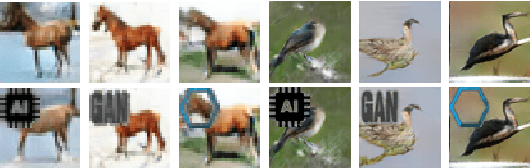

Protecting Intellectual Property of Generative Adversarial Networks from Ambiguity Attack

Mar 01, 2021

Abstract:Ever since Machine Learning as a Service (MLaaS) emerges as a viable business that utilizes deep learning models to generate lucrative revenue, Intellectual Property Right (IPR) has become a major concern because these deep learning models can easily be replicated, shared, and re-distributed by any unauthorized third parties. To the best of our knowledge, one of the prominent deep learning models - Generative Adversarial Networks (GANs) which has been widely used to create photorealistic image are totally unprotected despite the existence of pioneering IPR protection methodology for Convolutional Neural Networks (CNNs). This paper therefore presents a complete protection framework in both black-box and white-box settings to enforce IPR protection on GANs. Empirically, we show that the proposed method does not compromise the original GANs performance (i.e. image generation, image super-resolution, style transfer), and at the same time, it is able to withstand both removal and ambiguity attacks against embedded watermarks.

Rethinking Uncertainty in Deep Learning: Whether and How it Improves Robustness

Nov 27, 2020

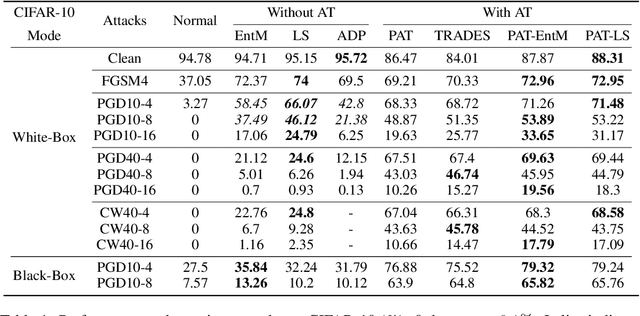

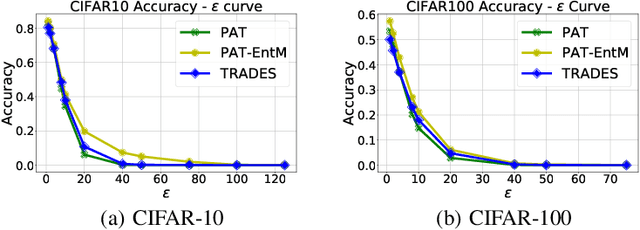

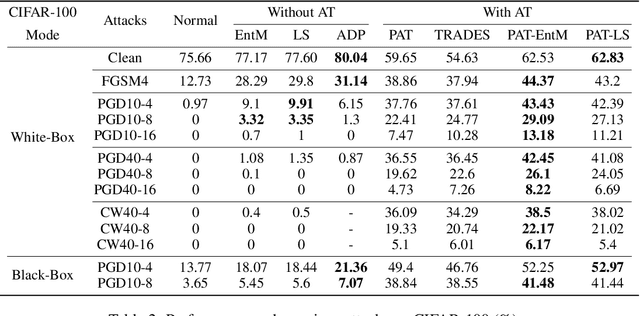

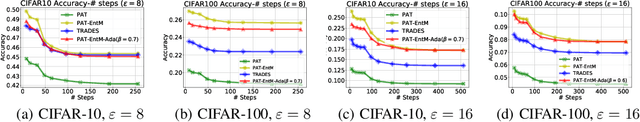

Abstract:Deep neural networks (DNNs) are known to be prone to adversarial attacks, for which many remedies are proposed. While adversarial training (AT) is regarded as the most robust defense, it suffers from poor performance both on clean examples and under other types of attacks, e.g. attacks with larger perturbations. Meanwhile, regularizers that encourage uncertain outputs, such as entropy maximization (EntM) and label smoothing (LS) can maintain accuracy on clean examples and improve performance under weak attacks, yet their ability to defend against strong attacks is still in doubt. In this paper, we revisit uncertainty promotion regularizers, including EntM and LS, in the field of adversarial learning. We show that EntM and LS alone provide robustness only under small perturbations. Contrarily, we show that uncertainty promotion regularizers complement AT in a principled manner, consistently improving performance on both clean examples and under various attacks, especially attacks with large perturbations. We further analyze how uncertainty promotion regularizers enhance the performance of AT from the perspective of Jacobian matrices $\nabla_X f(X;\theta)$, and find out that EntM effectively shrinks the norm of Jacobian matrices and hence promotes robustness.

Protect, Show, Attend and Tell: Image Captioning Model with Ownership Protection

Aug 25, 2020

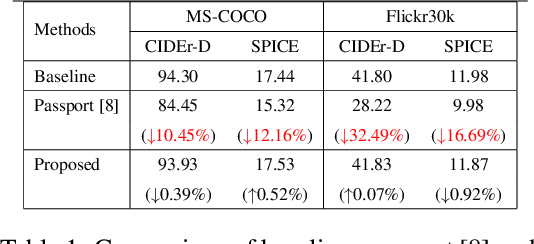

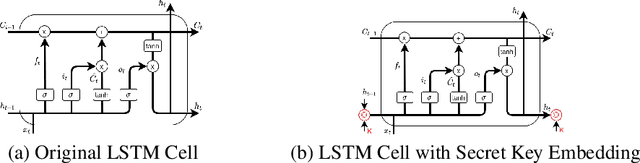

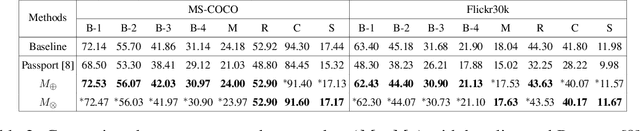

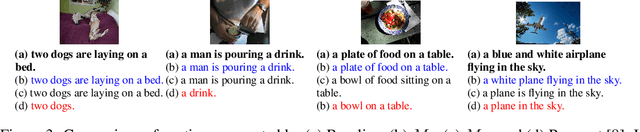

Abstract:By and large, existing Intellectual Property Right (IPR) protection on deep neural networks typically i) focus on image classification task only, and ii) follow a standard digital watermarking framework that were conventionally used to protect the ownership of multimedia and video content. This paper demonstrates that current digital watermarking framework is insufficient to protect image captioning task that often regarded as one of the frontier A.I. problems. As a remedy, this paper studies and proposes two different embedding schemes in the hidden memory state of a recurrent neural network to protect image captioning model. From both theoretically and empirically points, we prove that a forged key will yield an unusable image captioning model, defeating the purpose on infringement. To the best of our knowledge, this work is the first to propose ownership protection on image captioning task. Also, extensive experiments show that the proposed method does not compromise the original image captioning performance on all common captioning metrics on Flickr30k and MS-COCO datasets, and at the same time it is able to withstand both removal and ambiguity attacks.

Rethinking Privacy Preserving Deep Learning: How to Evaluate and Thwart Privacy Attacks

Jun 23, 2020

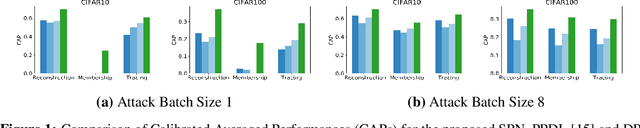

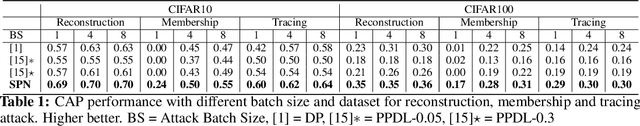

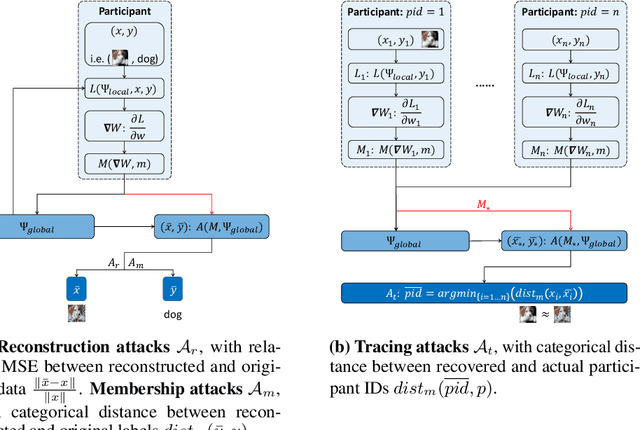

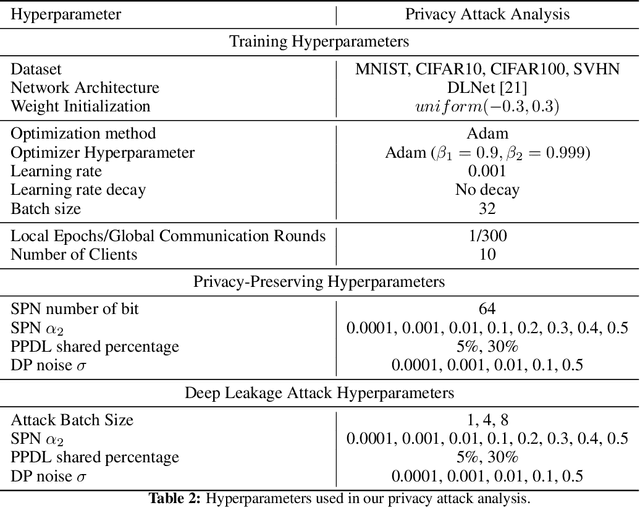

Abstract:This paper investigates capabilities of Privacy-Preserving Deep Learning (PPDL) mechanisms against various forms of privacy attacks. First, we propose to quantitatively measure the trade-off between model accuracy and privacy losses incurred by reconstruction, tracing and membership attacks. Second, we formulate reconstruction attacks as solving a noisy system of linear equations, and prove that attacks are guaranteed to be defeated if condition (2) is unfulfilled. Third, based on theoretical analysis, a novel Secret Polarization Network (SPN) is proposed to thwart privacy attacks, which pose serious challenges to existing PPDL methods. Extensive experiments showed that model accuracies are improved on average by 5-20% compared with baseline mechanisms, in regimes where data privacy are satisfactorily protected.

Rethinking Deep Neural Network Ownership Verification: Embedding Passports to Defeat Ambiguity Attacks

Sep 21, 2019

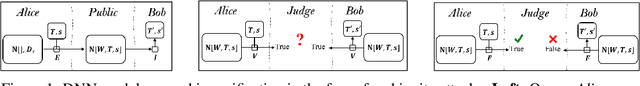

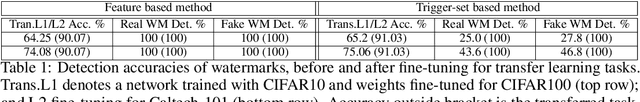

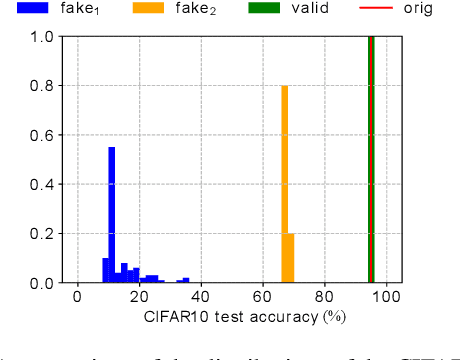

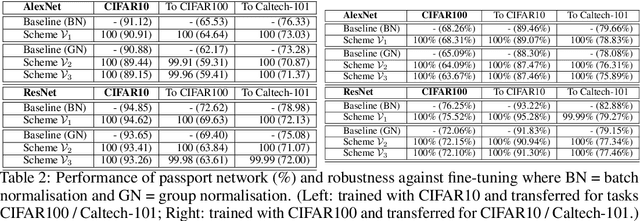

Abstract:With the rapid development of deep neural networks (DNN), there emerges an urgent need to protect the trained DNN models from being illegally copied, redistributed, or abused without respecting the intellectual properties of legitimate owners. Following recent progresses along this line, we investigate a number of watermark-based DNN ownership verification methods in the face of ambiguity attacks, which aim to cast doubts on ownership verification by forging counterfeit watermarks. It is shown that ambiguity attacks pose serious challenges to existing DNN watermarking methods. As remedies to the above-mentioned loophole, this paper proposes novel passport-based DNN ownership verification schemes which are both robust to network modifications and resilient to ambiguity attacks. The gist of embedding digital passports is to design and train DNN models in a way such that, the DNN model performance of an original task will be significantly deteriorated due to forged passports. In other words genuine passports are not only verified by looking for predefined signatures, but also reasserted by the unyielding DNN model performances. Extensive experimental results justify the effectiveness of the proposed passport-based DNN ownership verification schemes. Code and models are available at https://github.com/kamwoh/DeepIPR

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge