John Skinner

What can robotics research learn from computer vision research?

Jan 08, 2020

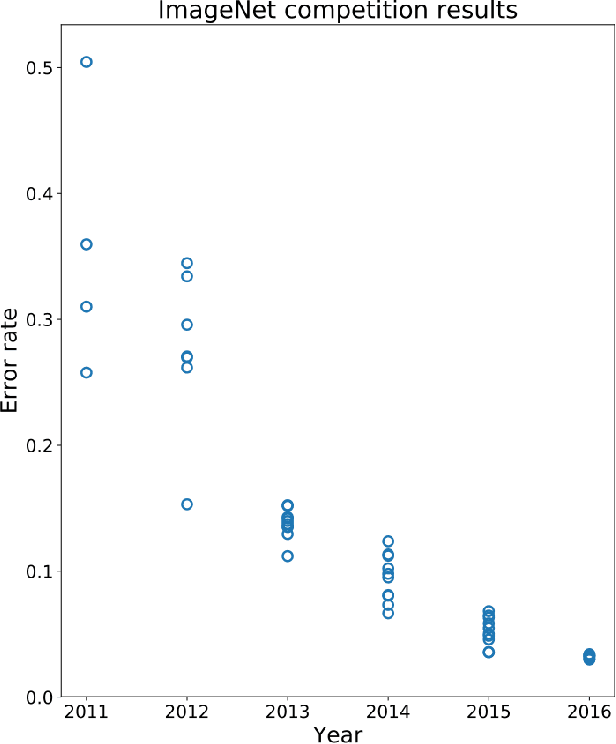

Abstract: The computer vision and robotics research communities are each strong. However, progress in computer vision has become turbo-charged in recent years due to big data, GPU computing, novel learning algorithms and a very effective research methodology. By comparison, progress in robotics seems slower. It is true that robotics came later to exploring the potential of learning -- the advantages over the well-established body of knowledge in dynamics, kinematics, planning and control are still being debated, although reinforcement learning seems to offer real potential. However, the rapid development of computer vision compared to robotics cannot be attributed only to the former's adoption of deep learning. In this paper, we argue that the gains in computer vision are due to research methodology -- evaluation under strict constraints versus experiments; bold numbers versus videos.

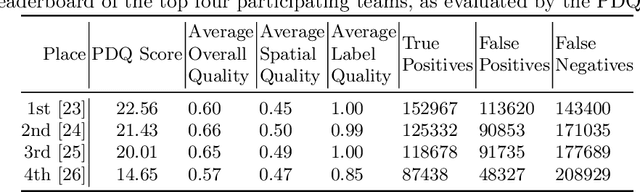

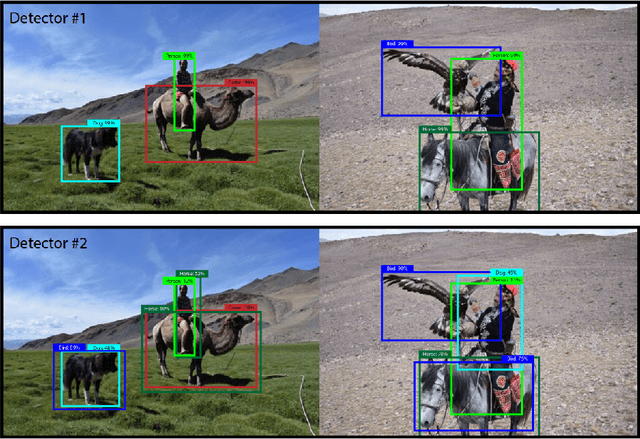

The Probabilistic Object Detection Challenge

Apr 08, 2019

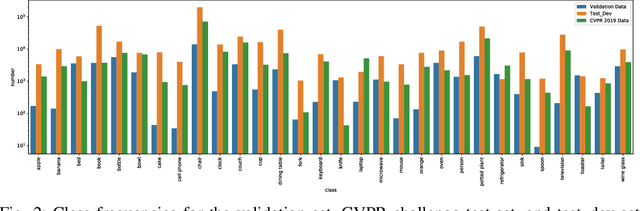

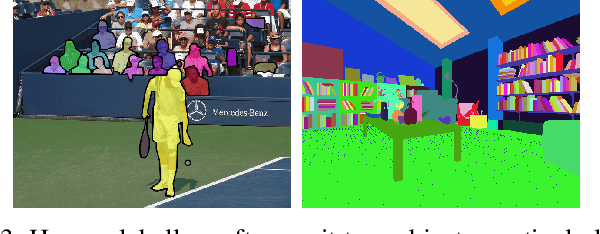

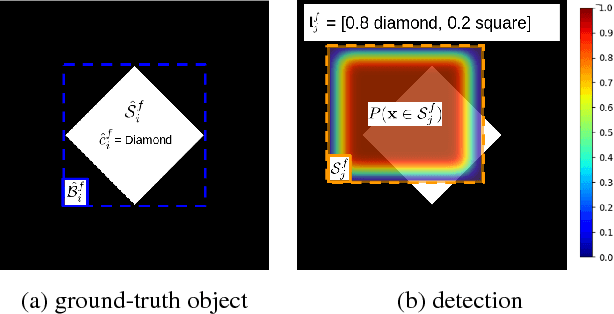

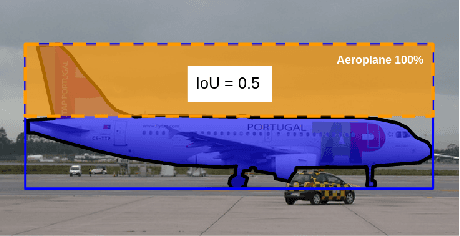

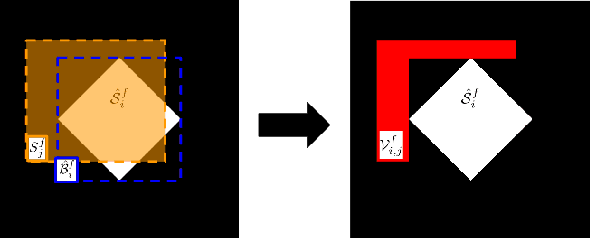

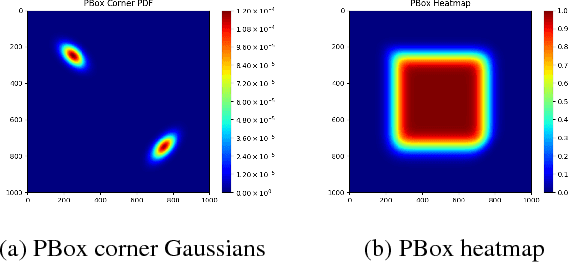

Abstract: We introduce a new challenge for computer and robotic vision, the first ACRV Robotic Vision Challenge, Probabilistic Object Detection. Probabilistic object detection is a new variation on traditional object detection tasks, requiring estimates of spatial and semantic uncertainty. We extend the traditional bounding box format of object detection to express spatial uncertainty using Gaussian distributions for the box corners. The challenge introduces a new test dataset of video sequences, which are designed to more closely resemble the kind of data available to a robotic system. We evaluate probabilistic detections using a new probability-based detection quality (PDQ) measure. The goal in creating this challenge is to draw the computer and robotic vision communities together, toward applying object detection solutions to practical robotics applications.
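To make the "Gaussian distributions for the box corners" idea concrete, here is a minimal sketch of one way such a detection could be represented. It is an illustration only, not the challenge's actual data format: the class names (`GaussianCorner`, `ProbabilisticBox`), the restriction to independent, axis-aligned (diagonal) covariances, and the `pixel_inside_prob` helper are all assumptions made for this example.

```python
import math
from dataclasses import dataclass


def normal_cdf(x, mean, std):
    """Cumulative distribution of a 1-D Gaussian (std must be > 0)."""
    return 0.5 * (1.0 + math.erf((x - mean) / (std * math.sqrt(2.0))))


@dataclass
class GaussianCorner:
    # Hypothetical corner model: independent Gaussians on x and y.
    mean_x: float
    mean_y: float
    std_x: float
    std_y: float


@dataclass
class ProbabilisticBox:
    top_left: GaussianCorner
    bottom_right: GaussianCorner
    label_probs: dict  # class name -> probability (semantic uncertainty)

    def pixel_inside_prob(self, px, py):
        """Probability that pixel (px, py) lies inside the box.

        Assumes the two corners, and the x/y coordinates of each corner,
        are independent. The pixel is inside when it is right of and
        below the top-left corner, and left of and above the bottom-right.
        """
        tl, br = self.top_left, self.bottom_right
        p_right_of_left = normal_cdf(px, tl.mean_x, tl.std_x)
        p_below_top = normal_cdf(py, tl.mean_y, tl.std_y)
        p_left_of_right = 1.0 - normal_cdf(px, br.mean_x, br.std_x)
        p_above_bottom = 1.0 - normal_cdf(py, br.mean_y, br.std_y)
        return p_right_of_left * p_below_top * p_left_of_right * p_above_bottom


box = ProbabilisticBox(
    top_left=GaussianCorner(10.0, 10.0, 2.0, 2.0),
    bottom_right=GaussianCorner(50.0, 50.0, 2.0, 2.0),
    label_probs={"cup": 0.7, "bowl": 0.3},
)
centre_prob = box.pixel_inside_prob(30.0, 30.0)  # well inside the box
edge_prob = box.pixel_inside_prob(10.0, 30.0)    # on the expected left edge
outside_prob = box.pixel_inside_prob(0.0, 30.0)  # well outside the box
```

Under these assumptions, pixels far inside the expected box get a probability near 1, pixels on an expected edge get about 0.5, and the transition becomes softer as the corner standard deviations grow, which is how spatial uncertainty shows up in the representation.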

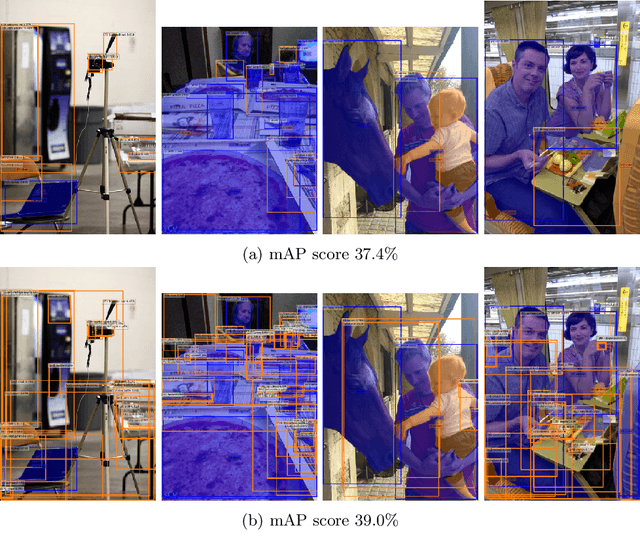

Probability-based Detection Quality (PDQ): A Probabilistic Approach to Detection Evaluation

Dec 04, 2018

Abstract: We propose a new visual object detector evaluation measure that not only assesses detection quality, but also accounts for the spatial and label uncertainties produced by object detection systems. Current evaluation measures such as mean average precision (mAP) do not take these two aspects into account, accepting detections with no spatial uncertainty and using only the label with the winning score instead of a full class probability distribution to rank detections. To overcome these limitations, we propose the probability-based detection quality (PDQ) measure, which evaluates both spatial and label probabilities, requires no thresholds to be predefined, and optimally assigns ground-truth objects to detections. Our experimental evaluation shows that PDQ rewards detections with accurate spatial probabilities and explicitly evaluates label probability to determine detection quality. PDQ aims to encourage the development of new object detection approaches that provide meaningful spatial and label uncertainty measures.
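The "optimally assigns ground-truth objects to detections" step can be illustrated with a small sketch. This is not the PDQ implementation: the function name `best_assignment`, the brute-force search, and the pairwise quality values are hypothetical, and the abstract does not specify how the pairwise quality is computed. The sketch only shows why an optimal one-to-one assignment can differ from greedily matching the highest-scoring pair first.

```python
from itertools import permutations


def best_assignment(quality):
    """Return (total, pairs) for the one-to-one matching with maximum total quality.

    quality[g][d] is an assumed pairwise quality between ground-truth
    object g and detection d. Brute force over permutations, so this is
    only suitable for a handful of objects; a practical implementation
    would use a polynomial-time assignment solver instead.
    """
    n_gt = len(quality)
    n_det = len(quality[0]) if n_gt else 0
    best_total, best_pairs = -1.0, []
    if n_gt <= n_det:
        # Every ground-truth object gets a distinct detection.
        candidates = (list(enumerate(dets))
                      for dets in permutations(range(n_det), n_gt))
    else:
        # Every detection gets a distinct ground-truth object.
        candidates = (list(zip(gts, range(n_det)))
                      for gts in permutations(range(n_gt), n_det))
    for pairs in candidates:
        total = sum(quality[g][d] for g, d in pairs)
        if total > best_total:
            best_total, best_pairs = total, sorted(pairs)
    return best_total, best_pairs


# Hypothetical qualities: rows are ground-truth objects, columns are detections.
quality = [
    [0.90, 0.80],
    [0.85, 0.10],
]
total, pairs = best_assignment(quality)
```

With these made-up values, greedy matching would pair ground truth 0 with detection 0 (0.90) and be left with 0.10 for the remaining pair, whereas the optimal assignment pairs ground truth 0 with detection 1 and ground truth 1 with detection 0 for a higher total.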
