Jeonghun Park

Space-Time Adaptive Beamforming for Satellite Communications: Harnessing Doppler as New Signaling Dimensions

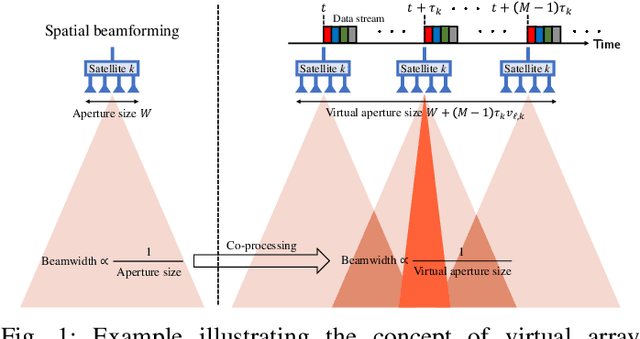

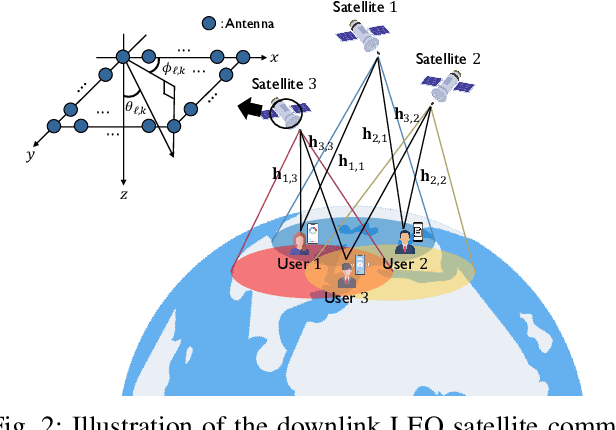

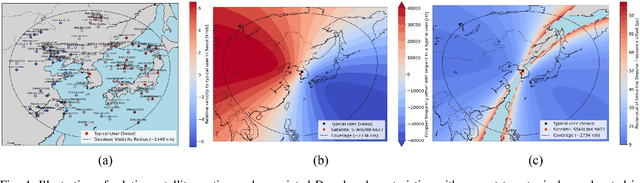

Mar 31, 2026

Abstract: Low Earth orbit (LEO) satellite downlinks are fundamentally limited by severe channel correlation: the line-of-sight (LoS)-dominant propagation and high orbital altitude confine users to a narrow angular region, rendering the multiuser channel matrix ill-conditioned. This paper provides a rigorous characterization of this limitation by exploiting the Vandermonde structure of the channel. Specifically, we link the minimum eigenvalue of the channel Gram matrix to user crowding through a balls-and-bins abstraction, and derive asymptotic sum rate scaling laws for both uniform linear arrays and uniform planar arrays. Our analysis reveals a sharp density threshold beyond which zero-forcing (ZF) precoding provably fails. To overcome this spatial multiplexing breakdown, we propose space-time adaptive beamforming (STAB), which exploits user-dependent residual Doppler shifts as an additional discrimination dimension. By constructing a time-extended channel in the joint space-Doppler domain, STAB restores a non-vanishing sum rate in regimes where purely spatial ZF collapses. We further develop a space-Doppler user selection (SDS) algorithm that leverages both spatial and Doppler separability for scheduling. Numerical results corroborate the analytical predictions and demonstrate that STAB with SDS achieves substantial sum rate gains over conventional methods in dense LEO downlink scenarios.
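As a rough illustration of the ill-conditioning described in this abstract, the sketch below builds a LoS channel for a half-wavelength uniform linear array, where each row is a Vandermonde steering vector, and compares the minimum eigenvalue of the Gram matrix for well-separated and crowded users. The array size, user angles, and function names are illustrative assumptions, not values or code from the paper.

```python
import numpy as np

def ula_channel(angles_rad, n_antennas):
    """Rows are unit-norm half-wavelength ULA steering vectors (Vandermonde structure)."""
    n = np.arange(n_antennas)
    phases = np.pi * np.sin(np.asarray(angles_rad))[:, None] * n[None, :]
    return np.exp(1j * phases) / np.sqrt(n_antennas)

def min_gram_eigenvalue(H):
    """Smallest eigenvalue of the K x K Gram matrix H H^H (eigvalsh sorts ascending)."""
    return np.linalg.eigvalsh(H @ H.conj().T)[0]

N = 16  # assumed number of antennas
separated = np.deg2rad([-30.0, -10.0, 10.0, 30.0])  # users spread over a wide sector
crowded = np.deg2rad([-0.6, -0.2, 0.2, 0.6])        # users packed into a narrow cone

lam_sep = min_gram_eigenvalue(ula_channel(separated, N))
lam_crowd = min_gram_eigenvalue(ula_channel(crowded, N))
print(f"min eigenvalue, separated users: {lam_sep:.3e}")
print(f"min eigenvalue, crowded users:   {lam_crowd:.3e}")
```

Because ZF inverts the Gram matrix, a near-zero minimum eigenvalue in the crowded case translates into a large power penalty, which is the breakdown the abstract refers to.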

Scalable and Convergent Generalized Power Iteration Precoding for Massive MIMO Systems

Mar 04, 2026

Abstract: In massive multiple-input multiple-output (MIMO) systems, achieving high spectral efficiency (SE) often requires advanced precoding algorithms whose complexity scales rapidly with the number of antennas, limiting practical deployment. In this paper, we develop a scalable and computationally efficient generalized power iteration precoding (GPIP) framework for massive MIMO systems under both perfect and imperfect channel state information at the transmitter (CSIT). By exploiting the low-dimensional subspace property of optimal precoders, we reformulate the high-dimensional beamforming problem into a lower-dimensional weight optimization that scales with the number of users rather than antennas. We further extend this framework to the imperfect CSIT scenario by showing that stationary solutions reside in a combined subspace spanned by the estimated channel and error covariance matrices, enabling a robust design via low-rank approximation. To reduce computational cost, we leverage the Sherman-Morrison formula to simplify matrix inversions. Moreover, interpreting the GPIP update as a projected preconditioned gradient ascent method, we establish convergence guarantees and develop a stable and monotonic algorithm using a backtracking line search. Numerical results demonstrate that the proposed methods achieve the highest SE among state-of-the-art linear precoders with significantly reduced complexity and convergence time, highlighting their suitability for large-scale MIMO systems.
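The Sherman-Morrison formula mentioned in this abstract updates a known inverse after a rank-one change without a fresh inversion. The sketch below checks the generic identity numerically. How GPIP applies it is not specified in the abstract, so the matrix sizes and the function name `sherman_morrison_update` are assumptions for illustration only.

```python
import numpy as np

def sherman_morrison_update(A_inv, u, v):
    """Return (A + u v^H)^{-1} from A^{-1} using only matrix-vector products."""
    Au = A_inv @ u                # A^{-1} u
    vA = v.conj() @ A_inv         # v^H A^{-1}
    denom = 1.0 + v.conj() @ Au   # 1 + v^H A^{-1} u, must be nonzero
    return A_inv - np.outer(Au, vA) / denom

rng = np.random.default_rng(0)
K = 6  # assumed dimension (e.g., on the order of the number of users)
B = rng.standard_normal((K, K)) + 1j * rng.standard_normal((K, K))
A = 2.0 * np.eye(K) + B @ B.conj().T / K   # Hermitian positive definite
u = rng.standard_normal(K) + 1j * rng.standard_normal(K)

A_inv = np.linalg.inv(A)
updated = sherman_morrison_update(A_inv, u, u)       # rank-one update A + u u^H
direct = np.linalg.inv(A + np.outer(u, u.conj()))    # reference: invert from scratch
print("max abs difference:", np.max(np.abs(updated - direct)))
```

The update costs O(K^2) operations, compared with O(K^3) for a full inversion.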

Fractional Programming for Kullback-Leibler Divergence in Hypothesis Testing

Jan 02, 2026

Abstract: Maximizing the Kullback-Leibler divergence (KLD) is a fundamental problem in waveform design for active sensing and hypothesis testing, as it directly relates to the error exponent of detection probability. However, the associated optimization problem is highly nonconvex due to the intricate coupling of log-determinant and matrix trace terms. Existing solutions often suffer from high computational complexity, typically requiring matrix inversion at every iteration. In this paper, we propose a computationally efficient optimization framework based on fractional programming (FP). Our key idea is to reformulate the KLD maximization problem into a sequence of tractable quadratic subproblems using matrix FP. To further reduce complexity, we introduce a nonhomogeneous relaxation technique that replaces the costly linear system solver with a simple closed-form update, thereby reducing the per-iteration complexity to quadratic order. To compensate for the convergence speed trade-off caused by relaxation, we employ an acceleration method called STEM by interpreting the iterative scheme as a fixed-point mapping. The resulting algorithm achieves significantly faster convergence rates with low per-iteration cost. Numerical results demonstrate that our approach reduces the total runtime by orders of magnitude compared to a state-of-the-art benchmark. Finally, we apply the proposed framework to a multiple random access scenario and a joint integrated sensing and communication scenario, validating the efficacy of our framework in such applications.

The MIMO-ME-MS Channel: Analysis and Algorithm for Secure MIMO Integrated Sensing and Communications

Dec 24, 2025

Abstract: This paper studies precoder design for secure MIMO integrated sensing and communications (ISAC) by introducing the MIMO-ME-MS channel, where a multi-antenna transmitter serves a legitimate multi-antenna receiver in the presence of a multi-antenna eavesdropper while simultaneously enabling sensing via a multi-antenna sensing receiver. Using sensing mutual information as the sensing metric, we formulate a nonconvex weighted objective that jointly captures secure communication (via secrecy rate) and sensing performance. A high-SNR analysis based on subspace decomposition characterizes the maximum achievable weighted degrees of freedom and reveals that a quasi-optimal precoder must span a "useful subspace," highlighting why straightforward extensions of classical wiretap/ISAC precoders can be suboptimal in this tripartite setting. Motivated by these insights, we develop a practical two-stage iterative algorithm that alternates between sequential basis construction and power allocation via a difference-of-convex program. Numerical results show that the proposed approach captures the desirable precoding structure predicted by the analysis and yields substantial gains in the MIMO-ME-MS channel.

State Estimation with 1-Bit Observations and Imperfect Models: Bussgang Meets Kalman in Neural Networks

Jul 23, 2025

Abstract: State estimation from noisy observations is a fundamental problem in many applications of signal processing. Traditional methods, such as the extended Kalman filter, work well under fully known Gaussian models, while recent hybrid deep learning frameworks, combining model-based and data-driven approaches, can also handle partially known models and non-Gaussian noise. However, existing studies commonly assume the absence of quantization distortion, which is inevitable, especially with non-ideal analog-to-digital converters. In this work, we consider a state estimation problem with 1-bit quantization. 1-bit quantization causes significant quantization distortion and severe information loss, rendering conventional state estimation strategies unsuitable. To address this, inspired by the Bussgang decomposition technique, we first develop the Bussgang-aided Kalman filter by assuming perfectly known models. The proposed method incorporates quantization distortion into the state estimation process. In addition, we propose a computationally efficient variant, referred to as the reduced Bussgang-aided Kalman filter and, building upon it, introduce a deep learning-based approach for handling partially known models, termed the Bussgang-aided KalmanNet. In particular, the Bussgang-aided KalmanNet jointly uses a dithering technique and a gated recurrent unit (GRU) architecture to effectively mitigate the effects of 1-bit quantization and model mismatch. Through simulations on the Lorenz-Attractor model and the Michigan NCLT dataset, we demonstrate that our proposed methods achieve accurate state estimation performance even under highly nonlinear, mismatched models and 1-bit observations.
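For context on the Bussgang decomposition named in this abstract: for a zero-mean Gaussian input x with variance σ², the 1-bit output can be written as sign(x) = B·x + d, where B = sqrt(2 / (π σ²)) and the distortion d is uncorrelated with x. The following Monte Carlo sketch checks this scalar identity. It is a textbook illustration with an assumed σ and sample size, not the filter proposed in the paper.

```python
import numpy as np

rng = np.random.default_rng(1)
sigma = 2.0                                   # assumed input standard deviation
x = sigma * rng.standard_normal(200_000)      # zero-mean Gaussian input
q = np.sign(x)                                # 1-bit quantizer output

# Bussgang gain: B = E[q x] / E[x^2] = sqrt(2 / (pi * sigma^2)) for Gaussian x
gain_theory = np.sqrt(2.0 / (np.pi * sigma**2))
gain_empirical = np.mean(q * x) / np.mean(x * x)

# Distortion term d = q - B x should be (approximately) uncorrelated with x
distortion = q - gain_theory * x
corr = np.mean(distortion * x)

print(f"theoretical gain: {gain_theory:.4f}")
print(f"empirical gain:   {gain_empirical:.4f}")
print(f"E[d x] estimate:  {corr:.4f}")
```

This linearized view is what allows a Kalman-style update to treat the quantizer as a linear gain plus an additional noise term.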

Space-Time Beamforming for LEO Satellite Communications

May 12, 2025

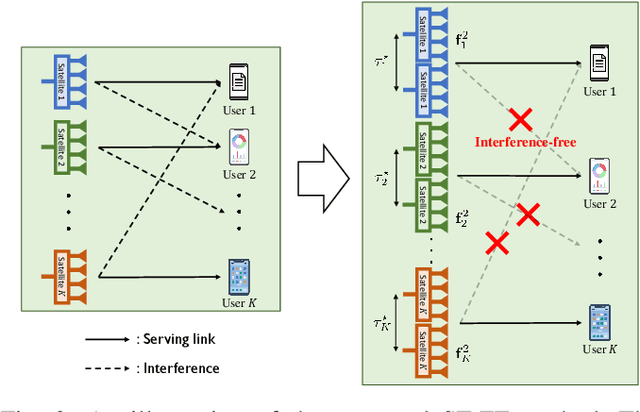

Abstract: Inter-beam interference poses a significant challenge in low Earth orbit (LEO) satellite communications due to dense satellite constellations. To address this issue, we introduce space-time beamforming, a novel paradigm that leverages the space-time channel vector, uniquely determined by the angle of arrival (AoA) and relative Doppler shift, to optimize beamforming between a moving satellite transmitter and a ground station user. We propose two space-time beamforming techniques: space-time zero-forcing (ST-ZF) and space-time signal-to-leakage-plus-noise ratio (ST-SLNR) maximization. In a partially connected interference channel, ST-ZF achieves a 3 dB SNR gain over the conventional interference avoidance method using maximum ratio transmission beamforming. Moreover, in general interference networks, ST-SLNR beamforming significantly enhances sum spectral efficiency compared to conventional interference management approaches. These results demonstrate the effectiveness of space-time beamforming in improving spectral efficiency and interference mitigation for next-generation LEO satellite networks.
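One way to picture a channel vector determined jointly by AoA and Doppler shift is as the Kronecker product of a temporal (Doppler) steering vector and a spatial steering vector. The sketch below uses that assumed model to show that two links with the same AoA, which are indistinguishable in space alone, become separable once Doppler is included. The model, the parameter values, and all function names are illustrative assumptions and may differ from the paper's formulation.

```python
import numpy as np

def spatial_vec(theta, n_ant):
    """Half-wavelength ULA steering vector for angle theta (radians)."""
    return np.exp(1j * np.pi * np.sin(theta) * np.arange(n_ant))

def doppler_vec(f_d, n_slots, t_s):
    """Phase progression over n_slots symbols of duration t_s at Doppler shift f_d (Hz)."""
    return np.exp(1j * 2 * np.pi * f_d * t_s * np.arange(n_slots))

def space_time_vec(theta, f_d, n_ant, n_slots, t_s):
    """Assumed space-time channel vector: Doppler vector (x) spatial vector."""
    return np.kron(doppler_vec(f_d, n_slots, t_s), spatial_vec(theta, n_ant))

def correlation(a, b):
    """Normalized inner-product magnitude, between 0 and 1."""
    return abs(np.vdot(a, b)) / (np.linalg.norm(a) * np.linalg.norm(b))

n_ant, n_slots, t_s = 8, 4, 1e-3          # assumed array size, slots, symbol time
theta = 0.1                                # same AoA for both links
f_d1, f_d2 = 0.0, 200.0                    # different relative Doppler shifts (Hz)

spatial_corr = correlation(spatial_vec(theta, n_ant), spatial_vec(theta, n_ant))
st_corr = correlation(space_time_vec(theta, f_d1, n_ant, n_slots, t_s),
                      space_time_vec(theta, f_d2, n_ant, n_slots, t_s))
print(f"spatial-only correlation: {spatial_corr:.3f}")
print(f"space-time correlation:   {st_corr:.3f}")
```

A lower correlation between the extended vectors leaves room for a ZF-style or SLNR-style beamformer to suppress interference that a purely spatial design cannot remove.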

Uplink Coordinated Pilot Design for 1-bit Massive MIMO in Correlated Channel

Feb 19, 2025

Abstract: In this paper, we propose a coordinated pilot design method to minimize the channel estimation mean squared error (MSE) in massive multiple-input multiple-output (MIMO) systems with 1-bit analog-to-digital converters (ADCs). Under the assumption that the well-known Bussgang linear minimum mean square error (BLMMSE) estimator is used for channel estimation, we first observe that the resulting MSE leads to an intractable optimization problem, as it involves the arcsin function and a complex multiple matrix ratio form. To resolve this, we derive an approximate MSE for the low signal-to-noise ratio (SNR) regime, based on which we develop an efficient coordinated pilot design using a fractional programming technique. The proposed pilot design differs from existing work in that it is applicable to general system environments, including correlated channels and multi-cell environments. We demonstrate that the proposed method outperforms conventional approaches in channel estimation accuracy.

Reducing Latency by Eliminating CSIT Feedback: FDD Downlink MIMO Precoding Without CSIT Feedback for Internet-of-Things Communications

Jan 13, 2025

Abstract: This paper presents a novel framework for low-latency frequency division duplex (FDD) multi-input multi-output (MIMO) transmission for Internet of Things (IoT) communications. Our key idea is to eliminate the feedback associated with acquiring downlink channel state information at the transmitter (CSIT). Instead, we propose to reconstruct downlink CSIT from uplink reference signals by exploiting the frequency invariance property of channel parameters. Nonetheless, the frequency disparity between the uplink and downlink makes it impossible to obtain perfect downlink CSIT, resulting in substantial interference. To address this, we formulate a max-min fairness problem and propose a rate-splitting multiple access (RSMA)-aided efficient precoding method. In particular, to fully harness the potential benefits of RSMA, we propose a method that approximates the error covariance matrix and incorporates it into the precoder optimization process. This approach effectively accounts for the impact of imperfect CSIT, enabling the design of a robust precoder that efficiently handles CSIT inaccuracies. Simulation results demonstrate that our framework outperforms other baseline methods in terms of the minimum spectral efficiency when no direct CSI feedback is used. Moreover, we show that our framework significantly reduces communication latency compared to conventional CSI feedback-based methods, underscoring its effectiveness in enhancing latency performance for IoT communications.

A Selective Secure Precoding Framework for MU-MIMO Rate-Splitting Multiple Access Networks Under Limited CSIT

Dec 26, 2024

Abstract: In this paper, we propose a robust and adaptable secure precoding framework designed to handle an intricate scenario in which legitimate users have different information security requirements: secure private information or normal public information. Leveraging rate-splitting multiple access (RSMA), we formulate the sum secrecy spectral efficiency (SE) maximization problem in downlink multi-user multiple-input multiple-output (MIMO) systems with multiple eavesdroppers. To address the challenges of heterogeneous security requirements, non-convexity, and non-smoothness, we first approximate the problem using a LogSumExp technique. Subsequently, we derive the first-order optimality condition in the form of a generalized eigenvalue problem. We utilize a power iteration-based method to solve the condition, thereby achieving a superior local optimal solution. The proposed algorithm is further extended to a more realistic scenario involving limited channel state information at the transmitter (CSIT). To effectively utilize the limited channel information, we employ a conditional average rate approach. By deriving useful bounds on the conditional average, we establish a lower bound for the objective function. We then apply the same optimization method as in the perfect CSIT case. In simulations, we validate the proposed algorithm in terms of the sum secrecy SE.
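The LogSumExp technique mentioned in this abstract is commonly used to replace a non-smooth min (or max) with a differentiable surrogate. The sketch below shows the generic smooth-minimum form, min_i x_i ≈ -α log Σ_i exp(-x_i / α), together with its standard bounds. The rate values and the smoothing parameter `alpha` are assumed for illustration, and the exact surrogate used in the paper may differ.

```python
import numpy as np

def smooth_min(x, alpha):
    """LogSumExp surrogate for min(x): -alpha * log(sum(exp(-x / alpha))).

    The minimum is factored out before exponentiating for numerical stability.
    """
    x = np.asarray(x, dtype=float)
    m = x.min()
    return m - alpha * np.log(np.sum(np.exp(-(x - m) / alpha)))

rates = np.array([1.2, 0.7, 2.5])   # assumed per-user rates (bits/s/Hz)
alpha = 0.05                        # smaller alpha gives a tighter approximation

approx = smooth_min(rates, alpha)
exact = rates.min()
lower = exact - alpha * np.log(len(rates))   # guaranteed lower bound on the surrogate
print(f"exact min: {exact:.4f}, smooth min: {approx:.4f}, lower bound: {lower:.4f}")
```

The surrogate always lies between `min(x) - alpha * log(K)` and `min(x)`, so the approximation error is controlled directly by `alpha`.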

Low-Earth Orbit Satellite Network Analysis: Coverage under Distance-Dependent Shadowing

Sep 06, 2024

Abstract: This paper offers a thorough analysis of the coverage performance of Low Earth Orbit (LEO) satellite networks using a strongest satellite association approach, with a particular emphasis on shadowing effects modeled through a Poisson point process (PPP)-based network framework. We derive an analytical expression for the coverage probability, which incorporates key system parameters and a distance-dependent shadowing probability function, explicitly accounting for both line-of-sight and non-line-of-sight propagation channels. To enhance the practical relevance of our findings, we provide both lower and upper bounds for the coverage probability and introduce a closed-form solution based on a simplified shadowing model. Our analysis reveals several important network design insights, including the enhancement of coverage probability by distance-dependent shadowing effects and the identification of an optimal satellite altitude that balances beam gain benefits with interference drawbacks. Notably, our PPP-based network model shows strong alignment with other established models, confirming its accuracy and applicability across a variety of satellite network configurations. The insights gained from our analysis are valuable for optimizing LEO satellite deployment strategies and improving network performance in diverse scenarios.
