I. Parra

Predicting Vehicles Trajectories in Urban Scenarios with Transformer Networks and Augmented Information

Jun 07, 2021

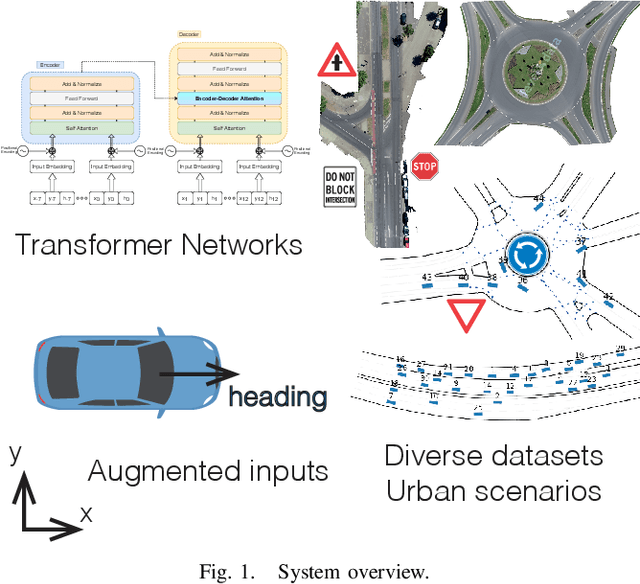

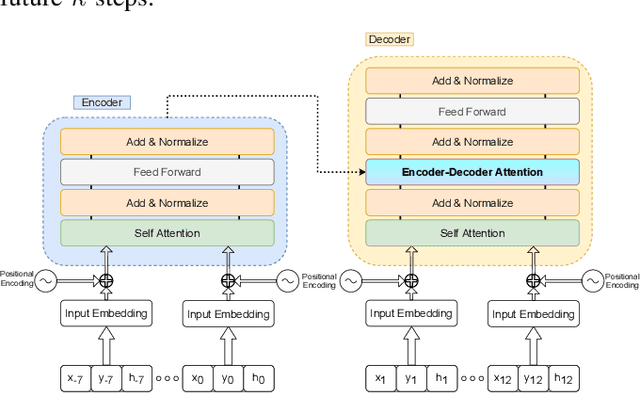

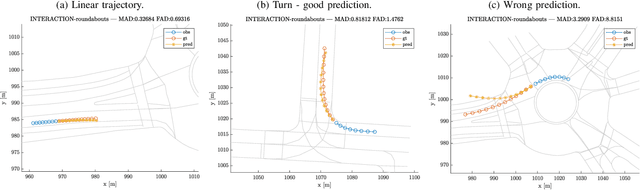

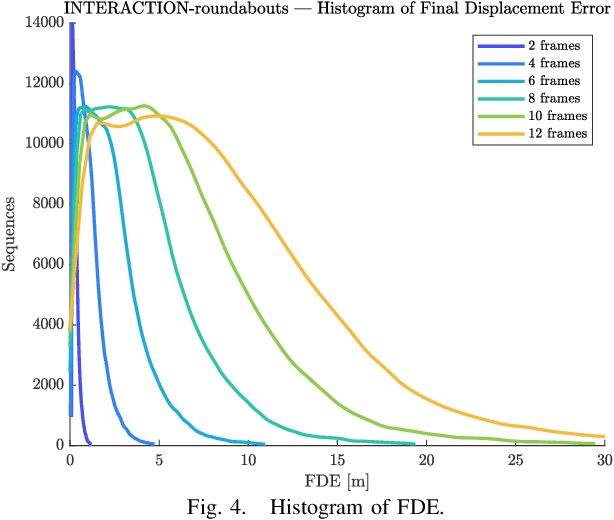

Abstract: Understanding the behavior of road users is of vital importance for the development of trajectory prediction systems. In this context, the latest advances have focused on recurrent structures that establish the social interaction between the agents involved in the scene. More recently, simpler structures based on Transformer Networks and using positional information have also been introduced for predicting pedestrian trajectories. They allow each agent's trajectory to be modelled individually, without any complex interaction terms. Our model exploits these simple structures by adding augmented data (position and heading) and adapting their use to the problem of vehicle trajectory prediction in urban scenarios, with prediction horizons of up to 5 seconds. In addition, a cross-performance analysis is carried out between different types of scenarios, including highways, intersections and roundabouts, using recent datasets (inD, rounD, highD and INTERACTION). Our model achieves state-of-the-art results and proves to be flexible and adaptable to different types of urban contexts.

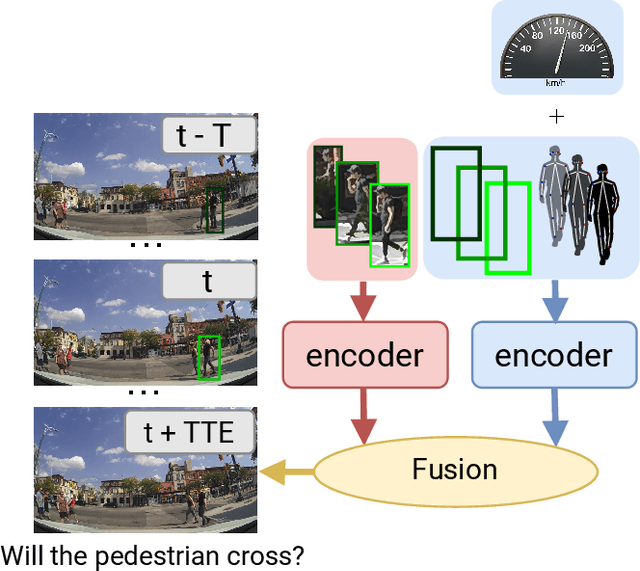

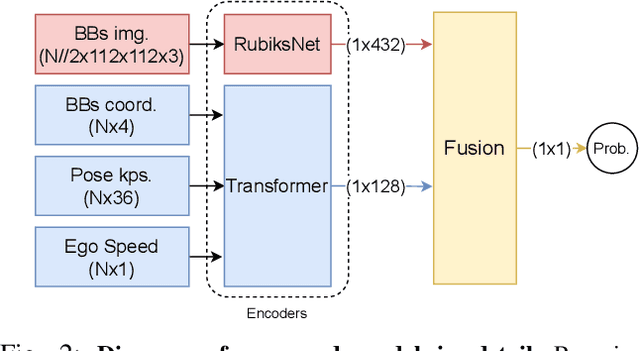

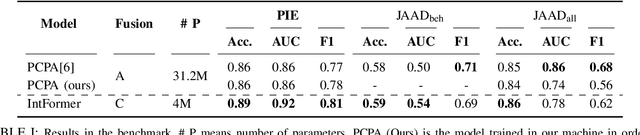

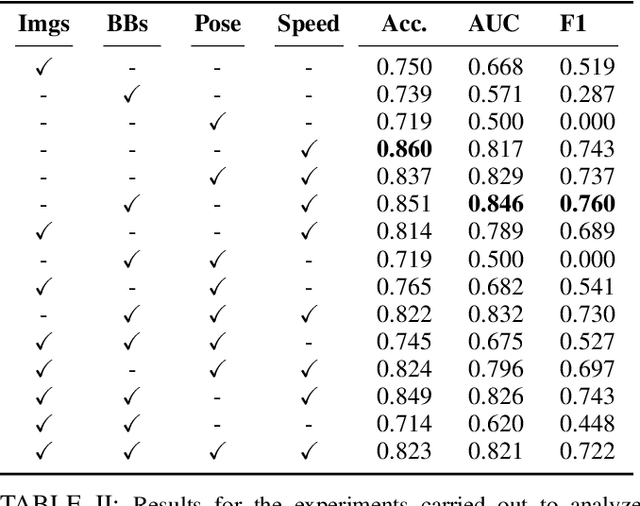

IntFormer: Predicting pedestrian intention with the aid of the Transformer architecture

May 18, 2021

Abstract: Understanding pedestrian crossing behavior is an essential goal in intelligent vehicle development, leading to improvements in safety and traffic flow. In this paper, we develop a method called IntFormer. It is based on the transformer architecture and a novel convolutional video classification model called RubiksNet. Following the evaluation procedure of a recent benchmark, we show that our model reaches state-of-the-art results with good performance ($\approx 40$ seq. per second) and size ($8\times$ smaller than the best performing model), making it suitable for real-time usage. We also explore each of the input features, finding that ego-vehicle speed is the most important variable, possibly due to the similarity of the crossing cases in the PIE dataset.

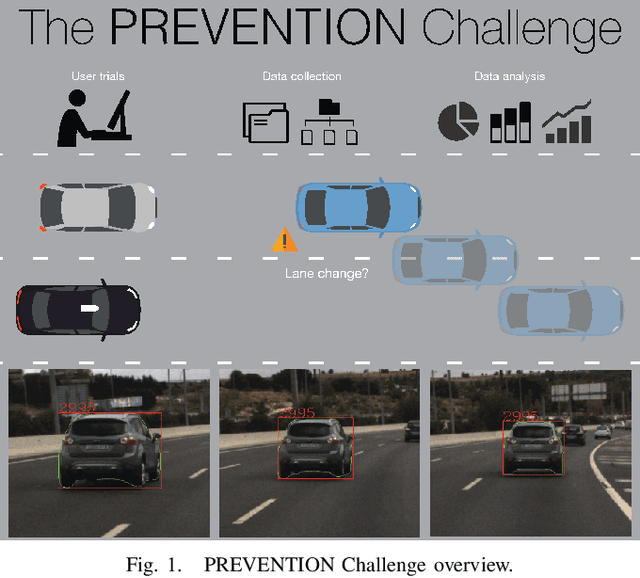

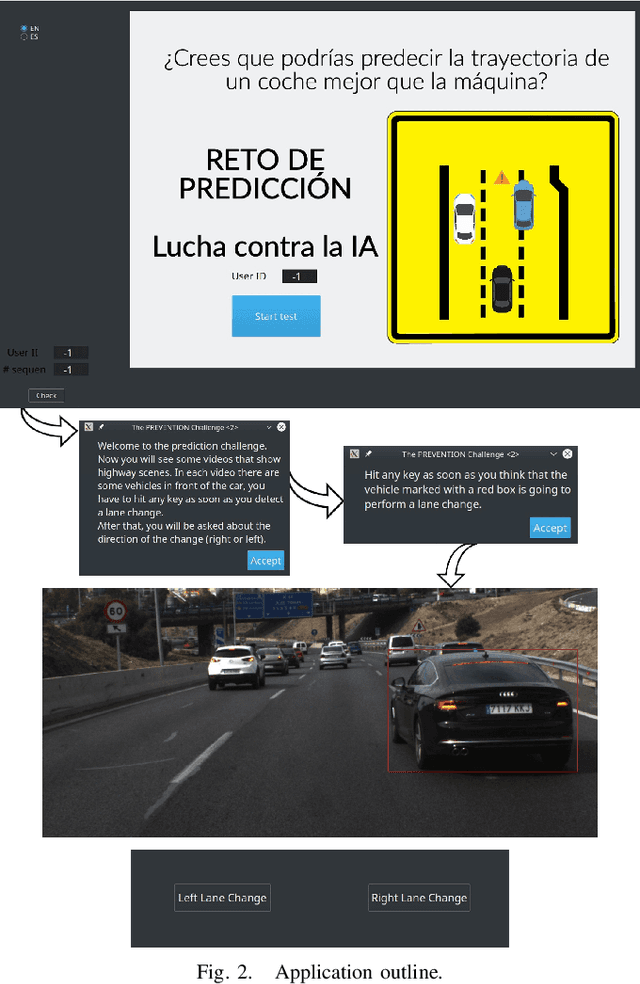

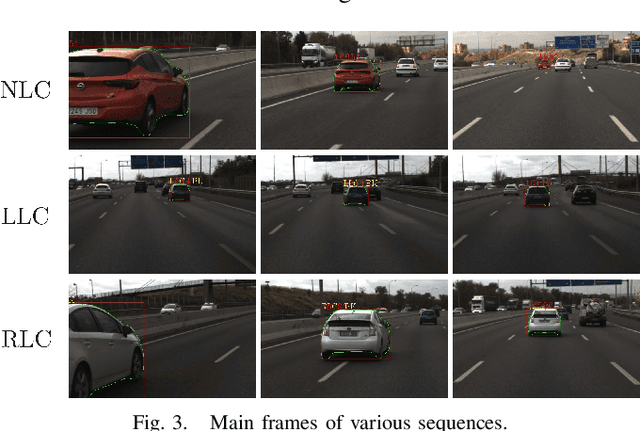

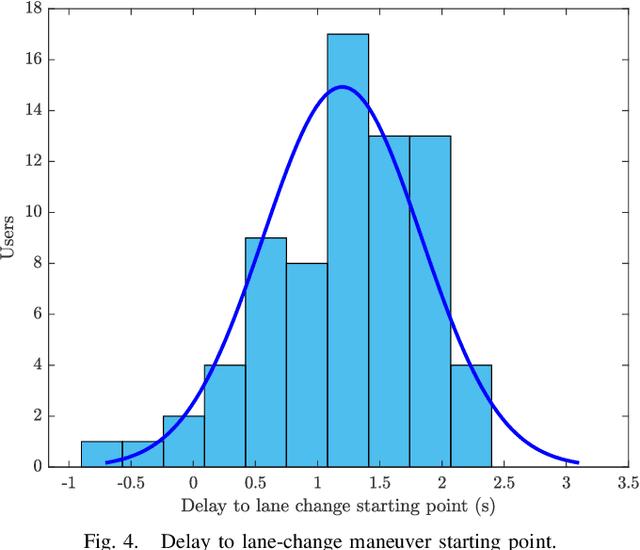

The PREVENTION Challenge: How Good Are Humans Predicting Lane Changes?

Sep 11, 2020

Abstract: While driving on highways, every driver tries to be aware of the behavior of surrounding vehicles, including possible emergency braking, evasive maneuvers to avoid obstacles, unexpected lane changes, or other emergencies that could lead to an accident. In this paper, humans' ability to predict lane changes in highway scenarios is analyzed using video sequences extracted from the PREVENTION dataset, a database focused on research on vehicle intention and trajectory prediction. Users had to indicate the moment at which they considered that a target vehicle was starting a lane change maneuver, and then indicate its direction: left or right. The responses have been carefully analyzed and compared to ground truth labels, evaluating statistical models to understand whether humans can actually predict lane changes. The study has revealed that most participants are unable to anticipate lane-change maneuvers, detecting them only after they have started. These results might serve as a baseline for evaluating the prediction ability of AI systems, assessing whether those systems can outperform human skills by analyzing hidden cues that seem to go unnoticed, improving the detection time, and even anticipating maneuvers in some cases.

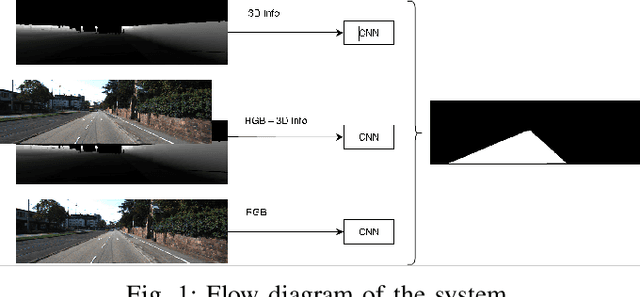

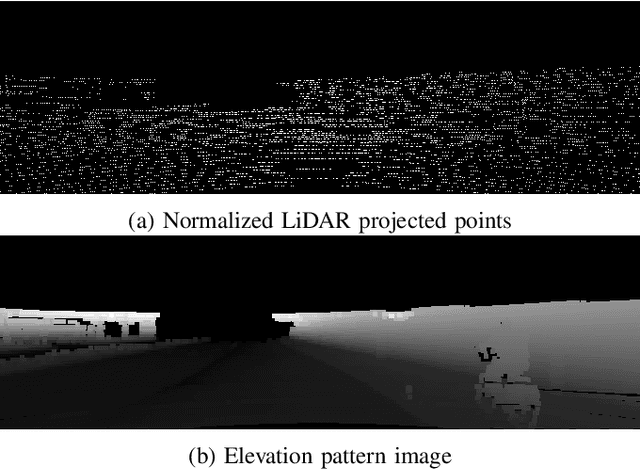

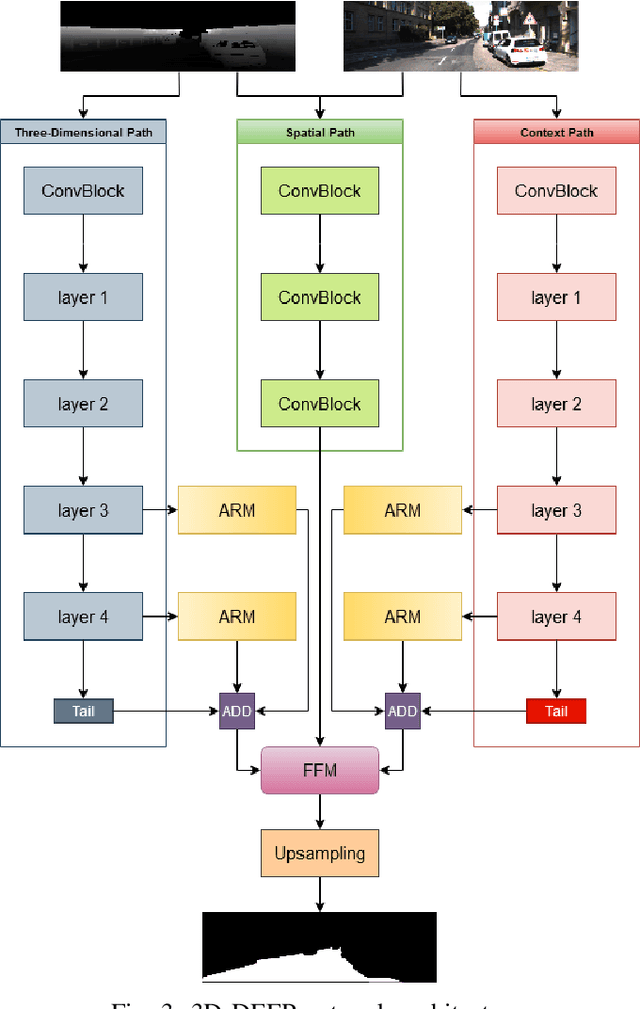

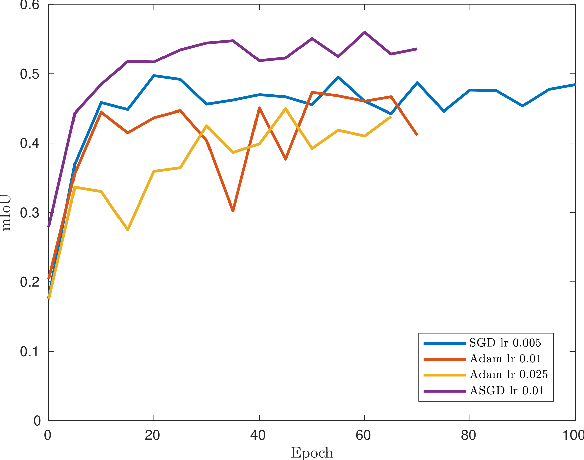

3D-DEEP: 3-Dimensional Deep-learning based on elevation patterns for road scene interpretation

Sep 01, 2020

Abstract: Road detection and segmentation is a crucial task in computer vision for safe autonomous driving. With this in mind, a new network architecture (3D-DEEP) and its end-to-end training methodology for CNN-based semantic segmentation are described in this paper. The method relies on disparity-filtered and LiDAR-projected images for three-dimensional information, and on image feature extraction through fully convolutional network architectures. The developed models were trained and validated on the Cityscapes dataset, using only the finely annotated examples with 19 different training classes, and on the KITTI road dataset. A mean intersection over union (mIoU) of 72.32% has been obtained for the 19 Cityscapes training classes using the validation images. On the KITTI dataset, the model has achieved an F1 score of 97.85% in validation and 96.02% using the test images.
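The two metrics quoted above can be illustrated with a minimal sketch. It is not taken from the paper: the function names, the toy labels, and the convention of skipping labels outside `[0, num_classes)` (for example a void label such as 255) are assumptions made for the example.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """Count (true class, predicted class) pairs over flattened label maps."""
    y_true = np.asarray(y_true).ravel()
    y_pred = np.asarray(y_pred).ravel()
    # Assumption: ground-truth labels outside [0, num_classes) are "void" and skipped.
    keep = (y_true >= 0) & (y_true < num_classes)
    idx = y_true[keep] * num_classes + y_pred[keep]
    counts = np.bincount(idx, minlength=num_classes ** 2)
    return counts.reshape(num_classes, num_classes)

def mean_iou(cm):
    """Mean of per-class IoU = TP / (TP + FP + FN), over classes that occur."""
    tp = np.diag(cm).astype(float)
    union = cm.sum(axis=0) + cm.sum(axis=1) - tp
    valid = union > 0
    return float((tp[valid] / union[valid]).mean())

def f1_score(cm, positive=1):
    """F1 = 2*TP / (2*TP + FP + FN) for one class, e.g. 'road' in a binary task."""
    tp = cm[positive, positive]
    fp = cm[:, positive].sum() - tp
    fn = cm[positive, :].sum() - tp
    denom = 2 * tp + fp + fn
    return float(2 * tp / denom) if denom else 0.0

# Toy example: four pixels, two classes (0 = background, 1 = road).
cm = confusion_matrix([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2)
print(mean_iou(cm), f1_score(cm, positive=1))
```

In the toy example, class 0 has an IoU of 1/2 and class 1 an IoU of 2/3, so the mIoU is their average, while the F1 score for the road class is 0.8.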

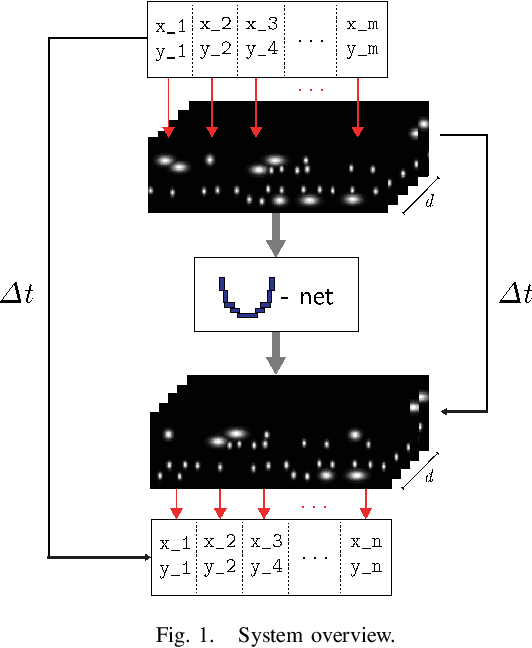

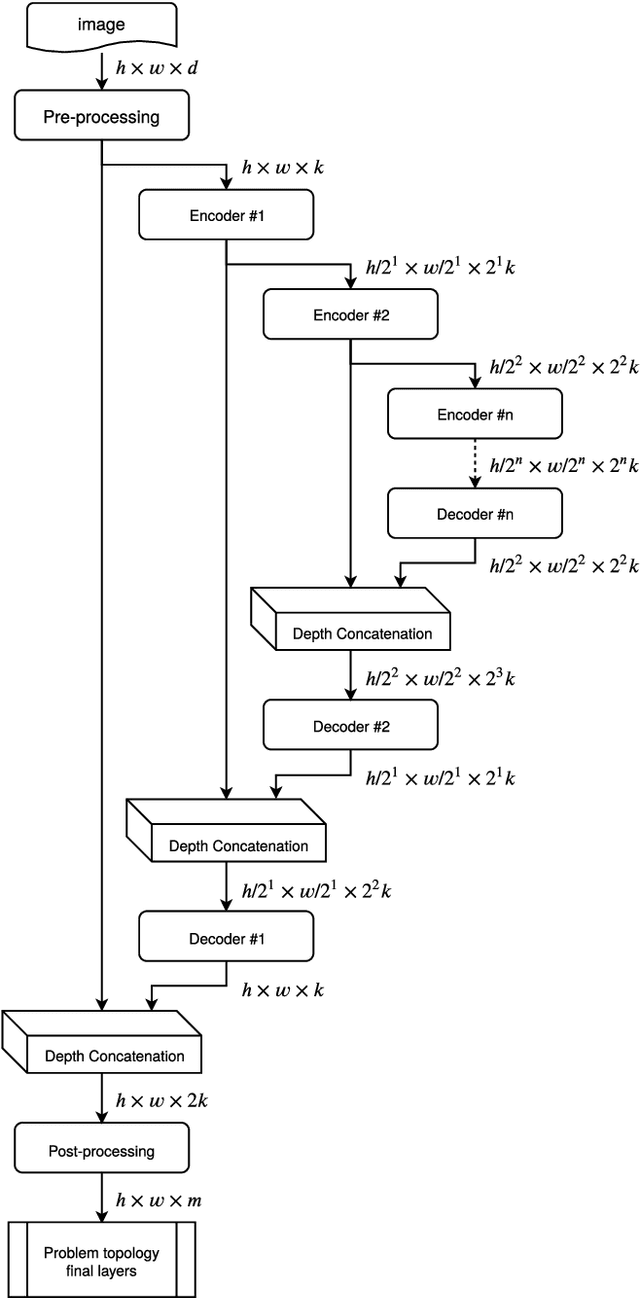

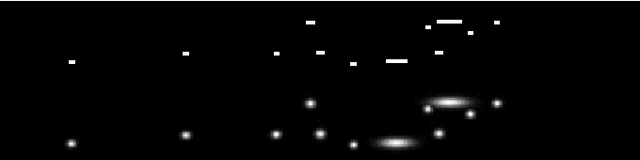

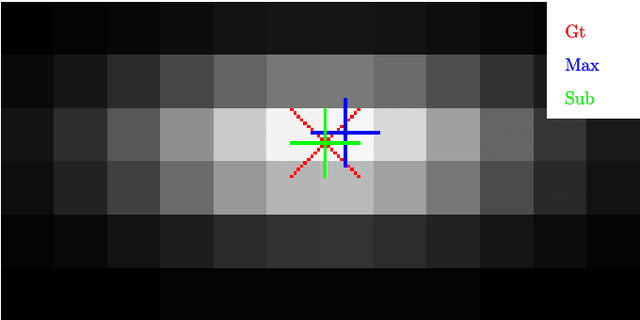

Vehicle Trajectory Prediction in Crowded Highway Scenarios Using Bird Eye View Representations and CNNs

Aug 26, 2020

Abstract: This paper describes a novel approach to vehicle trajectory prediction that employs graphic representations. The vehicles are represented as Gaussian distributions in a Bird Eye View. A U-net model is then used to perform sequence-to-sequence predictions. This deep learning-based methodology has been trained using the HighD dataset, which contains vehicle detections in highway scenarios obtained from aerial imagery. The problem is framed as an image-to-image regression problem, training the network to learn the underlying relations between the traffic participants. This approach generates an estimate of the future appearance of the input scene, not trajectories or numeric positions. An extra step is conducted to extract the positions from the predicted representation with subpixel resolution. Different network configurations have been tested, and the prediction error up to three seconds ahead is on the order of the representation resolution. The model has been tested in highway scenarios with more than 30 vehicles simultaneously, in two opposite traffic flow streams, showing good qualitative and quantitative results.
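A minimal sketch of the representation idea follows: vehicles are drawn as Gaussian blobs on a Bird Eye View grid, and a position is read back with subpixel resolution. The abstract does not say how the extraction step is implemented, so the intensity-weighted centroid used here, the function names, the grid size, and the values of `sigma` and `window` are all assumptions chosen for illustration. The U-net prediction step is omitted.

```python
import numpy as np

def render_bev(positions, shape=(64, 256), sigma=1.5):
    """Draw one isotropic Gaussian blob per vehicle; positions are (row, col) in pixels."""
    rows, cols = np.mgrid[0:shape[0], 0:shape[1]]
    image = np.zeros(shape, dtype=float)
    for r, c in positions:
        blob = np.exp(-((rows - r) ** 2 + (cols - c) ** 2) / (2.0 * sigma ** 2))
        image = np.maximum(image, blob)  # keep the strongest response per pixel
    return image

def subpixel_peak(image, window=6):
    """Intensity-weighted centroid in a window around the brightest pixel.

    Assumption: the window spans several sigma, so truncating the blob
    introduces only a small bias in the recovered position.
    """
    r0, c0 = np.unravel_index(np.argmax(image), image.shape)
    r_lo, r_hi = max(r0 - window, 0), min(r0 + window + 1, image.shape[0])
    c_lo, c_hi = max(c0 - window, 0), min(c0 + window + 1, image.shape[1])
    patch = image[r_lo:r_hi, c_lo:c_hi]
    rows, cols = np.mgrid[r_lo:r_hi, c_lo:c_hi]
    total = patch.sum()
    return float((rows * patch).sum() / total), float((cols * patch).sum() / total)

# Render a single vehicle at a non-integer position and recover it.
bev = render_bev([(20.3, 100.7)])
print(subpixel_peak(bev))
```

The brightest pixel alone would locate the vehicle only to the nearest grid cell, whereas the weighted centroid recovers the fractional part of the position. A scene with several vehicles would need one such window per detected peak, which this sketch does not attempt.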
