Hasan Esen

Safety-Aligned 3D Object Detection: Single-Vehicle, Cooperative, and End-to-End Perspectives

Apr 02, 2026

Abstract:Perception plays a central role in connected and autonomous vehicles (CAVs), underpinning not only conventional modular driving stacks, but also cooperative perception systems and recent end-to-end driving models. While deep learning has greatly improved perception performance, its statistical nature makes perfect predictions difficult to attain. Meanwhile, standard training objectives and evaluation benchmarks treat all perception errors equally, even though only a subset is safety-critical. In this paper, we investigate safety-aligned evaluation and optimization for 3D object detection that explicitly characterize high-impact errors. Building on our previously proposed safety-oriented metric, NDS-USC, and safety-aware loss function, EC-IoU, we make three contributions. First, we present an expanded study of single-vehicle 3D object detection models across diverse neural network architectures and sensing modalities, showing that gains under standard metrics such as mAP and NDS may not translate to safety-oriented criteria represented by NDS-USC. With EC-IoU, we reaffirm the benefit of safety-aware fine-tuning for improving safety-critical detection performance. Second, we conduct an ego-centric, safety-oriented evaluation of AV-infrastructure cooperative object detection models, underscoring their superiority over vehicle-only models and demonstrating a safety impact analysis that illustrates the potential contribution of cooperative models to "Vision Zero." Third, we integrate EC-IoU into SparseDrive and show that safety-aware perception hardening can reduce collision rate by nearly 30% and improve system-level safety directly in an end-to-end perception-to-planning framework. Overall, our results indicate that safety-aligned perception evaluation and optimization offer a practical path toward enhancing CAV safety across single-vehicle, cooperative, and end-to-end autonomy settings.

Workflow-Level Design Principles for Trustworthy GenAI in Automotive System Engineering

Feb 23, 2026

Abstract:The adoption of large language models in safety-critical system engineering is constrained by trustworthiness, traceability, and alignment with established verification practices. We propose workflow-level design principles for trustworthy GenAI integration and demonstrate them in an end-to-end automotive pipeline, from requirement delta identification to SysML v2 architecture update and re-testing. First, we show that monolithic ("big-bang") prompting misses critical changes in large specifications, while section-wise decomposition with diversity sampling and lightweight NLP sanity checks improves completeness and correctness. Then, we propagate requirement deltas into SysML v2 models and validate updates via compilation and static analysis. Additionally, we ensure traceable regression testing by generating test cases through explicit mappings from specification variables to architectural ports and states, providing practical safeguards for GenAI used in safety-critical automotive engineering.

Online Collision Risk Estimation via Monocular Depth-Aware Object Detectors and Fuzzy Inference

Nov 09, 2024

Abstract:This paper presents a monitoring framework that infers the level of autonomous vehicle (AV) collision risk based on its object detector's performance using only monocular camera images. Essentially, the framework takes two sets of predictions produced by different algorithms and associates their inconsistencies with the collision risk via fuzzy inference. The first set of predictions is obtained by retrieving safety-critical 2.5D objects from a depth map, and the second set comes from the AV's 3D object detector. We experimentally validate that, based on Intersection-over-Union (IoU) and a depth discrepancy measure, the inconsistencies between the two sets of predictions strongly correlate with the safety-related error of the 3D object detector against ground truths. This correlation allows us to construct a fuzzy inference system and map the inconsistency measures to an existing collision risk indicator. In particular, we apply various knowledge- and data-driven techniques and find that using particle swarm optimization to learn general fuzzy rules gives the best mapping result. Lastly, we validate our monitor's capability to produce relevant risk estimates with the large-scale nuScenes dataset and show it can safeguard an AV in closed-loop simulations.
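To make the mapping from inconsistency measures to a risk level more concrete, here is a minimal sketch of a zero-order Sugeno-style fuzzy inference step in Python. It is only an illustration: the membership functions, the four rules, the `max_gap` normalization constant, and the function names are all assumptions made for this example, not the rules learned in the paper (which reports learning them with particle swarm optimization).

```python
def low(x: float) -> float:
    """Membership of x (expected in [0, 1]) in the fuzzy set 'low'."""
    return max(0.0, min(1.0, 1.0 - x))


def high(x: float) -> float:
    """Membership of x (expected in [0, 1]) in the fuzzy set 'high'."""
    return max(0.0, min(1.0, x))


def fuzzy_risk(iou: float, depth_gap: float, max_gap: float = 10.0) -> float:
    """Map two inconsistency measures to a risk score in [0, 1].

    iou       : overlap between the two prediction sets for one object.
    depth_gap : absolute depth discrepancy in metres.
    max_gap   : hypothetical normalization constant (an assumption).
    """
    box_incons = 1.0 - max(0.0, min(1.0, iou))
    gap = min(max(depth_gap, 0.0) / max_gap, 1.0)

    # Hypothetical rule base: (firing strength, crisp risk output).
    # AND is modelled with min, as is common in fuzzy inference.
    rules = [
        (min(low(box_incons), low(gap)), 0.0),    # consistent: low risk
        (min(high(box_incons), low(gap)), 0.5),   # boxes disagree: medium
        (min(low(box_incons), high(gap)), 0.5),   # depths disagree: medium
        (min(high(box_incons), high(gap)), 1.0),  # both disagree: high risk
    ]
    total = sum(strength for strength, _ in rules)
    # Weighted average of rule outputs (zero-order Sugeno defuzzification).
    return sum(s * r for s, r in rules) / total if total > 0 else 0.0


consistent = fuzzy_risk(iou=1.0, depth_gap=0.0)
mixed = fuzzy_risk(iou=0.5, depth_gap=5.0)
inconsistent = fuzzy_risk(iou=0.0, depth_gap=10.0)
print(consistent, mixed, inconsistent)
```

With these assumed rules, the score rises as either the box overlap drops or the depth discrepancy grows. A learned rule base would replace the hand-written `rules` list.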

EC-IoU: Orienting Safety for Object Detectors via Ego-Centric Intersection-over-Union

Mar 20, 2024

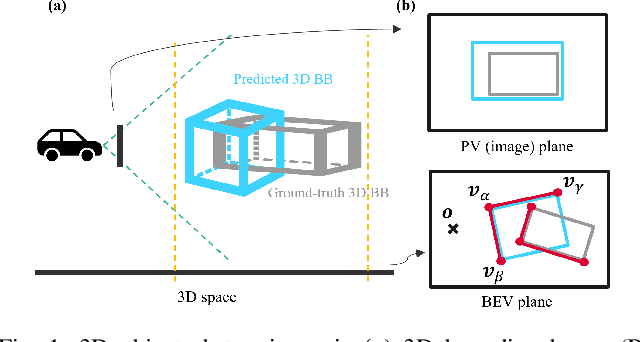

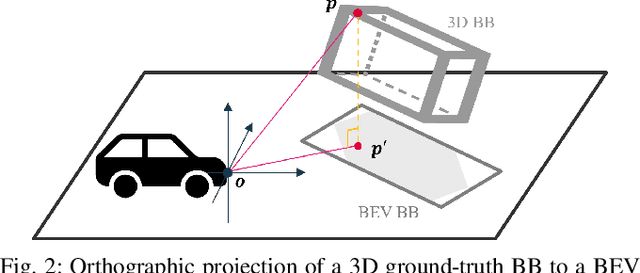

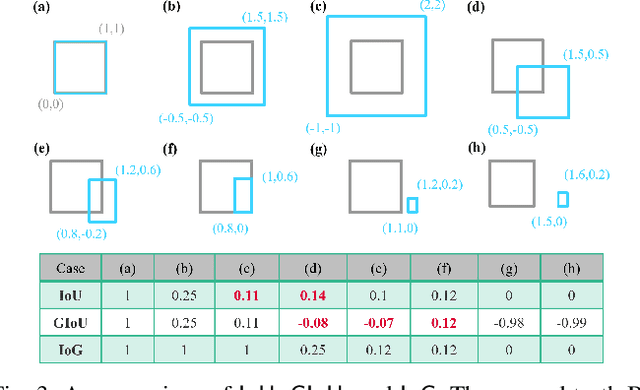

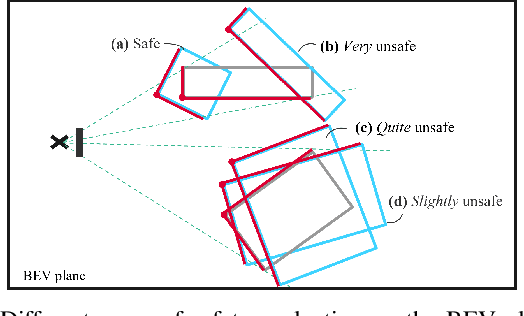

Abstract:This paper presents safety-oriented object detection via a novel Ego-Centric Intersection-over-Union (EC-IoU) measure, addressing practical concerns when applying state-of-the-art learning-based perception models in safety-critical domains such as autonomous driving. Concretely, we propose a weighting mechanism to refine the widely used IoU measure, allowing it to assign a higher score to a prediction that covers closer points of a ground-truth object from the ego agent's perspective. The proposed EC-IoU measure can be used in typical evaluation processes to select object detectors with higher safety-related performance for downstream tasks. It can also be integrated into common loss functions for model fine-tuning. While the measure is geared towards safety, our experiment with the KITTI dataset demonstrates that a model trained on EC-IoU can also outperform a variant trained on IoU in terms of mean Average Precision.
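The idea of weighting IoU towards the points closest to the ego agent can be illustrated with a small Python sketch. This is not the paper's EC-IoU formulation: the grid-sampling approach, the `1 / (1 + distance)` weight, and every name below are assumptions chosen only to show how a distance-dependent weight separates two predictions that plain IoU scores identically.

```python
import math

# Boxes are axis-aligned (x_min, y_min, x_max, y_max) in bird's-eye view,
# with the ego agent assumed to sit at the origin.


def contains(box, x, y):
    return box[0] <= x <= box[2] and box[1] <= y <= box[3]


def uniform_weight(x, y):
    """Constant weight: reduces the measure to plain (sampled) IoU."""
    return 1.0


def ego_weight(x, y):
    """Hypothetical weight that decays with distance from the ego agent."""
    return 1.0 / (1.0 + math.hypot(x, y))


def weighted_iou(pred, gt, weight, step=0.1):
    """Approximate a weighted IoU by summing weights at grid-cell centres."""
    x_lo, y_lo = min(pred[0], gt[0]), min(pred[1], gt[1])
    x_hi, y_hi = max(pred[2], gt[2]), max(pred[3], gt[3])
    nx = round((x_hi - x_lo) / step)
    ny = round((y_hi - y_lo) / step)
    inter = union = 0.0
    for i in range(nx):
        x = x_lo + (i + 0.5) * step
        for j in range(ny):
            y = y_lo + (j + 0.5) * step
            in_pred, in_gt = contains(pred, x, y), contains(gt, x, y)
            if in_pred or in_gt:
                w = weight(x, y)
                union += w
                if in_pred and in_gt:
                    inter += w
    return inter / union if union > 0 else 0.0


gt = (4.0, -1.0, 8.0, 1.0)
pred_near = (4.0, -1.0, 6.0, 1.0)  # covers the half of gt closer to ego
pred_far = (6.0, -1.0, 8.0, 1.0)   # covers the half of gt farther from ego

plain_near = weighted_iou(pred_near, gt, uniform_weight)
plain_far = weighted_iou(pred_far, gt, uniform_weight)
ec_near = weighted_iou(pred_near, gt, ego_weight)
ec_far = weighted_iou(pred_far, gt, ego_weight)
print(plain_near, plain_far, ec_near, ec_far)
```

Both predictions cover exactly half of the ground truth, so the uniform weight scores them equally, while the distance-decaying weight ranks the prediction covering the nearer half higher.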

Safety Metrics and Losses for Object Detection in Autonomous Driving

Sep 21, 2022

Abstract:State-of-the-art object detectors have been shown to be effective in many applications. Usually, their performance is evaluated based on accuracy metrics such as mean Average Precision. In this paper, we consider a safety property of 3D object detectors in the context of Autonomous Driving (AD). In particular, we propose an essential safety requirement for object detectors in AD and formulate it into a specification. During the formulation, we find that abstracting 3D objects with projected 2D bounding boxes on the image and bird's-eye-view planes allows for a necessary and sufficient condition for the proposed safety requirement. We then leverage the analysis and derive qualitative and quantitative safety metrics based on the Intersection-over-Ground-Truth measure and a distance ratio between predictions and ground truths. Finally, for continual improvement, we formulate safety losses that can be used to optimize object detectors towards higher safety scores. Our experiments with public models from the MMDetection3D library and the nuScenes dataset demonstrate the validity of our consideration and proposals.
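As a rough illustration of the two quantities named in the abstract, the following Python sketch computes Intersection-over-Ground-Truth (intersection area divided by ground-truth area) next to plain IoU for axis-aligned 2D boxes. The `distance_ratio` function is an assumed definition (nearest predicted distance over nearest ground-truth distance, with the ego at the origin); the paper's exact form is not given here, and all names are hypothetical.

```python
import math
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned 2D box, e.g. on the bird's-eye-view plane."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(b: Box) -> float:
    return max(0.0, b.x_max - b.x_min) * max(0.0, b.y_max - b.y_min)


def intersection_area(a: Box, b: Box) -> float:
    w = min(a.x_max, b.x_max) - max(a.x_min, b.x_min)
    h = min(a.y_max, b.y_max) - max(a.y_min, b.y_min)
    return max(0.0, w) * max(0.0, h)


def iou(pred: Box, gt: Box) -> float:
    inter = intersection_area(pred, gt)
    union = area(pred) + area(gt) - inter
    return inter / union if union > 0 else 0.0


def iogt(pred: Box, gt: Box) -> float:
    """Fraction of the ground-truth box covered by the prediction."""
    g = area(gt)
    return intersection_area(pred, gt) / g if g > 0 else 0.0


def nearest_distance(b: Box, ego=(0.0, 0.0)) -> float:
    """Distance from the ego position to the closest point of the box."""
    dx = max(b.x_min - ego[0], 0.0, ego[0] - b.x_max)
    dy = max(b.y_min - ego[1], 0.0, ego[1] - b.y_max)
    return math.hypot(dx, dy)


def distance_ratio(pred: Box, gt: Box, ego=(0.0, 0.0)) -> float:
    """Assumed definition: above 1 means the prediction sits farther away
    than the real object, which is the unsafe direction."""
    d_gt = nearest_distance(gt, ego)
    d_pred = nearest_distance(pred, ego)
    if d_gt == 0.0:
        return 1.0 if d_pred == 0.0 else math.inf
    return d_pred / d_gt


gt = Box(4.0, -1.0, 8.0, 1.0)
pred_covering = Box(3.0, -2.0, 9.0, 2.0)  # oversized but fully covers gt
pred_shifted = Box(5.0, -1.0, 9.0, 1.0)   # right size, misses the near edge

for name, p in [("covering", pred_covering), ("shifted", pred_shifted)]:
    print(name, iou(p, gt), iogt(p, gt), distance_ratio(p, gt))
```

In this example the oversized prediction has the lower IoU (1/3 versus 0.6) but a higher IoGT (1.0 versus 0.75) and a distance ratio below 1, which shows how an accuracy-oriented score and a coverage-oriented score can rank the same predictions differently.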
