Haoyang Yan

Single-Agent Scaling Fails Multi-Agent Intelligence: Towards Foundation Models with Native Multi-Agent Intelligence

Dec 16, 2025

Abstract: Foundation models (FMs) are increasingly assuming the role of the "brain" of AI agents. While recent efforts have begun to equip FMs with native single-agent abilities -- such as GUI interaction or integrated tool use -- we argue that the next frontier is endowing FMs with native multi-agent intelligence. We identify four core capabilities of FMs in multi-agent contexts: understanding, planning, efficient communication, and adaptation. Contrary to assumptions about the spontaneous emergence of such abilities, we provide extensive empirical evidence, across 41 large language models and 7 challenging benchmarks, showing that scaling single-agent performance alone does not automatically yield robust multi-agent intelligence. To address this gap, we outline key research directions -- spanning dataset construction, evaluation, training paradigms, and safety considerations -- for building FMs with native multi-agent intelligence.

Learning dynamic and hierarchical traffic spatiotemporal features with Transformer

Apr 12, 2021

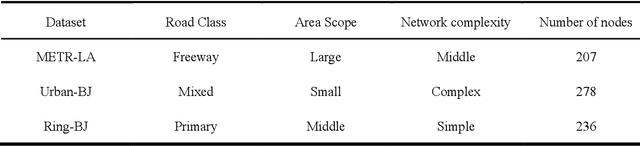

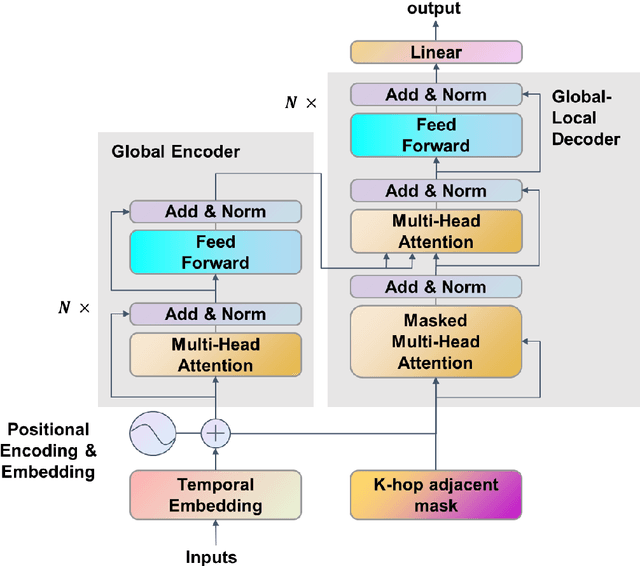

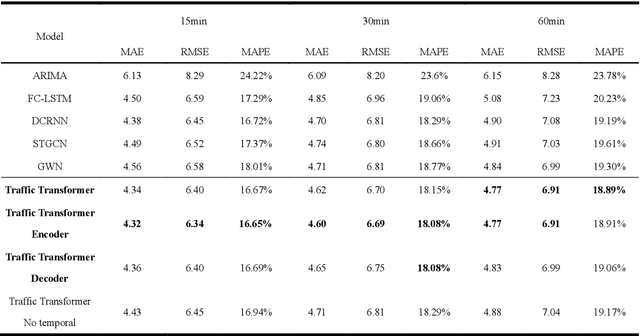

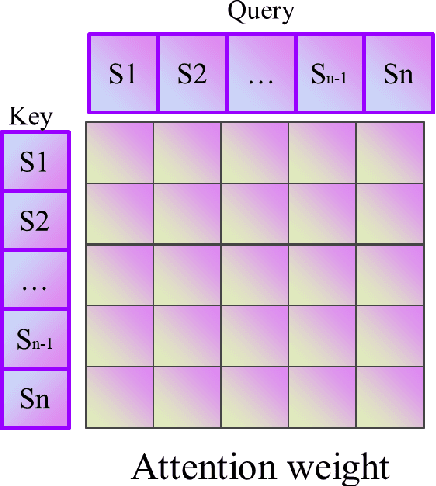

Abstract: Traffic forecasting is an indispensable part of intelligent transportation systems (ITS), and accurate long-term, network-wide traffic speed forecasting is one of its most challenging tasks. Recently, deep learning methods have become popular in this domain. Because traffic data are physically tied to road networks, most proposed models treat forecasting as a spatiotemporal graph modeling problem and use Graph Convolutional Network (GCN) based methods. These GCN-based models depend heavily on a predefined, fixed adjacency matrix to reflect spatial dependency. However, such a matrix is limited in reflecting the actual dependence of traffic flow. To overcome these limitations, this paper proposes a novel model, Traffic Transformer, for spatiotemporal graph modeling and long-term traffic forecasting. The Transformer is the most popular framework in Natural Language Processing (NLP). Adapted to the spatiotemporal problem, Traffic Transformer hierarchically and dynamically extracts spatiotemporal features from the data through multi-head attention and masked multi-head attention mechanisms, and fuses these features for traffic forecasting. Furthermore, analyzing the attention weight matrices reveals the influential parts of the road network, allowing us to understand traffic networks better. Experimental results on public traffic network datasets and on real-world traffic network datasets that we generated show that the proposed model outperforms state-of-the-art models.
