Hanyu Yang

Model Risk Management for Generative AI In Financial Institutions

Mar 19, 2025

Abstract: The success of OpenAI's ChatGPT in 2023 has spurred financial enterprises to explore Generative AI applications to reduce costs or drive revenue within different lines of business in the financial industry. While these applications offer strong potential for efficiencies, they introduce new model risks, primarily hallucinations and toxicity. As highly regulated entities, financial enterprises (primarily large US banks) are obligated to enhance their model risk framework with additional testing and controls to ensure safe deployment of such applications. This paper outlines the key aspects of model risk management for generative AI models, with a special emphasis on additional practices required in model validation.

Frequency Diverse Array-enabled RIS-aided Integrated Sensing and Communication

Oct 01, 2024

Abstract: Integrated sensing and communication (ISAC) has been envisioned as a prospective technology to enable ubiquitous sensing and communications in next-generation wireless networks. In contrast to existing works on reconfigurable intelligent surface (RIS) aided ISAC systems using conventional phased arrays (PAs), this paper investigates a frequency diverse array (FDA)-enabled RIS-aided ISAC system, where the FDA aims to provide a distance-angle-dependent beampattern to effectively suppress the clutter, and RIS is employed to establish high-quality links between the BS and users/target. We aim to maximize sum rate by jointly optimizing the BS transmit beamforming vectors, the covariance matrix of the dedicated radar signal, the RIS phase shift matrix, the FDA frequency offsets and the radar receive equalizer, while guaranteeing the required signal-to-clutter-plus-noise ratio (SCNR) of the radar echo signal. To tackle this challenging problem, we first theoretically prove that the dedicated radar signal is unnecessary for enhancing target sensing performance, based on which the original problem is much simplified. Then, we turn our attention to the single-user single-target (SUST) scenario to demonstrate that the FDA-RIS-aided ISAC system always achieves a higher SCNR than its PA-RIS-aided counterpart. Moreover, it is revealed that the SCNR increment exhibits linear growth with the BS transmit power and the number of BS receive antennas. In order to effectively solve this simplified problem, we leverage the fractional programming (FP) theory and subsequently develop an efficient alternating optimization (AO) algorithm based on symmetric alternating direction method of multipliers (SADMM) and successive convex approximation (SCA) techniques. Numerical results demonstrate the superior performance of our proposed algorithm in terms of sum rate and radar SCNR.

Robustness Tests of NLP Machine Learning Models: Search and Semantically Replace

Apr 20, 2021

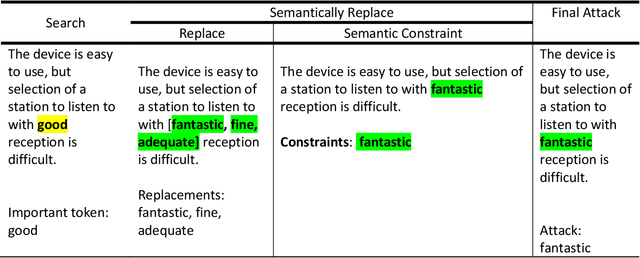

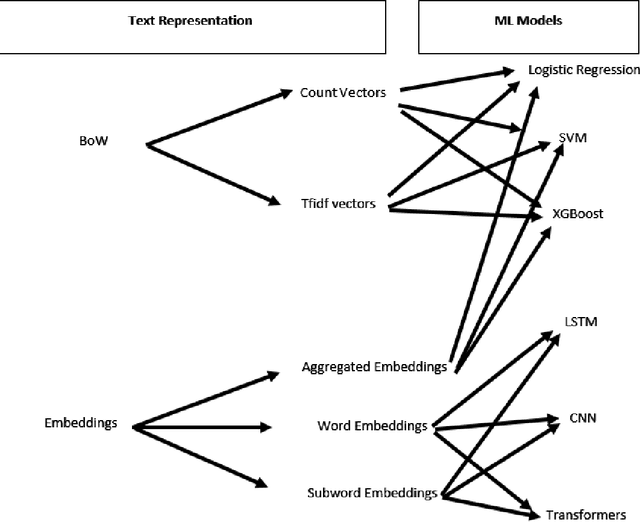

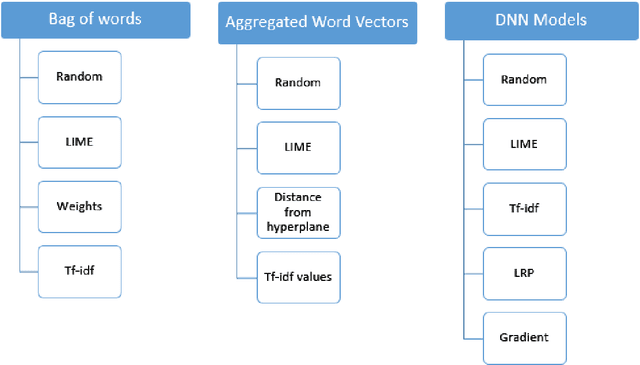

Abstract: This paper proposes a strategy to assess the robustness of different machine learning models that involve natural language processing (NLP). The overall approach relies upon a Search and Semantically Replace strategy that consists of two steps: (1) Search, which identifies important parts in the text; (2) Semantically Replace, which finds replacements for the important parts, and constrains the replaced tokens with semantically similar words. We introduce different types of Search and Semantically Replace methods designed specifically for particular types of machine learning models. We also investigate the effectiveness of this strategy and provide a general framework to assess a variety of machine learning models. Finally, an empirical comparison is provided of robustness performance among three different model types, each with a different text representation.

Supervised Machine Learning Techniques: An Overview with Applications to Banking

Jul 28, 2020

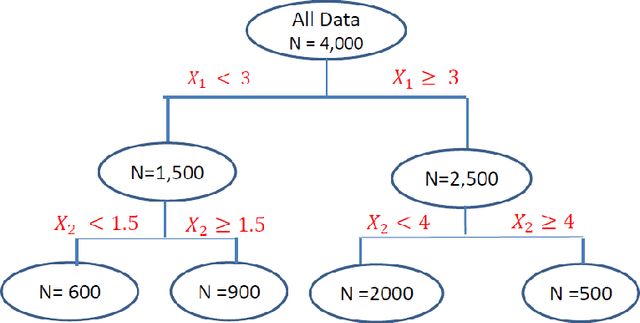

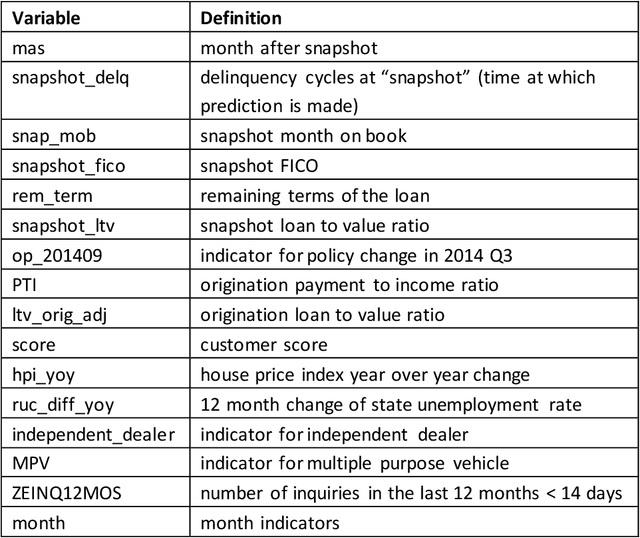

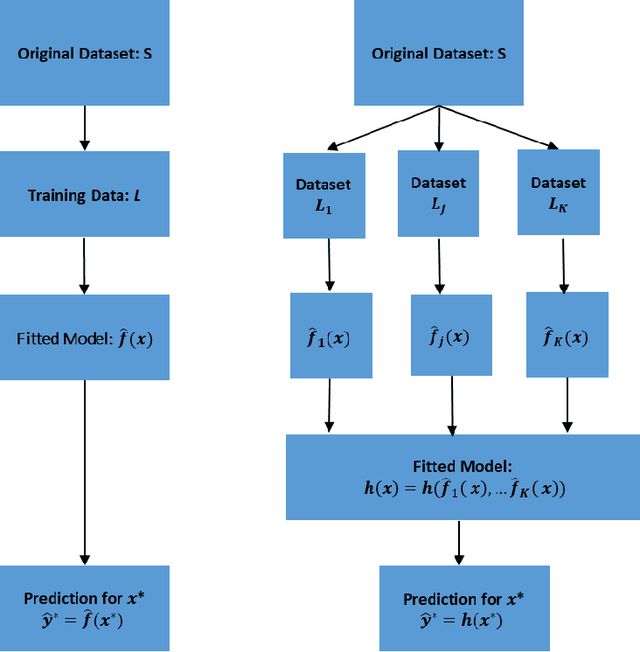

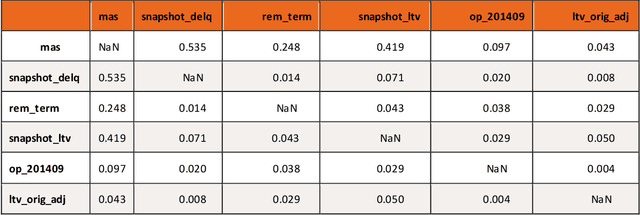

Abstract: This article provides an overview of Supervised Machine Learning (SML) with a focus on applications to banking. The SML techniques covered include Bagging (Random Forest or RF), Boosting (Gradient Boosting Machine or GBM) and Neural Networks (NNs). We begin with an introduction to ML tasks and techniques. This is followed by a description of: i) tree-based ensemble algorithms including Bagging with RF and Boosting with GBMs, ii) Feedforward NNs, iii) a discussion of hyper-parameter optimization techniques, and iv) machine learning interpretability. The paper concludes with a comparison of the features of different ML algorithms. Examples taken from credit risk modeling in banking are used throughout the paper to illustrate the techniques and interpret the results of the algorithms.
