Fazilet Gokbudak

Physically Based Neural Bidirectional Reflectance Distribution Function

Nov 04, 2024

Abstract: We introduce the physically based neural bidirectional reflectance distribution function (PBNBRDF), a novel, continuous representation for material appearance based on neural fields. Our model accurately reconstructs real-world materials while uniquely enforcing physical properties for realistic BRDFs, specifically Helmholtz reciprocity via reparametrization and energy passivity via efficient analytical integration. We conduct a systematic analysis demonstrating the benefits of adhering to these physical laws on the visual quality of reconstructed materials. Additionally, we enhance the color accuracy of neural BRDFs by introducing chromaticity enforcement supervising the norms of RGB channels. Through both qualitative and quantitative experiments on multiple databases of measured real-world BRDFs, we show that adhering to these physical constraints enables neural fields to more faithfully and stably represent the original data and achieve higher rendering quality.

Multispectral Fine-Grained Classification of Blackgrass in Wheat and Barley Crops

May 03, 2024

Abstract: As the burden of herbicide resistance grows and the environmental repercussions of excessive herbicide use become clear, new ways of managing weed populations are needed. This is particularly true for cereal crops, like wheat and barley, that are staple food crops and occupy a globally significant portion of agricultural land. Even small improvements in weed management practices across these major food crops worldwide would yield considerable benefits for both the environment and global food security. Blackgrass is a major grass weed which causes particular problems in cereal crops in north-west Europe, a major cereal production area, because it has high levels of herbicide resistance and is well adapted to agronomic practice in this region. With the use of machine vision and multispectral imaging, we investigate the effectiveness of state-of-the-art methods to identify blackgrass in wheat and barley crops. As part of this work, we provide a large dataset with which we evaluate several key aspects of blackgrass weed recognition. Firstly, we determine the performance of different CNN and transformer-based architectures on images from unseen fields. Secondly, we demonstrate the effect that different spectral bands have on the performance of weed classification. Lastly, we evaluate the role of dataset size in classification performance for each of the models trialled. We find that even with a fairly modest quantity of training data an accuracy of almost 90% can be achieved on images from unseen fields.

One-shot Detail Retouching with Patch Space Neural Field based Transformation Blending

Oct 03, 2022

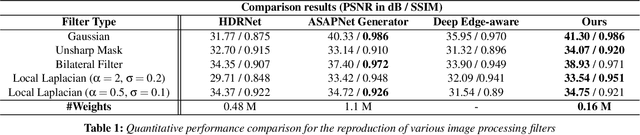

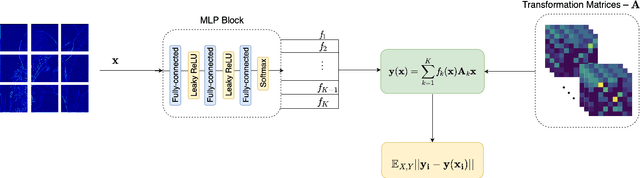

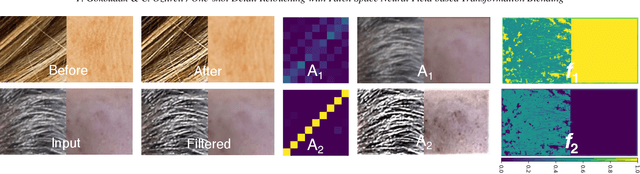

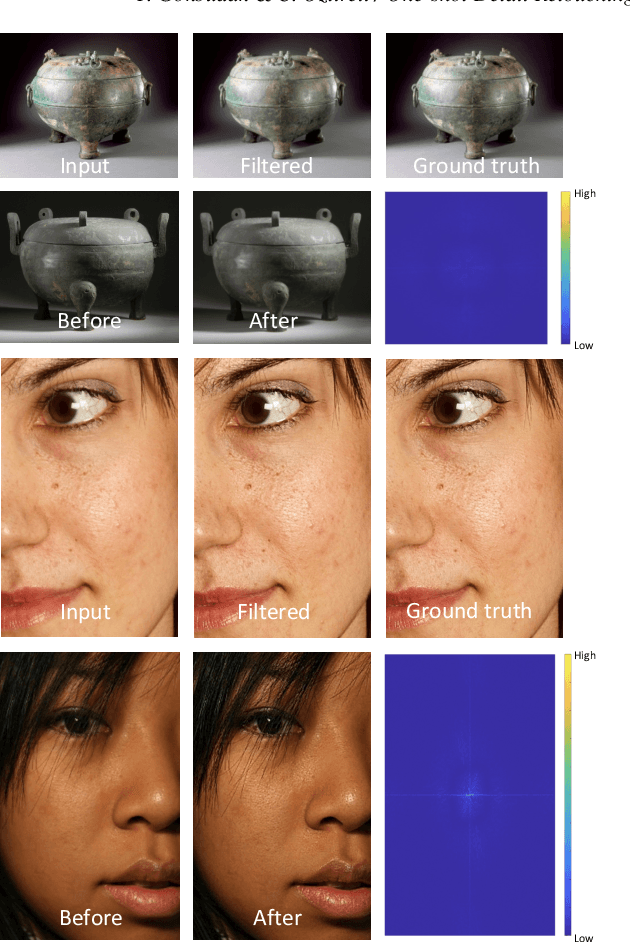

Abstract: Photo retouching is a difficult task for novice users as it requires expert knowledge and advanced tools. Photographers often spend a great deal of time generating high-quality retouched photos with intricate details. In this paper, we introduce a one-shot learning based technique to automatically retouch details of an input image based on just a single pair of before and after example images. Our approach provides accurate and generalizable detail edit transfer to new images. We achieve this by proposing a new representation for image-to-image maps. Specifically, we propose neural field based transformation blending in the patch space for defining patch-to-patch transformations for each frequency band. This parametrization of the map with anchor transformations and associated weights, and spatio-spectral localized patches, allows us to capture details well while staying generalizable. We evaluate our technique both on known ground truth filters and artist retouching edits. Our method accurately transfers complex detail retouching edits.
