E. A. Huerta

Advances in Machine and Deep Learning for Modeling and Real-time Detection of Multi-Messenger Sources

May 13, 2021

Abstract: We live in momentous times. The science community is empowered with an arsenal of cosmic messengers to study the Universe in unprecedented detail. Gravitational waves, electromagnetic waves, neutrinos and cosmic rays cover a wide range of wavelengths and time scales. Combining and processing these datasets that vary in volume, speed and dimensionality requires new modes of instrument coordination, funding and international collaboration with a specialized human and technological infrastructure. In tandem with the advent of large-scale scientific facilities, the last decade has experienced an unprecedented transformation in computing and signal processing algorithms. The combination of graphics processing units, deep learning, and the availability of open source, high-quality datasets has powered the rise of artificial intelligence. This digital revolution now powers a multi-billion dollar industry, with far-reaching implications in technology and society. In this chapter we describe pioneering efforts to adapt artificial intelligence algorithms to address computational grand challenges in Multi-Messenger Astrophysics. We review the rapid evolution of these disruptive algorithms, from the first class of algorithms introduced in early 2017, to the sophisticated algorithms that now incorporate domain expertise in their architectural design and optimization schemes. We discuss the importance of scientific visualization and extreme-scale computing in reducing time-to-insight and obtaining new knowledge from the interplay between models and data.

Confluence of Artificial Intelligence and High Performance Computing for Accelerated, Scalable and Reproducible Gravitational Wave Detection

Dec 15, 2020

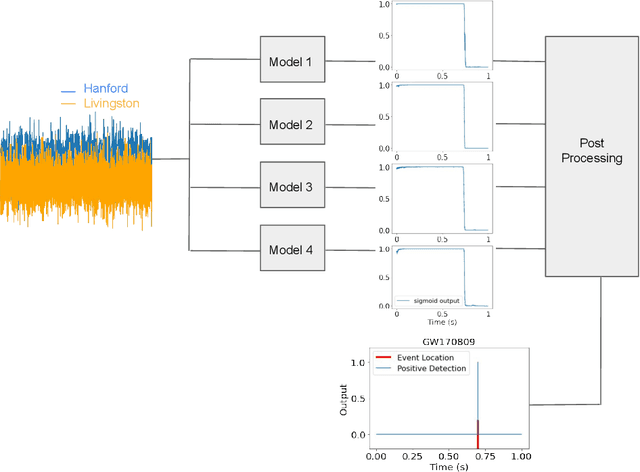

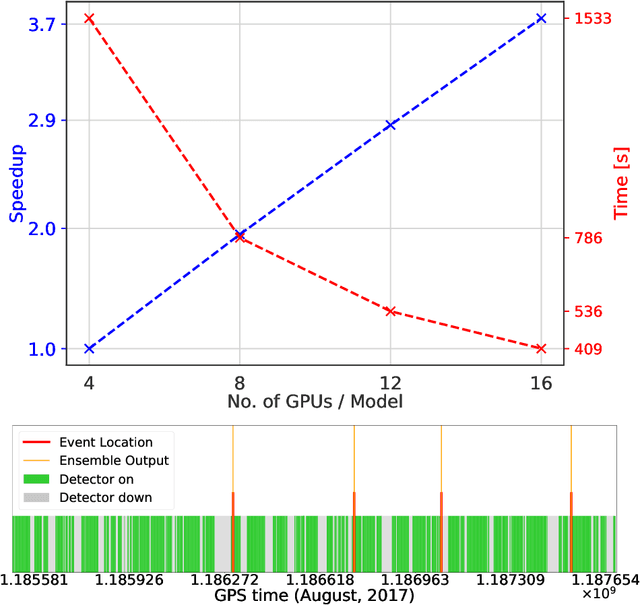

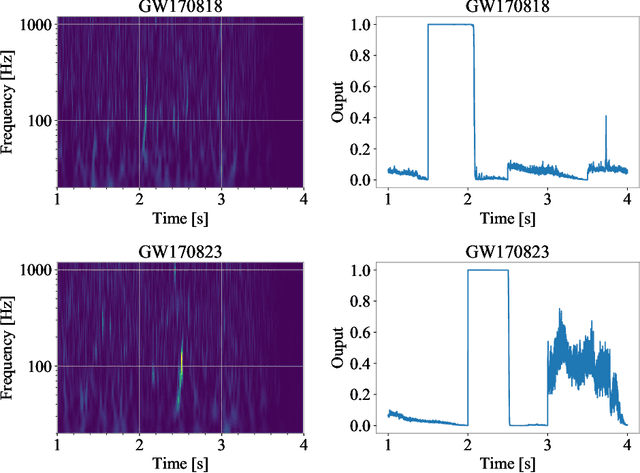

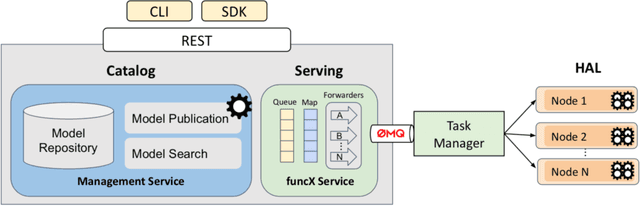

Abstract: Finding new ways to use artificial intelligence (AI) to accelerate the analysis of gravitational wave data, and ensuring the developed models are easily reusable, promises to unlock new opportunities in multi-messenger astrophysics (MMA), and to enable wider use, rigorous validation, and sharing of developed models by the community. In this work, we demonstrate how connecting recently deployed DOE and NSF-sponsored cyberinfrastructure allows for new ways to publish models, and to subsequently deploy these models into applications using computing platforms ranging from laptops to high performance computing clusters. We develop a workflow that connects the Data and Learning Hub for Science (DLHub), a repository for publishing machine learning models, with the Hardware Accelerated Learning (HAL) deep learning computing cluster, using funcX as a universal distributed computing service. We then use this workflow to search for binary black hole gravitational wave signals in open source advanced LIGO data. We find that using this workflow, an ensemble of four openly available deep learning models can be run on HAL and process the entire month of August 2017 of advanced LIGO data in just seven minutes, identifying all four binary black hole mergers previously identified in this dataset, and reporting no misclassifications. This approach, which combines advances in AI, distributed computing, and scientific data infrastructure, opens new pathways to conduct reproducible, accelerated, data-driven gravitational wave detection.

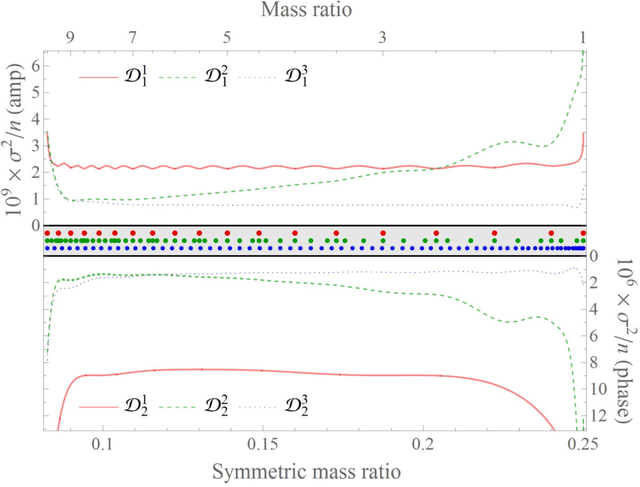

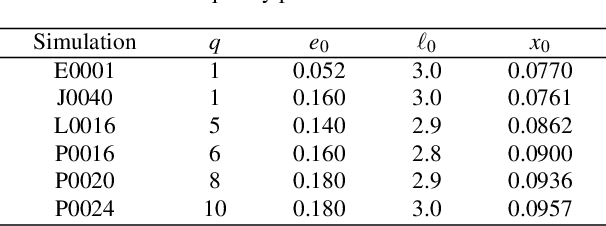

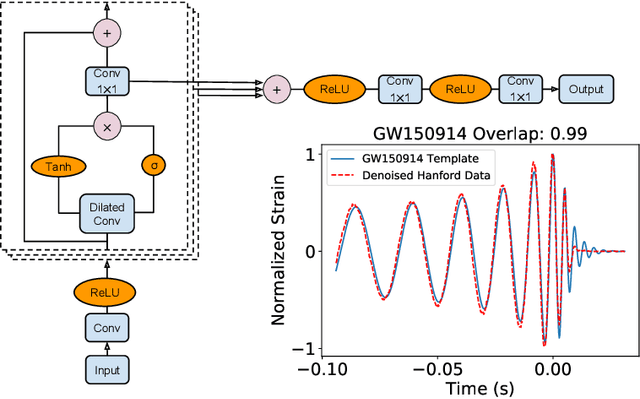

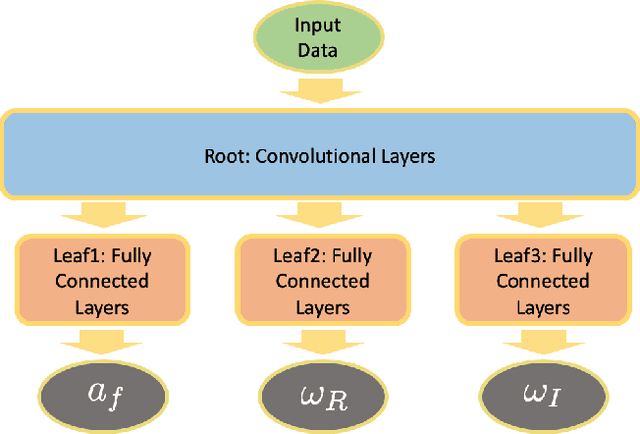

Physics-inspired deep learning to characterize the signal manifold of quasi-circular, spinning, non-precessing binary black hole mergers

Apr 20, 2020

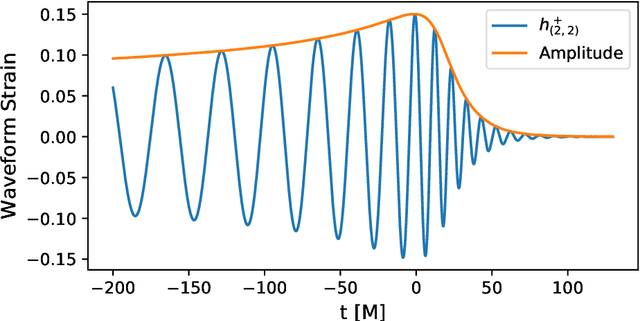

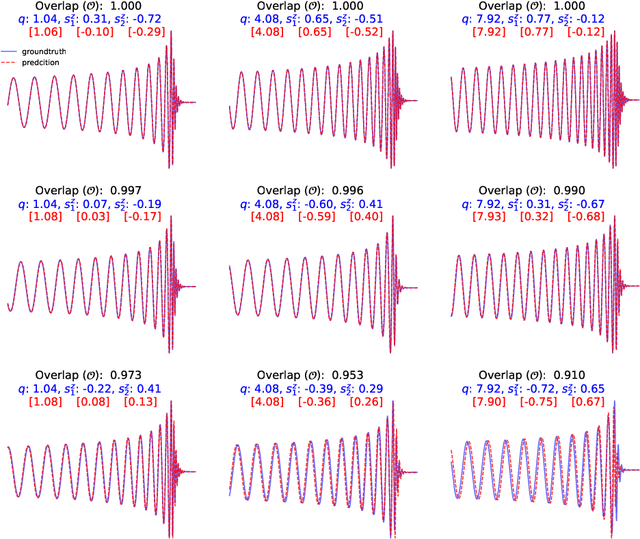

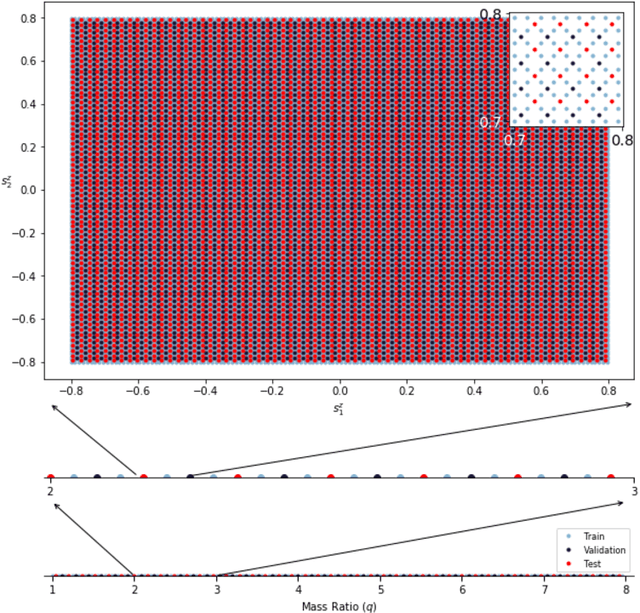

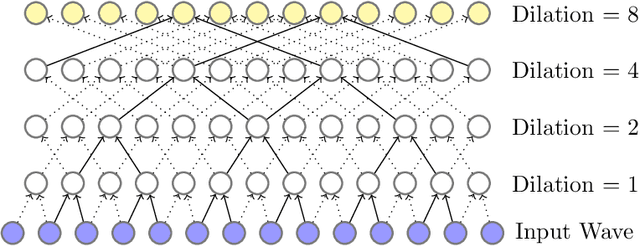

Abstract: The spin distribution of binary black hole mergers contains key information concerning the formation channels of these objects, and the astrophysical environments where they form, evolve and coalesce. To quantify the suitability of deep learning to characterize the signal manifold of quasi-circular, spinning, non-precessing binary black hole mergers, we introduce a modified version of WaveNet trained with a novel optimization scheme that incorporates general relativistic constraints of the spin properties of astrophysical black holes. The neural network model is trained, validated and tested with 1.5 million $\ell=|m|=2$ waveforms generated within the regime of validity of NRHybSur3dq8, i.e., mass-ratios $q\leq8$ and individual black hole spins $ | s^z_{\{1,\,2\}} | \leq 0.8$. Using this neural network model, we quantify how accurately we can infer the astrophysical parameters of black hole mergers in the absence of noise. We do this by computing the overlap between waveforms in the testing data set and the corresponding signals whose mass-ratio and individual spins are predicted by our neural network. We find that the convergence of high performance computing and physics-inspired optimization algorithms enables an accurate reconstruction of the mass-ratio and individual spins of binary black hole mergers across the parameter space under consideration. This is a significant step towards an informed utilization of physics-inspired deep learning models to reconstruct the spin distribution of binary black hole mergers in realistic detection scenarios.
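The overlap mentioned in this abstract can be illustrated with a minimal sketch. It assumes real-valued, equally sampled waveforms, a flat (white) noise weighting, and maximization over circular time shifts only; the function name `overlap` and these simplifications are assumptions made for illustration, not the paper's actual pipeline.

```python
import numpy as np

def overlap(h1, h2):
    """Normalized overlap of two real, equal-length waveforms.

    Assumes flat noise weighting and maximizes over circular
    time shifts only (illustrative simplification).
    """
    h1 = np.asarray(h1, dtype=float)
    h2 = np.asarray(h2, dtype=float)
    if h1.ndim != 1 or h1.shape != h2.shape:
        raise ValueError("waveforms must be 1-D arrays of equal length")
    norm = np.sqrt(np.dot(h1, h1) * np.dot(h2, h2))
    if norm == 0.0:
        raise ValueError("waveforms must be non-zero")
    # Circular cross-correlation for every time shift, computed via FFT.
    corr = np.fft.irfft(np.fft.rfft(h1) * np.conj(np.fft.rfft(h2)), n=h1.size)
    return float(np.max(corr) / norm)

# Toy signals: windowed sinusoids standing in for waveforms.
t = np.linspace(0.0, 1.0, 1024, endpoint=False)
envelope = np.exp(-((t - 0.5) / 0.1) ** 2)
h = envelope * np.sin(2 * np.pi * 20 * t)
g = envelope * np.sin(2 * np.pi * 35 * t)

same = overlap(h, np.roll(3.0 * h, 37))  # shifted, rescaled copy of h
different = overlap(h, g)                # different frequency content
```

By construction the overlap is insensitive to overall amplitude and to time shifts, so a value close to 1 indicates that the predicted parameters reproduce the target waveform shape.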

Convergence of Artificial Intelligence and High Performance Computing on NSF-supported Cyberinfrastructure

Mar 18, 2020

Abstract: Significant investments to upgrade or construct large-scale scientific facilities demand commensurate investments in R&D to design algorithms and computing approaches to enable scientific and engineering breakthroughs in the big data era. The remarkable success of Artificial Intelligence (AI) algorithms to turn big-data challenges in industry and technology into transformational digital solutions that drive a multi-billion dollar industry and play an ever increasing role in shaping human social patterns has promoted AI as the most sought after signal processing tool in big-data research. As AI continues to evolve into a computing tool endowed with statistical and mathematical rigor, and which encodes domain expertise to inform and inspire AI architectures and optimization algorithms, it has become apparent that single-GPU solutions for training, validation, and testing are no longer sufficient. This realization has been driving the confluence of AI and high performance computing (HPC) to reduce time-to-insight and to produce robust, reliable, trustworthy, and computationally efficient AI solutions. In this white paper, we present a summary of recent developments in this field, and discuss avenues to accelerate and streamline the use of HPC platforms to design accelerated AI algorithms.

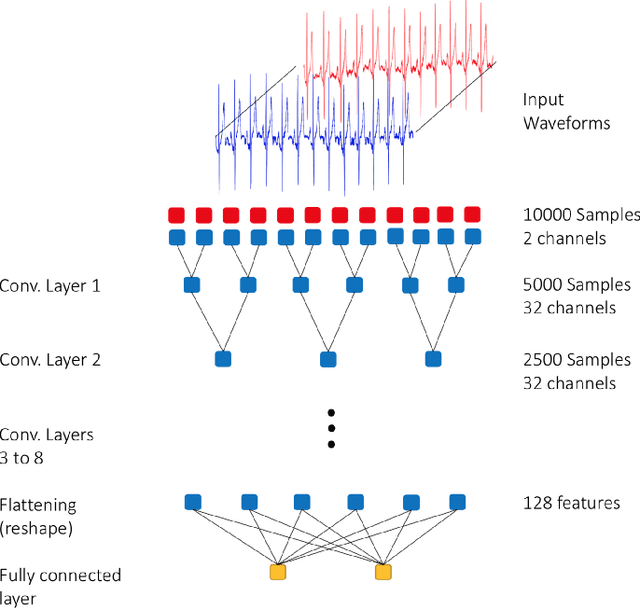

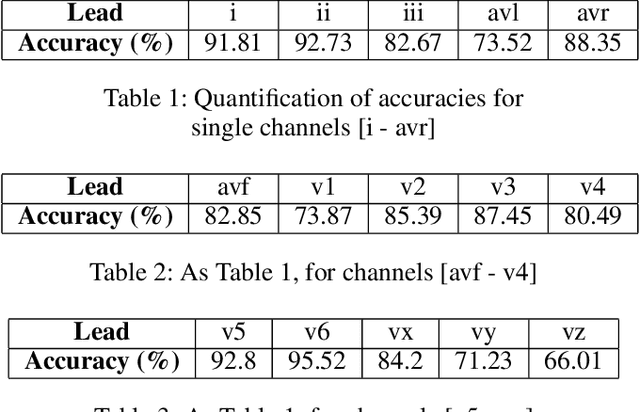

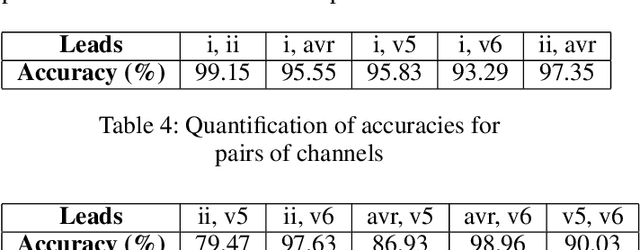

Deep Learning for Cardiologist-level Myocardial Infarction Detection in Electrocardiograms

Dec 16, 2019

Abstract: Heart disease is the leading cause of death worldwide. Amongst patients with cardiovascular diseases, myocardial infarction is the main cause of death. In order to provide adequate healthcare support to patients who may experience this clinical event, it is essential to gather supportive evidence in a timely manner to help secure a correct diagnosis. In this article, we study the feasibility of using deep learning to identify suggestive electrocardiographic (ECG) changes that may correctly classify heart conditions using the Physikalisch-Technische Bundesanstalt (PTB) database. As part of this study, we systematically quantify the contribution of each ECG lead to correctly tell apart a healthy from an unhealthy heart. For such a study we fine-tune the ConvNetQuake neural network model, which was originally designed to identify earthquakes. Our findings indicate that out of 15 ECG leads, data from the v6 and vz leads are critical to correctly identify myocardial infarction. Based on these findings, we modify ConvNetQuake to simultaneously take in raw ECG data from leads v6 and vz, achieving $99.43\%$ classification accuracy, which represents cardiologist-level performance for myocardial infarction detection after feeding only 10 seconds of raw ECG data to our neural network model. This approach differs from others in the community in that the ECG data fed into the neural network model does not require any kind of manual feature extraction or pre-processing.

Enabling real-time multi-messenger astrophysics discoveries with deep learning

Nov 26, 2019

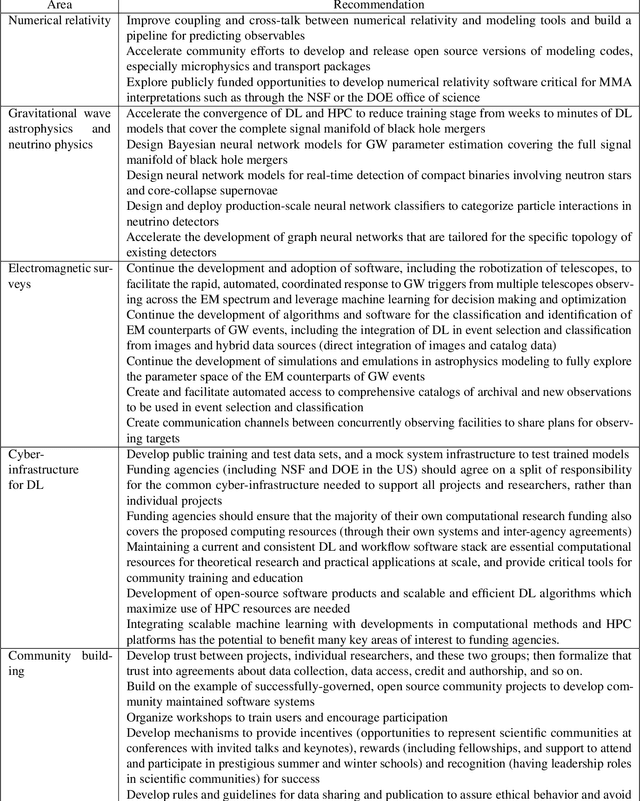

Abstract: Multi-messenger astrophysics is a fast-growing, interdisciplinary field that combines data, which vary in volume and speed of data processing, from many different instruments that probe the Universe using different cosmic messengers: electromagnetic waves, cosmic rays, gravitational waves and neutrinos. In this Expert Recommendation, we review the key challenges of real-time observations of gravitational wave sources and their electromagnetic and astroparticle counterparts, and make a number of recommendations to maximize their potential for scientific discovery. These recommendations refer to the design of scalable and computationally efficient machine learning algorithms; the cyber-infrastructure to numerically simulate astrophysical sources, and to process and interpret multi-messenger astrophysics data; the management of gravitational wave detections to trigger real-time alerts for electromagnetic and astroparticle follow-ups; a vision to harness future developments of machine learning and cyber-infrastructure resources to cope with the big-data requirements; and the need to build a community of experts to realize the goals of multi-messenger astrophysics.

* Invited Expert Recommendation for Nature Reviews Physics. The art work produced by E. A. Huerta and Shawn Rosofsky for this article was used by Carl Conway to design the cover of the October 2019 issue of Nature Reviews Physics

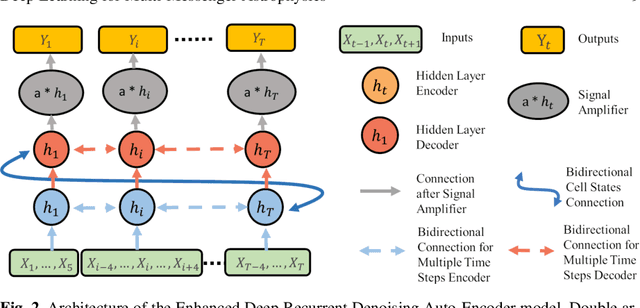

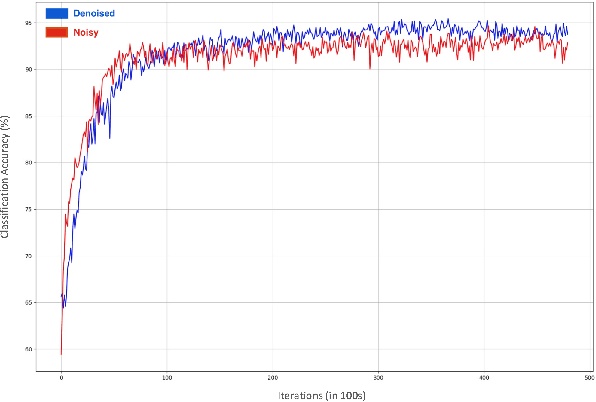

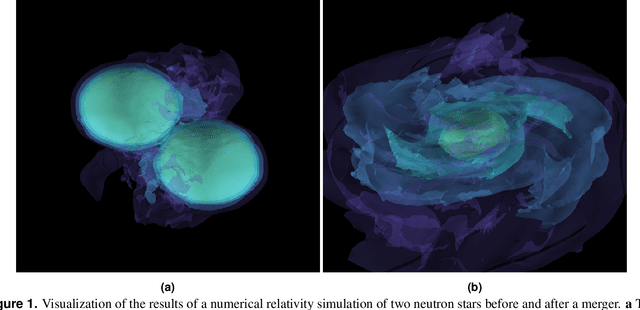

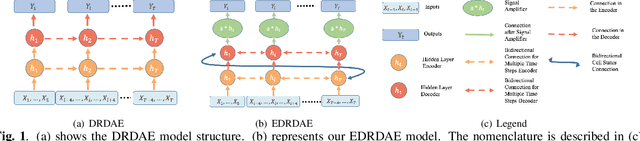

Denoising Gravitational Waves with Enhanced Deep Recurrent Denoising Auto-Encoders

Mar 06, 2019

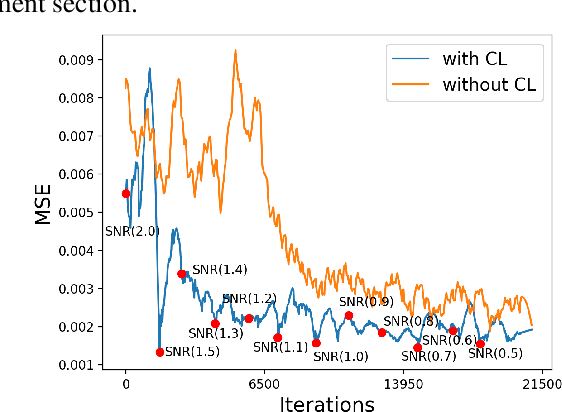

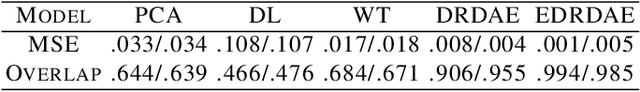

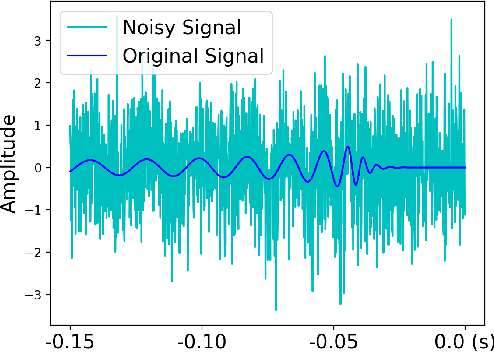

Abstract: Denoising of time domain data is a crucial task for many applications such as communication, translation, virtual assistants, etc. For this task, a combination of recurrent neural networks (RNNs) with denoising auto-encoders (DAEs) has shown promising results. However, this combined model is challenged when operating with low signal-to-noise ratio (SNR) data embedded in non-Gaussian and non-stationary noise. To address this issue, we design a novel model, referred to as 'Enhanced Deep Recurrent Denoising Auto-Encoder' (EDRDAE), that incorporates a signal amplifier layer, and applies curriculum learning by first denoising high SNR signals, before gradually decreasing the SNR until the signals become noise dominated. We showcase the performance of EDRDAE using time-series data that describes gravitational waves embedded in very noisy backgrounds. In addition, we show that EDRDAE can accurately denoise signals whose topology is significantly more complex than those used for training, demonstrating that our model generalizes to new classes of gravitational waves that are beyond the scope of established denoising algorithms.
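The curriculum described in this abstract, starting with high-SNR examples and gradually lowering the SNR, can be sketched as a data-generation schedule. The sketch below is illustrative only: it assumes unit-variance Gaussian noise (the paper targets non-Gaussian, non-stationary noise), defines SNR as peak signal amplitude over noise standard deviation, and uses the hypothetical names `snr_schedule` and `make_noisy`.

```python
import numpy as np

def snr_schedule(start_snr, end_snr, n_stages):
    """Linearly decreasing SNR values, one per curriculum stage (assumed form)."""
    if start_snr <= end_snr:
        raise ValueError("the curriculum should start at the higher SNR")
    return np.linspace(start_snr, end_snr, n_stages)

def make_noisy(signal, snr, rng):
    """Rescale `signal` to peak amplitude `snr` and add unit-variance noise.

    Returns (noisy_input, clean_target) for one denoising training pair.
    """
    signal = np.asarray(signal, dtype=float)
    peak = np.max(np.abs(signal))
    if peak == 0.0:
        raise ValueError("signal must be non-zero")
    clean = signal * (snr / peak)
    noisy = clean + rng.standard_normal(signal.shape)
    return noisy, clean

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 512, endpoint=False)
template = np.sin(2 * np.pi * 30 * t) * np.exp(-((t - 0.5) / 0.1) ** 2)

# One (noisy, clean) pair per stage; a real curriculum would generate
# many pairs per stage and train the denoiser on them before moving on.
stages = snr_schedule(2.0, 0.5, 4)
pairs = [make_noisy(template, snr, rng) for snr in stages]
```

Each stage would feed the denoiser harder examples than the previous one, ending with signals that are dominated by noise.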

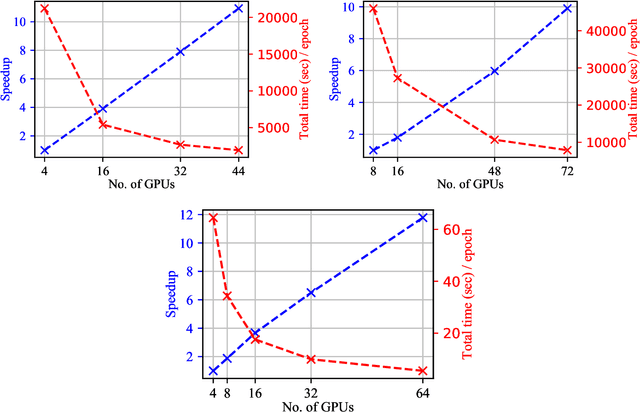

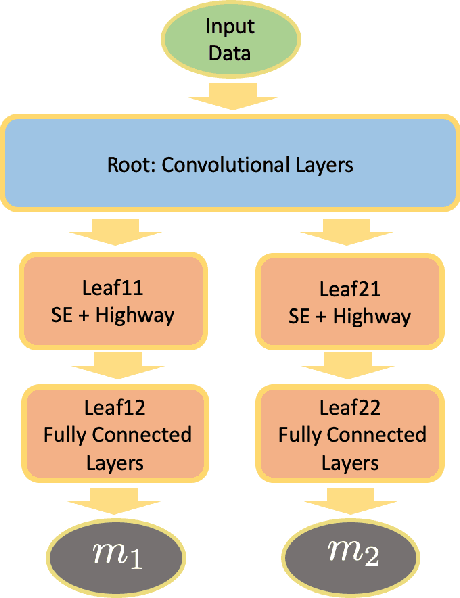

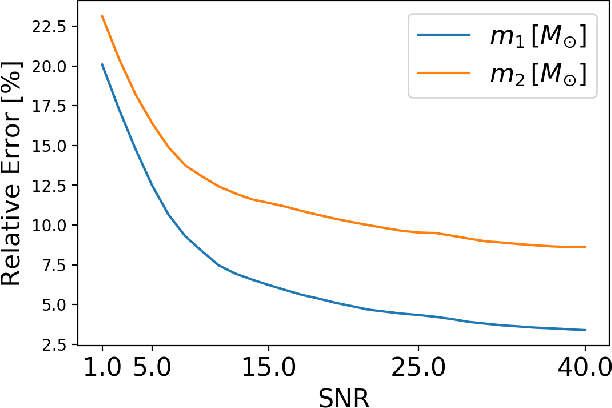

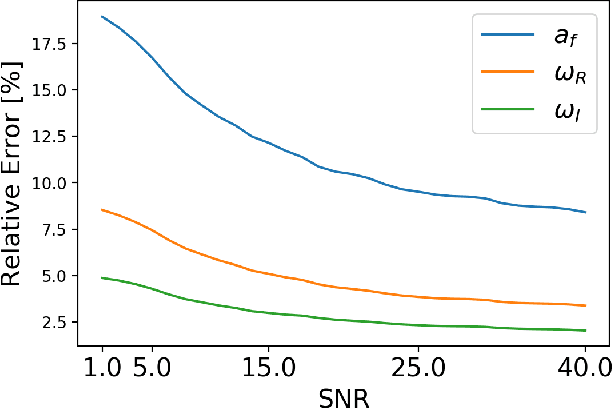

Deep Learning at Scale for Gravitational Wave Parameter Estimation of Binary Black Hole Mergers

Mar 05, 2019

Abstract: We present the first application of deep learning at scale to do gravitational wave parameter estimation of binary black hole mergers that describe a 4-D signal manifold, i.e., black holes whose spins are aligned or anti-aligned, and which evolve on quasi-circular orbits. We densely sample this 4-D signal manifold using over three hundred thousand simulated waveforms. In order to cover a broad range of astrophysically motivated scenarios, we synthetically enhance this waveform dataset to ensure that our deep learning algorithms can process waveforms located at any point in the data stream of gravitational wave detectors (time invariance) for a broad range of signal-to-noise ratios (scale invariance), which in turn means that our neural network models are trained with over $10^{7}$ waveform signals. We then apply these neural network models to estimate the astrophysical parameters of black hole mergers, and their corresponding black hole remnants, including the final spin and the gravitational wave quasi-normal frequencies. These neural network models represent the first time deep learning is used to provide point-parameter estimation calculations endowed with statistical errors. For each binary black hole merger that ground-based gravitational wave detectors have observed, our deep learning algorithms can reconstruct its parameters within 2 milliseconds using a single Tesla V100 GPU. We show that this new approach produces parameter estimation results that are consistent with Bayesian analyses that have been used to reconstruct the parameters of the catalog of binary black hole mergers observed by the advanced LIGO and Virgo detectors.
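The dataset enhancement described in this abstract (time invariance and scale invariance) can be sketched as a simple augmentation step. This is a minimal illustration under stated assumptions: the waveform is placed at a uniformly random offset inside a longer zero-filled window and multiplied by a uniformly random amplitude factor; the function name `augment`, the window length, and the amplitude range are hypothetical, and no detector noise is added here.

```python
import numpy as np

def augment(waveform, window_len, rng, amp_range=(0.5, 2.0)):
    """Place `waveform` at a random offset in a window and rescale it.

    Returns (window, offset, scale). Random offsets expose a model to
    signals anywhere in the data stream; random scales stand in for a
    range of signal-to-noise ratios.
    """
    waveform = np.asarray(waveform, dtype=float)
    if waveform.ndim != 1 or waveform.size > window_len:
        raise ValueError("waveform must be 1-D and fit inside the window")
    # Upper bound is exclusive, so the last valid offset is included.
    offset = int(rng.integers(0, window_len - waveform.size + 1))
    scale = float(rng.uniform(*amp_range))
    window = np.zeros(window_len)
    window[offset:offset + waveform.size] = scale * waveform
    return window, offset, scale

rng = np.random.default_rng(0)
base = np.hanning(64) * np.sin(np.linspace(0.0, 20.0 * np.pi, 64))

# Several augmented copies of one simulated waveform.
samples = [augment(base, 256, rng) for _ in range(5)]
```

Applying many such random placements and rescalings to each simulated waveform is one way a few hundred thousand templates could grow into a much larger training set.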

Deep Learning for Multi-Messenger Astrophysics: A Gateway for Discovery in the Big Data Era

Feb 01, 2019

Abstract: This report provides an overview of recent work that harnesses the Big Data Revolution and Large Scale Computing to address grand computational challenges in Multi-Messenger Astrophysics, with a particular emphasis on real-time discovery campaigns. Acknowledging the transdisciplinary nature of Multi-Messenger Astrophysics, this document has been prepared by members of the physics, astronomy, computer science, data science, software and cyberinfrastructure communities who attended the NSF-, DOE- and NVIDIA-funded "Deep Learning for Multi-Messenger Astrophysics: Real-time Discovery at Scale" workshop, hosted at the National Center for Supercomputing Applications, October 17-19, 2018. Highlights of this report include unanimous agreement that it is critical to accelerate the development and deployment of novel, signal-processing algorithms that use the synergy between artificial intelligence (AI) and high performance computing to maximize the potential for scientific discovery with Multi-Messenger Astrophysics. We discuss key aspects to realize this endeavor, namely (i) the design and exploitation of scalable and computationally efficient AI algorithms for Multi-Messenger Astrophysics; (ii) cyberinfrastructure requirements to numerically simulate astrophysical sources, and to process and interpret Multi-Messenger Astrophysics data; (iii) management of gravitational wave detections and triggers to enable electromagnetic and astro-particle follow-ups; (iv) a vision to harness future developments of machine and deep learning and cyberinfrastructure resources to cope with the scale of discovery in the Big Data Era; and (v) the need to build a community that brings domain experts together with data scientists on equal footing to maximize and accelerate discovery in the nascent field of Multi-Messenger Astrophysics.

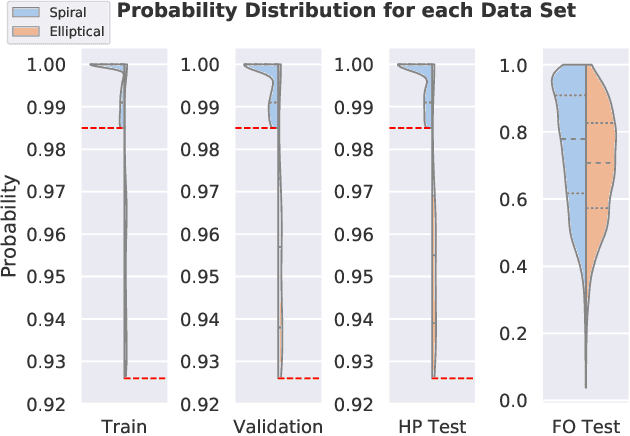

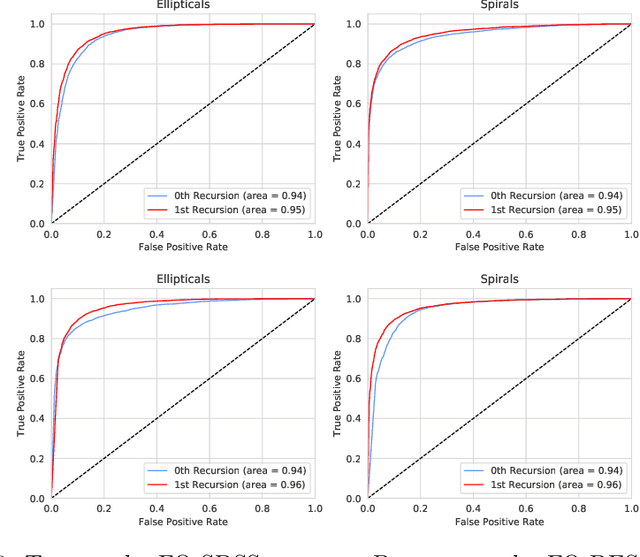

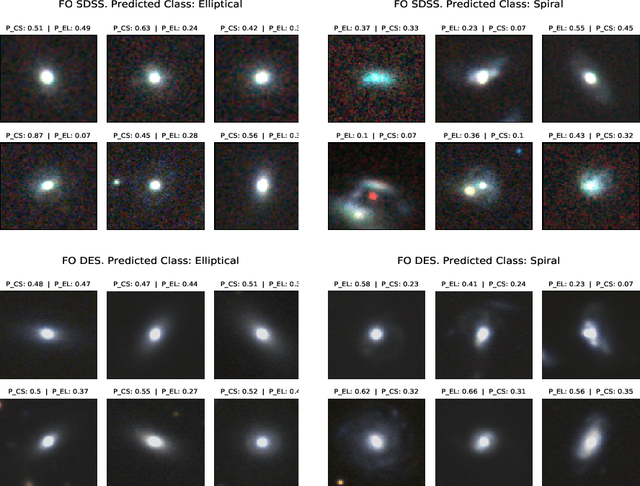

Unsupervised learning and data clustering for the construction of Galaxy Catalogs in the Dark Energy Survey

Dec 05, 2018

Abstract: Large scale astronomical surveys continue to increase their depth and scale, providing new opportunities to observe large numbers of celestial objects with ever increasing precision. At the same time, the sheer scale of ongoing and future surveys poses formidable challenges to classify astronomical objects. Pioneering efforts on this front include the citizen science approach adopted by the Sloan Digital Sky Survey (SDSS). These SDSS datasets have been used recently to train neural network models to classify galaxies in the Dark Energy Survey (DES) that overlap the footprint of both surveys. While this represents a significant step to classify unlabeled images of astrophysical objects in DES, the key issue at heart still remains, i.e., the classification of unlabeled DES galaxies that have not been observed in previous surveys. To start addressing this timely and pressing matter, we demonstrate that knowledge from deep learning algorithms trained with real-object images can be transferred to classify elliptical and spiral galaxies that overlap both SDSS and DES surveys, achieving state-of-the-art accuracy of 99.6%. More importantly, to initiate the characterization of unlabeled DES galaxies that have not been observed in previous surveys, we demonstrate that our neural network model can also be used for unsupervised clustering, grouping together unlabeled DES galaxies into spiral and elliptical types. We showcase the application of this novel approach by classifying over ten thousand unlabeled DES galaxies into spiral and elliptical classes. We conclude by showing that unsupervised clustering can be combined with recursive training to start creating large-scale DES galaxy catalogs in preparation for the Large Synoptic Survey Telescope era.
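The idea of grouping unlabeled galaxies by clustering neural network features can be illustrated with a small sketch. The abstract does not state which clustering algorithm was used, so plain k-means with two clusters is swapped in here as a stand-in; the feature vectors are synthetic, and the function name `kmeans` and the farthest-point initialization are assumptions made for illustration.

```python
import numpy as np

def kmeans(features, k=2, n_iter=50):
    """Plain k-means on feature vectors (illustrative stand-in).

    Uses a deterministic farthest-point initialization and returns
    (labels, centers).
    """
    x = np.asarray(features, dtype=float)
    if x.ndim != 2 or x.shape[0] < k or n_iter < 1:
        raise ValueError("need a 2-D array with at least k rows and n_iter >= 1")
    centers = [x[0]]
    for _ in range(1, k):
        # Squared distance of every point to its nearest chosen center.
        d = np.min([np.sum((x - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(x[np.argmax(d)])
    centers = np.array(centers)
    for _ in range(n_iter):
        dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        # Keep the old center if a cluster happens to be empty.
        new_centers = np.array([
            x[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

# Synthetic stand-ins for network features of two galaxy types.
rng = np.random.default_rng(0)
group_a = rng.normal(0.0, 0.1, size=(20, 3))
group_b = rng.normal(5.0, 0.1, size=(20, 3))
labels, centers = kmeans(np.vstack([group_a, group_b]), k=2)
```

In practice the rows would be feature vectors extracted from a trained network for each galaxy image, and the resulting cluster labels would still need to be matched to the spiral and elliptical classes.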
