Dolores Garcia

AI Agents Can Already Autonomously Perform Experimental High Energy Physics

Mar 20, 2026

Abstract: Large language model-based AI agents are now able to autonomously execute substantial portions of a high energy physics (HEP) analysis pipeline with minimal expert-curated input. Given access to a HEP dataset, an execution framework, and a corpus of prior experimental literature, we find that Claude Code succeeds in automating all stages of a typical analysis: event selection, background estimation, uncertainty quantification, statistical inference, and paper drafting. We argue that the experimental HEP community is underestimating the current capabilities of these systems, and that most proposed agentic workflows are too narrowly scoped or scaffolded to specific analysis structures. We present a proof-of-concept framework, Just Furnish Context (JFC), that integrates autonomous analysis agents with literature-based knowledge retrieval and multi-agent review, and show that this is sufficient to plan, execute, and document a credible high energy physics analysis. We demonstrate this by conducting analyses on open data from ALEPH, DELPHI, and CMS to perform electroweak, QCD, and Higgs boson measurements. Rather than replacing physicists, these tools promise to offload the repetitive technical burden of analysis code development, freeing researchers to focus on physics insight, truly novel method development, and rigorous validation. Given these developments, we advocate for new strategies for how the community trains students, organizes analysis efforts, and allocates human expertise.

End-to-end event reconstruction for precision physics at future colliders

Mar 04, 2026

Abstract: Future collider experiments require unprecedented precision in measurements of Higgs, electroweak, and flavour observables, placing stringent demands on event reconstruction. The achievable precision on Higgs couplings scales directly with the resolution on visible final state particles and their invariant masses. Current particle flow algorithms rely on detector-specific clustering, limiting flexibility during detector design. Here we present an end-to-end global event reconstruction approach that maps charged particle tracks and calorimeter and muon hits directly to particle-level objects. The method combines geometric algebra transformer networks with object-condensation-based clustering, followed by dedicated networks for particle identification and energy regression. Our approach is benchmarked on fully simulated electron-positron collisions at FCC-ee using the CLD detector concept. It outperforms the state-of-the-art rule-based algorithm by 10-20% in relative reconstruction efficiency, achieves up to two orders of magnitude reduction in fake-particle rates for charged hadrons, and improves visible energy and invariant mass resolution by 22%. By decoupling reconstruction performance from detector-specific tuning, this framework enables rapid iteration during the detector design phase of future collider experiments.

Machine Learning on Heterogeneous, Edge, and Quantum Hardware for Particle Physics (ML-HEQUPP)

Feb 24, 2026

Abstract: The next generation of particle physics experiments will face a new era of challenges in data acquisition, due to unprecedented data rates and volumes along with extreme environments and operational constraints. Harnessing this data for scientific discovery demands real-time inference and decision-making, intelligent data reduction, and efficient processing architectures beyond current capabilities. Crucial to the success of this experimental paradigm are several emerging technologies, such as artificial intelligence and machine learning (AI/ML) and silicon microelectronics, and the advent of quantum algorithms and processing. Their intersection includes areas of research such as low-power and low-latency devices for edge computing, heterogeneous accelerator systems, reconfigurable hardware, novel codesign and synthesis strategies, readout for cryogenic or high-radiation environments, and analog computing. This white paper presents a community-driven vision to identify and prioritize research and development opportunities in hardware-based ML systems and corresponding physics applications, contributing towards a successful transition to the new data frontier of fundamental science.

Pix2Streams: Dynamic Hydrology Maps from Satellite-LiDAR Fusion

Nov 15, 2020

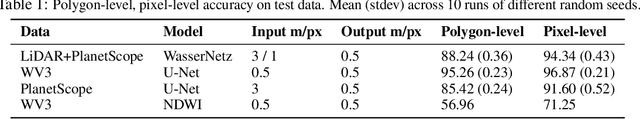

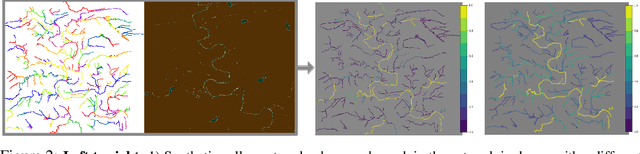

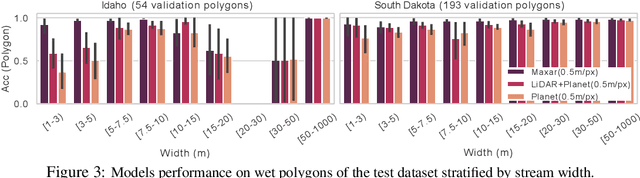

Abstract: Where are the Earth's streams flowing right now? Inland surface waters expand with floods and contract with droughts, so there is no one map of our streams. Current satellite approaches are limited to monthly observations that map only the widest streams. These are fed by smaller tributaries that make up much of the dendritic surface network but whose flow is unobserved. A complete map of our daily waters can give us an early warning for where droughts are born: the receding tips of the flowing network. Mapping them over years can give us a map of impermanence of our waters, showing where to expect water, and where not to. To that end, we feed the latest high-res sensor data to multiple deep learning models in order to map these flowing networks every day, stacking the time-series maps over many years. Specifically, i) we enhance water segmentation to $50$ cm/pixel resolution, a 60$\times$ improvement over previous state-of-the-art results. Our U-Net trained on 30-40 cm WorldView3 images can detect streams as narrow as 1-3 m (30-60$\times$ over SOTA). Our multi-sensor, multi-res variant, WasserNetz, fuses a multi-day window of 3 m PlanetScope imagery with 1 m LiDAR data to detect streams 5-7 m wide. Both U-Nets produce a water probability map at the pixel level. ii) We integrate this water map over a DEM-derived synthetic valley network map to produce a snapshot of flow at the stream level. iii) We apply this pipeline, which we call Pix2Streams, to a 2-year daily PlanetScope time series of three watersheds in the US to produce the first high-fidelity dynamic map of stream flow frequency. The end result is a new map that, if applied at the national scale, could fundamentally improve how we manage our water resources around the world.
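Steps ii) and iii) of the abstract can be illustrated with a minimal NumPy sketch. This is not the Pix2Streams implementation: the function names, the mean-probability aggregation, and the 0.5 threshold are all assumptions made for illustration. It assumes each pixel of the valley network is labelled with an integer segment id (with -1 for pixels off the network), averages the pixel-level water probability within each segment to get a daily flowing/not-flowing flag, and then averages those flags over days to get a per-segment flow frequency.

```python
import numpy as np

def segment_flow(water_prob, segment_ids, n_segments, threshold=0.5):
    """Flag each segment as flowing if its mean water probability >= threshold.

    Hypothetical aggregation rule; the abstract does not specify one.
    """
    on_network = segment_ids >= 0          # -1 marks pixels off the valley network
    ids = segment_ids[on_network]
    sums = np.bincount(ids, weights=water_prob[on_network], minlength=n_segments)
    counts = np.bincount(ids, minlength=n_segments)
    mean_prob = np.divide(sums, counts, out=np.zeros(n_segments), where=counts > 0)
    return mean_prob >= threshold

def flow_frequency(daily_probs, segment_ids, n_segments, threshold=0.5):
    """Fraction of days on which each segment is flagged as flowing."""
    flags = np.stack(
        [segment_flow(p, segment_ids, n_segments, threshold) for p in daily_probs]
    )
    return flags.mean(axis=0)

# Toy example: a 2x3 raster with two stream segments and two days of data.
segment_ids = np.array([[0, 0, -1],
                        [1, 1, -1]])
day1 = np.array([[0.9, 0.8, 0.1],
                 [0.2, 0.1, 0.0]])   # segment 0 wet, segment 1 dry
day2 = np.array([[0.7, 0.9, 0.0],
                 [0.6, 0.8, 0.0]])   # both segments wet
freq = flow_frequency([day1, day2], segment_ids, n_segments=2)
print(freq)
```

In this toy case segment 0 is flagged on both days and segment 1 on one of two, so the frequencies are 1.0 and 0.5. A real pipeline would substitute the U-Net probability maps and a DEM-derived segment raster for the hand-written arrays.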
