Chengjian Liu

Decoupling the All-Reduce Primitive for Accelerating Distributed Deep Learning

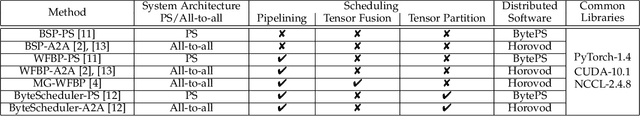

Feb 24, 2023Abstract:Communication scheduling has been shown to be effective in accelerating distributed training, which enables all-reduce communications to be overlapped with backpropagation computations. This has been commonly adopted in popular distributed deep learning frameworks. However, there exist two fundamental problems: (1) excessive startup latency proportional to the number of workers for each all-reduce operation; (2) it only achieves sub-optimal training performance due to the dependency and synchronization requirement of the feed-forward computation in the next iteration. We propose a novel scheduling algorithm, DeAR, that decouples the all-reduce primitive into two continuous operations, which overlaps with both backpropagation and feed-forward computations without extra communications. We further design a practical tensor fusion algorithm to improve the training performance. Experimental results with five popular models show that DeAR achieves up to 83% and 15% training speedup over the state-of-the-art solutions on a 64-GPU cluster with 10Gb/s Ethernet and 100Gb/s InfiniBand interconnects, respectively.

Communication-Efficient Distributed Deep Learning: Survey, Evaluation, and Challenges

May 27, 2020

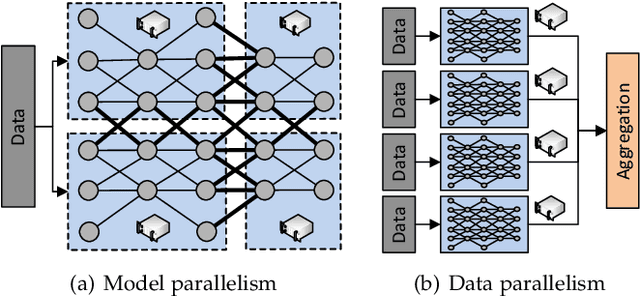

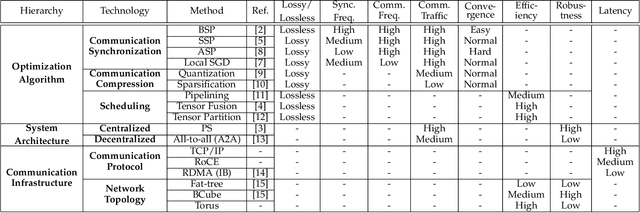

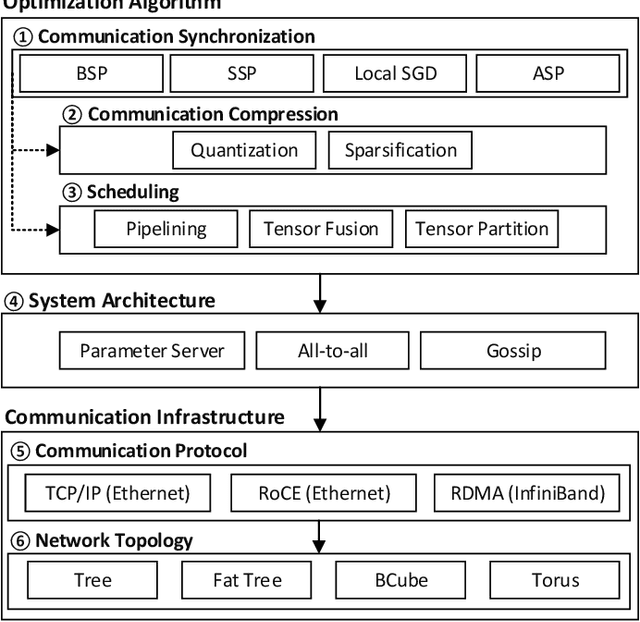

Abstract:In recent years, distributed deep learning techniques are widely deployed to accelerate the training of deep learning models by exploiting multiple computing nodes. However, the extensive communications among workers dramatically limit the system scalability. In this article, we provide a systematic survey of communication-efficient distributed deep learning. Specifically, we first identify the communication challenges in distributed deep learning. Then we summarize the state-of-the-art techniques in this direction, and provide a taxonomy with three levels: optimization algorithm, system architecture, and communication infrastructure. Afterwards, we present a comparative study on seven different distributed deep learning techniques on a 32-GPU cluster with both 10Gbps Ethernet and 100Gbps InfiniBand. We finally discuss some challenges and open issues for possible future investigations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge