Angelo Cenedese

Dynamic Control Allocation for Dual-Tilt UAV Platforms

Apr 07, 2026

Abstract:This paper focuses on dynamic control allocation for a hexarotor UAV platform, considering a trajectory tracking task as a case study. It is assumed that the platform is dual-tilting, meaning that it is able to tilt each propeller independently during flight, along two orthogonal axes. We present a hierarchical control structure composed of a high-level controller generating the required wrench for the tracking task, and a control allocation law ensuring that the actuators produce such a wrench. The allocator imposes desired first-order dynamics on the actuator set, and exploits system redundancy to optimize the actuator state with respect to a given objective function. Unlike other studies on the subject, we explicitly model actuator saturation and provide theoretical insights into its effect on control performance. We also investigate the role of propeller tilt angles by imposing asymmetric shapes in the objective function. Numerical simulations are presented to validate the allocation strategy.
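The allocation idea summarized in this abstract (first-order convergence of the produced wrench, redundancy used to minimize an objective, actuators clipped at their limits) can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the paper's allocation law: two hypothetical actuators produce a scalar wrench `w = u1 + u2`, the objective `J = 0.5 * (u1**2 + 4 * u2**2)` is invented for illustration, and the gains `k`, `gamma` and the limits are arbitrary.

```python
def clip(x, lo, hi):
    """Saturate x to the interval [lo, hi]."""
    return max(lo, min(hi, x))


def allocate(w_des, u0=(0.0, 0.0), k=5.0, gamma=5.0,
             dt=0.01, steps=2000, u_min=0.0, u_max=1.0):
    """Toy dynamic allocator for w = u1 + u2 (allocation matrix A = [1, 1]).

    The update is  u_dot = k * A^+ * (w_des - A u) - gamma * N * grad J(u),
    with pseudo-inverse A^+ = [0.5, 0.5]^T and null-space projector
    N = I - A^+ A = 0.5 * [[1, -1], [-1, 1]].
    J(u) = 0.5 * (u1^2 + 4 * u2^2) is an assumed, illustrative objective.
    """
    u1, u2 = u0
    for _ in range(steps):
        e = w_des - (u1 + u2)          # wrench error
        g1, g2 = u1, 4.0 * u2          # gradient of the assumed objective J
        n1 = 0.5 * (g1 - g2)           # null-space projection of the gradient
        n2 = -n1
        du1 = k * 0.5 * e - gamma * n1
        du2 = k * 0.5 * e - gamma * n2
        # Explicit Euler step followed by actuator saturation
        u1 = clip(u1 + dt * du1, u_min, u_max)
        u2 = clip(u2 + dt * du2, u_min, u_max)
    return u1, u2


if __name__ == "__main__":
    print(allocate(1.0))   # reachable wrench
    print(allocate(3.0))   # unreachable wrench: actuators saturate
```

For a reachable request such as `w_des = 1.0`, the state settles near `(0.8, 0.2)`, the minimizer of the assumed `J` on the line `u1 + u2 = 1`. For an unreachable request the actuators hit their bounds and the wrench error no longer vanishes, which is the kind of degradation a saturation-aware analysis has to account for.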

Force Polytope-Based Cant-Angle Selection for Tilting Hexarotor UAVs

Apr 07, 2026

Abstract:From a maneuverability perspective, the main advantage of tilting multirotor UAVs lies in the dynamic variability of the feasible executable wrench, which represents a key asset for physical interaction tasks. Accordingly, cant-angle selection should be optimized to ensure high performance while avoiding abrupt variations and preserving real-world feasibility. In this context, this work proposes a lightweight control framework for star-shaped interdependent cant-tilting hexarotor UAVs performing interaction tasks. The method uses an offline-computed look-up table of zero-moment force polytopes to identify feasible cant angles for a desired control force and select the optimal one by balancing efficiency and smoothness. The framework is integrated with a geometric full-pose controller and validated through Monte Carlo simulations in MATLAB/Simulink and compared against a baseline strategy. The results show a significant reduction in computation time, together with improved pose-tracking performance and competitive actuation efficiency. A final physics-based simulation of a complete wall inspection task in Simscape further confirms the feasibility of the proposed strategy in interacting scenarios.
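The look-up-table selection described in this abstract can be pictured roughly as follows. This is a hedged sketch, not the authors' implementation: each polytope is stored as a list of half-spaces `n . f <= b`, the table `LUT`, the helper `box`, the per-angle efficiency costs, and the weights `w_eff` and `w_smooth` are all hypothetical values chosen for illustration.

```python
def is_feasible(force, halfspaces):
    """True if force satisfies n . f <= b for every half-space (n, b)."""
    return all(sum(n_i * f_i for n_i, f_i in zip(n, force)) <= b
               for n, b in halfspaces)


def box(fxy_max, fz_max):
    """Illustrative stand-in for a force polytope: an axis-aligned box."""
    return [((1, 0, 0), fxy_max), ((-1, 0, 0), fxy_max),
            ((0, 1, 0), fxy_max), ((0, -1, 0), fxy_max),
            ((0, 0, 1), fz_max), ((0, 0, -1), 0.0)]


# Hypothetical offline table: cant angle [deg] -> (polytope, efficiency cost)
LUT = {
    0: (box(0.0, 30.0), 0.0),
    15: (box(4.0, 28.0), 0.1),
    30: (box(8.0, 25.0), 0.3),
}


def select_cant_angle(force, prev_angle, lut, w_eff=1.0, w_smooth=0.01):
    """Pick the feasible angle minimizing efficiency cost plus angle change."""
    candidates = [a for a, (hs, _) in lut.items() if is_feasible(force, hs)]
    if not candidates:
        return None  # no tabulated angle can produce the requested force
    return min(candidates,
               key=lambda a: w_eff * lut[a][1] + w_smooth * abs(a - prev_angle))


if __name__ == "__main__":
    print(select_cant_angle((3.0, 0.0, 20.0), prev_angle=0, lut=LUT))
```

Because the table is computed offline, the online step reduces to membership checks and a small argmin, which is consistent with the low computation time the abstract reports.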

A Sim-to-Real Vision-based Lane Keeping System for a 1:10-scale Autonomous Vehicle

Sep 26, 2024

Abstract:In recent years, several competitions have highlighted the need to investigate vision-based solutions to address scenarios with functional insufficiencies in perception, world modeling and localization. This article presents the Vision-based Lane Keeping System (VbLKS) developed by the DEI-Unipd Team within the context of the Bosch Future Mobility Challenge 2022. The main contribution lies in a Simulation-to-Reality (Sim2Real) GPS-denied VbLKS for a 1:10-scale autonomous vehicle. In this VbLKS, the input to a tailored Pure Pursuit (PP) based control strategy, namely the Lookahead Heading Error (LHE), is estimated at a constant lookahead distance employing a Convolutional Neural Network (CNN). A training strategy for a compact CNN is proposed, emphasizing data generation and augmentation on simulated camera images from a 3D Gazebo simulator, and enabling real-time operation on low-level hardware. A tailored PP-based lateral controller equipped with a derivative action and a PP-based velocity reference generation are implemented. Tuning ranges are established through a systematic time-delay stability analysis. Validation in a representative controlled laboratory setting is provided.
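For readers unfamiliar with Pure Pursuit, the textbook steering law maps a heading error measured at a lookahead distance to a steering angle. The sketch below shows that standard law with an optional derivative term; it is an illustration only, and the function name, the gain `kd`, and the example wheelbase and lookahead values are assumptions rather than the tailored controller or tuning from the paper.

```python
import math


def pure_pursuit_steering(lhe, wheelbase, lookahead, lhe_rate=0.0, kd=0.0):
    """Steering angle [rad] from the lookahead heading error `lhe` [rad].

    Textbook law: delta = atan(2 * L * sin(lhe) / l_d), with wheelbase L and
    lookahead distance l_d (both in meters, l_d > 0). The `kd * lhe_rate`
    term is an assumed, simple form of derivative action.
    """
    delta = math.atan2(2.0 * wheelbase * math.sin(lhe), lookahead)
    return delta + kd * lhe_rate


if __name__ == "__main__":
    # In the VbLKS described above, `lhe` would come from the CNN estimate.
    print(pure_pursuit_steering(lhe=0.1, wheelbase=0.26, lookahead=0.5))
```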

Integrated Hardware and Software Architecture for Industrial AGV with Manual Override Capability

Aug 22, 2024

Abstract:This paper presents a study on transforming a traditional human-operated vehicle into a fully autonomous device. By leveraging previous research and state-of-the-art technologies, the study addresses autonomy, safety, and operational efficiency in industrial environments. Motivated by the demand for automation in hazardous and complex industries, the autonomous system integrates sensors, actuators, advanced control algorithms, and communication systems to enhance safety, streamline processes, and improve productivity. The paper covers system requirements, hardware architecture, software framework and preliminary results. This research offers insights into designing and implementing autonomous capabilities in human-operated vehicles, with implications for improving safety and efficiency in various industrial sectors.

Smart Fleet Solutions: Simulating Electric AGV Performance in Industrial Settings

Aug 22, 2024

Abstract:This paper explores the potential benefits and challenges of integrating Electric Vehicles (EVs) and Autonomous Ground Vehicles (AGVs) in industrial settings to improve sustainability and operational efficiency. While EVs offer environmental advantages, barriers like high costs and limited range hinder their widespread use. Similarly, AGVs, despite their autonomous capabilities, face challenges in technology integration and reliability. To address these issues, the paper develops a fleet management tool tailored for coordinating electric AGVs in industrial environments. The study focuses on simulating electric AGV performance in a primary aluminum plant to provide insights into their effectiveness and offer recommendations for optimizing fleet performance.

Star-shaped Tilted Hexarotor Maneuverability: Analysis of the Role of the Tilt Cant Angles

Aug 22, 2024

Abstract:Star-shaped Tilted Hexarotors are rapidly emerging for applications highly demanding in terms of robustness and maneuverability. To ensure improvement in such features, a careful selection of the tilt angles is mandatory. In this work, we present a rigorous analysis of how the force subspace varies with the tilt cant angles, namely the tilt angles along the vehicle arms, taking into account gravity compensation and torque decoupling to abide by the hovering condition. Novel metrics are introduced to assess the performance of existing tilted platforms, as well as to provide some guidelines for the selection of the tilt cant angle in the design phase.

Tag-based Visual Odometry Estimation for Indoor UAVs Localization

Sep 23, 2023

Abstract:The agility and versatility offered by UAV platforms still encounter obstacles for full exploitation in industrial applications due to their indoor usage limitations. A significant challenge in this sense is finding a reliable and cost-effective way to localize aerial vehicles in a GNSS-denied environment. In this paper, we focus on the visual-based positioning paradigm: high accuracy in UAV position and orientation estimation is achieved by leveraging the potential offered by a dense and size-heterogeneous map of tags. In detail, we propose an efficient visual odometry procedure focusing on hierarchical tag selection, outlier removal, and multi-tag estimation fusion, to facilitate the visual-inertial reconciliation. Experimental results show the validity of the proposed localization architecture as compared to the state of the art.

Visual Sensor Network Stimulation Model Identification via Gaussian Mixture Model and Deep Embedded Features

Jan 18, 2022

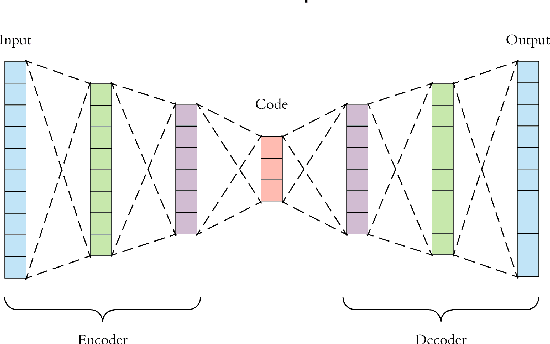

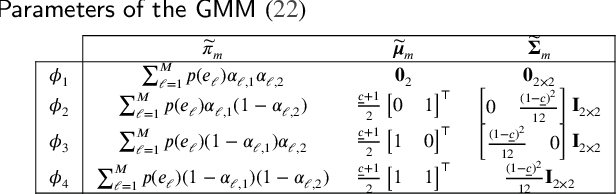

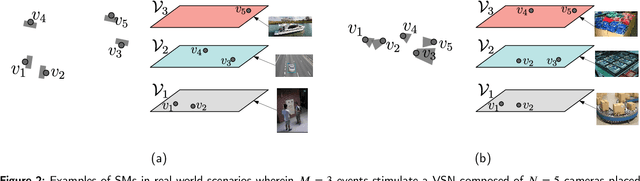

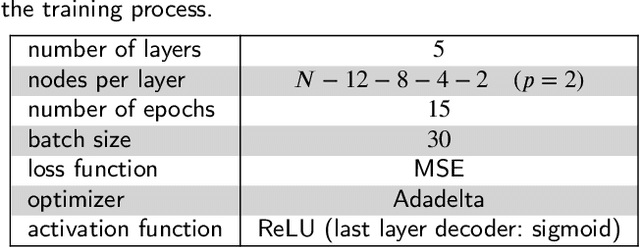

Abstract:Visual sensor networks constitute a fundamental class of distributed sensing systems, posing distinctive research challenges in terms of complexity and performance. One of these challenges is the identification of the network stimulation model (SM), which emerges when a set of detectable events triggers different subsets of the cameras. In this direction, the formulation of the related SM identification problem is proposed, along with a suitable method for generating network observations. An approach based on deep embedded features and soft clustering is then leveraged to solve the identification problem. In detail, Gaussian Mixture Modeling is employed to provide a suitable description of the data distribution, and an autoencoder is used to reduce undesired effects due to the so-called curse of dimensionality. Hence, it is shown that an SM can be learnt by solving Maximum A-Posteriori estimation on the encoded features belonging to a lower-dimensional space. Lastly, numerical results are reported to validate the devised estimation algorithm.

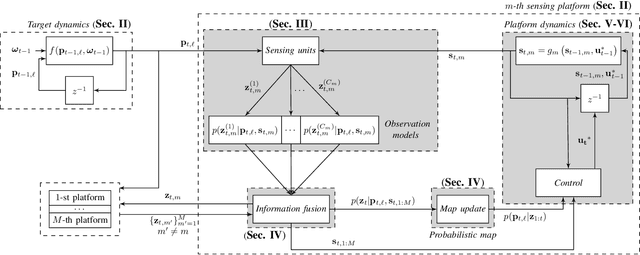

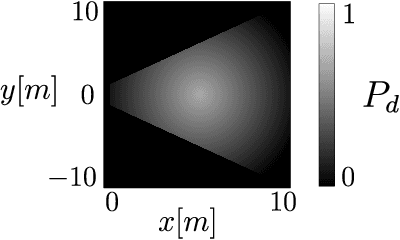

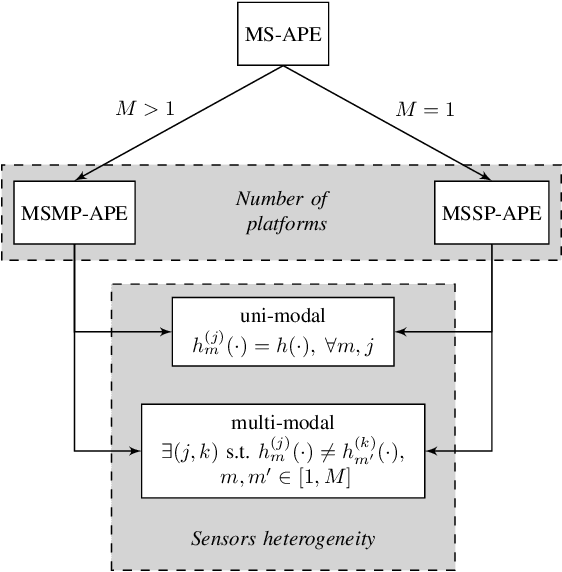

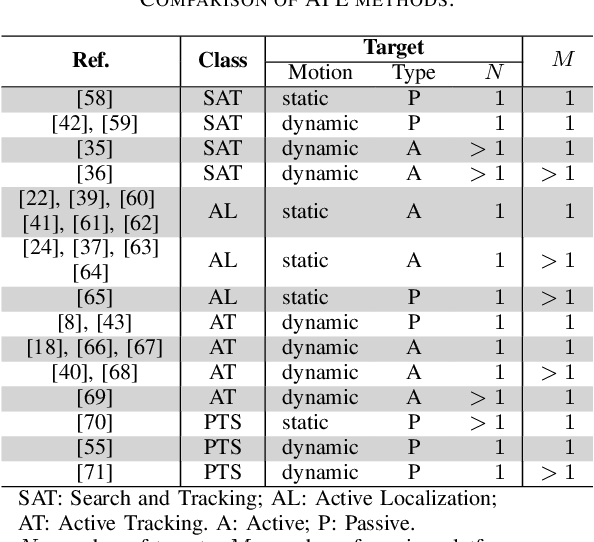

Active Sensing for Search and Tracking: A Review

Dec 04, 2021

Abstract:Active Position Estimation (APE) is the task of localizing one or more targets using one or more sensing platforms. APE is a key task for search and rescue missions, wildlife monitoring, source term estimation, and collaborative mobile robotics. Success in APE depends on the level of cooperation of the sensing platforms, their number, their degrees of freedom and the quality of the information gathered. APE control laws enable active sensing by satisfying either pure-exploitative or pure-explorative criteria. The former minimizes the uncertainty on position estimation, whereas the latter drives the platform closer to its task completion. In this paper, we define the main elements of APE to systematically classify and critically discuss the state of the art in this domain. We also propose a reference framework as a formalism to classify APE-related solutions. Overall, this survey explores the principal challenges and envisages the main research directions in the field of autonomous perception systems for localization tasks. It is also beneficial to promote the development of robust active sensing methods for search and tracking applications.

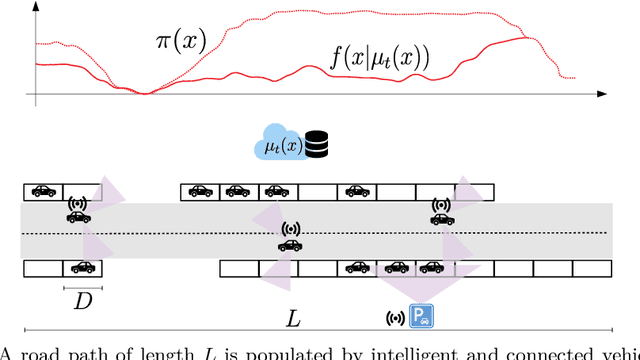

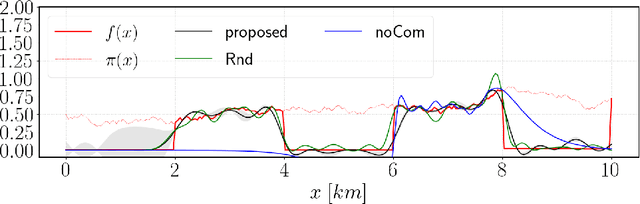

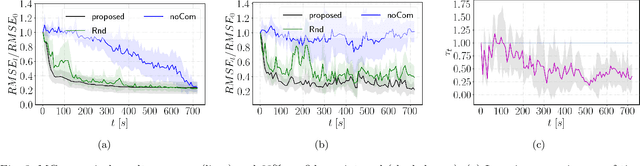

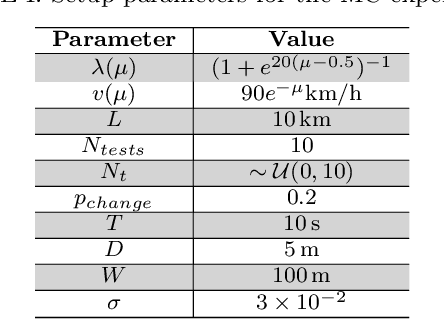

Online and Adaptive Parking Availability Mapping: An Uncertainty-Aware Active Sensing Approach for Connected Vehicles

May 01, 2021

Abstract:Research on connected vehicles represents a continuously evolving technological domain, fostered by the emerging Internet of Things (IoT) paradigm and the recent advances in intelligent transportation systems. Nowadays, vehicles are platforms capable of generating, receiving, and automatically acting on large amounts of data. In the context of assisted driving, connected vehicle technology provides real-time information about the surrounding traffic conditions. Such information is expected to improve drivers' quality of life, for example, by adopting decision-making strategies according to the current parking availability status. In this context, we propose an online and adaptive scheme for parking availability mapping. Specifically, we adopt an information-seeking active sensing approach to select the incoming data, thus preserving the onboard storage and processing resources; then, we estimate the parking availability through Gaussian Process Regression. We compare the proposed algorithm with several baselines, which attain inferior performance in terms of mapping convergence speed and adaptivity; moreover, the proposed approach comes at the cost of a very small computational demand.
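The two ingredients named in this abstract, Gaussian Process Regression and an information-seeking rule for accepting data, can be sketched in a few lines. This is a minimal 1-D illustration under assumptions, not the paper's algorithm: the RBF kernel, its hyperparameters, the noise variance, the variance threshold in `is_informative`, and the sample occupancy values are all hypothetical.

```python
import math


def rbf(x1, x2, length=1.0, sigma_f=1.0):
    """Squared-exponential kernel between two scalar locations."""
    return sigma_f ** 2 * math.exp(-0.5 * ((x1 - x2) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def gp_posterior(x_train, y_train, x_query, noise=1e-2):
    """Posterior mean and variance of a zero-mean GP at x_query.

    `noise` is the observation noise variance added to the kernel diagonal.
    """
    K = [[rbf(a, b) + (noise if i == j else 0.0)
          for j, b in enumerate(x_train)]
         for i, a in enumerate(x_train)]
    k_star = [rbf(x_query, a) for a in x_train]
    alpha = solve(K, y_train)
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve(K, k_star)
    var = rbf(x_query, x_query) - sum(k * vi for k, vi in zip(k_star, v))
    return mean, var


def is_informative(x_train, y_train, x_new, threshold=0.1):
    """Assumed selection rule: keep a sample only where the map is uncertain."""
    _, var = gp_posterior(x_train, y_train, x_new)
    return var > threshold


if __name__ == "__main__":
    xs, ys = [0.0, 1.0, 2.0], [0.2, 0.8, 0.5]   # made-up occupancy fractions
    print(gp_posterior(xs, ys, 1.5))
    print(is_informative(xs, ys, 1.0), is_informative(xs, ys, 10.0))
```

Discarding samples taken where the posterior variance is already low is one simple way to limit onboard storage and processing, in the spirit of the data selection the abstract describes.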
