Andreas Pichler

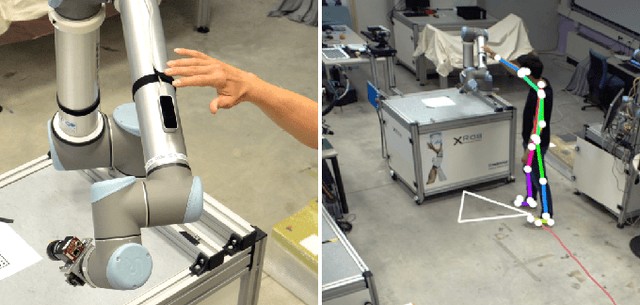

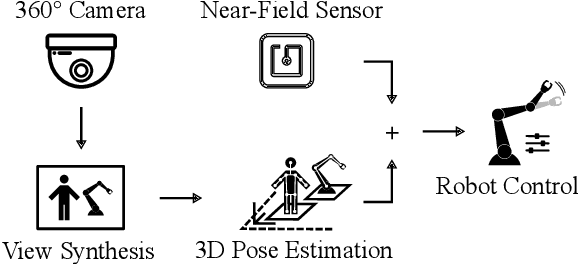

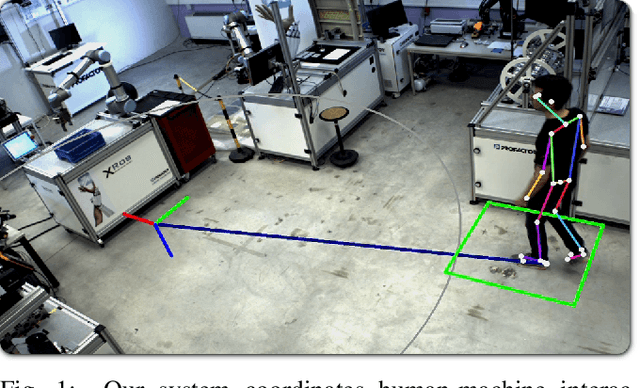

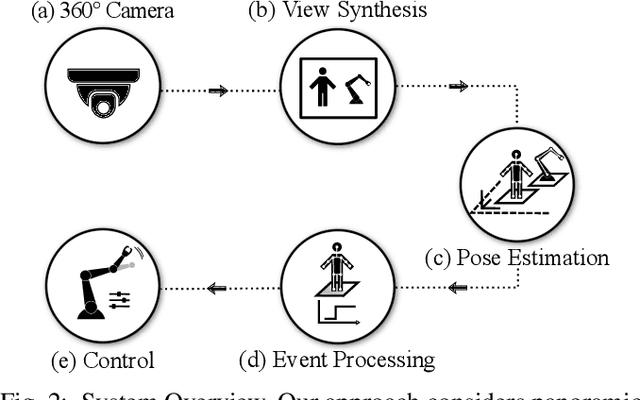

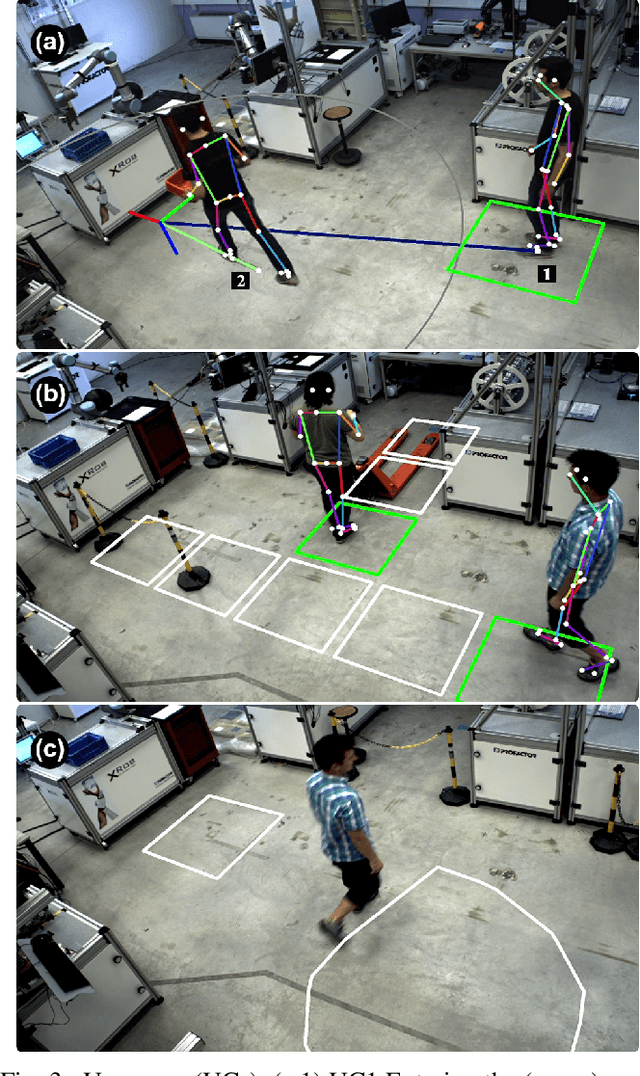

Enhanced Human-Machine Interaction by Combining Proximity Sensing with Global Perception

Oct 16, 2019

Abstract:The raise of collaborative robotics has led to wide range of sensor technologies to detect human-machine interactions: at short distances, proximity sensors detect nontactile gestures virtually occlusion-free, while at medium distances, active depth sensors are frequently used to infer human intentions. We describe an optical system for large workspaces to capture human pose based on a single panoramic color camera. Despite the two-dimensional input, our system is able to predict metric 3D pose information over larger field of views than would be possible with active depth measurement cameras. We merge posture context with proximity perception to reduce occlusions and improve accuracy at long distances. We demonstrate the capabilities of our system in two use cases involving multiple humans and robots.

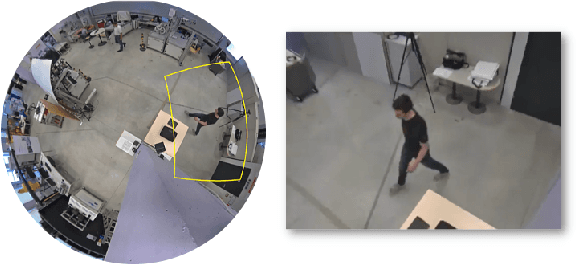

Metric Pose Estimation for Human-Machine Interaction Using Monocular Vision

Oct 08, 2019

Abstract:The rapid growth of collaborative robotics in production requires new automation technologies that take human and machine equally into account. In this work, we describe a monocular camera based system to detect human-machine interactions from a bird's-eye perspective. Our system predicts poses of humans and robots from a single wide-angle color image. Even though our approach works on 2D color input, we lift the majority of detections to a metric 3D space. Our system merges pose information with predefined virtual sensors to coordinate human-machine interactions. We demonstrate the advantages of our system in three use cases.

Spatio-thermal depth correction of RGB-D sensors based on Gaussian Processes in real-time

Jul 01, 2019Abstract:Commodity RGB-D sensors capture color images along with dense pixel-wise depth information in real-time. Typical RGB-D sensors are provided with a factory calibration and exhibit erratic depth readings due to coarse calibration values, ageing and thermal influence effects. This limits their applicability in computer vision and robotics. We propose a novel method to accurately calibrate depth considering spatial and thermal influences jointly. Our work is based on Gaussian Process Regression in a four dimensional Cartesian and thermal domain. We propose to leverage modern GPUs for dense depth map correction in real-time. For reproducibility we make our dataset and source code publicly available.

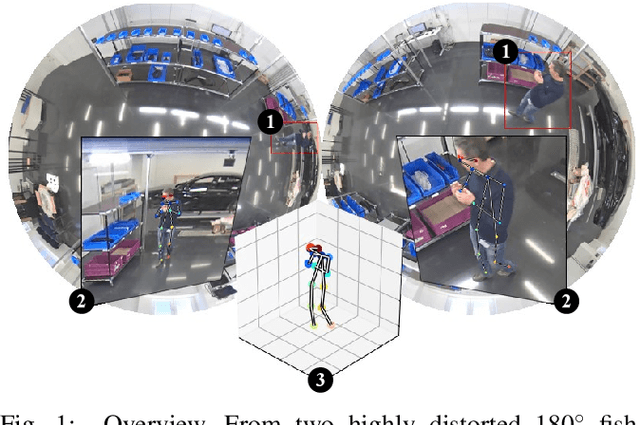

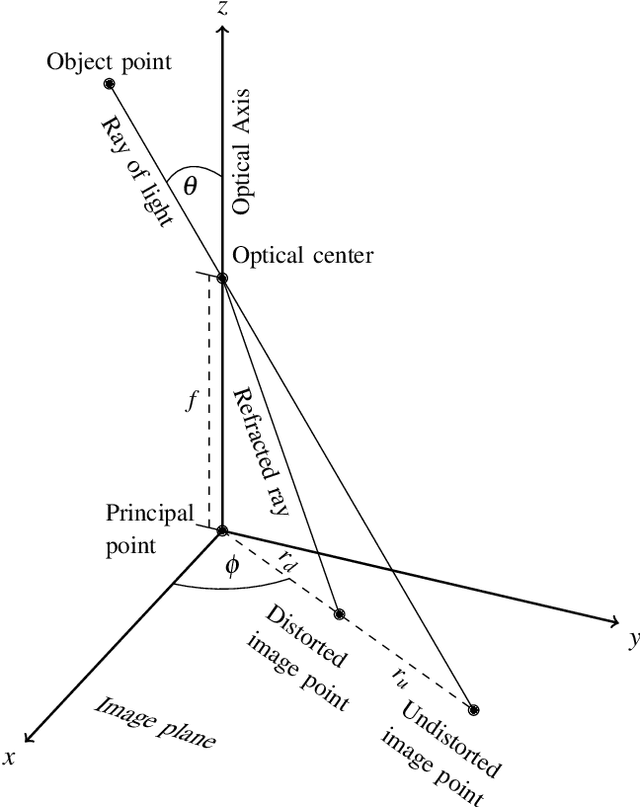

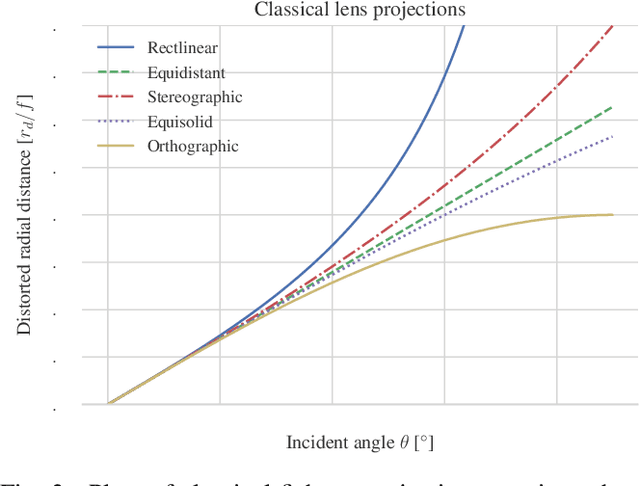

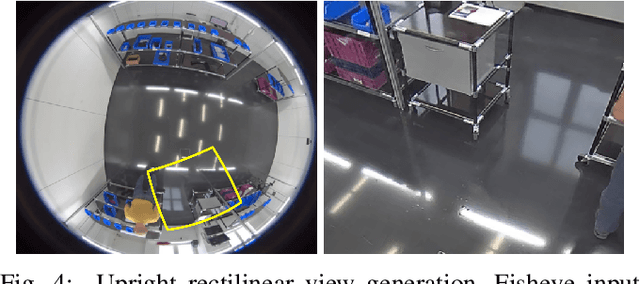

Large Area 3D Human Pose Detection Via Stereo Reconstruction in Panoramic Cameras

Jul 01, 2019

Abstract:We propose a novel 3D human pose detector using two panoramic cameras. We show that transforming fisheye perspectives to rectilinear views allows a direct application of two-dimensional deep-learning pose estimation methods, without the explicit need for a costly re-training step to compensate for fisheye image distortions. By utilizing panoramic cameras, our method is capable of accurately estimating human poses over a large field of view. This renders our method suitable for ergonomic analyses and other pose based assessments.

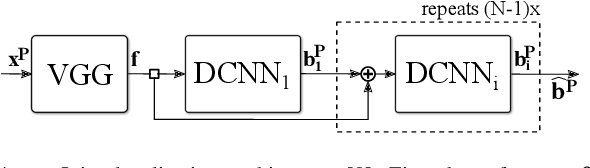

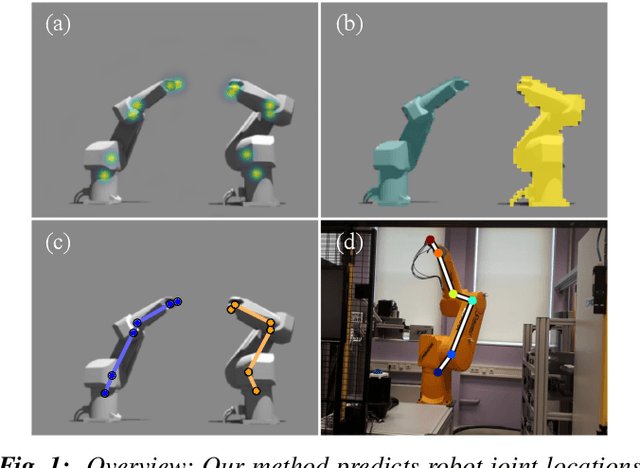

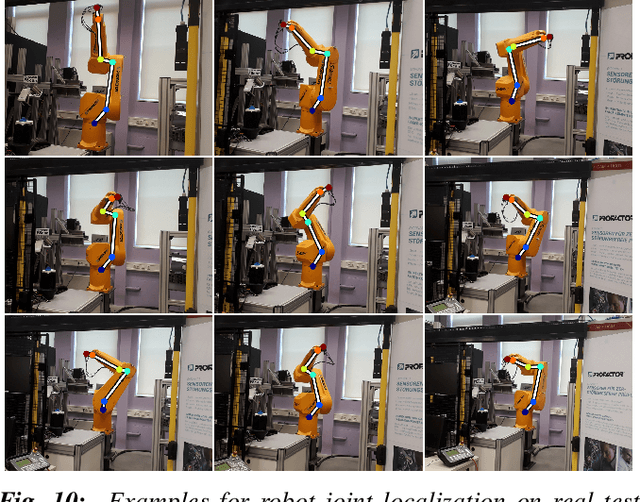

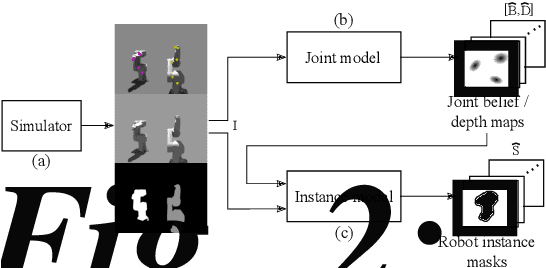

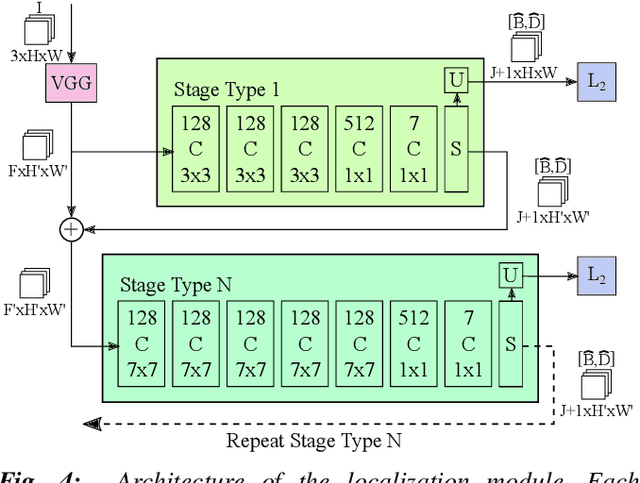

3D Robot Pose Estimation from 2D Images

Feb 13, 2019

Abstract:This paper considers the task of locating articulated poses of multiple robots in images. Our approach simultaneously infers the number of robots in a scene, identifies joint locations and estimates sparse depth maps around joint locations. The proposed method applies staged convolutional feature detectors to 2D image inputs and computes robot instance masks using a recurrent network architecture. In addition, regression maps of most likely joint locations in pixel coordinates together with depth information are computed. Compositing 3D robot joint kinematics is accomplished by applying masks to joint readout maps. Our end-to-end formulation is in contrast to previous work in which the composition of robot joints into kinematics is performed in a separate post-processing step. Despite the fact that our models are trained on artificial data, we demonstrate generalizability to real world images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge