Andrea Bragagnolo

Cardiac Output Prediction from Echocardiograms: Self-Supervised Learning with Limited Data

Feb 14, 2026Abstract:Cardiac Output (CO) is a key parameter in the diagnosis and management of cardiovascular diseases. However, its accurate measurement requires right-heart catheterization, an invasive and time-consuming procedure, motivating the development of reliable non-invasive alternatives using echocardiography. In this work, we propose a self-supervised learning (SSL) pretraining strategy based on SimCLR to improve CO prediction from apical four-chamber echocardiographic videos. The pretraining is performed using the same limited dataset available for the downstream task, demonstrating the potential of SSL even under data scarcity. Our results show that SSL mitigates overfitting and improves representation learning, achieving an average Pearson correlation of 0.41 on the test set and outperforming PanEcho, a model trained on over one million echocardiographic exams. Source code is available at https://github.com/EIDOSLAB/cardiac-output.

When Does Pruning Benefit Vision Representations?

Jul 02, 2025

Abstract:Pruning is widely used to reduce the complexity of deep learning models, but its effects on interpretability and representation learning remain poorly understood. This paper investigates how pruning influences vision models across three key dimensions: (i) interpretability, (ii) unsupervised object discovery, and (iii) alignment with human perception. We first analyze different vision network architectures to examine how varying sparsity levels affect feature attribution interpretability methods. Additionally, we explore whether pruning promotes more succinct and structured representations, potentially improving unsupervised object discovery by discarding redundant information while preserving essential features. Finally, we assess whether pruning enhances the alignment between model representations and human perception, investigating whether sparser models focus on more discriminative features similarly to humans. Our findings also reveal the presence of sweet spots, where sparse models exhibit higher interpretability, downstream generalization and human alignment. However, these spots highly depend on the network architectures and their size in terms of trainable parameters. Our results suggest a complex interplay between these three dimensions, highlighting the importance of investigating when and how pruning benefits vision representations.

To update or not to update? Neurons at equilibrium in deep models

Jul 19, 2022

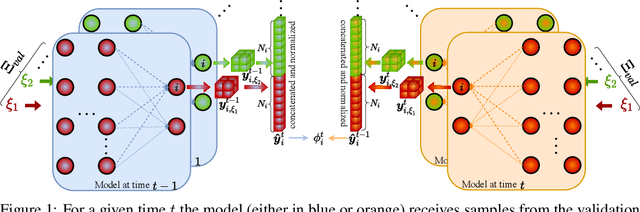

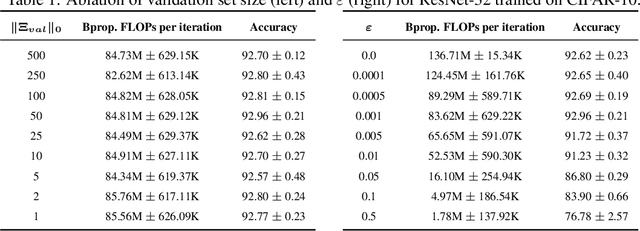

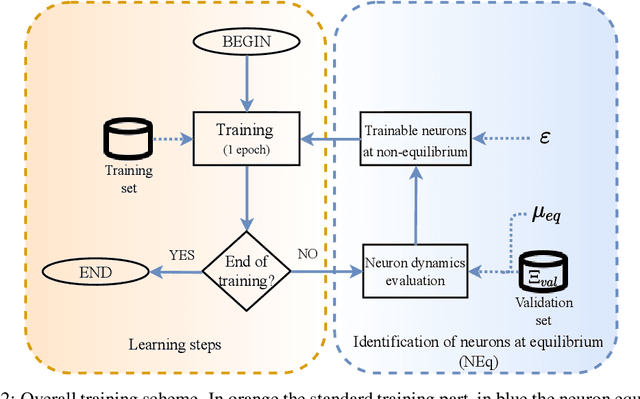

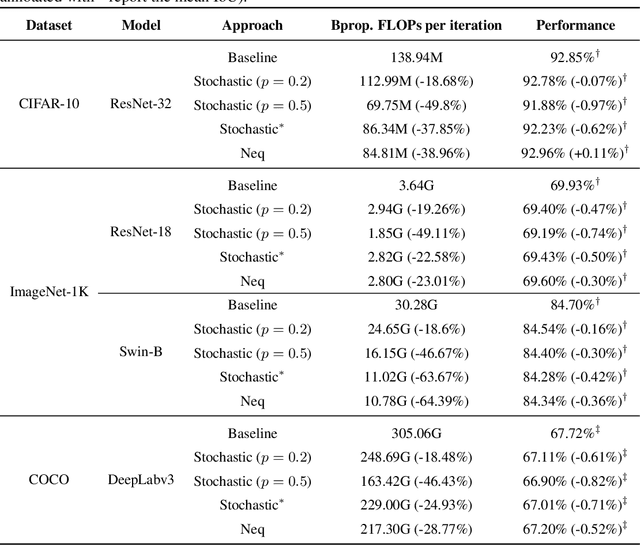

Abstract:Recent advances in deep learning optimization showed that, with some a-posteriori information on fully-trained models, it is possible to match the same performance by simply training a subset of their parameters. Such a discovery has a broad impact from theory to applications, driving the research towards methods to identify the minimum subset of parameters to train without look-ahead information exploitation. However, the methods proposed do not match the state-of-the-art performance, and rely on unstructured sparsely connected models. In this work we shift our focus from the single parameters to the behavior of the whole neuron, exploiting the concept of neuronal equilibrium (NEq). When a neuron is in a configuration at equilibrium (meaning that it has learned a specific input-output relationship), we can halt its update; on the contrary, when a neuron is at non-equilibrium, we let its state evolve towards an equilibrium state, updating its parameters. The proposed approach has been tested on different state-of-the-art learning strategies and tasks, validating NEq and observing that the neuronal equilibrium depends on the specific learning setup.

SeReNe: Sensitivity based Regularization of Neurons for Structured Sparsity in Neural Networks

Feb 07, 2021

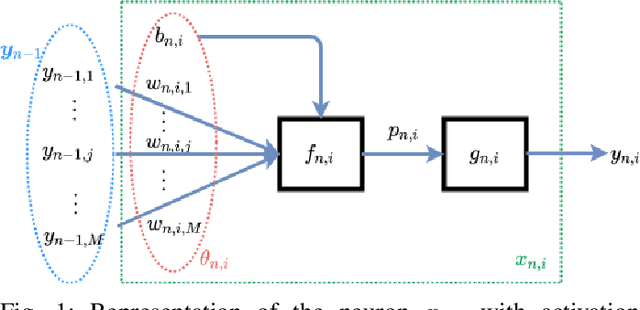

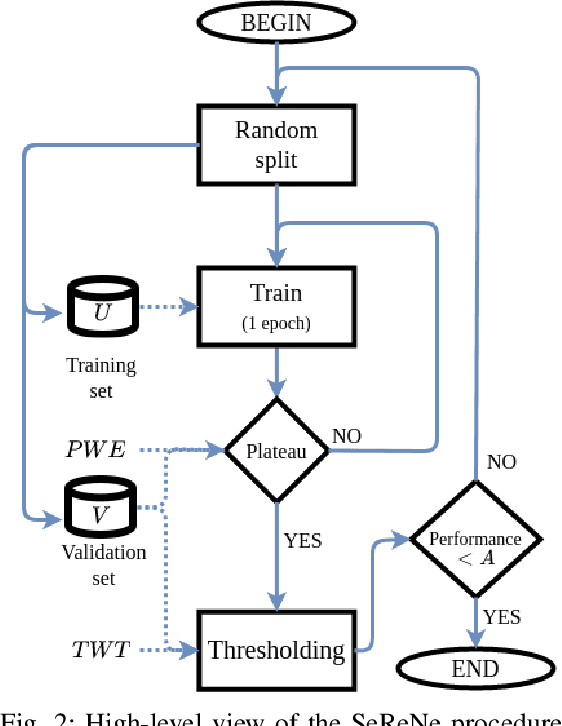

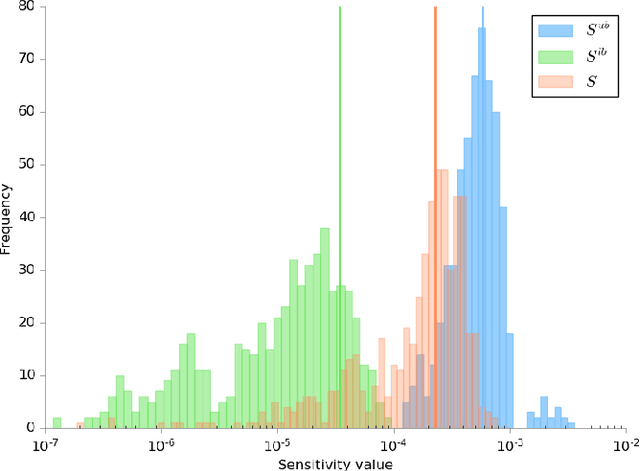

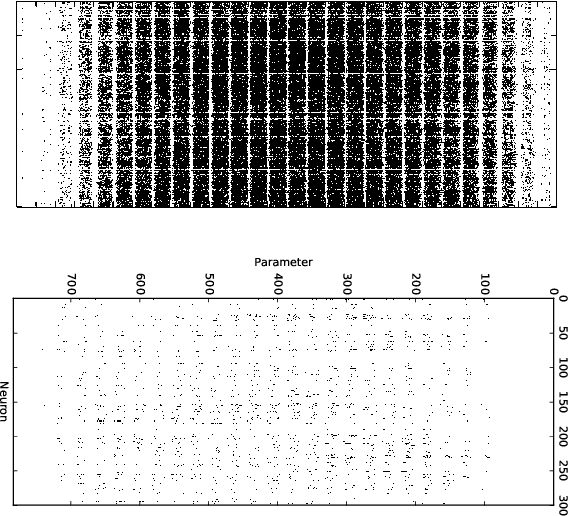

Abstract:Deep neural networks include millions of learnable parameters, making their deployment over resource-constrained devices problematic. SeReNe (Sensitivity-based Regularization of Neurons) is a method for learning sparse topologies with a structure, exploiting neural sensitivity as a regularizer. We define the sensitivity of a neuron as the variation of the network output with respect to the variation of the activity of the neuron. The lower the sensitivity of a neuron, the less the network output is perturbed if the neuron output changes. By including the neuron sensitivity in the cost function as a regularization term, we areable to prune neurons with low sensitivity. As entire neurons are pruned rather then single parameters, practical network footprint reduction becomes possible. Our experimental results on multiple network architectures and datasets yield competitive compression ratios with respect to state-of-the-art references.

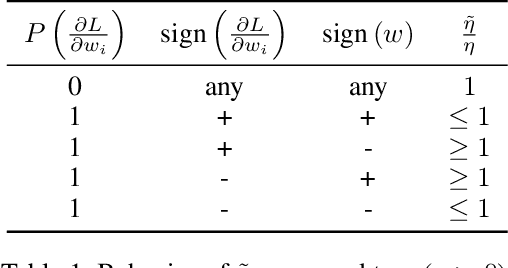

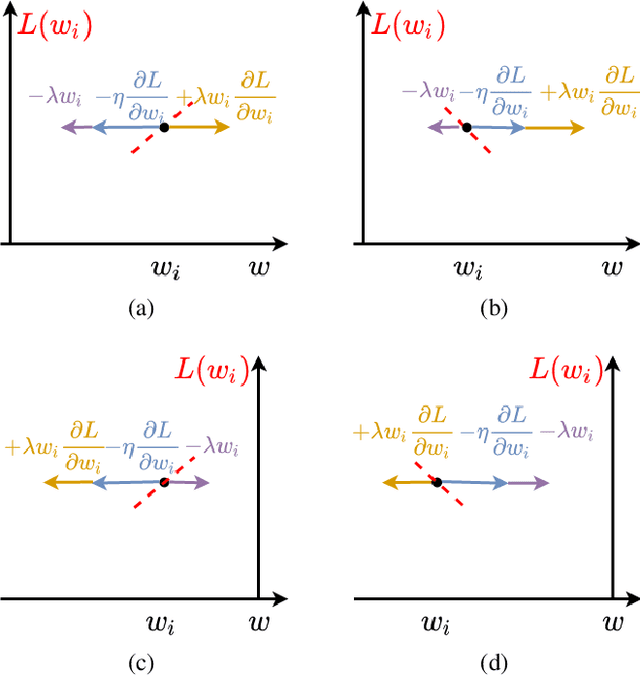

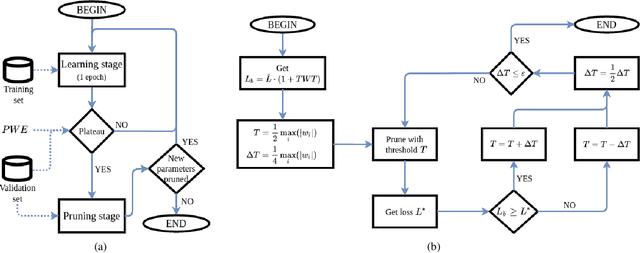

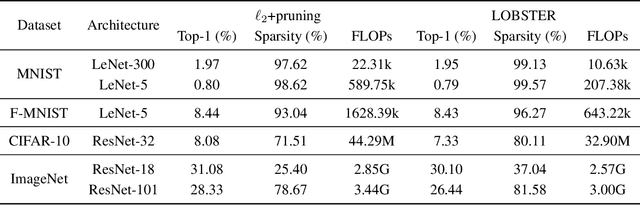

LOss-Based SensiTivity rEgulaRization: towards deep sparse neural networks

Nov 16, 2020

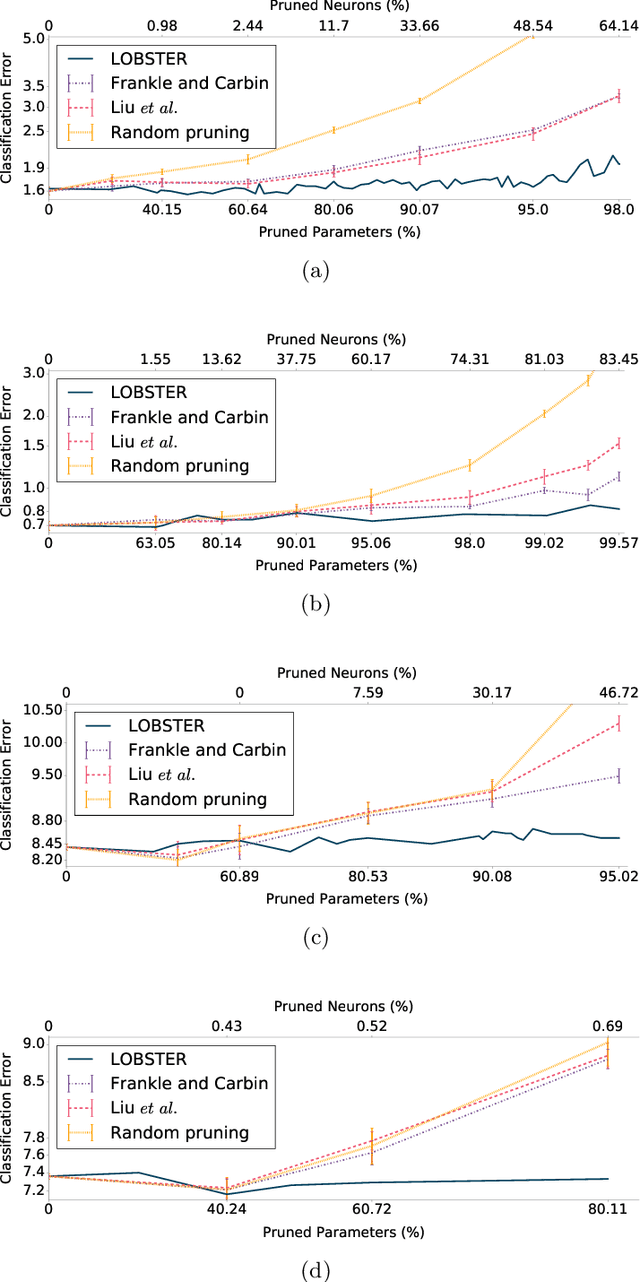

Abstract:LOBSTER (LOss-Based SensiTivity rEgulaRization) is a method for training neural networks having a sparse topology. Let the sensitivity of a network parameter be the variation of the loss function with respect to the variation of the parameter. Parameters with low sensitivity, i.e. having little impact on the loss when perturbed, are shrunk and then pruned to sparsify the network. Our method allows to train a network from scratch, i.e. without preliminary learning or rewinding. Experiments on multiple architectures and datasets show competitive compression ratios with minimal computational overhead.

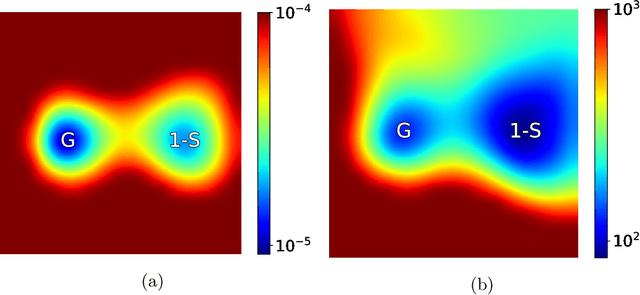

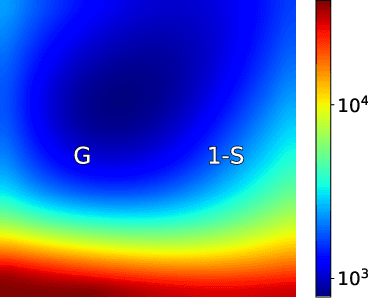

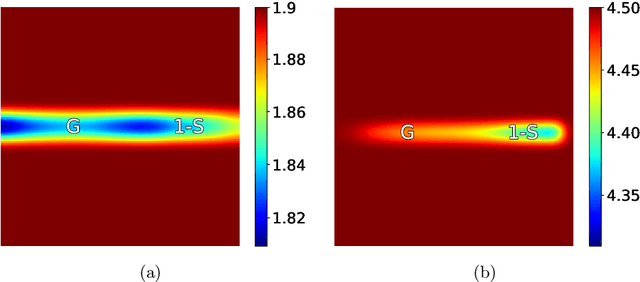

Pruning artificial neural networks: a way to find well-generalizing, high-entropy sharp minima

Apr 30, 2020

Abstract:Recently, a race towards the simplification of deep networks has begun, showing that it is effectively possible to reduce the size of these models with minimal or no performance loss. However, there is a general lack in understanding why these pruning strategies are effective. In this work, we are going to compare and analyze pruned solutions with two different pruning approaches, one-shot and gradual, showing the higher effectiveness of the latter. In particular, we find that gradual pruning allows access to narrow, well-generalizing minima, which are typically ignored when using one-shot approaches. In this work we also propose PSP-entropy, a measure to understand how a given neuron correlates to some specific learned classes. Interestingly, we observe that the features extracted by iteratively-pruned models are less correlated to specific classes, potentially making these models a better fit in transfer learning approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge