Alice X. Zheng

Gradient Regularized Budgeted Boosting

Jan 27, 2019

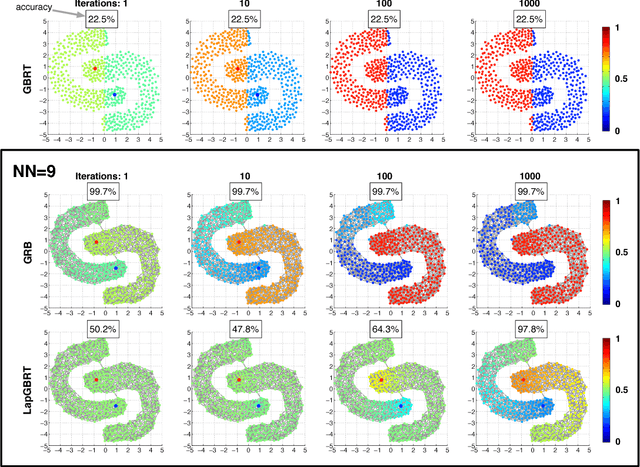

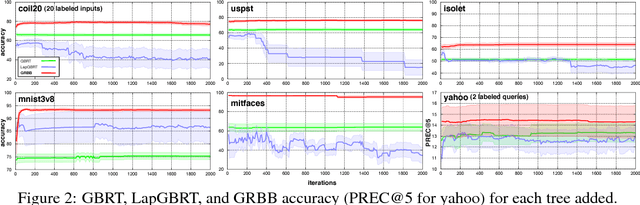

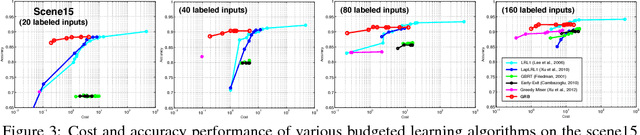

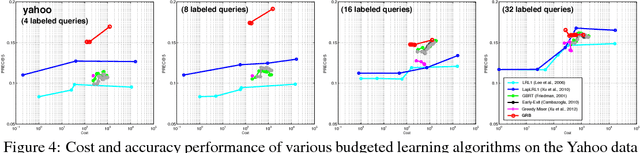

Abstract: As machine learning transitions increasingly towards real-world applications, controlling the test-time cost of algorithms becomes more and more crucial. Recent algorithms such as Greedy Miser and Speedboost incorporate test-time budget constraints into the training procedure and learn classifiers that provably stay within budget (in expectation). So far, however, these algorithms have been limited to the supervised learning scenario, where sufficient amounts of labeled data are available. In this paper we investigate the common scenario where labeled data is scarce but unlabeled data is available in abundance. We propose an algorithm that leverages the unlabeled data (through Laplace smoothing) and learns classifiers with budget constraints. Our model, based on gradient boosted regression trees (GBRT), is, to our knowledge, the first algorithm for semi-supervised budgeted learning.
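
The sketch below shows one way unlabeled data can enter a boosting loop. It assumes that "Laplace smoothing" takes the common form of a graph-Laplacian penalty over labeled and unlabeled points. Under that assumption, each round fits a regression stump to the negative gradient of 0.5·Σ_labeled (f_i − y_i)² + 0.5·μ·fᵀLf, where L = D − W. The graph W, the weight μ, the single-feature stumps, and all function names are hypothetical illustration choices. The feature-cost and budget terms of the actual method are omitted entirely.

```python
from typing import List, Optional, Tuple

Matrix = List[List[float]]


def objective(f: List[float], y: List[Optional[float]], W: Matrix, mu: float) -> float:
    """0.5 * squared error on labeled points + 0.5 * mu * f^T L f, with L = D - W."""
    n = len(f)
    fit = 0.5 * sum((f[i] - y[i]) ** 2 for i in range(n) if y[i] is not None)
    # For symmetric W: f^T L f = 0.5 * sum_{i,j} W_ij (f_i - f_j)^2
    smooth = 0.5 * sum(W[i][j] * (f[i] - f[j]) ** 2 for i in range(n) for j in range(n))
    return fit + 0.5 * mu * smooth


def pseudo_residuals(f: List[float], y: List[Optional[float]], W: Matrix, mu: float) -> List[float]:
    """Negative gradient of `objective` with respect to the predictions f."""
    n = len(f)
    residuals = []
    for k in range(n):
        # Squared-error term contributes only for labeled points (y[k] is None if unlabeled).
        grad = f[k] - y[k] if y[k] is not None else 0.0
        # Laplacian term: mu * (L f)_k = mu * sum_j W_kj (f_k - f_j); pulls f_k toward neighbours.
        grad += mu * sum(W[k][j] * (f[k] - f[j]) for j in range(n))
        residuals.append(-grad)
    return residuals


def fit_stump(x: List[float], r: List[float]) -> Tuple[float, float, float]:
    """Least-squares stump on one feature: predict left value if x <= t, else right value."""
    mean = sum(r) / len(r)
    best = (float("inf"), x[0], mean, mean)  # (sse, threshold, left value, right value)
    values = sorted(set(x))
    for a, b in zip(values, values[1:]):
        t = (a + b) / 2
        left = [ri for xi, ri in zip(x, r) if xi <= t]
        right = [ri for xi, ri in zip(x, r) if xi > t]
        lv, rv = sum(left) / len(left), sum(right) / len(right)
        sse = sum((v - lv) ** 2 for v in left) + sum((v - rv) ** 2 for v in right)
        if sse < best[0]:
            best = (sse, t, lv, rv)
    return best[1], best[2], best[3]


def boost(x: List[float], y: List[Optional[float]], W: Matrix,
          mu: float = 0.5, rounds: int = 50, lr: float = 0.3) -> List[float]:
    """Gradient boosting with stumps on the Laplacian-regularized objective."""
    f = [0.0] * len(x)
    for _ in range(rounds):
        r = pseudo_residuals(f, y, W, mu)
        t, lv, rv = fit_stump(x, r)
        f = [fi + lr * (lv if xi <= t else rv) for fi, xi in zip(f, x)]
    return f


x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
y = [0.0, None, None, None, None, 1.0]  # only the two endpoints are labeled
W = [[1.0 if abs(i - j) == 1 else 0.0 for j in range(6)] for i in range(6)]  # chain graph
f = boost(x, y, W)
print([round(v, 2) for v in f])
```

Unlabeled points enter only through the Laplacian term. Their pseudo-residuals are therefore nonzero only when their predictions disagree with their graph neighbours, and that disagreement is what lets the unlabeled data shape the trees.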

Gradient Boosted Feature Selection

Jan 13, 2019

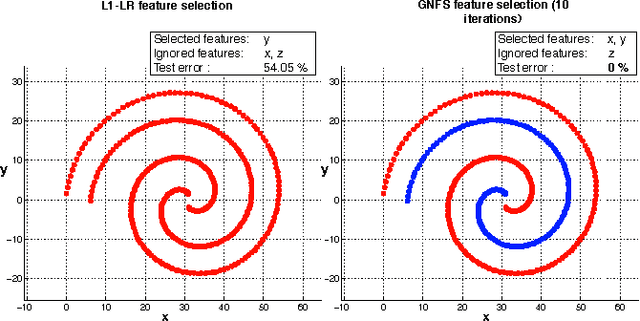

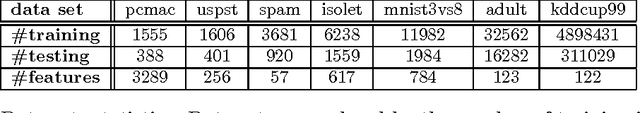

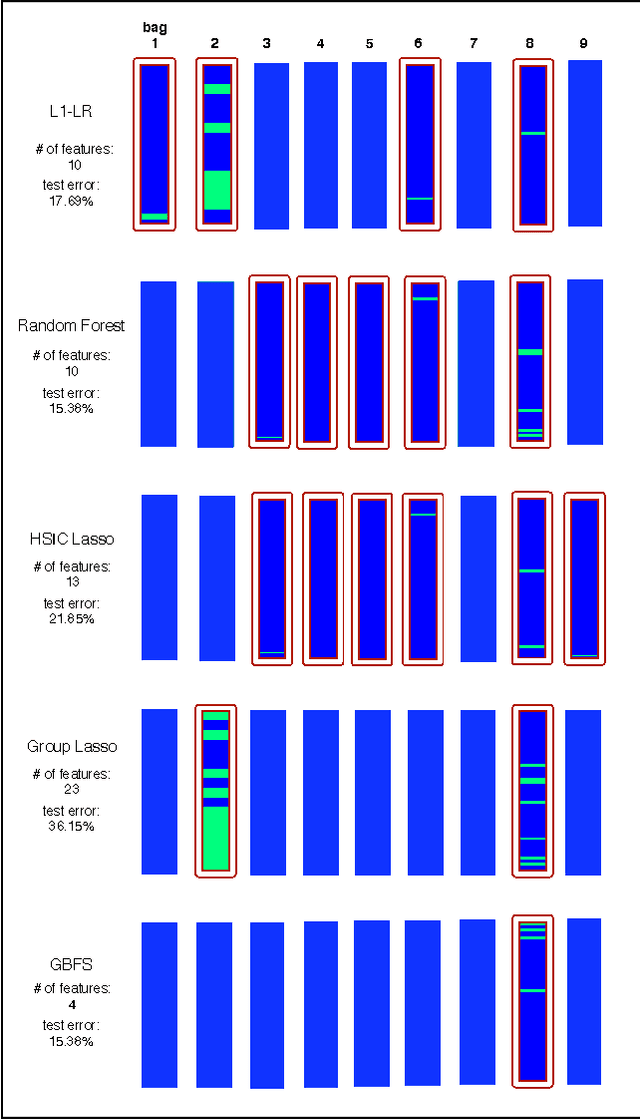

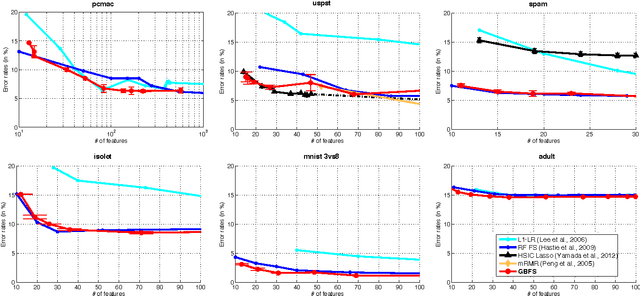

Abstract: A feature selection algorithm should ideally satisfy four conditions: reliably extract relevant features; identify non-linear feature interactions; scale linearly with the number of features and dimensions; and allow the incorporation of known sparsity structure. In this work we propose a novel feature selection algorithm, Gradient Boosted Feature Selection (GBFS), which satisfies all four of these requirements. The algorithm is flexible, scalable, and surprisingly straightforward to implement, as it is based on a modification of Gradient Boosted Trees. We evaluate GBFS on several real-world data sets and show that it matches or outperforms other state-of-the-art feature selection algorithms, while scaling to larger data sets and naturally allowing for domain-specific side information.
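
The following toy sketch illustrates how a boosted-tree learner can double as a feature selector. It assumes the modification is a one-time penalty λ charged the first time a feature is used for a split, so that features already selected can be reused for free. It uses depth-1 trees (stumps) and squared loss. The names `gbfs_sketch` and `best_split` and the parameter `lam` are hypothetical, and the code does not reproduce the paper's implementation.

```python
from typing import List, Optional, Set, Tuple

Split = Tuple[float, int, float, float, float]  # (score, feature, threshold, left value, right value)


def best_split(X: List[List[float]], r: List[float], selected: Set[int], lam: float) -> Optional[Split]:
    """Best stump over all features, scored as SSE reduction minus lam if the feature is new."""
    n, d = len(X), len(X[0])
    mean = sum(r) / n
    base_sse = sum((ri - mean) ** 2 for ri in r)
    best: Optional[Split] = None
    for j in range(d):
        values = sorted(set(row[j] for row in X))
        for a, b in zip(values, values[1:]):
            t = (a + b) / 2
            left = [ri for row, ri in zip(X, r) if row[j] <= t]
            right = [ri for row, ri in zip(X, r) if row[j] > t]
            lv, rv = sum(left) / len(left), sum(right) / len(right)
            sse = sum((v - lv) ** 2 for v in left) + sum((v - rv) ** 2 for v in right)
            # Using a feature for the first time costs lam; reusing a selected feature is free.
            score = (base_sse - sse) - (0.0 if j in selected else lam)
            if best is None or score > best[0]:
                best = (score, j, t, lv, rv)
    return best


def gbfs_sketch(X: List[List[float]], y: List[float], lam: float = 1.0,
                rounds: int = 30, lr: float = 0.3) -> Tuple[Set[int], List[float]]:
    """Boost stumps under squared loss; return the selected feature set and fitted values."""
    f = [0.0] * len(X)
    selected: Set[int] = set()
    for _ in range(rounds):
        r = [yi - fi for yi, fi in zip(y, f)]  # negative gradient of 0.5 * (y - f)^2
        split = best_split(X, r, selected, lam)
        if split is None or split[0] <= 0:
            break  # no split is worth its gain minus the new-feature penalty
        _, j, t, lv, rv = split
        selected.add(j)
        f = [fi + lr * (lv if row[j] <= t else rv) for fi, row in zip(f, X)]
    return selected, f


X = [[0.0, 1.0, 7.0], [1.0, 0.0, 7.0], [2.0, 1.0, 7.0],
     [3.0, 0.0, 7.0], [4.0, 1.0, 7.0], [5.0, 0.0, 7.0]]
y = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]
selected, f = gbfs_sketch(X, y, lam=1.0)
print(sorted(selected))
```

In this toy data, feature 0 alone explains y, feature 1 is weakly correlated noise, and feature 2 is constant. With lam=1.0 only feature 0 ends up in `selected`, because the gain from splitting on feature 1 never exceeds its entry penalty.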

Efficient Test Selection in Active Diagnosis via Entropy Approximation

Jul 04, 2012

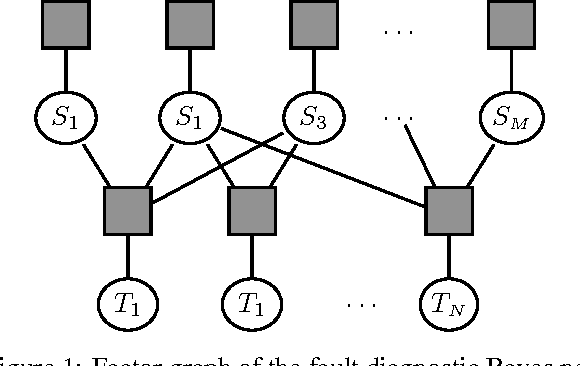

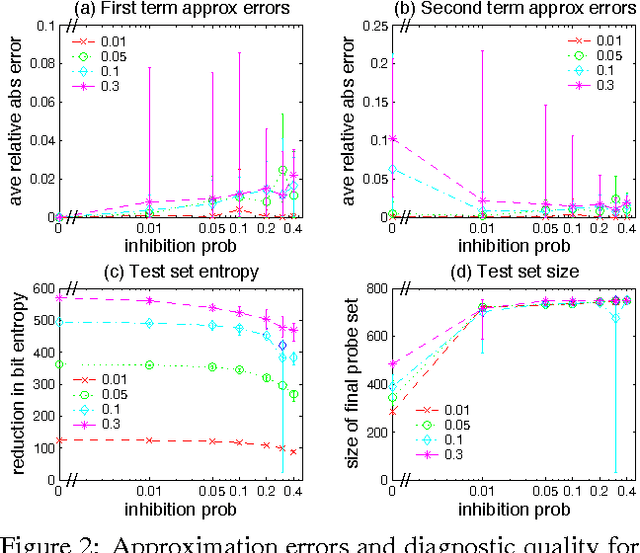

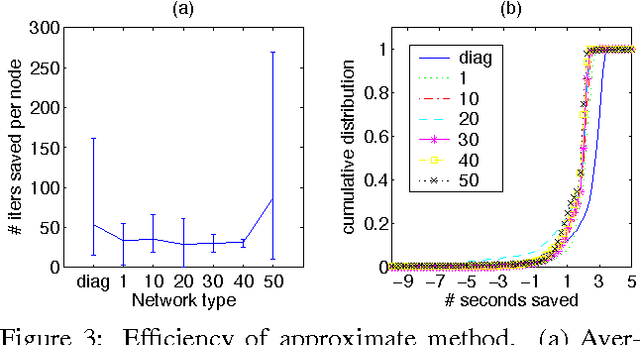

Abstract: We consider the problem of diagnosing faults in a system represented by a Bayesian network, where diagnosis corresponds to recovering the most likely state of unobserved nodes given the outcomes of tests (observed nodes). Finding an optimal subset of tests in this setting is intractable in general. We show that it is difficult even to compute the next most-informative test using greedy test selection, as it involves several entropy terms whose exact computation is intractable. We propose an approximate approach that utilizes the loopy belief propagation infrastructure to simultaneously compute approximations of marginal and conditional entropies on multiple subsets of nodes. We apply our method to fault diagnosis in computer networks, and show the algorithm to be very effective on realistic Internet-like topologies. We also provide theoretical justification for the greedy test selection approach, along with some performance guarantees.
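
A toy model makes the cost of exact greedy selection concrete. The sketch below assumes binary component faults with independent priors and noiseless tests that fail if and only if some covered component is faulty. Under that assumption, the information a set of tests carries about the faults equals the entropy of their joint outcome. The code computes that entropy exactly by enumerating all 2^n fault states, which is the kind of computation that becomes intractable for realistic networks. The model, function names, and numbers are hypothetical. This offline variant also ignores test outcomes already observed.

```python
import itertools
import math
from typing import Dict, List, Sequence, Tuple


def outcome_distribution(priors: Sequence[float], tests: Sequence[Sequence[int]],
                         chosen: Sequence[int]) -> Dict[Tuple[int, ...], float]:
    """Exact joint distribution of the chosen tests' outcomes, summing over all 2^n fault states.

    Assumed model: component c is faulty independently with probability priors[c], and
    test t reports 1 if and only if at least one component in tests[t] is faulty.
    """
    dist: Dict[Tuple[int, ...], float] = {}
    for state in itertools.product((0, 1), repeat=len(priors)):
        p = 1.0
        for faulty, q in zip(state, priors):
            p *= q if faulty else 1.0 - q
        outcome = tuple(int(any(state[c] for c in tests[t])) for t in chosen)
        dist[outcome] = dist.get(outcome, 0.0) + p
    return dist


def entropy(dist: Dict[Tuple[int, ...], float]) -> float:
    """Shannon entropy in bits."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def greedy_select(priors: Sequence[float], tests: Sequence[Sequence[int]], k: int) -> List[int]:
    """Greedily add the test that most increases I(faults; outcomes).

    With noiseless tests H(outcomes | faults) = 0, so the mutual information equals
    H(outcomes) and the best next test is the one maximizing the joint outcome entropy.
    """
    chosen: List[int] = []
    for _ in range(k):
        remaining = [t for t in range(len(tests)) if t not in chosen]
        if not remaining:
            break
        best = max(remaining,
                   key=lambda t: entropy(outcome_distribution(priors, tests, chosen + [t])))
        chosen.append(best)
    return chosen


priors = [0.5, 0.1, 0.1]               # hypothetical fault probabilities
tests = [[0], [1], [1, 2], [0, 1, 2]]  # components covered by each hypothetical test
print(greedy_select(priors, tests, 3))
```

With these numbers the first pick is test 0, whose outcome is a fair coin (1 bit). Test 3 then becomes redundant, since its outcome is fully determined by tests 0 and 2 together.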
