Ali Hooshmand

A Unified Structured Query Understanding Framework for Industrial Semantic Search

May 22, 2026

Abstract: Query understanding in large-scale industrial search systems is typically implemented as a cascade of disparate, task-specific components. While individually optimizable, this fragmented architecture incurs high maintenance overhead and results in inconsistent behaviors, particularly for long-tail queries. In this work, we propose and deploy a unified structured query understanding system that consolidates these heterogeneous functions into a single Small Language Model (SLM) that performs schema-constrained generation. To address the data bottlenecks inherent in unified modeling, we introduce Query Illuminator, a dual-purpose framework serving as: (i) a teacher model for high-quality auto-annotation and distillation, and (ii) a surrogate judge for scalable evaluation where human labels are scarce. We validate this approach through extensive offline and online tests within LinkedIn's Job Search system. Furthermore, we demonstrate the framework's horizontal extensibility through a cross-domain case study on People Search. The results show improved user engagement and reduced operational costs, achieved while satisfying strict low-latency serving constraints on limited GPU resources.

Semantic Search At LinkedIn

Feb 07, 2026

Abstract: Semantic search with large language models (LLMs) enables retrieval by meaning rather than keyword overlap, but scaling it requires major inference efficiency advances. We present LinkedIn's LLM-based semantic search framework for AI Job Search and AI People Search, combining an LLM relevance judge, embedding-based retrieval, and a compact Small Language Model trained via multi-teacher distillation to jointly optimize relevance and engagement. A prefill-oriented inference architecture co-designed with model pruning, context compression, and text-embedding hybrid interactions boosts ranking throughput by over 75x under a fixed latency constraint while preserving near-teacher-level NDCG, enabling one of the first production LLM-based ranking systems with efficiency comparable to traditional approaches and delivering significant gains in quality and user engagement.

Energy Predictive Models with Limited Data using Transfer Learning

Jun 06, 2019

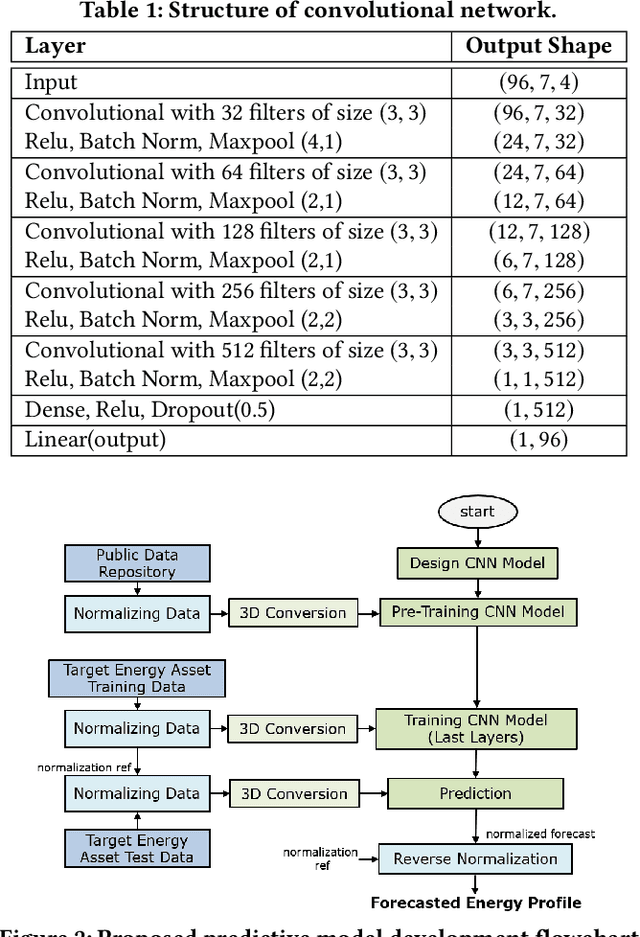

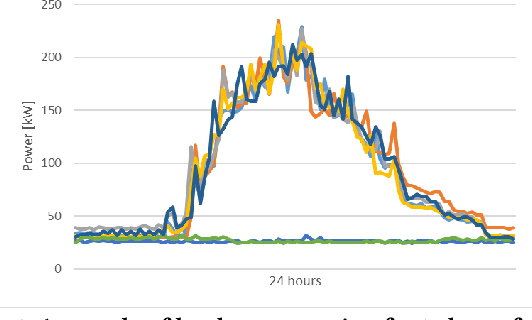

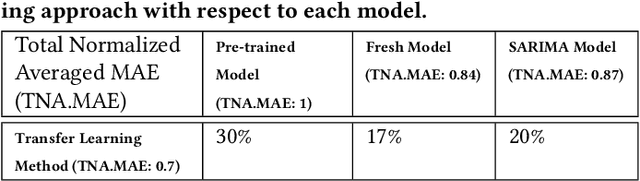

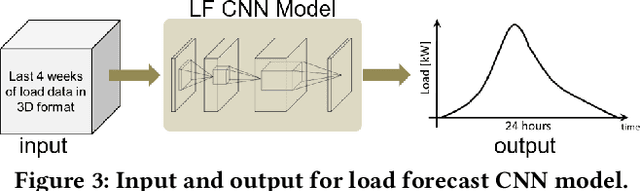

Abstract: In this paper, we consider the problem of developing predictive models with limited data for energy assets such as electricity loads and PV power generation. We specifically investigate the cases where the amount of historical data is not sufficient to effectively train the prediction model. We first develop an energy predictive model based on a convolutional neural network (CNN), which is well suited to capturing the intraday, daily, and weekly cyclostationary patterns, trends, and seasonalities in energy asset time series. A transfer learning strategy is then proposed to address the challenge of limited training data. We demonstrate our approach on a use case of daily electricity demand forecasting. We show that applying the transfer learning strategy to the CNN model results in significant improvement over existing forecasting methods.
