Alessio Gambi

Declarative Scenario-based Testing with RoadLogic

Mar 10, 2026

Abstract:Scenario-based testing is a key method for cost-effective and safe validation of autonomous vehicles (AVs). Existing approaches rely on imperative scenario definitions, requiring developers to manually enumerate numerous variants to achieve coverage. Declarative languages, such as OpenSCENARIO DSL (OS2), raise the abstraction level but lack systematic methods for instantiating concrete, specification-compliant scenarios as simulations. To our knowledge, no open-source solution currently provides this capability. We present RoadLogic, which bridges declarative OS2 specifications and executable simulations. It uses Answer Set Programming to generate abstract plans satisfying scenario constraints, motion planning to refine the plans into feasible trajectories, and specification-based monitoring to verify correctness. We evaluate RoadLogic on instantiating representative OS2 scenarios as simulations in the CommonRoad framework. Results show that RoadLogic consistently produces realistic, specification-satisfying simulations within minutes and captures diverse behavioral variants through parameter sampling, thus opening the door to systematic scenario-based testing for autonomous driving systems.

Foundation Models in Autonomous Driving: A Survey on Scenario Generation and Scenario Analysis

Jun 13, 2025

Abstract:For autonomous vehicles, safe navigation in complex environments depends on handling a broad range of diverse and rare driving scenarios. Simulation- and scenario-based testing have emerged as key approaches to development and validation of autonomous driving systems. Traditional scenario generation relies on rule-based systems, knowledge-driven models, and data-driven synthesis, often producing limited diversity and unrealistic safety-critical cases. With the emergence of foundation models, which represent a new generation of pre-trained, general-purpose AI models, developers can process heterogeneous inputs (e.g., natural language, sensor data, HD maps, and control actions), enabling the synthesis and interpretation of complex driving scenarios. In this paper, we conduct a survey about the application of foundation models for scenario generation and scenario analysis in autonomous driving (as of May 2025). Our survey presents a unified taxonomy that includes large language models, vision-language models, multimodal large language models, diffusion models, and world models for the generation and analysis of autonomous driving scenarios. In addition, we review the methodologies, open-source datasets, simulation platforms, and benchmark challenges, and we examine the evaluation metrics tailored explicitly to scenario generation and analysis. Finally, the survey concludes by highlighting the open challenges and research questions, and outlining promising future research directions. All reviewed papers are listed in a continuously maintained repository, which contains supplementary materials and is available at https://github.com/TUM-AVS/FM-for-Scenario-Generation-Analysis.

MultiDrive: A Co-Simulation Framework Bridging 2D and 3D Driving Simulation for AV Software Validation

May 20, 2025

Abstract:Scenario-based testing using simulations is a cornerstone of autonomous vehicle (AV) software validation. So far, developers have had to choose between low-fidelity 2D simulators to explore the scenario space efficiently, and high-fidelity 3D simulators to study relevant scenarios in more detail, thus reducing testing costs while mitigating the sim-to-real gap. This paper presents a novel framework that leverages multi-agent co-simulation and procedural scenario generation to support scenario-based testing across low- and high-fidelity simulators for the development of motion planning algorithms. Our framework limits the effort required to transition scenarios between simulators and automates experiment execution, trajectory analysis, and visualization. Experiments with a reference motion planner show that our framework uncovers discrepancies between the planner's intended and actual behavior, thus exposing weaknesses in planning assumptions under more realistic conditions. Our framework is available at: https://github.com/TUM-AVS/MultiDrive

DeepHyperion: Exploring the Feature Space of Deep Learning-Based Systems through Illumination Search

Jul 05, 2021

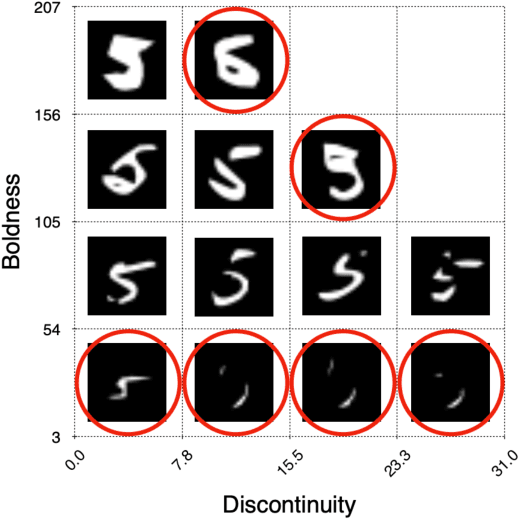

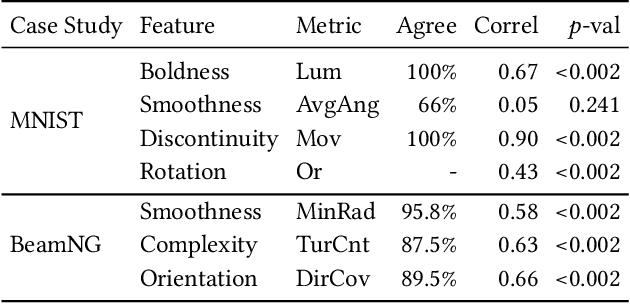

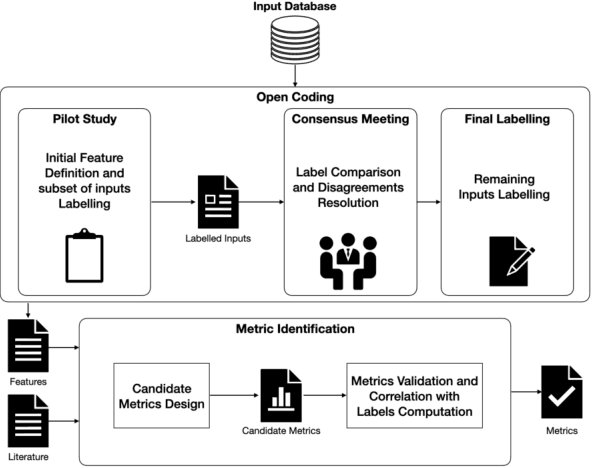

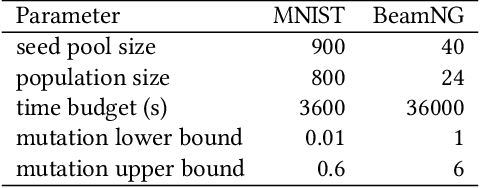

Abstract:Deep Learning (DL) has been successfully applied to a wide range of application domains, including safety-critical ones. Several DL testing approaches have been recently proposed in the literature but none of them aims to assess how different interpretable features of the generated inputs affect the system's behaviour. In this paper, we resort to Illumination Search to find the highest-performing test cases (i.e., misbehaving and closest to misbehaving), spread across the cells of a map representing the feature space of the system. We introduce a methodology that guides the users of our approach in the tasks of identifying and quantifying the dimensions of the feature space for a given domain. We developed DeepHyperion, a search-based tool for DL systems that illuminates, i.e., explores at large, the feature space, by providing developers with an interpretable feature map where automatically generated inputs are placed along with information about the exposed behaviours.
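The illumination idea in the abstract above can be illustrated with a minimal MAP-Elites-style sketch. This is not DeepHyperion's implementation: the `features`, `fitness`, and `mutate` functions, the 10x10 grid, and the 2-D toy inputs are all hypothetical stand-ins chosen only to show how an archive keeps the best-scoring input per feature-map cell.

```python
import random

GRID = 10  # assumed number of bins per feature dimension


def features(x):
    # Hypothetical feature descriptors: bin each coordinate into a grid cell.
    return tuple(min(int(v * GRID), GRID - 1) for v in x)


def fitness(x):
    # Hypothetical score: higher stands in for "closer to misbehaviour".
    return -((x[0] - 0.5) ** 2 + (x[1] - 0.5) ** 2)


def mutate(x, rng, sigma=0.1):
    # Gaussian perturbation, clamped to the valid input range [0, 1].
    return tuple(min(1.0, max(0.0, v + rng.gauss(0.0, sigma))) for v in x)


def illuminate(iterations=2000, warmup=50, seed=0):
    rng = random.Random(seed)
    archive = {}  # cell -> (score, input): the feature map
    for i in range(iterations):
        if archive and i >= warmup:
            # Pick an occupied cell at random and mutate its elite.
            _, parent = rng.choice(list(archive.values()))
            candidate = mutate(parent, rng)
        else:
            # Random initialisation phase.
            candidate = (rng.random(), rng.random())
        cell = features(candidate)
        score = fitness(candidate)
        # Keep the candidate only if its cell is empty or it beats the elite.
        if cell not in archive or score > archive[cell][0]:
            archive[cell] = (score, candidate)
    return archive


if __name__ == "__main__":
    feature_map = illuminate()
    print(f"filled cells: {len(feature_map)} of {GRID * GRID}")
```

In a real DL testing setting, `fitness` would be replaced by a measure of how close the system under test is to misbehaving, and `features` by interpretable, domain-specific properties of the generated input.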
