Alberto Ranavolo

GenGait: A Transformer-Based Model for Human Gait Anomaly Detection and Normative Twin Generation

Apr 02, 2026

Abstract: Gait analysis provides an objective characterization of locomotor function and is widely used to support diagnosis and rehabilitation monitoring across neurological and orthopedic disorders. Deep learning has been increasingly applied to this domain, yet most approaches rely on supervised classifiers trained on disease-labeled data, limiting generalization to heterogeneous pathological presentations. This work proposes a label-free framework for joint-level anomaly detection and kinematic correction based on a Transformer masked autoencoder trained exclusively on normative gait sequences from 150 adults, acquired with a markerless multi-camera motion-capture system. At inference, a two-pass procedure is applied to potentially pathological input sequences. First, it estimates joint inconsistency scores by occluding individual joints and measuring deviations from the learned normative prior. Then, it withholds the flagged joints from the encoder input and reconstructs the full skeleton from the remaining spatiotemporal context, yielding corrected kinematic trajectories at the flagged positions. Validation on 10 held-out normative participants, who mimicked seven simulated gait abnormalities, showed accurate localization of biomechanically inconsistent joints, a significant reduction in angular deviation across all analyzed joints with large effect sizes, and preservation of normative kinematics. The proposed approach enables interpretable, subject-specific localization of gait impairments without requiring disease labels. Video is available at https://youtu.be/Rcm3jqR5pN4.
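The two-pass inference procedure described in the abstract can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: `toy_reconstruct` is a hypothetical stand-in for the trained Transformer masked autoencoder (it fits a single amplitude scale from the visible joints to an assumed normative `template`), and the array layout `(frames, joints)`, the mean-absolute-deviation score, and the `threshold` value are all assumptions made for the example.

```python
import numpy as np

def toy_reconstruct(seq, masked, template):
    """Hypothetical stand-in for the trained masked autoencoder.

    Estimates one amplitude scale per visible joint against a normative
    template, takes the median (robust to a few abnormal joints), and
    predicts every joint, masked ones included, as scale * template.
    """
    visible = ~masked
    t_vis = template[:, visible]
    per_joint = np.sum(seq[:, visible] * t_vis, axis=0) / np.sum(t_vis ** 2, axis=0)
    return np.median(per_joint) * template

def inconsistency_scores(seq, template):
    """Pass 1: occlude one joint at a time and score its deviation."""
    n_joints = seq.shape[1]
    scores = np.empty(n_joints)
    for j in range(n_joints):
        masked = np.zeros(n_joints, dtype=bool)
        masked[j] = True
        recon = toy_reconstruct(seq, masked, template)
        # Assumed score: mean absolute angular deviation over the sequence.
        scores[j] = np.mean(np.abs(recon[:, j] - seq[:, j]))
    return scores

def correct_sequence(seq, template, threshold):
    """Pass 2: withhold flagged joints and reconstruct them from the rest."""
    scores = inconsistency_scores(seq, template)
    flagged = scores > threshold
    recon = toy_reconstruct(seq, flagged, template)
    corrected = seq.copy()
    corrected[:, flagged] = recon[:, flagged]  # only flagged joints change
    return scores, flagged, corrected

# Synthetic example: 4 joint angles (degrees) over 100 frames.
t = np.linspace(0.0, 2.0 * np.pi, 100)
phases = np.array([0.0, 0.5, 1.0, 1.5])
template = 30.0 * np.sin(t[:, None] + phases[None, :])
seq = 0.9 * template          # subject walks with slightly smaller amplitude
seq[:, 2] = 5.0               # joint 2 is held stiff (simulated abnormality)

scores, flagged, corrected = correct_sequence(seq, template, threshold=5.0)
print(flagged)
```

In this toy setting only the stiff joint is flagged, and its corrected trajectory follows the subject's own amplitude rather than the raw template, which loosely mirrors the subject-specific correction the abstract describes. The sketch does not handle the case where every joint is flagged, since no visible context would remain.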

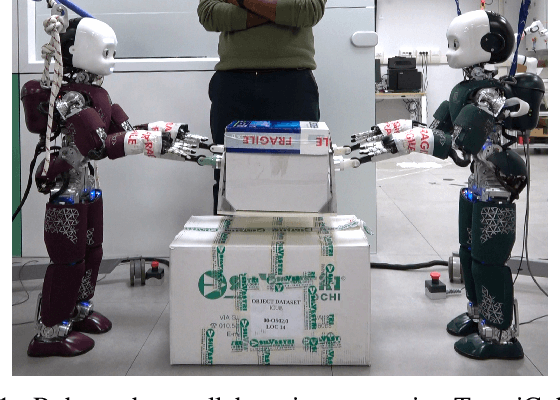

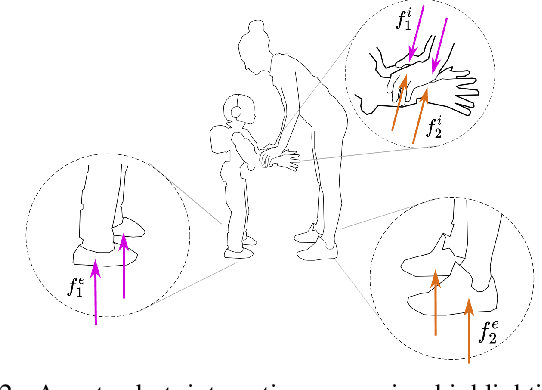

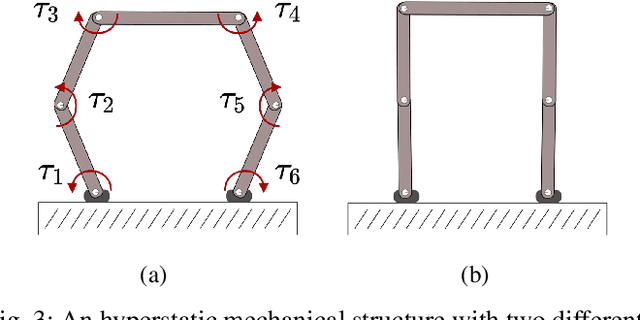

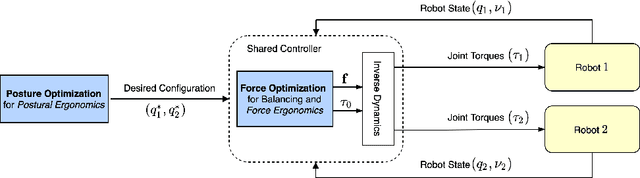

Shared Control of Robot-Robot Collaborative Lifting with Agent Postural and Force Ergonomic Optimization

Apr 28, 2021

Abstract: Humans show specialized strategies for efficient collaboration. Transferring similar strategies to humanoid robots can improve their capability to interact with other agents, leading the way to complex collaborative scenarios with multiple agents acting on a shared environment. In this paper, we present a control framework for robot-robot collaborative lifting. The proposed shared controller accounts for the joint action of both robots through a centralized controller that communicates with them and solves the whole-system optimization. Efficient collaboration is ensured by taking into account the ergonomic requirements of the robots through the optimization of posture and contact forces. The framework is validated in an experimental scenario with two iCub humanoid robots performing different payload lifting sequences.
