"Time": models, code, and papers

Real-time detection of uncalibrated sensors using Neural Networks

Feb 02, 2021

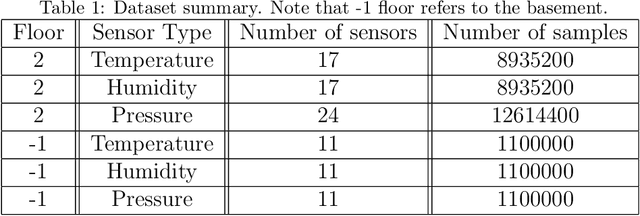

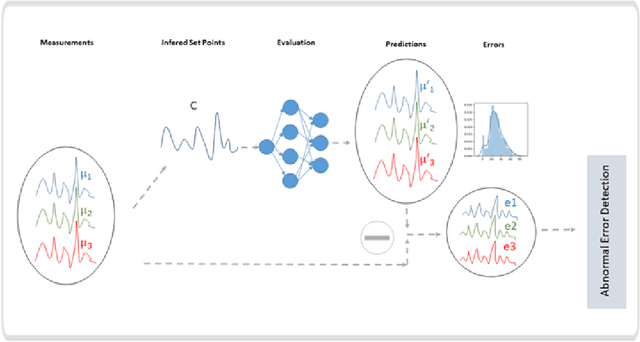

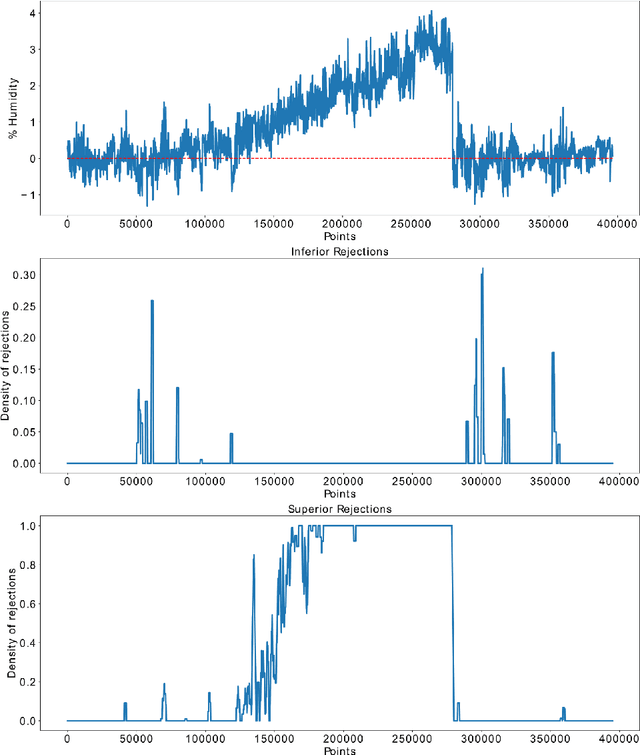

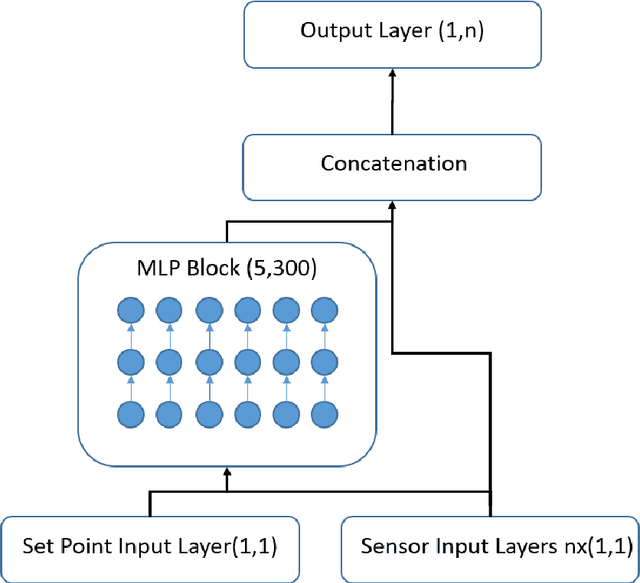

Nowadays, sensors play a major role in many contexts, such as science, industry and daily life, which benefit from their use. However, the information they retrieve must be reliable. Anomalies in sensor behavior can have critical consequences, such as ruining a scientific project or jeopardizing product quality on industrial production lines. One of the more subtle kinds of anomaly is uncalibration. An uncalibration is said to take place when the sensor is not adjusted or standardized by calibration against a ground-truth value. In this work, an online, machine-learning-based uncalibration detector for temperature, humidity and pressure sensors was developed. Its main component is an Artificial Neural Network that learns the behavior of the sensors under calibrated conditions. Once trained and deployed, it detects uncalibrations as they take place. The results show that the proposed solution is able to detect uncalibrations for deviations of 0.25 degrees, 1% RH and 1.5 Pa in temperature, humidity and pressure, respectively. The solution can be adapted to different contexts by means of transfer learning, which allows new sensors to be added, the model to be deployed in new environments, and the model to be retrained with minimal amounts of data.
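The detection idea can be illustrated as a residual test: a model fitted on calibrated data predicts a sensor's reading, and a persistent offset between prediction and measurement flags an uncalibration. This is a minimal sketch, assuming a least-squares linear predictor in place of the paper's neural network, a hypothetical reference sensor as input, and a hypothetical threshold of 0.25 (echoing the temperature deviation quoted above); it is not the authors' implementation.

```python
from statistics import mean

def fit_linear(xs, ys):
    """Least-squares fit y ~ a*x + b on calibrated data (stand-in for the ANN)."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, my - a * mx

def detect_uncalibration(model, xs, ys, threshold=0.25):
    """Flag an uncalibration when the mean residual over a window exceeds the threshold."""
    a, b = model
    residuals = [y - (a * x + b) for x, y in zip(xs, ys)]
    return abs(mean(residuals)) > threshold

# Calibrated training data: the target sensor tracks a hypothetical reference sensor.
ref = [20.0, 20.5, 21.0, 21.5, 22.0, 22.5]
model = fit_linear(ref, [r + 0.1 for r in ref])

window = [23.0, 23.2, 23.4]
print(detect_uncalibration(model, window, [r + 0.1 for r in window]))  # False
print(detect_uncalibration(model, window, [r + 0.5 for r in window]))  # True (0.4 drift)
```

Averaging residuals over a window, rather than testing single readings, keeps measurement noise from triggering false alarms; the window length is another assumption of this sketch.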

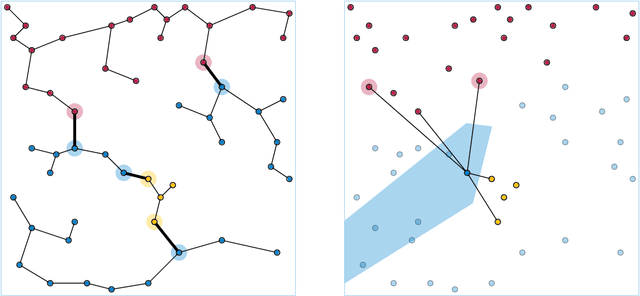

Sliding window strategy for convolutional spike sorting with Lasso: Algorithm, theoretical guarantees and complexity

Oct 29, 2021

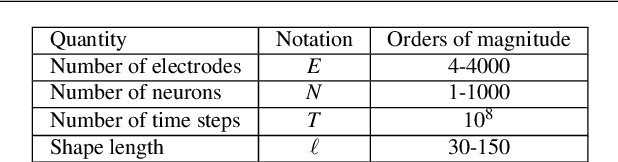

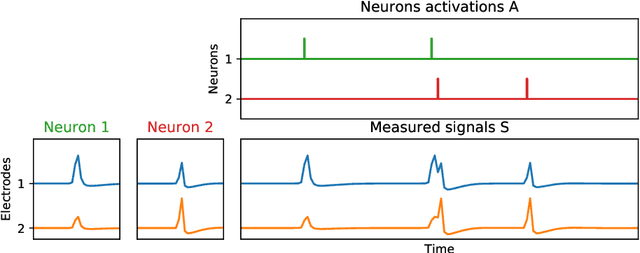

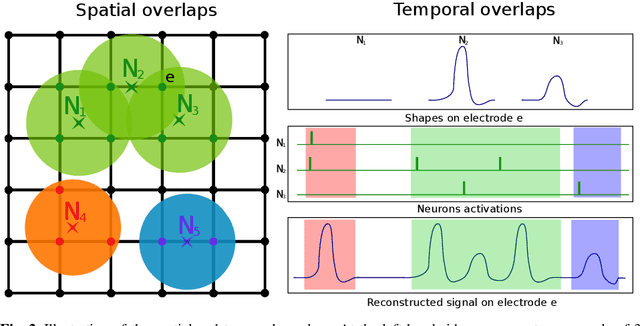

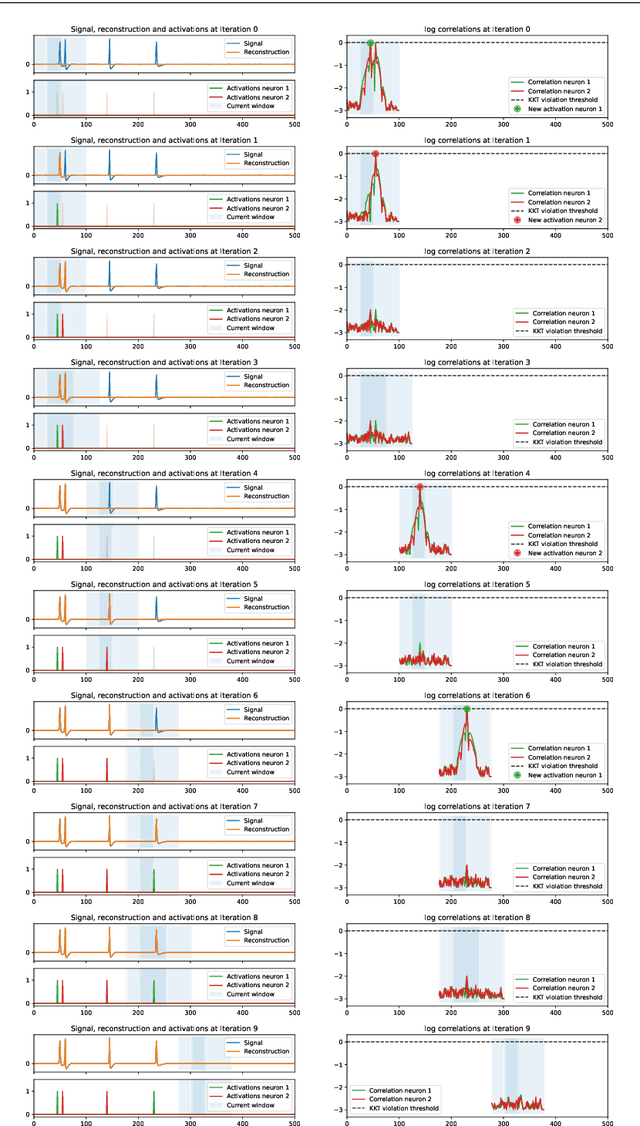

We present a fast algorithm for solving the Lasso for convolutional models in high dimension, with a particular focus on the problem of spike sorting in neuroscience. Making use of biological properties of neurons, we explain how the particular structure of the problem allows several optimizations, leading to an algorithm whose time complexity grows linearly with the size of the recorded signal and which can be run online. Moreover, the spatial separability of the initial problem allows it to be broken into subproblems, further reducing the complexity and making the algorithm applicable to the latest recording devices, which comprise a large number of sensors. We provide several mathematical results: the size and numerical complexity of the subproblems can be estimated using percolation theory. We also show, under reasonable assumptions, that the Lasso estimator retrieves the true support with high probability. Finally, we give the theoretical time complexity of the algorithm. Numerical simulations illustrate the efficiency of our approach.
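The convolutional Lasso in question is $\min_z \tfrac12\|y - w * z\|_2^2 + \lambda\|z\|_1$, where $w$ is a spike waveform and $z$ marks spike times and amplitudes. As a minimal sketch, the generic proximal-gradient solver ISTA (gradient step plus soft-thresholding) is shown below on a single channel; it is a swapped-in textbook method, not the paper's sliding-window algorithm, and the waveform, signal and $\lambda$ are hypothetical.

```python
def convolve(z, w):
    """Full 1-D convolution: a spike of amplitude z[t] adds z[t] * w starting at time t."""
    out = [0.0] * (len(z) + len(w) - 1)
    for t, zt in enumerate(z):
        if zt:
            for k, wk in enumerate(w):
                out[t + k] += zt * wk
    return out

def correlate(r, w, n):
    """Adjoint of convolve, evaluated at the n possible spike positions."""
    return [sum(r[t + k] * wk for k, wk in enumerate(w)) for t in range(n)]

def soft_threshold(v, tau):
    return max(v - tau, 0.0) if v >= 0 else min(v + tau, 0.0)

def ista_conv_lasso(y, w, lam, n_iter=500):
    """Minimise 0.5*||y - w*z||^2 + lam*||z||_1 by ISTA."""
    n = len(y) - len(w) + 1
    step = 1.0 / sum(abs(wk) for wk in w) ** 2  # ||w||_1^2 bounds the Lipschitz constant
    z = [0.0] * n
    for _ in range(n_iter):
        residual = [yi - ri for yi, ri in zip(y, convolve(z, w))]
        neg_grad = correlate(residual, w, n)  # minus the gradient of the data term
        z = [soft_threshold(zt + step * g, step * lam) for zt, g in zip(z, neg_grad)]
    return z

w = [1.0, -0.5, 0.25]                    # hypothetical spike waveform
true_z = [0, 0, 2.0, 0, 0, 0, 1.5, 0, 0, 0]
y = convolve(true_z, w)                  # noiseless recording
z_hat = ista_conv_lasso(y, w, lam=0.05)
print([t for t, v in enumerate(z_hat) if abs(v) > 0.1])  # [2, 6]
```

Each iteration touches every sample of the signal, so its cost is linear in the signal length; the paper's contribution is to exploit locality and spatial separability far more aggressively than this naive loop does.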

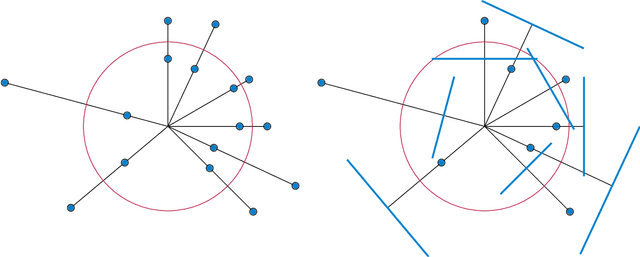

Finding Relevant Points for Nearest-Neighbor Classification

Oct 12, 2021

In nearest-neighbor classification problems, a set of $d$-dimensional training points is given, each with a known classification, and is used to infer the unknown classification of other points by assigning each one the classification of its nearest training point. A training point is relevant if its omission from the training set would change the outcome of some of these inferences. We provide a simple algorithm for thinning a training set down to its subset of relevant points, using as subroutines algorithms for finding the minimum spanning tree of a set of points and for finding the extreme points (convex hull vertices) of a set of points. The time bounds for our algorithm, in any constant dimension $d\ge 3$, improve on a previous algorithm for the same problem by Clarkson (FOCS 1994).
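The definition of relevance is easiest to see in one dimension, where each training point's Voronoi cell borders only its left and right neighbours in sorted order: removing a point hands its cell to those neighbours, so some inference changes exactly when a neighbour has a different label. This is a minimal sketch of that 1-D special case only, assuming distinct coordinates; it is not the paper's algorithm for $d \ge 3$, which relies on minimum-spanning-tree and extreme-point subroutines.

```python
def relevant_points_1d(points):
    """Return the relevant (coordinate, label) pairs of a 1-D nearest-neighbour training set.

    Assumes distinct coordinates. A point is relevant iff one of its sorted-order
    neighbours (its only Voronoi neighbours in 1-D) carries a different label.
    """
    pts = sorted(points)
    relevant = []
    for i, (x, label) in enumerate(pts):
        neighbours = pts[max(i - 1, 0):i] + pts[i + 1:i + 2]
        if any(other != label for _, other in neighbours):
            relevant.append((x, label))
    return relevant

training = [(0.0, "a"), (1.0, "a"), (2.0, "a"), (3.0, "b"), (4.0, "b"), (5.0, "a")]
print(relevant_points_1d(training))
# [(2.0, 'a'), (3.0, 'b'), (4.0, 'b'), (5.0, 'a')]
```

Points 0.0 and 1.0 are surrounded only by same-label points, so deleting them leaves every classification unchanged.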

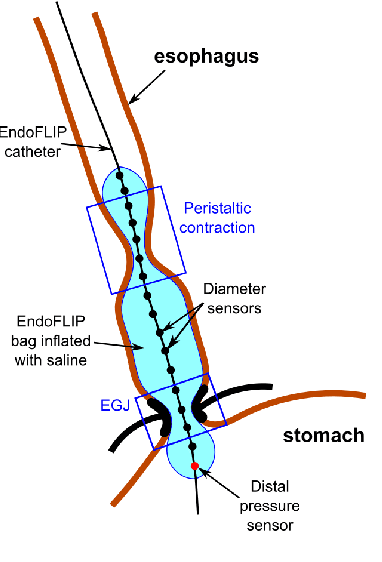

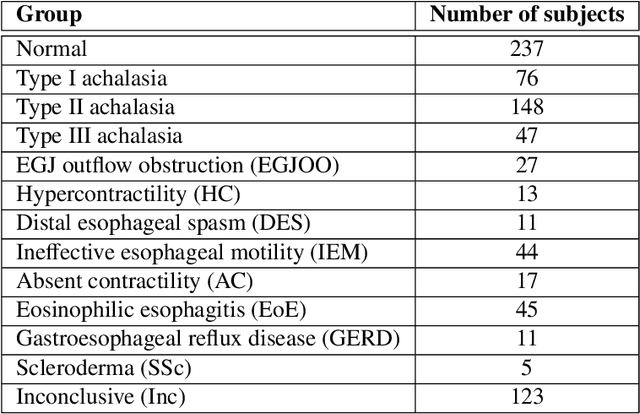

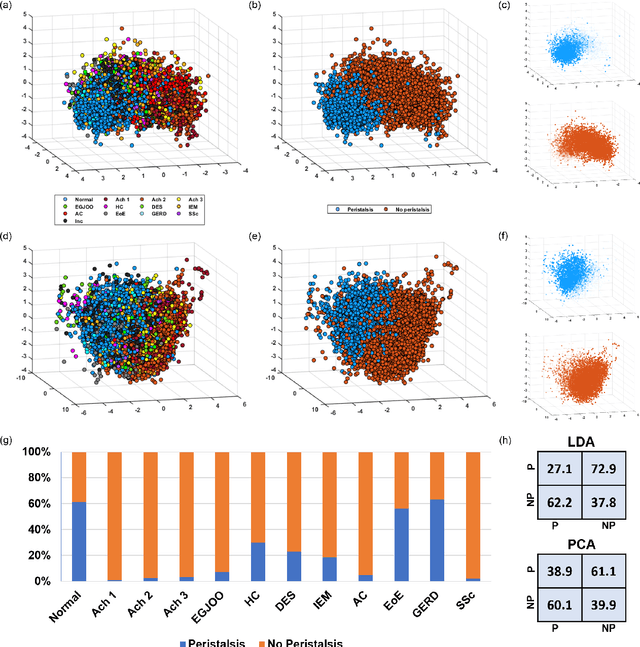

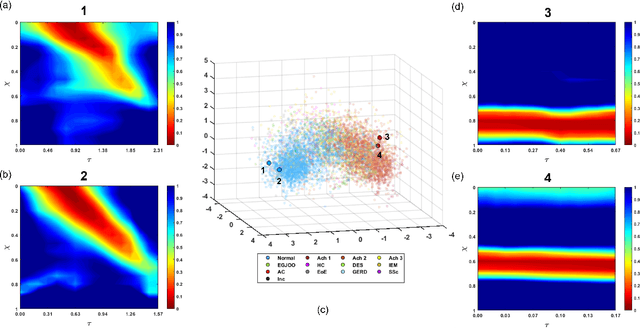

Esophageal virtual disease landscape using mechanics-informed machine learning

Nov 19, 2021

The pathogenesis of esophageal disorders is related to the mechanics of the esophageal wall. Therefore, to understand the fundamental mechanisms behind various esophageal disorders, it is crucial to map parameters based on esophageal wall mechanics onto the physiological and pathophysiological conditions corresponding to altered bolus transit and supraphysiologic intrabolus pressure (IBP). In this work, we present a hybrid framework that combines fluid mechanics and machine learning to identify the underlying physics of the various esophageal disorders and map them onto a parameter space which we call the virtual disease landscape (VDL). A one-dimensional inverse model processes the output of an esophageal diagnostic device called the endoscopic functional lumen imaging probe (EndoFLIP) to estimate the mechanical "health" of the esophagus by predicting a set of mechanics-based parameters such as esophageal wall stiffness, muscle contraction pattern and active relaxation of the esophageal walls. The mechanics-based parameters were then used to train a neural network consisting of a variational autoencoder (VAE), which generates a latent space, and a side network that predicts mechanical work metrics for estimating esophagogastric junction motility. The latent vectors, together with a set of discrete mechanics-based parameters, define the VDL and form clusters corresponding to the various esophageal disorders. The VDL not only distinguishes different disorders but can also be used to predict disease progression over time. Finally, we demonstrate the clinical applicability of this framework for estimating the effectiveness of a treatment and tracking patient condition after treatment.
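The VDL is built on the latent space of a variational autoencoder, in which each input is encoded as a Gaussian with mean $\mu$ and log-variance $\log\sigma^2$. As a reminder of the standard VAE mechanics, and not a reproduction of the authors' network or side network, the sketch below shows the reparameterization trick and the closed-form KL term that regularizes the latent space; the two-dimensional latent vector and its values are hypothetical.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1).

    Writing the sample this way is what lets an autodiff framework pass
    gradients to mu and log_var; plain Python only evaluates it.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(sigma^2)) || N(0, I)) = 0.5 * sum(mu^2 + sigma^2 - log sigma^2 - 1)."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))

rng = random.Random(0)
mu, log_var = [0.3, -1.2], [-0.5, 0.1]   # hypothetical encoder output for one recording
z = reparameterize(mu, log_var, rng)      # a point in the latent space
print(len(z), kl_to_standard_normal([0.0, 0.0], [0.0, 0.0]))  # 2 0.0
```

The KL term pulls encodings toward a standard normal, which keeps the latent space smooth enough for nearby points to be meaningful; that property is what makes clustering disorders in it plausible.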

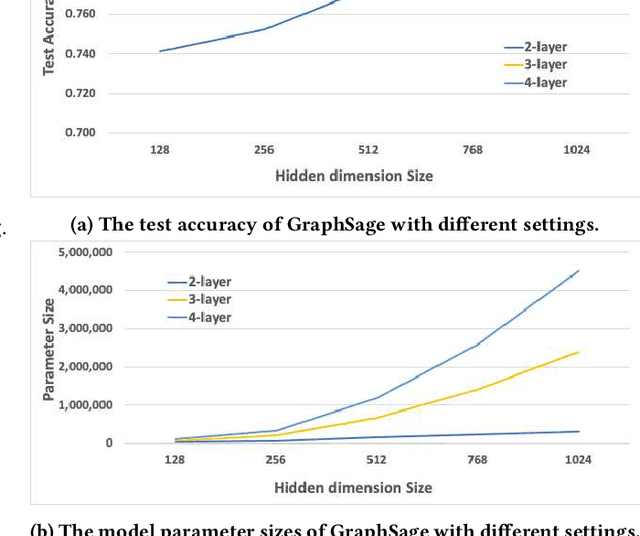

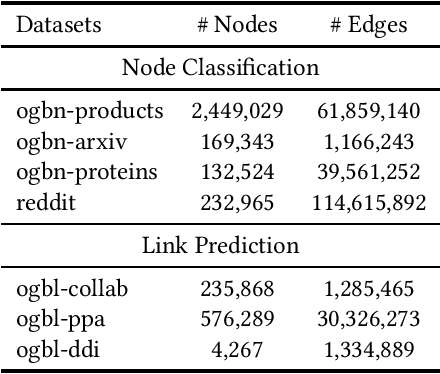

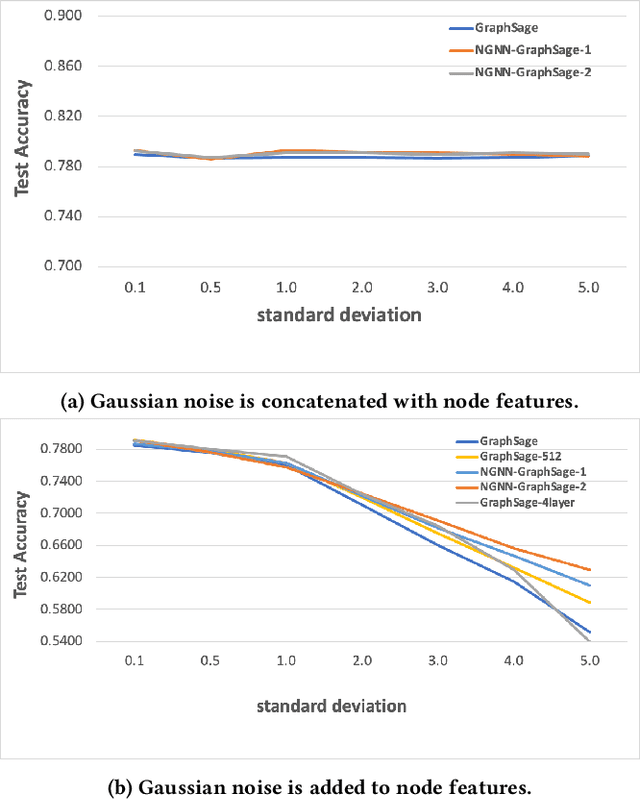

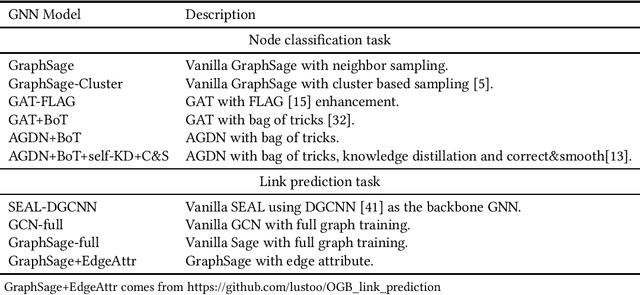

Network In Graph Neural Network

Nov 23, 2021

Graph Neural Networks (GNNs) have shown success in learning from graph-structured data containing node/edge feature information, with applications to social networks, recommendation, fraud detection and knowledge graph reasoning. Various strategies have been proposed in the past to improve the expressiveness of GNNs. For example, one straightforward option is to simply increase the parameter size, either by expanding the hidden dimension or by increasing the number of GNN layers. However, wider hidden layers can easily lead to overfitting, and incrementally adding more GNN layers can result in over-smoothing. In this paper, we present a model-agnostic methodology, namely Network In Graph Neural Network (NGNN), that allows arbitrary GNN models to increase their capacity by making the model deeper. However, instead of adding or widening GNN layers, NGNN deepens a GNN model by inserting non-linear feedforward neural network layer(s) within each GNN layer. An analysis of NGNN applied to a GraphSage base GNN on ogbn-products data demonstrates that it keeps the model stable against perturbations of either node features or graph structure. Furthermore, wide-ranging evaluation results on both node classification and link prediction tasks show that NGNN works reliably across diverse GNN architectures. For instance, it improves the test accuracy of GraphSage on ogbn-products by 1.6%, the hits@100 score of SEAL on ogbl-ppa by 7.08% and the hits@20 score of GraphSage+Edge-Attr on ogbl-ppi by 6.22%. At the time of this submission, it had achieved two first places on the OGB link prediction leaderboard.
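The core NGNN idea, adding depth inside a GNN layer rather than stacking more message-passing layers, can be sketched in a few lines. This minimal sketch assumes a simplified GraphSAGE-style layer (self and mean-of-neighbours transforms summed, whereas GraphSAGE itself concatenates) followed by extra per-node feedforward layers with ReLU; the graph and weights are hypothetical, and this is not the paper's implementation.

```python
def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def relu(v):
    return [max(0.0, vi) for vi in v]

def sage_layer(h, adj, W_self, W_neigh):
    """Simplified GraphSAGE-style layer: ReLU(W_self h_v + W_neigh * mean of neighbour states)."""
    out = {}
    for node, neighbours in adj.items():
        dim = len(h[node])
        if neighbours:
            agg = [sum(h[n][d] for n in neighbours) / len(neighbours) for d in range(dim)]
        else:
            agg = [0.0] * dim
        combined = [a + b for a, b in zip(matvec(W_self, h[node]), matvec(W_neigh, agg))]
        out[node] = relu(combined)
    return out

def ngnn_layer(h, adj, W_self, W_neigh, ffn_weights):
    """NGNN-style layer: one round of message passing, then extra non-linear
    feedforward layer(s) applied to each node independently."""
    h = sage_layer(h, adj, W_self, W_neigh)
    for W in ffn_weights:
        h = {node: relu(matvec(W, v)) for node, v in h.items()}
    return h

adj = {0: [1, 2], 1: [0], 2: [0]}
h = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
I = [[1.0, 0.0], [0.0, 1.0]]
out = ngnn_layer(h, adj, I, I, ffn_weights=[[[2.0, 0.0], [0.0, 2.0]]])
print(out[0])  # [3.0, 2.0]
```

Because the inserted layers act per node, they add capacity without widening the receptive field, which is why this kind of deepening does not compound over-smoothing the way extra message-passing layers do.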

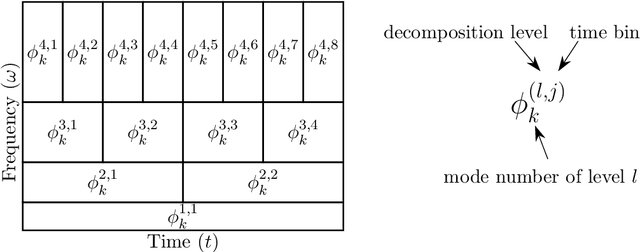

Multi-resolution Dynamic Mode Decomposition for Early Damage Detection in Wind Turbine Gearboxes

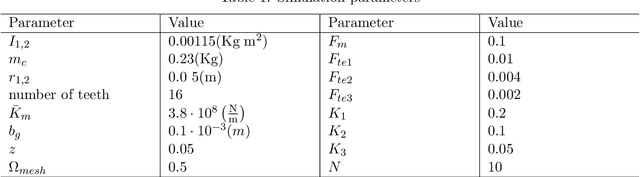

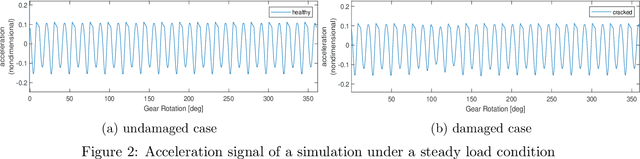

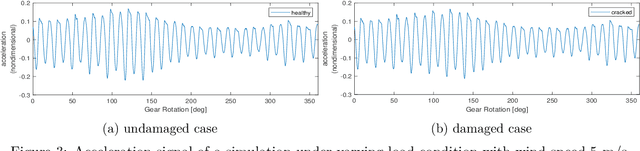

Oct 08, 2021

We introduce an approach for damage detection in gearboxes based on the analysis of sensor data with the multi-resolution dynamic mode decomposition (mrDMD). The application focus is the condition monitoring of wind turbine gearboxes under varying load conditions, in particular irregular and stochastic wind fluctuations. We analyze data stemming from a simulated vibration response of a simple nonlinear gearbox model in a healthy and damaged scenario and under different wind conditions. With mrDMD applied on time-delay snapshots of the sensor data, we can extract components in these vibration signals that highlight features related to damage and enable its identification. A comparison with Fourier analysis and Empirical Mode Decomposition shows the advantages of the proposed mrDMD-based data analysis approach for early damage detection.
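DMD-type methods operate on matrices of snapshots, and for a scalar vibration signal the usual trick is a time-delay (Hankel) embedding: stacking shifted copies of the signal so that each column is a short window of consecutive samples. The sketch below shows only this embedding step and the shifted snapshot pair that DMD fits; the toy signal and number of delays are hypothetical, and the SVD, eigendecomposition and multi-resolution recursion of mrDMD are not shown.

```python
def delay_snapshots(signal, delays):
    """Build the Hankel matrix of time-delay snapshots, returned as a list of rows.

    Column j is [x_j, x_{j+1}, ..., x_{j+delays-1}].
    """
    n_cols = len(signal) - delays + 1
    if n_cols < 2:
        raise ValueError("signal too short for the requested number of delays")
    return [[signal[i + j] for j in range(n_cols)] for i in range(delays)]

def dmd_pair(H):
    """Split snapshots into X (all columns but the last) and X' (shifted one step),
    the pair for which DMD seeks a linear operator A with X' ~ A X."""
    return [row[:-1] for row in H], [row[1:] for row in H]

x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
H = delay_snapshots(x, delays=3)
print(H)  # [[0.0, 1.0, 2.0, 3.0], [1.0, 2.0, 3.0, 4.0], [2.0, 3.0, 4.0, 5.0]]
X, X_next = dmd_pair(H)
```

Stacking delays lets a single sensor channel expose oscillatory modes that a one-row snapshot matrix could not represent, which is why the abstract applies mrDMD to time-delay snapshots rather than to raw samples.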

Misspecified Gaussian Process Bandit Optimization

Nov 09, 2021

We consider the problem of optimizing a black-box function based on noisy bandit feedback. Kernelized bandit algorithms have shown strong empirical and theoretical performance for this problem. However, they rely heavily on the assumption that the model is well specified and can fail without it. Instead, we introduce a *misspecified* kernelized bandit setting where the unknown function can be $\epsilon$-uniformly approximated by a function with a bounded norm in some Reproducing Kernel Hilbert Space (RKHS). We design efficient and practical algorithms whose performance degrades minimally in the presence of model misspecification. Specifically, we present two algorithms based on Gaussian process (GP) methods: an optimistic EC-GP-UCB algorithm that requires knowing the misspecification error, and Phased GP Uncertainty Sampling, an elimination-type algorithm that can adapt to unknown model misspecification. We provide upper bounds on their cumulative regret in terms of $\epsilon$, the time horizon, and the underlying kernel, and we show that our algorithm achieves optimal dependence on $\epsilon$ with no prior knowledge of misspecification. In addition, in a stochastic contextual setting, we show that EC-GP-UCB can be effectively combined with the regret bound balancing strategy and attain similar regret bounds despite not knowing $\epsilon$.
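Optimistic kernelized bandit methods choose the next query by maximizing an upper confidence bound $\mu(x) + \beta\,\sigma(x)$ built from the GP posterior mean and standard deviation. The sketch below shows standard GP-UCB selection over a finite candidate set with an RBF kernel, assuming a hypothetical `extra_width` argument that widens the bound, loosely in the spirit of how EC-GP-UCB enlarges its confidence intervals to account for $\epsilon$; the paper's exact confidence expression, kernel and noise level are not reproduced here.

```python
import math

def rbf(a, b, lengthscale=0.5):
    return math.exp(-((a - b) ** 2) / (2 * lengthscale ** 2))

def cholesky(A):
    """Lower-triangular L with A = L L^T (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(A[i][i] - s) if i == j else (A[i][j] - s) / L[j][j]
    return L

def forward_sub(L, b):
    """Solve L y = b for lower-triangular L."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def gp_posterior(xs, ys, x, noise=0.01):
    """Posterior mean and standard deviation of a zero-mean GP at x."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    v = forward_sub(L, [rbf(a, x) for a in xs])   # L^{-1} k_*
    w = forward_sub(L, ys)                        # L^{-1} y
    mean = sum(vi * wi for vi, wi in zip(v, w))   # k_*^T K^{-1} y
    var = max(rbf(x, x) - sum(vi * vi for vi in v), 0.0)
    return mean, math.sqrt(var)

def ucb_select(xs, ys, candidates, beta=2.0, extra_width=0.0):
    """Return the candidate maximising mu + (beta + extra_width) * sigma."""
    def score(x):
        mu, sigma = gp_posterior(xs, ys, x)
        return mu + (beta + extra_width) * sigma
    return max(candidates, key=score)

print(ucb_select([0.0, 1.0], [0.0, 1.0], [0.0, 0.5, 1.0, 2.0]))  # 2.0
```

Here the unexplored point 2.0 wins because its large posterior uncertainty outweighs its modest predicted value; widening the bound further only strengthens that preference for exploration.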

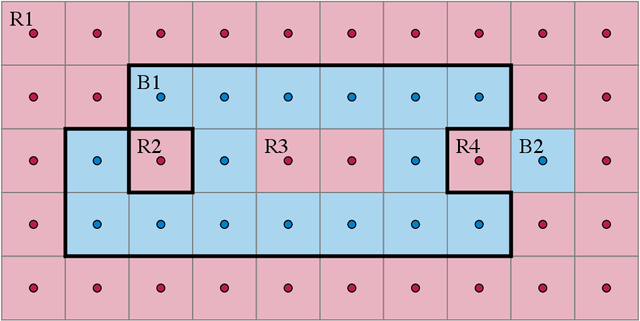

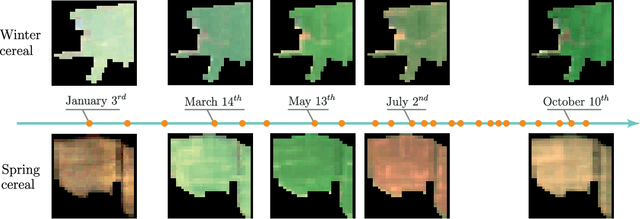

Satellite Image Time Series Classification with Pixel-Set Encoders and Temporal Self-Attention

Nov 18, 2019

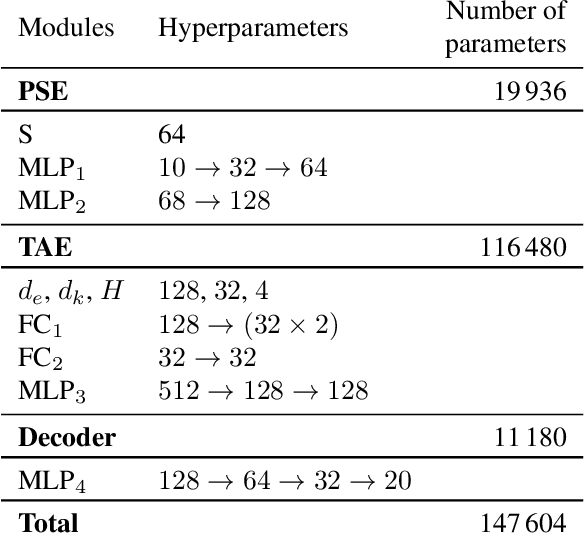

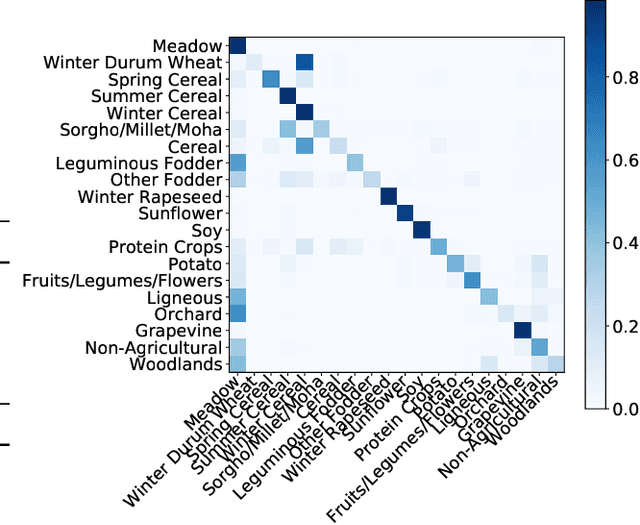

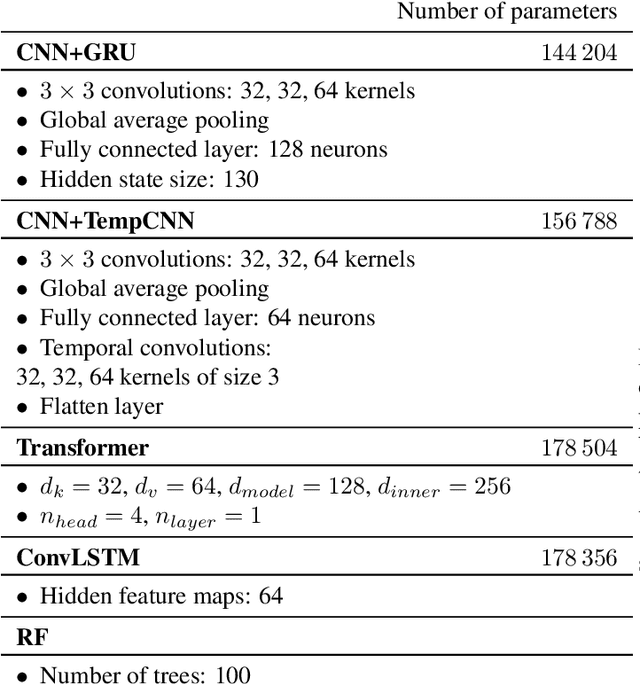

Satellite image time series, bolstered by their growing availability, are at the forefront of an extensive effort by international institutions towards automated Earth monitoring. In particular, large-scale control of agricultural parcels is an issue of major political and economic importance. In this regard, hybrid convolutional-recurrent neural architectures have shown promising results for the automated classification of satellite image time series. We propose an alternative approach in which the convolutional layers are advantageously replaced with encoders operating on unordered sets of pixels, to exploit the typically coarse resolution of publicly available satellite images. We also propose to extract temporal features using a bespoke neural architecture based on self-attention instead of recurrent networks. We demonstrate experimentally that our method not only outperforms previous state-of-the-art approaches in terms of precision, but also significantly decreases processing time and memory requirements. Lastly, we release a large open-access annotated dataset as a benchmark for future work on satellite image time series.
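The defining property of an encoder operating on an unordered set of pixels is permutation invariance: a shared per-pixel transform followed by a symmetric pooling, so shuffling the pixels cannot change the output. This minimal sketch assumes a single hypothetical linear-plus-ReLU transform and mean and standard-deviation pooling; the learned layer sizes and the temporal self-attention module of the actual architecture are not reproduced.

```python
import math

def pixel_transform(pixel, W):
    """Shared per-pixel linear map followed by ReLU (hypothetical weights W)."""
    return [max(0.0, sum(w * p for w, p in zip(row, pixel))) for row in W]

def pixel_set_encode(pixels, W):
    """Encode an unordered set of pixels: shared transform, then mean and std pooling.

    math.fsum is correctly rounded, so the pooled values do not depend on pixel order.
    """
    feats = [pixel_transform(p, W) for p in pixels]
    n, dim = len(feats), len(feats[0])
    means = [math.fsum(f[d] for f in feats) / n for d in range(dim)]
    stds = [math.sqrt(math.fsum((f[d] - means[d]) ** 2 for f in feats) / n)
            for d in range(dim)]
    return means + stds

W = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]           # hypothetical weights, 3 bands -> 2 features
parcel = [[0.2, 0.4, 0.1], [0.5, 0.3, 0.3], [0.1, 0.9, 0.0]]
enc = pixel_set_encode(parcel, W)
print(enc == pixel_set_encode(list(reversed(parcel)), W))  # True
```

Because the output size is fixed regardless of how many pixels are sampled, parcels of different areas map to vectors of the same length, which a temporal model can then process date by date.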

DEEPAGÉ: Answering Questions in Portuguese about the Brazilian Environment

Oct 19, 2021

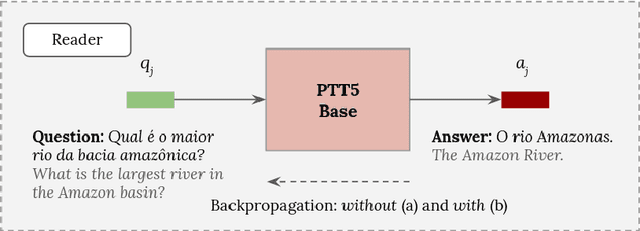

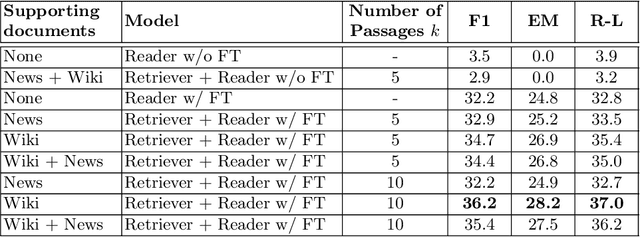

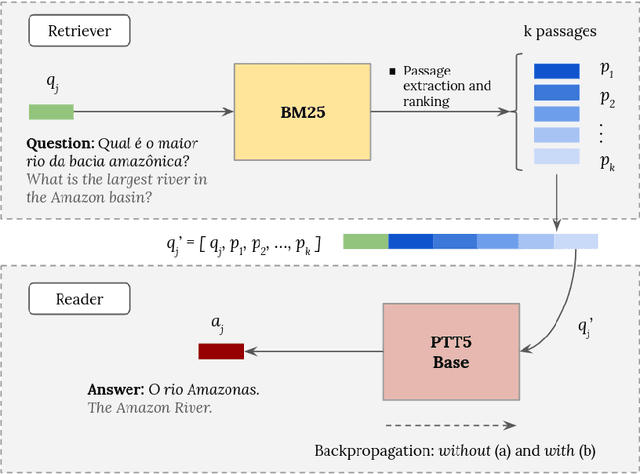

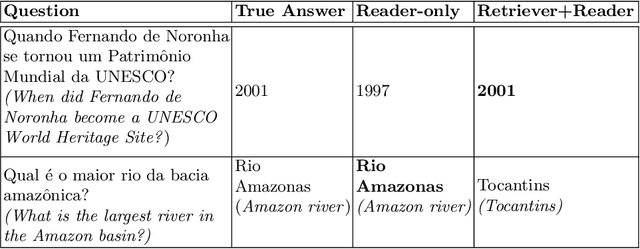

The challenge of climate change and biome conservation is one of the most pressing issues of our time, particularly in Brazil, where key environmental reserves are located. Given the availability of large textual databases on ecological themes, it is natural to resort to question answering (QA) systems to increase social awareness and understanding of these topics. In this work, we introduce multiple QA systems that combine, in novel ways, the BM25 algorithm, a sparse retrieval technique, with PTT5, a pre-trained state-of-the-art language model. Our QA systems focus on the Portuguese language, thus offering resources not found elsewhere in the literature. As training data, we collected questions from open-domain datasets, as well as content from the Portuguese Wikipedia and news from the press. We thus contribute innovative architectures and novel applications, attaining an F1-score of 36.2 with our best model.
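BM25, the sparse retrieval component named above, scores a document by summing, over query terms, an IDF weight times a saturated and length-normalized term frequency. The sketch below implements one common variant (Lucene-style non-negative IDF, with the customary defaults $k_1 = 1.5$, $b = 0.75$), assuming lowercase whitespace tokenization and a hypothetical three-sentence Portuguese corpus; how the paper combines BM25 with PTT5 is not shown.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document against the query with Okapi BM25 (Lucene-style IDF)."""
    tokenized = [d.lower().split() for d in docs]
    N = len(tokenized)
    avgdl = sum(len(d) for d in tokenized) / N
    df = Counter(term for d in tokenized for term in set(d))  # document frequencies
    scores = []
    for doc in tokenized:
        tf = Counter(doc)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((N - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            norm = tf[term] + k1 * (1 - b + b * len(doc) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

docs = [
    "a amazônia é a maior floresta tropical",
    "o cerrado é um bioma brasileiro",
    "o pantanal é uma planície alagável",
]
scores = bm25_scores("floresta amazônia", docs)
print(max(range(len(docs)), key=scores.__getitem__))  # 0
```

The $k_1$ term caps how much repeated occurrences of a word can add, and $b$ penalizes long documents, which keeps lengthy Wikipedia or news passages from dominating the ranking purely by size.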

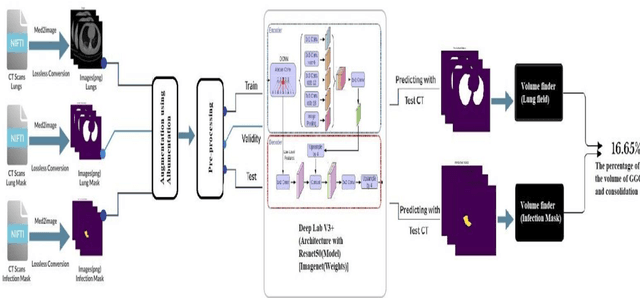

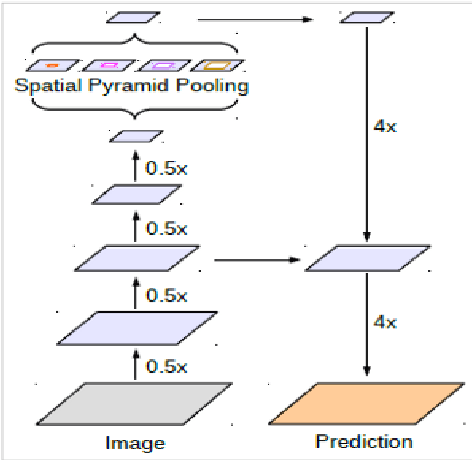

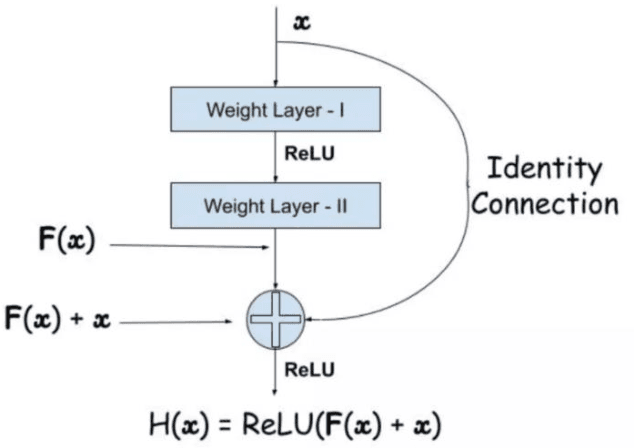

AI-Powered Semantic Segmentation and Fluid Volume Calculation of Lung CT images in Covid-19 Patients

Oct 29, 2021

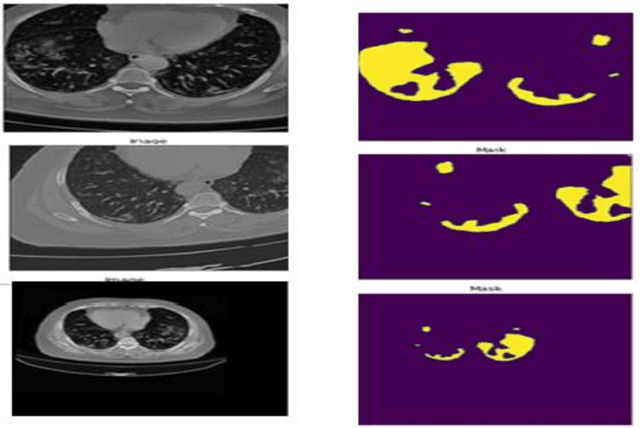

COVID-19 is a deadly, fast-spreading disease. People with compromised immune systems are susceptible to many health conditions. A particularly significant one is pneumonia, which has been found to be the cause of death in the majority of patients. The main purpose of this study is to measure the volume of ground-glass opacity (GGO) and consolidation in COVID-19 patients so that physicians can prioritize them. We used transfer learning with current libraries and techniques to segment lung CT scans, which reduces training time and increases the accuracy of the AI model. The system is trained with the DeepLabV3+ network architecture and a ResNet50 backbone initialized with ImageNet weights. We used augmentation techniques such as Gaussian noise, horizontal shift and color variation. Intersection over Union (IoU) is used as the performance metric. The IoU is 99.78% for lung masks and 89.01% for infected-region masks. Our work measures the volume of the infected region by calculating the volumes of the infected and lung mask regions of each patient.
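Both the evaluation metric and the volume estimate reduce to counting voxels: IoU divides the overlap of a predicted and a reference mask by their union, and a region's volume is its voxel count times the physical volume of one voxel. This minimal sketch assumes flattened binary masks, a hypothetical voxel spacing in millimetres, and the convention that two empty masks have IoU 1.0; the DeepLabV3+ segmentation itself is not shown.

```python
def iou(pred, truth):
    """Intersection over Union of two binary masks given as flat sequences of 0/1."""
    inter = sum(1 for p, t in zip(pred, truth) if p and t)
    union = sum(1 for p, t in zip(pred, truth) if p or t)
    return inter / union if union else 1.0  # both masks empty: treated as perfect agreement

def mask_volume_ml(mask, voxel_spacing_mm):
    """Volume of a binary mask in millilitres (1 ml = 1000 mm^3)."""
    dx, dy, dz = voxel_spacing_mm
    return sum(1 for v in mask if v) * dx * dy * dz / 1000.0

lung = [1, 1, 1, 1, 1, 1, 1, 1, 0, 0]
infected_truth = [0, 0, 1, 1, 0, 0, 0, 0, 0, 0]
infected_pred = [0, 0, 1, 1, 1, 0, 0, 0, 0, 0]
spacing = (0.7, 0.7, 5.0)  # hypothetical CT voxel spacing in mm

print(round(iou(infected_pred, infected_truth), 3))  # 0.667
fraction = mask_volume_ml(infected_truth, spacing) / mask_volume_ml(lung, spacing)
print(round(fraction, 3))  # 0.25
```

Reporting the infected volume as a fraction of lung volume makes patients with different lung sizes and scan resolutions directly comparable, which suits the prioritization goal described above.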
