Towards Fast Computation of Certified Robustness for ReLU Networks

Paper and Code

Oct 02, 2018

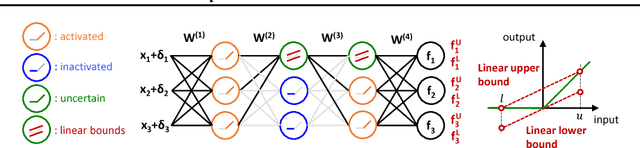

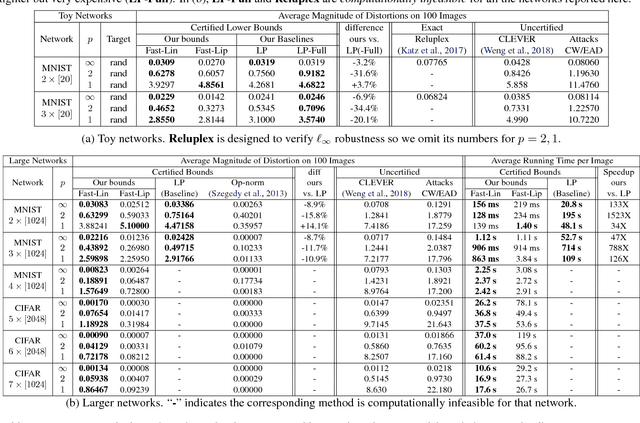

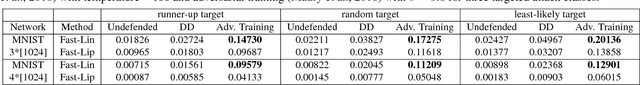

Verifying the robustness property of a general Rectified Linear Unit (ReLU) network is an NP-complete problem [Katz, Barrett, Dill, Julian and Kochenderfer CAV17]. Although finding the exact minimum adversarial distortion is hard, giving a certified lower bound of the minimum distortion is possible. Current available methods of computing such a bound are either time-consuming or delivering low quality bounds that are too loose to be useful. In this paper, we exploit the special structure of ReLU networks and provide two computationally efficient algorithms Fast-Lin and Fast-Lip that are able to certify non-trivial lower bounds of minimum distortions, by bounding the ReLU units with appropriate linear functions Fast-Lin, or by bounding the local Lipschitz constant Fast-Lip. Experiments show that (1) our proposed methods deliver bounds close to (the gap is 2-3X) exact minimum distortion found by Reluplex in small MNIST networks while our algorithms are more than 10,000 times faster; (2) our methods deliver similar quality of bounds (the gap is within 35% and usually around 10%; sometimes our bounds are even better) for larger networks compared to the methods based on solving linear programming problems but our algorithms are 33-14,000 times faster; (3) our method is capable of solving large MNIST and CIFAR networks up to 7 layers with more than 10,000 neurons within tens of seconds on a single CPU core. In addition, we show that, in fact, there is no polynomial time algorithm that can approximately find the minimum $\ell_1$ adversarial distortion of a ReLU network with a $0.99\ln n$ approximation ratio unless $\mathsf{NP}$=$\mathsf{P}$, where $n$ is the number of neurons in the network.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge