Zhenyuan Ma

Evaluating Temporal Plasticity in Foundation Time Series Models for Incremental Fine-tuning

Apr 20, 2025

Abstract:Time series foundation models excel at diverse time series forecasting tasks, but their capacity for continuous improvement through incremental learning remains unexplored. We present the first comprehensive study investigating these models' temporal plasticity - their ability to progressively enhance performance through continual learning while maintaining existing capabilities. Through experiments on real-world datasets exhibiting distribution shifts, we evaluate both conventional deep learning models and foundation models using a novel continual learning framework. Our findings reveal that while traditional models struggle with performance deterioration during incremental fine-tuning, foundation models like Time-MoE and Chronos demonstrate sustained improvement in predictive accuracy. This suggests that optimizing foundation model fine-tuning strategies may be more valuable than developing domain-specific small models. Our research introduces new evaluation methodologies and insights for developing foundation time series models with robust continuous learning capabilities.

On Learning and Learned Representation with Dynamic Routing in Capsule Networks

Oct 08, 2018

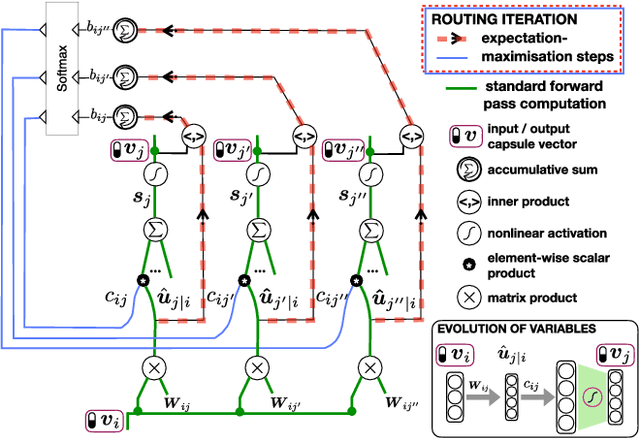

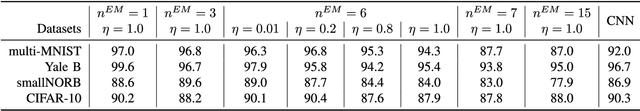

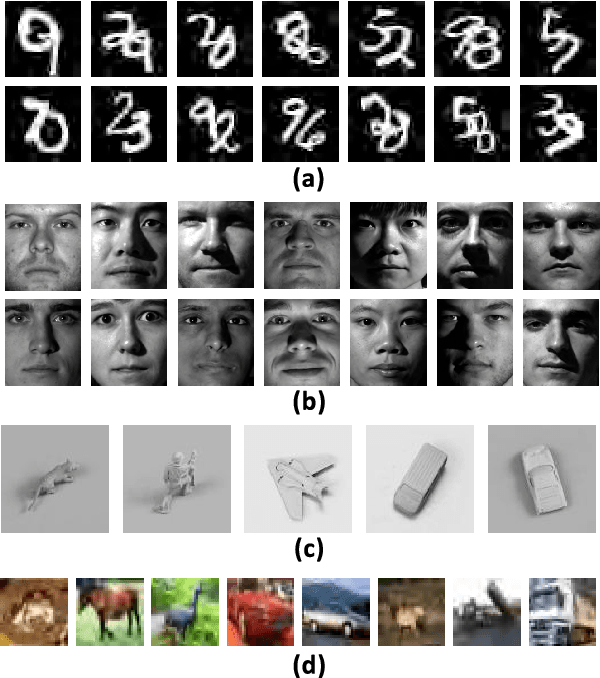

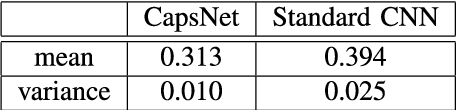

Abstract:Capsule Networks (CapsNet) are recently proposed multi-stage computational models specialized for entity representation and discovery in image data. CapsNet employs iterative routing that shapes how the information cascades through different levels of interpretations. In this work, we investigate i) how the routing affects the CapsNet model fitting, ii) how the representation by capsules helps discover global structures in data distribution and iii) how learned data representation adapts and generalizes to new tasks. Our investigation shows: i) routing operation determines the certainty with which one layer of capsules pass information to the layer above, and the appropriate level of certainty is related to the model fitness, ii) in a designed experiment using data with a known 2D structure, capsule representations allow more meaningful 2D manifold embedding than neurons in a standard CNN do and iii) compared to neurons of standard CNN, capsules of successive layers are less coupled and more adaptive to new data distribution.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge