Yufei Ruan

Online Linear Programming with Replenishment

Jan 21, 2026

Abstract: We study an online linear programming (OLP) model in which inventory is not provided upfront but instead arrives gradually through an exogenous stochastic replenishment process. This replenishment-based formulation captures operational settings, such as e-commerce fulfillment, perishable supply chains, and renewable-powered systems, where resources are accumulated gradually and initial inventories are small or zero. The introduction of dispersed, uncertain replenishment fundamentally alters the structure of classical OLPs, creating persistent stockout risk and eliminating advance knowledge of the total budget. We develop new algorithms and regret analyses for three major distributional regimes studied in the OLP literature: bounded distributions, finite-support distributions, and continuous-support distributions with a non-degeneracy condition. For bounded distributions, we design an algorithm that achieves $\widetilde{\mathcal{O}}(\sqrt{T})$ regret. For finite-support distributions with a non-degenerate induced LP, we obtain $\mathcal{O}(\log T)$ regret, and we establish an $\Omega(\sqrt{T})$ lower bound for degenerate instances, demonstrating a sharp separation from the classical setting where $\mathcal{O}(1)$ regret is achievable. For continuous-support, non-degenerate distributions, we develop a two-stage accumulate-then-convert algorithm that achieves $\mathcal{O}(\log^2 T)$ regret, comparable to the $\mathcal{O}(\log T)$ regret in classical OLPs. Together, these results provide a near-complete characterization of the optimal regret achievable in OLP with replenishment. Finally, we empirically evaluate our algorithms and demonstrate their advantages over natural adaptations of classical OLP methods in the replenishment setting.

Linear Bandits with Limited Adaptivity and Learning Distributional Optimal Design

Jul 16, 2020

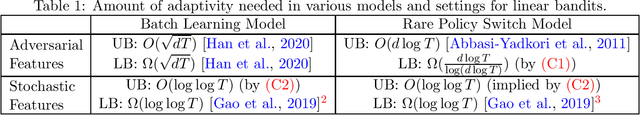

Abstract: Motivated by practical needs such as large-scale learning, we study the impact of adaptivity constraints on linear contextual bandits, a central problem in online active learning. We consider two popular limited adaptivity models in the literature: batch learning and rare policy switches. We show that, when the context vectors are adversarially chosen in $d$-dimensional linear contextual bandits, the learner needs $O(d \log d \log T)$ policy switches to achieve the minimax-optimal regret, and this is optimal up to $\mathrm{poly}(\log d, \log \log T)$ factors; for stochastic context vectors, even in the more restricted batch learning model, only $O(\log \log T)$ batches are needed to achieve the optimal regret. Together with the known results in the literature, our results present a complete picture of the adaptivity constraints in linear contextual bandits. Along the way, we propose the distributional optimal design, a natural extension of the optimal experiment design, and provide a learning algorithm for the problem that is both statistically and computationally efficient, which may be of independent interest.
